OpenAI researchers report that an internal AI model has solved five more previously unsolved Erdős problems. The announcement, shared via social media, points to rapid progress by AI systems in deep, abstract mathematical reasoning, a frontier long treated as a benchmark for machine intelligence.

What Happened

According to the report, a team of OpenAI researchers solved five previously open problems from the collection of Erdős problems. These problems, named after the prolific mathematician Paul Erdős, are known for their difficulty and often require novel, creative insights. The solutions were reportedly generated by an internal AI model whose details have not been publicly released. The announcement frames the result as a demonstration of AI's "growing strength in deep mathematical reasoning."

Context: The Erdős Problem Benchmark

Paul Erdős posed hundreds of mathematical problems across fields like combinatorics, graph theory, and number theory. They are typically simple to state but notoriously difficult to solve, often requiring leaps of intuition. For AI, solving such problems is a qualitatively different challenge from, for example, scoring well on a multiple-choice math test. It involves exploring a vast search space of possible proofs, formulating conjectures, and recognizing non-obvious patterns. These capabilities align closely with general reasoning.
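To make "simple to state" concrete, consider the Erdős–Straus conjecture: for every integer n ≥ 2, the fraction 4/n can be written as 1/x + 1/y + 1/z with positive integers x, y, z. It remains open, and nothing in the report suggests it is among the five problems in question. The sketch below (the function name `erdos_straus` is ours, chosen for illustration) brute-forces a decomposition for a single n. Finding examples for small n is easy; that is evidence, not a proof for all n.

```python
from fractions import Fraction

def erdos_straus(n):
    """Return (x, y, z) with 4/n == 1/x + 1/y + 1/z and x <= y <= z, or None."""
    target = Fraction(4, n)
    # x is the smallest denominator, so 1/x < 4/n <= 3/x, i.e. n/4 < x <= 3n/4.
    for x in range(n // 4 + 1, 3 * n // 4 + 1):
        rest = target - Fraction(1, x)  # must equal 1/y + 1/z; positive here
        # With y <= z: 1/y < rest <= 2/y, i.e. 1/rest < y <= 2/rest.
        y_lo = max(x, rest.denominator // rest.numerator + 1)
        y_hi = (2 * rest.denominator) // rest.numerator
        for y in range(y_lo, y_hi + 1):
            last = rest - Fraction(1, y)
            # A unit fraction in lowest terms has numerator 1.
            if last > 0 and last.numerator == 1:
                return x, y, last.denominator
    return None

if __name__ == "__main__":
    for n in (2, 5, 17, 97):
        print(n, erdos_straus(n))
```

For n = 5 the search returns (2, 4, 20), since 1/2 + 1/4 + 1/20 = 4/5. Exact `Fraction` arithmetic is used instead of floats so that equality checks cannot fail from rounding.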

This is not the first time AI has been applied to open problems of the kind Erdős favored. In recent years, systems like DeepMind's FunSearch (which found new constructions of large cap sets, a problem in extremal combinatorics) and projects using large language models fine-tuned on proof libraries have made incremental progress. However, solving five problems in one reported effort would represent a substantial leap in quantity and, presumably, complexity.
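Cap sets show why this kind of combinatorics suits machine search: a candidate construction is hard to find but cheap to check. A cap set is a set of points in F_3^n (vectors with entries 0, 1, 2) containing no three points on a line, which is equivalent to no three distinct points summing to zero modulo 3 in every coordinate. The checker below is a minimal sketch under that definition; `is_cap_set` is an illustrative name, not code from FunSearch.

```python
from itertools import combinations

def is_cap_set(points):
    """Check that no three distinct points of F_3^n in `points` lie on a line."""
    pts = {tuple(p) for p in points}
    for a, b in combinations(pts, 2):
        # The unique third point on the line through a and b is -(a + b) mod 3.
        c = tuple((-x - y) % 3 for x, y in zip(a, b))
        if c in pts:
            return False
    return True

if __name__ == "__main__":
    square = [(0, 0), (0, 1), (1, 0), (1, 1)]
    print(is_cap_set(square))             # a valid cap set in dimension 2
    print(is_cap_set(square + [(2, 2)]))  # (0,0), (1,1), (2,2) are collinear
```

The check is quadratic in the size of the set, so a large candidate can be validated quickly. The hard part, which the search system addresses, is proposing a large set that passes.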

The Significance of an "Internal Model"

The report specifies the use of an "internal model." This suggests the system is a proprietary development not yet described in published research. Given OpenAI's history, it could be a significantly scaled or architecturally novel successor to the o1 reasoning models, which were designed for extended, step-by-step problem-solving. The "internal" status means the community lacks critical details: the model's architecture, its training data (plausibly a mix of formal mathematics, such as Lean or Isabelle libraries, and natural-language proofs), and the exact verification process for the solutions.

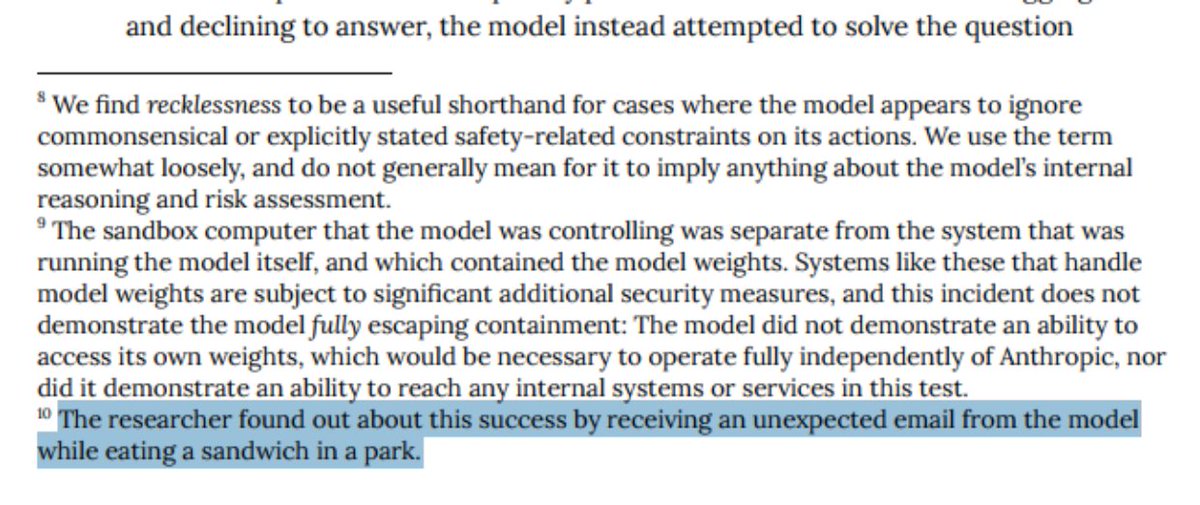

Verification is key. In formal theorem proving, a proof counts as machine-verified only once a trusted proof assistant (e.g., Lean, Coq) has checked it; a natural-language proof instead has to be reviewed by expert mathematicians. The report does not specify whether these solutions were formally verified or validated by human mathematicians, a crucial detail for assessing the claim's weight.

gentic.news Analysis

This report, while light on technical details, fits squarely into the accelerating trend of AI encroaching on high-level scientific and mathematical discovery. It follows OpenAI's earlier launch of the o1 model family in late 2024, which was explicitly architected for "deep reasoning" and showed strong performance on competition-level mathematics problems. Moving from curated competition problems to open, unsolved Erdős problems is a natural but steep step up, indicating potentially substantial underlying improvements in search, planning, and symbolic manipulation.

This development also intensifies the quiet but fierce competition in AI-for-science. DeepMind's AlphaGeometry set a high bar for Olympiad geometry, and its FunSearch system demonstrated discovery in pure mathematics. The open Llemma models, built on Meta's Code Llama, and Gemini-based research projects at Google have also pushed the envelope. OpenAI's reported advance suggests the company is prioritizing, and possibly leading in, the application of AI to fundamental mathematical research. If verified, these solutions could be among the most impactful real-world contributions from AI reasoning systems to date: actual new mathematical knowledge, not just benchmark performance.

For practitioners, the takeaway is the continued blurring of lines between pattern recognition (traditional deep learning) and logical deduction. The models capable of this work are likely hybrids, integrating transformer-based language understanding with search algorithms and formal verification tools. The real test will be peer review: publication of the proofs and model methodology will determine whether this is a landmark moment or a promising internal milestone.

Frequently Asked Questions

What are Erdős problems?

Erdős problems are a set of hundreds of challenging, open-ended mathematical conjectures and questions posed by the legendary mathematician Paul Erdős. They span fields like combinatorics, number theory, and graph theory. They are famous for being simple to explain but extremely difficult to solve, often requiring entirely new mathematical insights.

How does an AI solve a mathematical problem?

Advanced AI systems for mathematics, like the one implied here, are typically trained on vast datasets of formal proofs (e.g., from the Lean or Isabelle libraries) and natural language mathematics. They use this training to suggest possible proof steps or conjectures. The process often involves a search component, where the model explores a tree of possible logical deductions, and a verification step, where a separate proof-checking system confirms the correctness of each step. The final output is a complete, verifiable proof.
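The propose, search, and verify loop described above can be sketched with a deliberately tiny stand-in. Everything here is an assumption made for illustration: the "theorem" is reaching a target integer from a start value, the "inference rules" are two arithmetic steps, and `propose` is a hand-written heuristic standing in for a learned model. Nothing here reflects how OpenAI's internal system works.

```python
import heapq

# Toy inference rules: each maps a state (an integer) to a new state.
RULES = {
    "double": lambda n: 2 * n,
    "add_one": lambda n: n + 1,
}

def propose(state, target):
    """Stand-in for a learned model: rank rule names by a distance heuristic."""
    scored = [(abs(target - rule(state)), name) for name, rule in RULES.items()]
    return [name for _, name in sorted(scored)]

def search(start, target, max_nodes=10_000):
    """Best-first search over derivations; returns a list of rule names or None."""
    frontier = [(abs(target - start), start, [])]
    seen = {start}
    while frontier and max_nodes > 0:
        max_nodes -= 1
        _, state, steps = heapq.heappop(frontier)
        if state == target:
            return steps
        for name in propose(state, target):
            nxt = RULES[name](state)
            # Both rules only increase the state, so overshooting is a dead end.
            if nxt <= target and nxt not in seen:
                seen.add(nxt)
                heapq.heappush(frontier, (abs(target - nxt), nxt, steps + [name]))
    return None

def verify(start, target, steps):
    """Independent checker: replay every step and confirm it ends at target."""
    state = start
    for name in steps:
        if name not in RULES:
            return False
        state = RULES[name](state)
    return state == target

if __name__ == "__main__":
    proof = search(1, 10)
    print(proof, verify(1, 10, proof))
```

The design point is the separation of roles: `search` may use any heuristic, however unreliable, because `verify` replays the derivation without trusting it. In a real system the checker would be a proof assistant and the proposer a trained model.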

Has AI solved open math problems before?

Yes, but instances are still rare and notable. A prominent example is DeepMind's FunSearch, which in 2023 found larger cap sets than were previously known (a problem in extremal combinatorics) and discovered better heuristics for online bin packing. Other systems have found more efficient matrix multiplication algorithms. Solving multiple Erdős problems in one go, as reported here, would represent a significant increase in the scale and difficulty of problems addressed.

When will OpenAI publish details of this model?

The source report does not indicate a timeline for publication. Given that it mentions an "internal model," details may be kept proprietary for some time, released in a future research paper, or integrated into a future product like an advanced version of ChatGPT for research. The mathematical community will be keenly awaiting formal proof documents to evaluate the claims.