Google DeepMind has released the technical report for MedGemma 1.5, providing detailed specifications and performance benchmarks for its open-source, multimodal medical AI model. The report, shared via social media by researchers, offers the community its first comprehensive look under the hood of this specialized model designed for medical imaging and text analysis.

What's in the Technical Report?

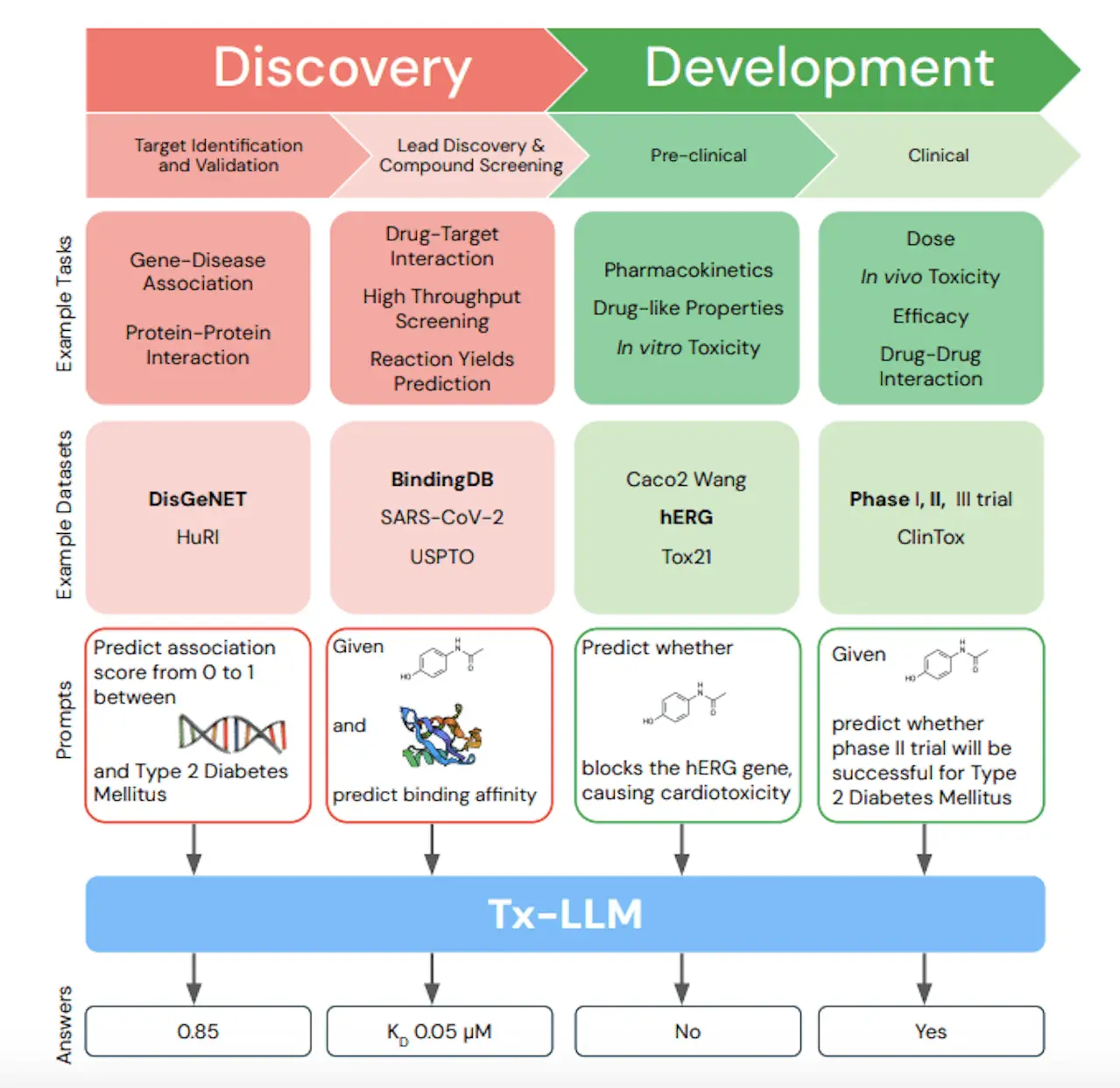

The MedGemma 1.5 technical report details the model's architecture, training methodology, and evaluation across multiple medical benchmarks. As an open model built on Google's Gemma 3 architecture, MedGemma 1.5 continues Google's effort to make specialized medical AI more accessible to researchers and developers.

Key aspects covered in the report include:

- Model Architecture: Based on Gemma 3, with modifications for medical multimodal tasks

- Training Data: Combination of medical imaging datasets and text corpora

- Multimodal Capabilities: Ability to process both medical images (X-rays, CT scans, etc.) and associated text reports

- Evaluation Benchmarks: Performance on medical question answering, image classification, and report generation tasks
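Medical question-answering results are usually reported as benchmark scores, and for multiple-choice QA a common headline metric is accuracy: the fraction of questions where the model picks the correct option. The Python sketch below shows that calculation. The `MCQItem` type, the `accuracy` helper, and the toy predictions are hypothetical illustrations, not code or data from the MedGemma report, which may use other metrics for classification and report generation.

```python
from dataclasses import dataclass


@dataclass
class MCQItem:
    """One multiple-choice question (hypothetical format): gold and predicted option letters."""
    question_id: str
    gold: str
    predicted: str


def accuracy(items: list[MCQItem]) -> float:
    """Fraction of items whose predicted letter matches the gold letter (case-insensitive)."""
    if not items:
        raise ValueError("need at least one item")
    correct = sum(
        1 for it in items if it.predicted.strip().upper() == it.gold.strip().upper()
    )
    return correct / len(items)


# Toy predictions for illustration only; these are not results from the report.
items = [
    MCQItem("q1", "A", "A"),
    MCQItem("q2", "C", "B"),
    MCQItem("q3", "D", "d"),
    MCQItem("q4", "B", "B"),
]
print(f"accuracy = {accuracy(items):.2f}")  # 3 of 4 correct -> accuracy = 0.75
```

Real benchmark harnesses also have to extract the answer letter from free-form model output, which this sketch skips by assuming the prediction is already a single letter.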

Technical Details and Performance

The social media announcement did not include specific benchmark numbers, so readers will need the full report for its comparisons against other medical AI models. MedGemma 1.5 follows the original MedGemma release and continues Google's investment in open medical AI.

The model is particularly significant because:

- Open Source: Unlike many proprietary medical AI systems, MedGemma 1.5 is openly available

- Multimodal: Handles both visual and textual medical data in a unified framework

- Specialized: Fine-tuned specifically for medical domains rather than general-purpose AI

Context and Development Timeline

This release continues Google's pattern of medical AI development that began with Med-PaLM and evolved through Med-PaLM 2. The MedGemma series represents a different approach—smaller, open models rather than massive proprietary ones. The technical report provides the documentation needed for researchers to properly evaluate, compare, and build upon this work.

gentic.news Analysis

This technical report release represents a strategic move in the increasingly competitive medical AI landscape. Google DeepMind is positioning MedGemma as the open alternative to proprietary systems like those from Anthropic (Claude Medical) and proprietary hospital implementations. By releasing detailed technical documentation, Google enables broader academic scrutiny and third-party validation—a crucial factor for medical AI adoption where trust and transparency are paramount.

The timing is significant. With regulatory frameworks for medical AI evolving rapidly in 2026, having well-documented, open models helps establish benchmarks and best practices. This aligns with trends we've covered in medical AI validation and the push for reproducible research in healthcare applications.

From a technical perspective, the MedGemma approach of adapting smaller open base models from the Gemma family, rather than training massive medical models from scratch, reflects the industry's maturation. The focus has shifted from pure scale to efficient specialization, similar to trends we've seen in other domain-specific AI applications. This efficiency matters in practice: smaller models are easier to deploy in resource-constrained clinical settings, where infrastructure limitations often hinder AI adoption.
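The deployability argument comes down to simple arithmetic: the memory needed just to hold a model's weights is roughly its parameter count times the bytes stored per parameter. A minimal Python sketch, assuming illustrative sizes of 4B, 27B, and 540B parameters (chosen for comparison, not taken from the report) and counting weights only, so activations, KV cache, and runtime overhead are ignored:

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (1 GB = 1e9 bytes).

    Ignores activations, KV cache, and framework overhead, so real usage is higher.
    """
    return num_params * bits_per_param / 8 / 1e9


# Illustrative parameter counts: assumptions for this sketch, not figures from the report.
models = {
    "4B-parameter model": 4e9,
    "27B-parameter model": 27e9,
    "540B-parameter model": 540e9,
}

for name, n in models.items():
    for bits in (16, 8, 4):  # common precisions: bf16/fp16, int8, 4-bit quantization
        print(f"{name} @ {bits}-bit: {weight_memory_gb(n, bits):,.1f} GB")
```

At 16-bit precision the 4B case needs about 8 GB for weights, within reach of a single workstation GPU, while the 540B case needs over a terabyte and therefore a multi-accelerator cluster.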

Frequently Asked Questions

What is MedGemma 1.5?

MedGemma 1.5 is Google DeepMind's open-source, multimodal AI model specifically designed for medical applications. It can process both medical images and text, performing tasks like medical question answering, image classification, and report generation.

How does MedGemma differ from general AI models?

Unlike general AI models, MedGemma is specifically fine-tuned on medical data and optimized for healthcare applications. This specialization typically yields better performance on medical tasks while requiring fewer computational resources than running massive general-purpose models for medical purposes.

Is MedGemma 1.5 available for commercial use?

As an open model based on Google's Gemma architecture, MedGemma 1.5 is likely released under terms similar to those of other Gemma variants, but developers should confirm the license published alongside the model weights rather than rely on the technical report. Medical AI applications also carry regulatory considerations beyond standard software licensing.

How does MedGemma compare to other medical AI systems?

The technical report should provide direct comparisons to other medical AI models. MedGemma's distinguishing features are its open-source nature, multimodal capabilities, and relatively compact size compared to proprietary medical AI systems from companies like Anthropic or specialized healthcare AI vendors.