Google researchers have published a paper detailing a new AI system, AutoWrite, designed to autonomously generate complete research papers. The system takes a high-level research idea or topic as input and produces a structured manuscript containing an abstract, introduction, methodology, results, discussion, and references, along with generated figures and tables.

What the System Does

AutoWrite is not a simple text completion tool. It is a multi-agent framework where different specialized AI modules handle distinct parts of the paper-writing pipeline:

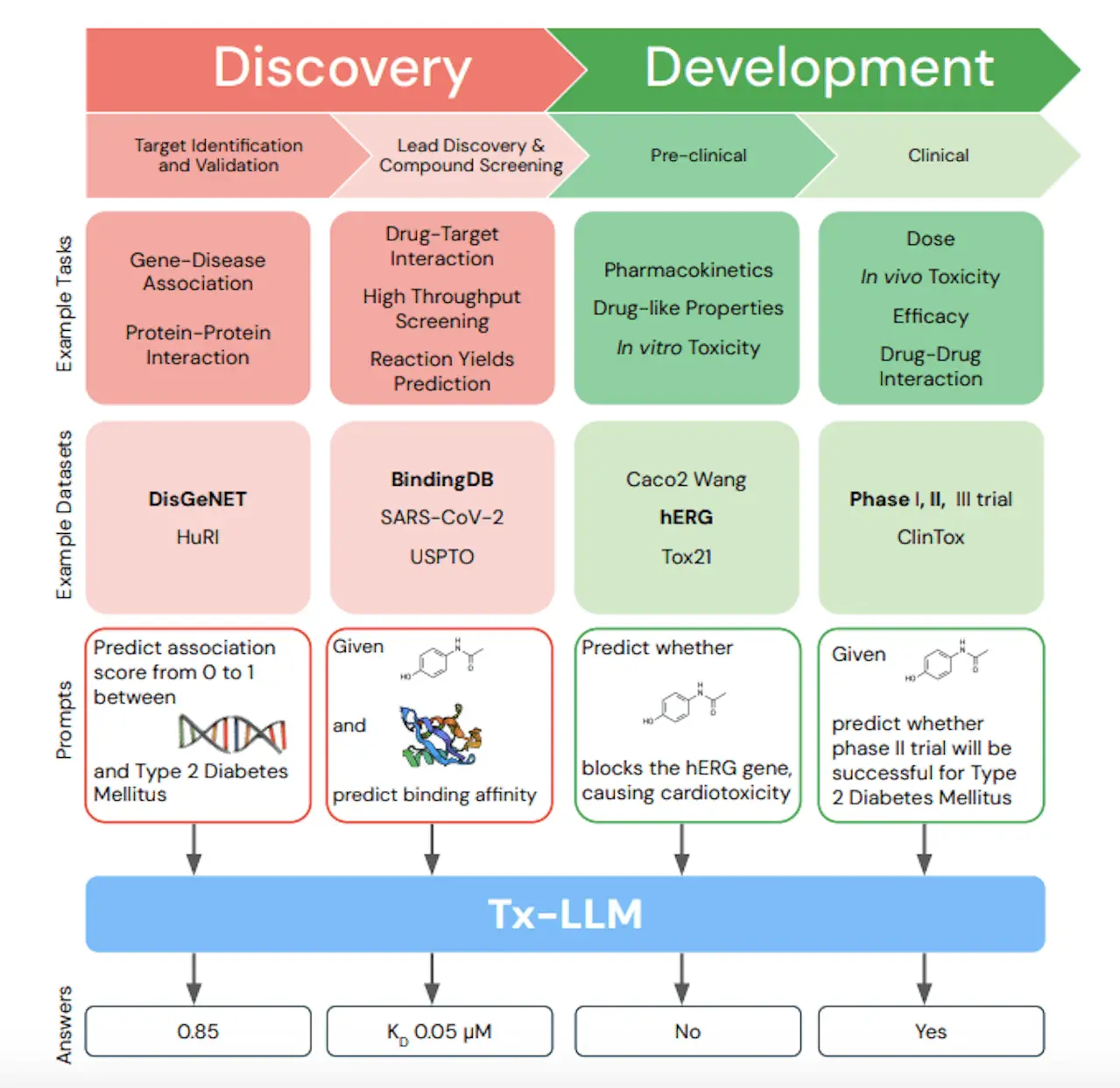

- Research Planner: Analyzes the input topic and outlines a logical paper structure, defining key sections and the narrative flow.

- Literature Review Agent: Searches and synthesizes relevant academic literature from connected databases (e.g., arXiv, PubMed) to build a background section and inform the methodology.

- Methodology Generator: Proposes a plausible research methodology or experimental setup based on the topic and current literature.

- Data & Figure Synthesizer: Generates simulated data, charts, and diagrams to visually represent "findings." This module can create graphs, plots, and schematics that adhere to scientific conventions.

- Results & Discussion Writer: Interprets the synthesized data to write a results section, then crafts a discussion contextualizing the "findings" within the broader field.

- Citation Manager: Automatically inserts and formats citations throughout the text, building a corresponding references section.

The output is a formatted LaTeX or PDF document that structurally resembles a genuine academic paper.
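The division of labor among the modules above can be pictured as a chain of agents passing a shared draft along. The following is a minimal sketch, not the paper's implementation: the `Draft` class, `make_stub_agent`, `run_pipeline`, and the section names are all hypothetical, each agent is a stub that writes placeholder text, and the fixed sequential order is a simplifying assumption (the real system is described as being coordinated by an LLM orchestrator).

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Draft:
    """Shared state handed from one agent to the next (hypothetical)."""
    topic: str
    sections: Dict[str, str] = field(default_factory=dict)
    log: List[str] = field(default_factory=list)


# An agent is any function that takes a draft and returns an updated draft.
Agent = Callable[[Draft], Draft]


def make_stub_agent(name: str, section: str) -> Agent:
    """Build a placeholder agent that fills one section with dummy text."""
    def agent(draft: Draft) -> Draft:
        draft.sections[section] = f"[{name} output for '{draft.topic}']"
        draft.log.append(name)
        return draft
    return agent


# Module names follow the article; the ordering is an assumption.
PIPELINE: List[Agent] = [
    make_stub_agent("Research Planner", "outline"),
    make_stub_agent("Literature Review Agent", "background"),
    make_stub_agent("Methodology Generator", "methodology"),
    make_stub_agent("Data & Figure Synthesizer", "figures"),
    make_stub_agent("Results & Discussion Writer", "results_discussion"),
    make_stub_agent("Citation Manager", "references"),
]


def run_pipeline(topic: str) -> Draft:
    """Run every agent in order over a fresh draft."""
    draft = Draft(topic=topic)
    for agent in PIPELINE:
        draft = agent(draft)
    return draft


if __name__ == "__main__":
    result = run_pipeline("example topic")
    for section, text in result.sections.items():
        print(f"{section}: {text}")
```

Keeping every agent behind the same `Draft -> Draft` signature means a stub can later be swapped for a model-backed implementation without touching the loop.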

Technical Architecture & Training

The system leverages a large language model (LLM) as its core orchestrator, likely a variant of Google's Gemini series. This main LLM coordinates the specialized agents, which are themselves fine-tuned models or tools. Key technical components include:

- Retrieval-Augmented Generation (RAG): The literature agent uses dense vector retrieval to fetch and ground its writing in real academic texts.

- Code Execution for Figures: The figure synthesizer often works by generating Python code (e.g., using Matplotlib or Seaborn) to create plots, which is then executed in a sandboxed environment.

- Reinforcement Learning from Human Feedback (RLHF): The models were fine-tuned using feedback from researchers to improve the coherence, plausibility, and academic tone of the output.
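To make the retrieval step more concrete, here is a minimal sketch of dense vector retrieval using cosine similarity. It is an illustration under stated assumptions, not AutoWrite's code: the three-dimensional vectors in `TOY_INDEX` are hand-written stand-ins for real embeddings, and `cosine`, `top_k`, and `build_prompt` are hypothetical helper names. A production system would use a learned embedding model and an approximate nearest-neighbor index.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: Sequence[float],
          index: Dict[str, List[float]],
          k: int = 2) -> List[Tuple[str, float]]:
    """Return the k documents whose vectors are most similar to the query."""
    scored = [(doc_id, cosine(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy "embeddings" (assumption): real ones have hundreds of dimensions.
TOY_INDEX: Dict[str, List[float]] = {
    "paper_a": [1.0, 0.0, 0.0],
    "paper_b": [0.0, 1.0, 0.0],
    "paper_c": [0.7, 0.7, 0.0],
}


def build_prompt(question: str, hits: List[Tuple[str, float]]) -> str:
    """Ground the generation prompt in the retrieved document IDs."""
    sources = ", ".join(doc_id for doc_id, _ in hits)
    return f"Using only these sources ({sources}), answer: {question}"


if __name__ == "__main__":
    hits = top_k([1.0, 0.1, 0.0], TOY_INDEX, k=2)
    print(build_prompt("What is known about the topic?", hits))
```

The retrieved documents are passed into the prompt so that the generated text can be tied back to real sources rather than to the model's memory alone.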

The training data consisted of hundreds of thousands of published papers from arXiv, PubMed Central, and other open-access repositories, allowing the model to learn scientific writing style, structure, and jargon.

Potential Applications and Immediate Limitations

The researchers position AutoWrite as a productivity tool for scientists, not a replacement. Proposed use cases include:

- Rapid Drafting: Generating a first-draft manuscript from a set of notes and results, saving researchers time on structuring and initial writing.

- Grant and Proposal Writing: Assisting in the creation of complex, structured documents required for funding applications.

- Educational Tool: Helping students learn scientific writing by generating example papers for critique and analysis.

However, the paper clearly outlines major limitations:

- No Novel Science: AutoWrite synthesizes and recombines existing knowledge. It cannot conduct actual experiments or generate fundamentally new scientific insights.

- Factual Hallucinations: The system can "hallucinate" citations, fabricate data points, or propose unsound methodologies if not carefully supervised.

- Ethical & Integrity Risks: The technology could lower the barrier for generating fraudulent or low-quality papers, posing a significant challenge to academic publishers and peer review.
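One supervision step that follows from the hallucinated-citation risk is checking a generated LaTeX draft against a bibliography a human has already verified. The sketch below is an assumption about how a reviewer might do this, not a feature described in the paper: `cited_keys` and `unverified_citations` are hypothetical helpers, and the citation keys in the example are invented. It only flags keys that are missing from the verified set; it cannot tell whether a cited source actually supports the claim attached to it.

```python
import re
from typing import List, Set

# Matches \cite{...}, \citep{...}, \citet[p.~3]{...} and similar variants.
CITE_PATTERN = re.compile(r"\\cite\w*(?:\[[^\]]*\])*\{([^}]*)\}")


def cited_keys(latex: str) -> List[str]:
    """Collect citation keys in order of first appearance, without repeats."""
    keys: List[str] = []
    for group in CITE_PATTERN.findall(latex):
        for key in group.split(","):
            key = key.strip()
            if key and key not in keys:
                keys.append(key)
    return keys


def unverified_citations(latex: str, verified: Set[str]) -> List[str]:
    """Return cited keys that are absent from the human-verified set."""
    return [key for key in cited_keys(latex) if key not in verified]


if __name__ == "__main__":
    # Invented draft text and keys, for illustration only.
    draft = r"Prior work \cite{smith2020, lee2021} and \citep[p.~3]{ghost2099}."
    verified = {"smith2020", "lee2021"}
    print(unverified_citations(draft, verified))
```

Any key the check returns is a prompt for manual review, either because the reference was fabricated or because it has not yet been confirmed.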

The Wider Family of Scientific AI Tools

AutoWrite enters a growing ecosystem of AI tools aimed at the research workflow. It is conceptually adjacent to AI literature review assistants like Elicit and Scite, and code-generating research tools like AlphaCode. Its differentiation lies in its end-to-end ambition to produce a complete document, moving beyond assistance with a single task.

The Google team emphasizes that human researchers must remain "in the loop," using AutoWrite as a drafting assistant while providing rigorous fact-checking, ethical oversight, and intellectual direction.

gentic.news Analysis

This development is a logical, yet bold, extension of the trajectory we've tracked since the release of GPT-3. Automation has spread from code (GitHub Copilot, 2021) to scientific prediction and discovery (DeepMind's AlphaFold, 2020; GNoME, 2023) and now to the final, formal output of the research process: the paper itself. It represents the commoditization of scientific rhetoric.

Strategically, this aligns with Google DeepMind's established focus on AI for science, as seen in projects like AlphaFold for protein folding and GNoME for materials discovery. AutoWrite can be viewed as the complementary tool that accelerates the dissemination of findings from such discovery engines. If Google can integrate AutoWrite with its vast scholarly index (Google Scholar) and its experimental simulation tools, it could create a powerful, closed-loop research platform.

However, the ethical stakes here are exceptionally high. The peer-review system is already strained. Widespread use of such technology could produce a flood of plausible but unsubstantiated papers and trigger a crisis in scholarly trust. The onus will shift even more heavily onto reviewers and journals to detect AI-generated fabrications, potentially accelerating the adoption of AI-powered peer-review tools and starting an arms race in academic publishing. For practitioners, AutoWrite is a powerful drafting lever, but its value depends entirely on the human expert's ability to critically evaluate and validate every output. The core skill for researchers may shift from writing to meticulous editing and fact-checking.

Frequently Asked Questions

Can Google's AutoWrite AI conduct original scientific research?

No. AutoWrite is a sophisticated text and document synthesis engine. It cannot formulate novel hypotheses, design physical experiments, or analyze real, novel data. It works by recombining and reformatting existing information from its training data and retrieved documents. The "science" it presents is simulated based on patterns in past literature.

What are the main ethical concerns with AI-generated research papers?

The primary concerns are academic integrity and pollution of the scientific record. The tool could be used to: 1) Mass-produce low-quality or fraudulent papers for the purpose of padding publication records. 2) Generate convincing but entirely fabricated studies, which could mislead other researchers before being caught. 3) Undermine trust in published literature, as readers may doubt whether a paper represents human intellectual work. This necessitates new tools for AI-generated text detection and potentially new norms for disclosing AI use in manuscript preparation.

How is AutoWrite different from using ChatGPT to write a paper?

While both are LLM-based, AutoWrite is a structured, multi-agent system built specifically for the academic paper genre. Unlike a general-purpose chatbot, it has dedicated modules for literature retrieval, figure generation, and citation management, and it outputs a formally structured document. ChatGPT might help you write individual sections, but AutoWrite aims to manage the entire pipeline from outline to formatted references, with the conventions of scientific papers built into its design.

Will tools like AutoWrite put researchers out of work?

Unlikely for the foreseeable future. The tool is designed as an assistant to automate the labor-intensive writing and formatting process, not the core intellectual work of research—asking questions, designing experiments, and interpreting results. Its value is in freeing up researcher time from drafting to focus more on thinking and experimentation. However, it will change the skill set required, placing a higher premium on critical evaluation, experimental design, and oversight of AI tools.