What Happened

AI researcher and developer Rohan Paul reports that Perplexity Computer, the recently launched AI-native hardware device from Perplexity AI, now supports integration with personal health data sources. According to a post on X, the device can connect to health applications, wearable devices, lab results, and medical records. Paul described the experience as "exceeding my expectations" and noted, "my primary care physician is in my pocket."

The post includes a link to a promotional video for Perplexity Computer, suggesting the feature may be part of its evolving capabilities or a newly highlighted use case.

Context

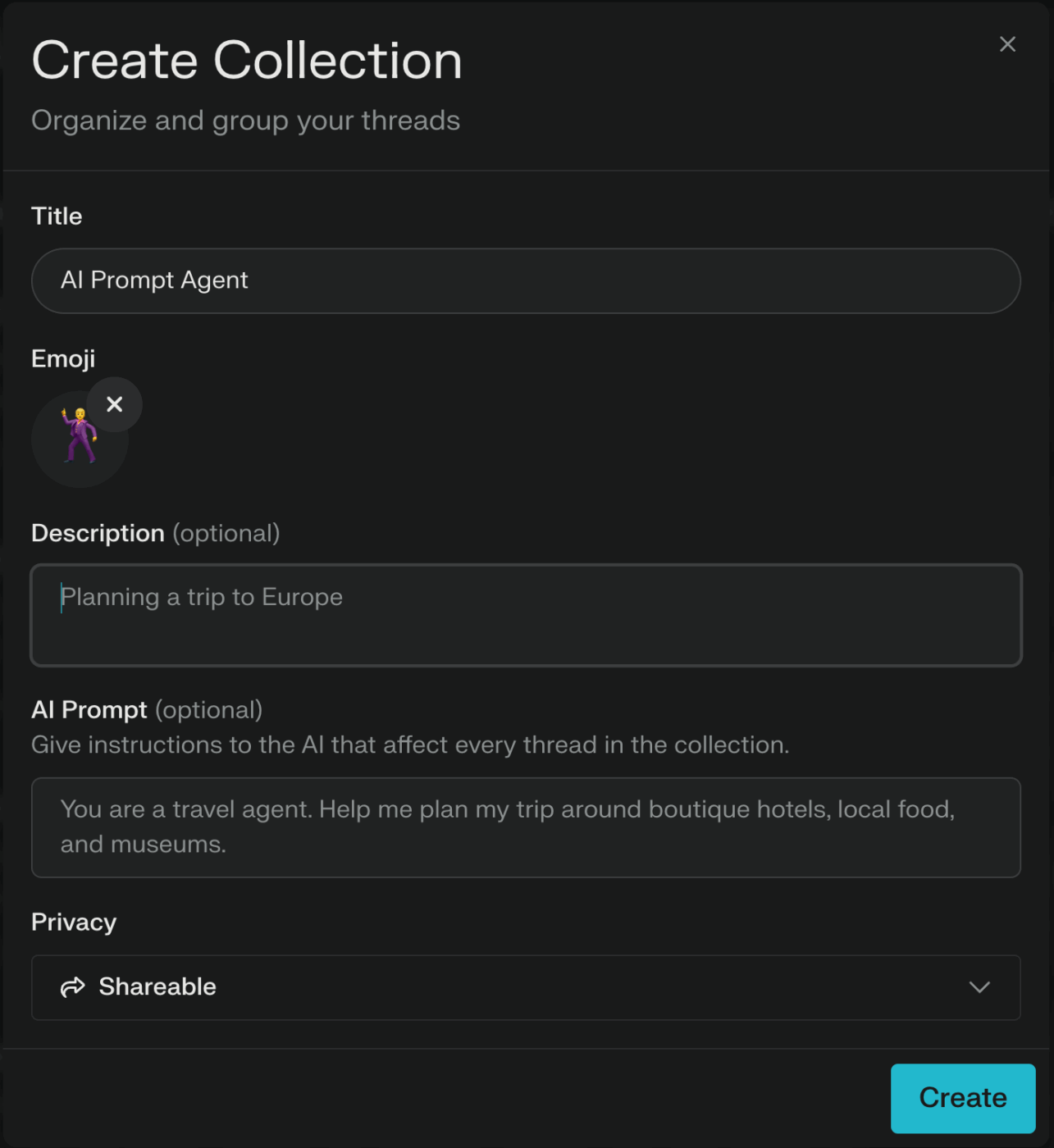

Perplexity Computer is a dedicated device running Perplexity's AI search and assistant software. Launched in June 2024, it is designed as an "answer engine" for proactive, personalized information retrieval without a traditional app-based interface. Its core proposition has been to provide verified, citation-backed answers by searching the web in real time.

This reported health integration marks a significant expansion of its data access beyond public web sources into private, personal data streams. Connecting to wearables (like Fitbit, Apple Watch, or Oura Ring), lab result portals, and electronic medical records would allow the AI to answer health-related queries with context specific to the user's current vitals, historical trends, and clinical data.

What This Means for the Device

The integration shifts Perplexity Computer from a general-purpose information device toward a potential personal health companion. The ability to synthesize data from disparate health sources (activity from a wearable, glucose levels from a lab report, and notes from a medical record) could enable it to provide summarized health updates, answer specific questions about test results, or track progress against health goals.
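To make the idea of synthesizing disparate sources concrete, here is a minimal Python sketch of one way readings from several sources could be merged into a short summary. It is purely illustrative and is not Perplexity's implementation, which the source does not describe. The `Reading` type, the source labels, the function names, and the sample values are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Reading:
    """One health data point. Hypothetical schema, for illustration only."""
    source: str    # assumed label such as "wearable" or "lab"
    metric: str    # e.g. "steps", "glucose"
    value: float
    unit: str
    taken_on: date


def latest_by_metric(readings):
    """Return the most recent reading for each metric, across all sources."""
    latest = {}
    for r in readings:
        current = latest.get(r.metric)
        if current is None or r.taken_on > current.taken_on:
            latest[r.metric] = r
    return latest


def summarize(readings):
    """Build one plain-text line per metric, sorted by metric name."""
    latest = latest_by_metric(readings)
    return [
        f"{metric}: {r.value:g} {r.unit} ({r.source}, {r.taken_on.isoformat()})"
        for metric, r in sorted(latest.items())
    ]


# Made-up sample data; not real measurements.
SAMPLE = [
    Reading("wearable", "steps", 8200.0, "steps", date(2024, 7, 1)),
    Reading("wearable", "steps", 10450.0, "steps", date(2024, 7, 2)),
    Reading("lab", "glucose", 5.4, "mmol/L", date(2024, 6, 20)),
]

if __name__ == "__main__":
    for line in summarize(SAMPLE):
        print(line)
```

Normalizing every source into one record shape before summarizing keeps the merge step independent of any particular wearable or lab portal. A real system would also need unit conversion, consent handling, and access controls, none of which are shown here.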

However, the source does not detail the technical implementation, the specific health platforms supported, or the privacy and security protocols for handling such sensitive data. These are critical considerations for users, and Perplexity would need to address them transparently.

Reported based on user feedback from @rohanpaul_ai. Official feature specifications and supported integrations should be confirmed via Perplexity's documentation.