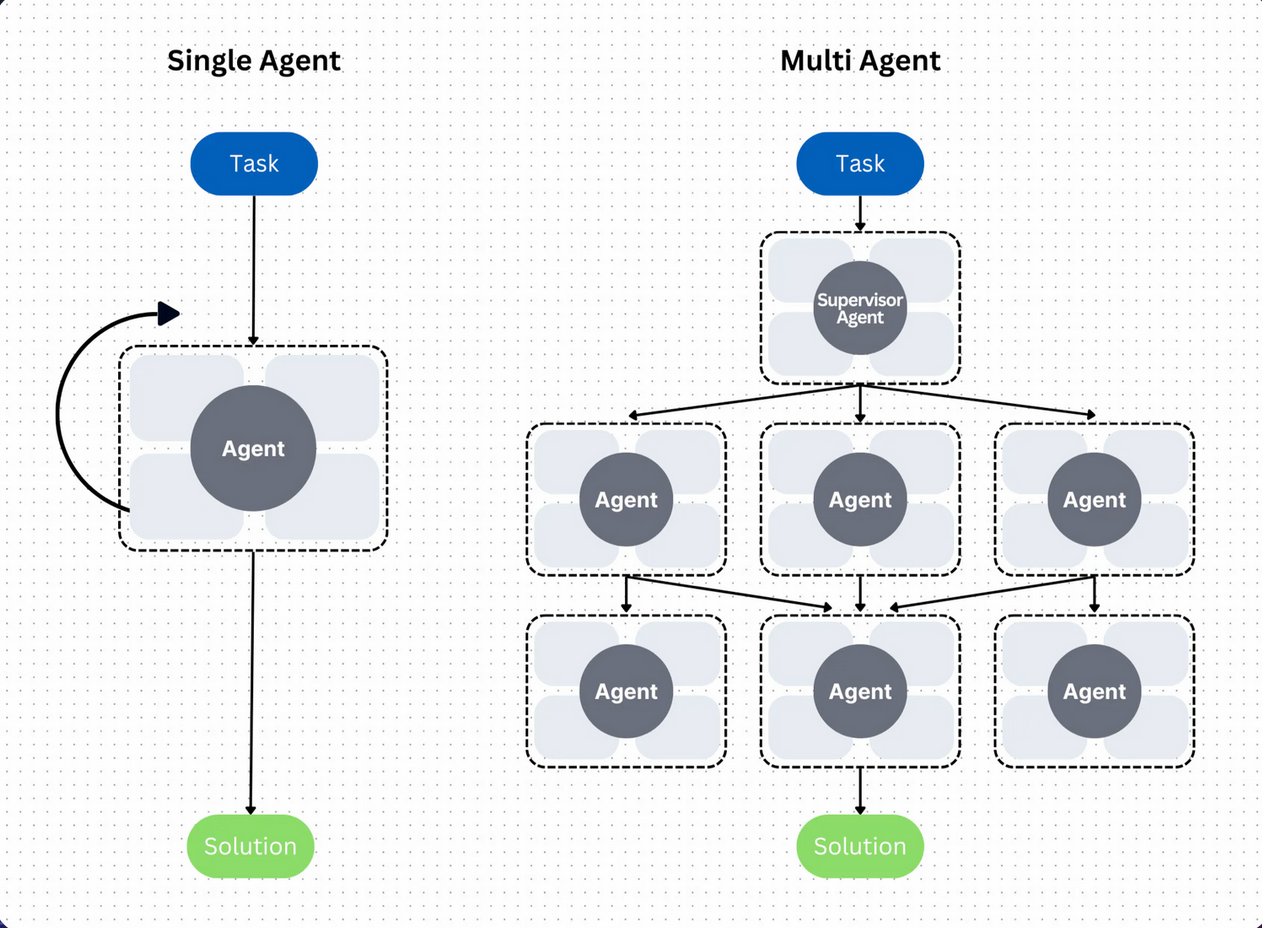

Artificial intelligence company Perplexity has announced a significant expansion of its AI agent ecosystem, designed to let AI agents run on both local hardware and cloud infrastructure while addressing the critical security vulnerabilities that plagued earlier implementations. AI researcher Rohan Paul describes the development as "OpenClaw but without the security vulnerabilities," a reference to the open-source OpenClaw agent framework and the security shortcomings it suffered from.

The OpenClaw Legacy and Security Challenges

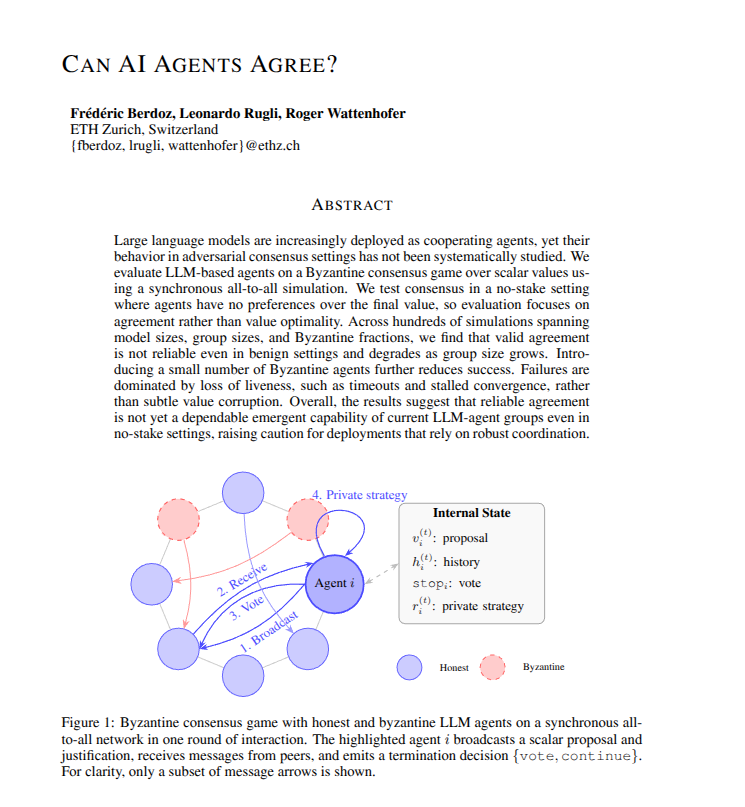

OpenClaw represents an important category in the AI agent ecosystem: open-source frameworks that let developers build and deploy AI agents across various platforms. However, as with many rapidly evolving AI technologies, early implementations often prioritized functionality over security, leaving vulnerabilities that could be exploited in production environments.

These security issues typically fell into several categories: insecure API integrations, insufficient access controls, susceptibility to prompt injection attacks, and inadequate isolation between agent processes. For organizations considering deploying AI agents on their own infrastructure, these weaknesses were significant barriers to adoption, particularly for sensitive applications or in regulated industries.

Perplexity's Expanded Ecosystem

Perplexity's expansion appears to address these concerns systematically while maintaining the accessibility that made OpenClaw appealing to developers. The company has developed an ecosystem that allows AI agents to run on both local hardware and cloud infrastructure with enhanced security measures built into the architecture.

This approach reflects growing demand for AI agents that can operate across different computing environments, from edge devices and on-premises servers to public and private cloud platforms. By building a unified ecosystem with security as a foundational principle, Perplexity aims to lower the barrier for organizations to deploy AI agents in production.

Technical Implementation and Security Features

While specific technical details weren't provided in the source material, the reference to addressing OpenClaw's vulnerabilities suggests several likely security enhancements:

Secure Communication Protocols: Implementing encrypted channels for agent-to-agent and agent-to-infrastructure communication to prevent interception or manipulation of data in transit.

Access Control and Authentication: Robust identity verification and permission systems to ensure only authorized entities can interact with or modify agent behavior.

Input Validation and Sanitization: Protection against prompt injection and other input-based attacks that could compromise agent integrity or lead to unauthorized actions.

Resource Isolation: Ensuring agents operate within constrained environments with limited access to system resources and other processes.

Audit Logging and Monitoring: Comprehensive tracking of agent activities for security analysis, compliance, and debugging purposes.
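The source does not specify how any of these mechanisms are implemented, so the following is a generic illustration, not Perplexity's design. It is a minimal Python sketch of a hypothetical "gatekeeper" that sits between an agent and the tools it may call, combining four of the features above: message authentication, a per-agent allowlist, argument validation, and audit logging. The names `Gatekeeper`, `PERMISSIONS`, `sign`, `validate_args`, the tool names, and the shared secret are all assumptions made for illustration. The HMAC signature provides integrity and authenticity only; it does not encrypt anything.

```python
import hashlib
import hmac
import json
import time
from dataclasses import dataclass, field
from pathlib import PurePosixPath

# Hypothetical shared secret; a real system would load it from a secrets manager.
SECRET_KEY = b"example-secret-key"

# Hypothetical access-control policy: the tools each agent is allowed to call.
PERMISSIONS = {
    "research-agent": {"web_search", "read_file"},
    "report-agent": {"read_file"},
}


def sign(request: dict) -> str:
    """HMAC-SHA256 over canonical JSON: proves integrity and origin, does not encrypt."""
    body = json.dumps(request, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(SECRET_KEY, body, hashlib.sha256).hexdigest()


def validate_args(tool: str, args: object) -> None:
    """Input validation: reject arguments outside each tool's expected shape."""
    if not isinstance(args, dict):
        raise ValueError("args must be an object")
    if tool == "read_file":
        path = args.get("path")
        if not isinstance(path, str):
            raise ValueError("path must be a string")
        candidate = PurePosixPath(path)
        # Block absolute paths and '..' segments that could escape the agent's workspace.
        if candidate.is_absolute() or ".." in candidate.parts:
            raise ValueError("path escapes workspace")
    elif tool == "web_search":
        query = args.get("query")
        if not isinstance(query, str) or not 0 < len(query) <= 500:
            raise ValueError("query must be 1-500 characters")
    else:
        # Default deny: a tool with no validator is never executed.
        raise ValueError(f"no validator for tool {tool!r}")


@dataclass
class Gatekeeper:
    """Checks every tool call an agent makes and records the decision."""

    audit_log: list = field(default_factory=list)

    def authorize(self, request: dict, signature: str) -> bool:
        agent = request.get("agent")
        tool = request.get("tool")
        decision, reason = "deny", ""
        try:
            # 1. Authentication: the request must carry a valid signature.
            if not hmac.compare_digest(sign(request), signature):
                raise PermissionError("bad signature")
            # 2. Access control: the agent must be allowed to use this tool.
            if tool not in PERMISSIONS.get(agent, set()):
                raise PermissionError(f"{agent!r} may not call {tool!r}")
            # 3. Input validation on the tool arguments.
            validate_args(tool, request.get("args"))
            decision = "allow"
        except (PermissionError, ValueError) as exc:
            reason = str(exc)
        # 4. Audit logging: every decision is recorded, allowed or denied.
        self.audit_log.append(
            {"ts": time.time(), "agent": agent, "tool": tool,
             "decision": decision, "reason": reason}
        )
        return decision == "allow"


if __name__ == "__main__":
    gate = Gatekeeper()
    ok = {"agent": "research-agent", "tool": "read_file", "args": {"path": "notes/todo.txt"}}
    print(gate.authorize(ok, sign(ok)))  # True
    denied = {"agent": "report-agent", "tool": "web_search", "args": {"query": "agents"}}
    print(gate.authorize(denied, sign(denied)))  # False: tool not in allowlist
    escape = {"agent": "research-agent", "tool": "read_file", "args": {"path": "../etc/passwd"}}
    print(gate.authorize(escape, sign(escape)))  # False: path escapes workspace
    for entry in gate.audit_log:
        print(entry["decision"], entry["reason"])
```

The sketch is default-deny: a request is rejected unless it passes every check, and a tool without a validator is never allowed. It deliberately leaves out several pieces a production system would need, such as TLS for encryption in transit, a timestamp or nonce inside the signed payload to prevent replay, and running the tools themselves in an isolated process or container for resource isolation.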

Implications for AI Development and Deployment

This development has several important implications for the broader AI ecosystem:

Enterprise Adoption: By addressing security concerns, Perplexity's approach makes AI agents more viable for enterprise applications where security and compliance are non-negotiable requirements.

Edge Computing Integration: The ability to run securely on local hardware opens possibilities for AI agents in edge computing scenarios, from IoT devices to on-premises industrial systems.

Developer Accessibility: Maintaining an open-source or accessible approach while enhancing security could accelerate innovation by allowing more developers to build on a secure foundation.

Industry Standards: As more companies address security in AI agent frameworks, we may see the emergence of industry standards for secure AI agent deployment.

The Competitive Landscape

Perplexity's move comes amid increasing competition in the AI agent space, with companies like OpenAI, Anthropic, and various startups developing their own agent frameworks. The emphasis on security differentiates Perplexity's approach, particularly for applications where data privacy and system integrity are paramount.

This focus on security-first AI agents could position Perplexity favorably in sectors like finance, healthcare, and government, where regulatory requirements and risk considerations have slowed AI adoption compared to less regulated industries.

Future Developments and Open Questions

The expansion of Perplexity's AI agent ecosystem raises several questions for future development:

Will the security implementations be open to community review and contribution?

How will performance be balanced with security overhead, particularly on resource-constrained local hardware?

What specific use cases are most immediately enabled by this more secure approach?

As the ecosystem evolves, we can expect to see more detailed documentation, case studies, and potentially benchmarks comparing security and performance characteristics against other AI agent frameworks.

Conclusion

Perplexity's expansion of its AI agent ecosystem represents an important maturation in the development of deployable AI agents. By directly addressing the security vulnerabilities that limited earlier implementations like OpenClaw, while maintaining accessibility for developers, the company is helping to bridge the gap between experimental AI agents and production-ready systems.

This development reflects a broader trend in AI toward more secure, reliable, and enterprise-ready implementations as the technology moves from research labs to real-world applications. As organizations increasingly look to integrate AI agents into their operations, security considerations will continue to shape both technological development and adoption patterns.

Source: Rohan Paul AI on X (formerly Twitter) - https://x.com/rohanpaul_ai/status/2032165739030024267