A new piece in the Harvard Business Review argues that the fundamental risk of deploying AI agents is not their potential to generate "bad text," but their capacity to take "bad actions" in the real world. The article, highlighted by AI commentator Rohan Paul, contends that firms mistakenly treat autonomous agents like conventional software, leading to failures analogous to employees granted excessive access with insufficient oversight.

The core thesis is that safe, effective AI agent deployment requires a fundamental shift in management philosophy, modeled on human resource practices. That shift rests on four operational elements:

- Identity & Permissions: Each agent requires a distinct digital identity with explicitly defined permissions, mirroring an employee's access controls.

- Trusted Data Sources: Agents must operate from curated, vetted data sources to prevent decisions based on corrupted or irrelevant information.

- Hard Rule Checks: Mandatory, programmatic checks must be placed between an agent's model output and any real-world transaction or action it can initiate.

- Full Audit Trail: A complete, immutable log must record everything an agent reads, every decision it makes, and every action it takes.
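As a rough illustration of how the first and third elements might fit together, the sketch below gives an agent a distinct identity with explicit permissions and places a deterministic check between the model's proposed action and its execution. Everything named here (`AgentIdentity`, `ProposedAction`, `check_action`, the `spend_limit` field, the `procurement-bot-01` agent) is a hypothetical assumption made for the example; the HBR article does not prescribe an implementation.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class AgentIdentity:
    """A distinct identity with explicit permissions (all fields hypothetical)."""
    agent_id: str
    allowed_actions: FrozenSet[str]
    spend_limit: float  # assumed per-action cap, in the firm's currency


@dataclass(frozen=True)
class ProposedAction:
    """What the model output asks to do, before anything is executed."""
    name: str
    amount: float = 0.0


def check_action(identity: AgentIdentity, action: ProposedAction) -> Tuple[bool, str]:
    """Deterministic gate that runs between model output and execution."""
    if action.name not in identity.allowed_actions:
        return False, f"{identity.agent_id} may not perform {action.name}"
    if action.amount > identity.spend_limit:
        return False, f"amount {action.amount} exceeds limit {identity.spend_limit}"
    return True, "ok"


# Hypothetical agent that may only create purchase orders up to 500.
procurement_bot = AgentIdentity(
    agent_id="procurement-bot-01",
    allowed_actions=frozenset({"create_purchase_order"}),
    spend_limit=500.0,
)

print(check_action(procurement_bot, ProposedAction("create_purchase_order", 120.0)))
print(check_action(procurement_bot, ProposedAction("create_purchase_order", 9000.0)))
print(check_action(procurement_bot, ProposedAction("send_email")))
```

The point of the design is that the check is ordinary code rather than another model call, so a flawed line of reasoning in the agent cannot talk its way past it.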

To implement this, the HBR article proposes an "autonomy ladder" as a safe rollout pathway. Organizations should not grant full autonomy immediately. Instead, agents should progress through stages:

- Stage 1: Drafts & Recommendations. The agent generates proposals or content for human review and approval.

- Stage 2: Guarded Retrieval. The agent can fetch information from trusted sources but cannot act.

- Stage 3: Supervised Actions. The agent can execute specific actions, but each requires real-time human sign-off.

- Stage 4: Narrow Bounded Autonomy. The agent operates independently within a strictly defined, high-trust domain with all four governance layers (identity, data, rules, audit) fully enforced.
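One way to picture the ladder is as an ordered set of stages that gates what an agent may do. The sketch below is an assumption-laden illustration, not the article's method: the names `AutonomyStage`, `may_retrieve`, and `may_execute` are invented here, and it assumes that each stage keeps the capabilities of the stages below it and that a Stage 4 agent is simply refused outside its bounded domain.

```python
from enum import IntEnum


class AutonomyStage(IntEnum):
    """The four rungs described above, ordered from least to most autonomy."""
    DRAFTS_AND_RECOMMENDATIONS = 1
    GUARDED_RETRIEVAL = 2
    SUPERVISED_ACTIONS = 3
    NARROW_BOUNDED_AUTONOMY = 4


def may_retrieve(stage: AutonomyStage) -> bool:
    """Retrieval from trusted sources is assumed to start at Stage 2."""
    return stage >= AutonomyStage.GUARDED_RETRIEVAL


def may_execute(
    stage: AutonomyStage,
    human_approved: bool = False,
    in_bounded_domain: bool = False,
) -> bool:
    """Decide whether a proposed action may run at the given stage."""
    if stage < AutonomyStage.SUPERVISED_ACTIONS:
        return False  # Stages 1 and 2 never act
    if stage == AutonomyStage.SUPERVISED_ACTIONS:
        return human_approved  # every action needs a human sign-off
    # Stage 4: independent, but only inside the bounded domain (assumption:
    # anything outside the domain is refused rather than escalated).
    return in_bounded_domain


print(may_execute(AutonomyStage.GUARDED_RETRIEVAL, human_approved=True))
print(may_execute(AutonomyStage.SUPERVISED_ACTIONS, human_approved=True))
print(may_execute(AutonomyStage.NARROW_BOUNDED_AUTONOMY, in_bounded_domain=True))
```

Using an ordered enum keeps promotion and demotion between stages a one-line configuration change, which suits a rollout where trust is earned gradually.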

The framework explicitly rejects the notion that AI safety is primarily about mitigating language model hallucinations. Instead, it focuses on action safety: preventing an agent from, for example, making an unauthorized purchase, sending a damaging email, or altering a database based on flawed reasoning.

gentic.news Analysis

This HBR framework formalizes a growing operational consensus in the AI engineering community, directly responding to the high-profile failures of early agentic systems. It aligns with technical research into agent oversight mechanisms and reward modeling for complex tasks, which we covered in our analysis of Anthropic's Constitutional AI paper. The call for a full audit trail mirrors the MLOps and LLMOps best practices for model lineage and dataset provenance that have become standard for static models, now correctly extended to dynamic, multi-step agents.

The proposed "autonomy ladder" is a pragmatic, risk-managed deployment strategy that leading AI labs are already implementing internally. This follows a pattern of industry self-regulation emerging in the absence of comprehensive government frameworks, similar to the voluntary safety commitments made by OpenAI, Google, and Anthropic. The emphasis on treating agents as employees with job descriptions connects to ongoing work in mechanistic interpretability: if we cannot understand an agent's "thought process," we must at least rigidly bound its behavior and meticulously log its actions.

This business-focused guidance arrives as venture funding for AI agent startups continues to surge, yet real-world production deployments remain cautious. The framework provides a concrete checklist for enterprises piloting agents for tasks like customer support triage, automated procurement, or code deployment. It also creates a potential market for agent governance platforms that provide the identity, rule-checking, and audit layers HBR describes, a sector we noted in our coverage of the emerging AI compliance software landscape.

Frequently Asked Questions

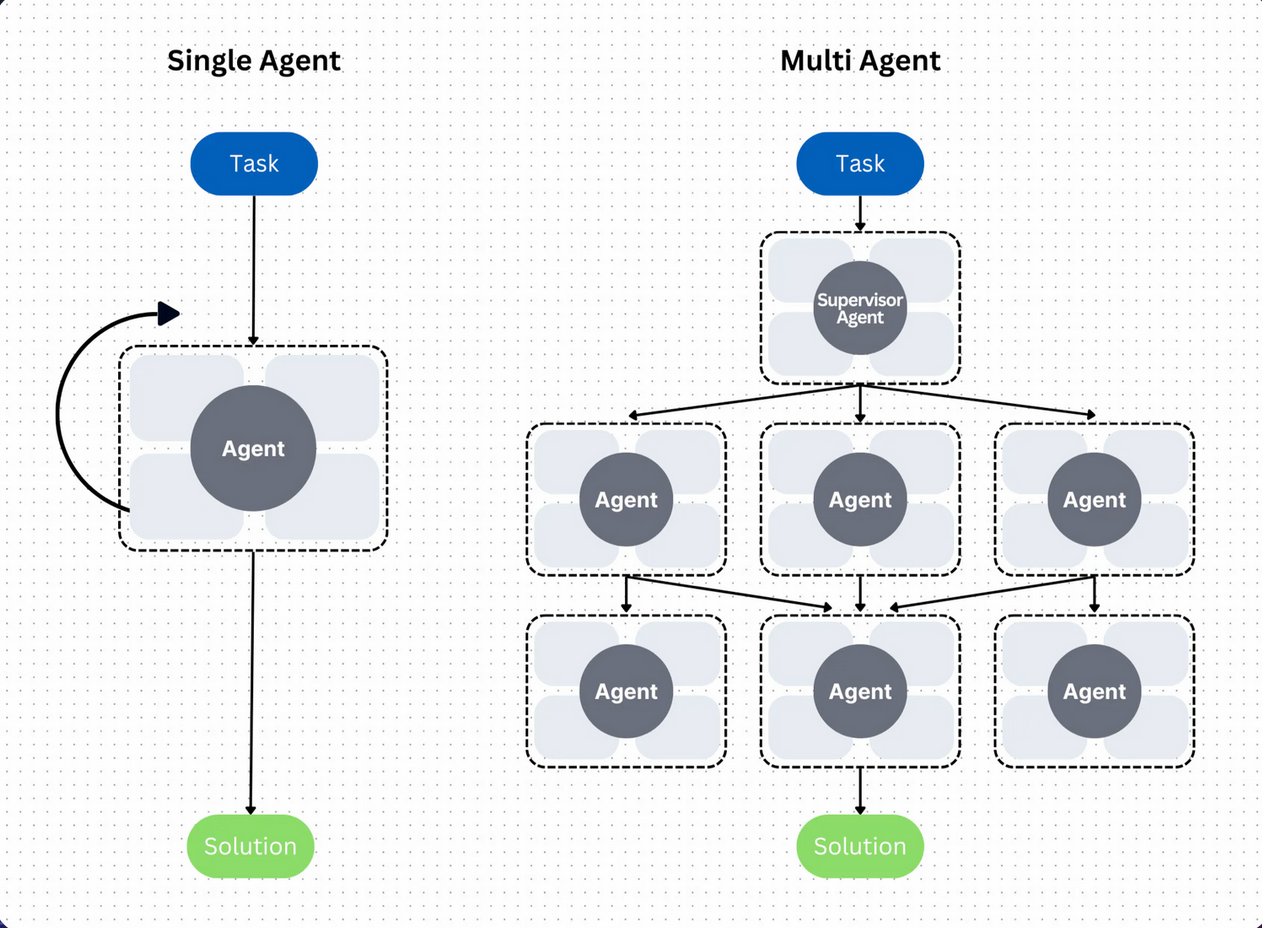

What is an AI agent?

An AI agent is an artificial intelligence system that can perceive its environment, make decisions, and take actions to achieve specific goals autonomously, often over multiple steps. Unlike a chatbot that simply generates text, an agent can execute tasks like browsing the web, using software APIs, or controlling devices.

Why does HBR say AI agents need a "job description"?

The "job description" metaphor means clearly defining the agent's goal, the scope of its authority, the resources it can use, and the actions it is permitted to take. This prevents scope creep and unintended actions, just as a clear job description prevents an employee from overstepping their role. It's a foundational document for setting permissions and hard rule checks.

What is the "autonomy ladder" for AI agents?

The autonomy ladder is a phased deployment strategy to mitigate risk. It starts with agents having zero autonomy (only making suggestions), then gradually grants more independence as trust is built through stages of guarded information retrieval, human-supervised actions, and finally, narrow bounded autonomy for well-understood tasks within a tightly controlled environment.

How do you create a "full audit trail" for an AI agent?

An audit trail involves logging every input the agent receives (e.g., user query, data retrieved), every intermediate step and decision in its reasoning process (its "chain of thought"), and every output or action it attempts or completes. This requires instrumenting the agent's runtime with monitoring tools that capture this telemetry, similar to application performance monitoring (APM) but focused on decision logic and action history.
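A minimal sketch of such a log, assuming a simple in-memory design: each entry stores the hash of the previous entry, so any later edit to a recorded read, decision, or action breaks the chain and is detectable. The class name `AuditLog`, the `kind` labels, and the sample agent and payloads are hypothetical. A hash chain only gives tamper evidence; actual immutability would additionally require write-once storage, which is outside this example.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained record of what an agent read, decided, and did."""

    def __init__(self) -> None:
        self._entries: list = []

    def record(self, agent_id: str, kind: str, detail: dict) -> dict:
        """Append one event; `detail` must be JSON-serializable."""
        body = {
            "agent_id": agent_id,
            "kind": kind,  # e.g. "read", "decision", "action"
            "detail": detail,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self._entries[-1]["hash"] if self._entries else GENESIS_HASH,
        }
        entry = {**body, "hash": _digest(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link to the previous entry."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("support-bot-01", "read", {"source": "kb/refund-policy"})
log.record("support-bot-01", "decision", {"summary": "refund is within policy"})
log.record("support-bot-01", "action", {"name": "issue_refund", "amount": 25.0})
print(log.verify())
```

In practice the `record` calls would be made by the agent runtime itself rather than by the agent's own model output, so the agent cannot choose what gets logged.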