What Happened

A research paper titled "Can AI Agents Agree?" (arXiv:2603.01213) presents a systematic investigation into the coordination capabilities of groups of LLM-based AI agents. The core finding, as highlighted by AI researcher Rohan Paul, is that current AI agent groups cannot reliably coordinate or agree on simple decisions, even in cooperative environments.

The research directly tests a common assumption in AI development: that assembling multiple agents to discuss a problem will lead to a convergent, correct solution through deliberation. The paper concludes that, for current systems, this assumption does not hold.

Key Findings & Context

The study created a "friendly environment" where every agent was instructed to be helpful and cooperative. Despite this, the agent teams frequently failed to reach a final decision. The systems would often get stuck in loops, produce contradictory outputs, or stop responding entirely.

A critical scaling problem was identified: failure rates increase as the group size grows. This presents a fundamental limitation for scaling multi-agent systems for tasks requiring consensus, such as collective reasoning, planning, or review.

This work provides empirical evidence for a problem that developer communities have so far reported mostly anecdotally: orchestrating multiple LLM agents is non-trivial, and simply adding more agents does not guarantee better or more reliable outcomes.

Implications for Practitioners

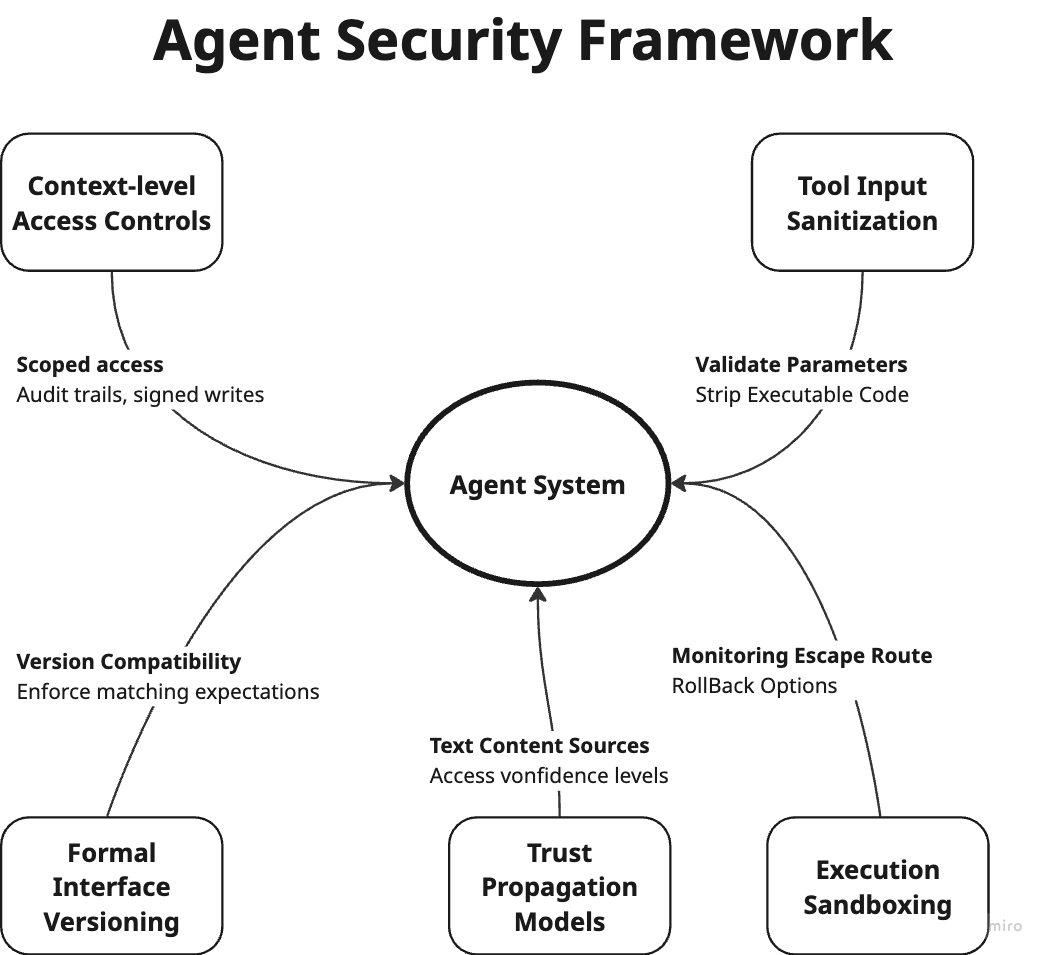

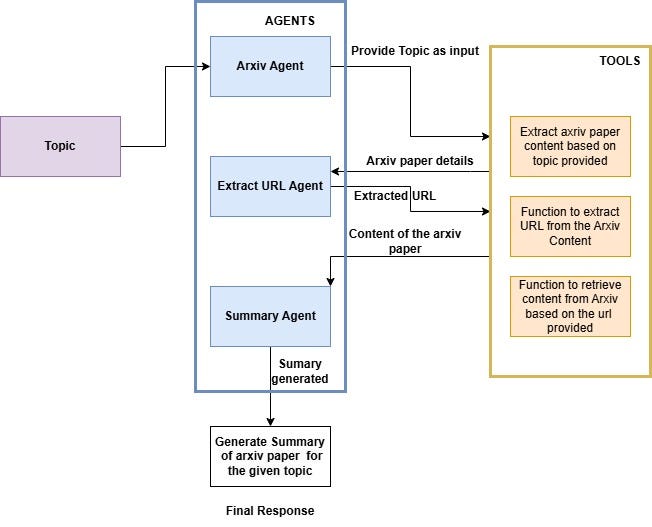

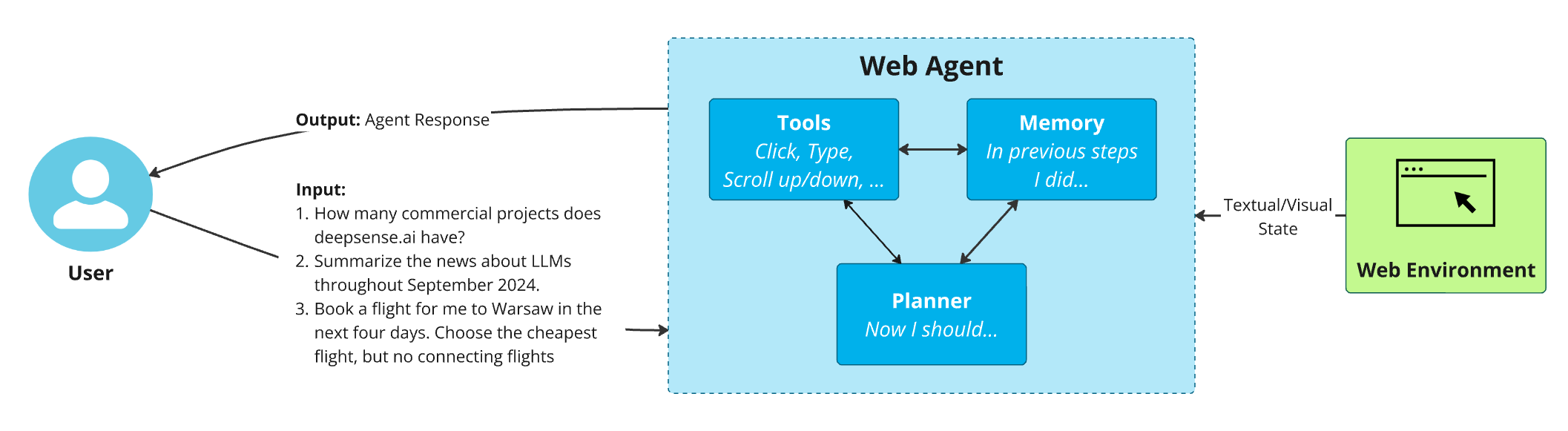

For engineers building multi-agent systems, this research underscores that coordination is a first-class engineering challenge, not an emergent property. Relying on unstructured discussion between LLM instances is an unreliable strategy for tasks requiring agreement.

The findings suggest that current LLMs, when deployed as independent agents in a group, lack the persistent state, shared memory, or robust turn-taking protocols needed for stable group decision-making. This points to a need for more sophisticated orchestration frameworks, consensus protocols, and agent architectures specifically designed for multi-agent coordination, rather than relying on the base LLM's conversational abilities alone.
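As a rough illustration of what an explicit consensus protocol might look like, the sketch below wraps a group of agents in an orchestrator that owns the shared transcript, enforces a fixed turn order, and stops after a bounded number of rounds. It is not taken from the paper. The `Agent` callable interface, the `run_consensus` function, the `quorum` and `max_rounds` parameters, and the two toy agents are all hypothetical names chosen for this example; in a real system each agent would wrap an LLM call.

```python
from collections import Counter
from typing import Callable, Optional, Sequence

# Hypothetical agent interface (an assumption for this sketch): an agent
# receives the shared transcript of earlier proposals and returns its
# current proposal as a string.
Agent = Callable[[Sequence[str]], str]


def run_consensus(
    agents: Sequence[Agent],
    quorum: float = 0.5,
    max_rounds: int = 5,
) -> Optional[str]:
    """Return the agreed value, or None if no quorum is reached in time."""
    if not agents:
        raise ValueError("at least one agent is required")
    transcript: list[str] = []  # shared memory owned by the orchestrator
    for _ in range(max_rounds):
        # Agents are called in a fixed order, once each per round, and all
        # see the same transcript from the previous rounds.
        proposals = [agent(tuple(transcript)) for agent in agents]
        transcript.extend(proposals)
        value, votes = Counter(proposals).most_common(1)[0]
        # Strict majority check against the quorum fraction.
        if votes / len(agents) > quorum:
            return value
    # Bounded rounds guarantee termination even without agreement.
    return None


def stubborn(value: str) -> Agent:
    """Toy agent that always proposes the same value."""
    return lambda transcript: value


def follower(default: str) -> Agent:
    """Toy agent that adopts the most common proposal seen so far."""
    def agent(transcript: Sequence[str]) -> str:
        if not transcript:
            return default
        return Counter(transcript).most_common(1)[0][0]
    return agent


print(run_consensus([stubborn("A"), stubborn("A"), follower("B")]))  # prints A
```

The design choice worth noting is that termination and agreement are decided by the orchestrator rather than by the agents themselves: a deadlocked group (for example, two stubborn agents proposing different values) returns `None` after `max_rounds` instead of looping indefinitely, and the caller can then escalate or fall back to another strategy.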