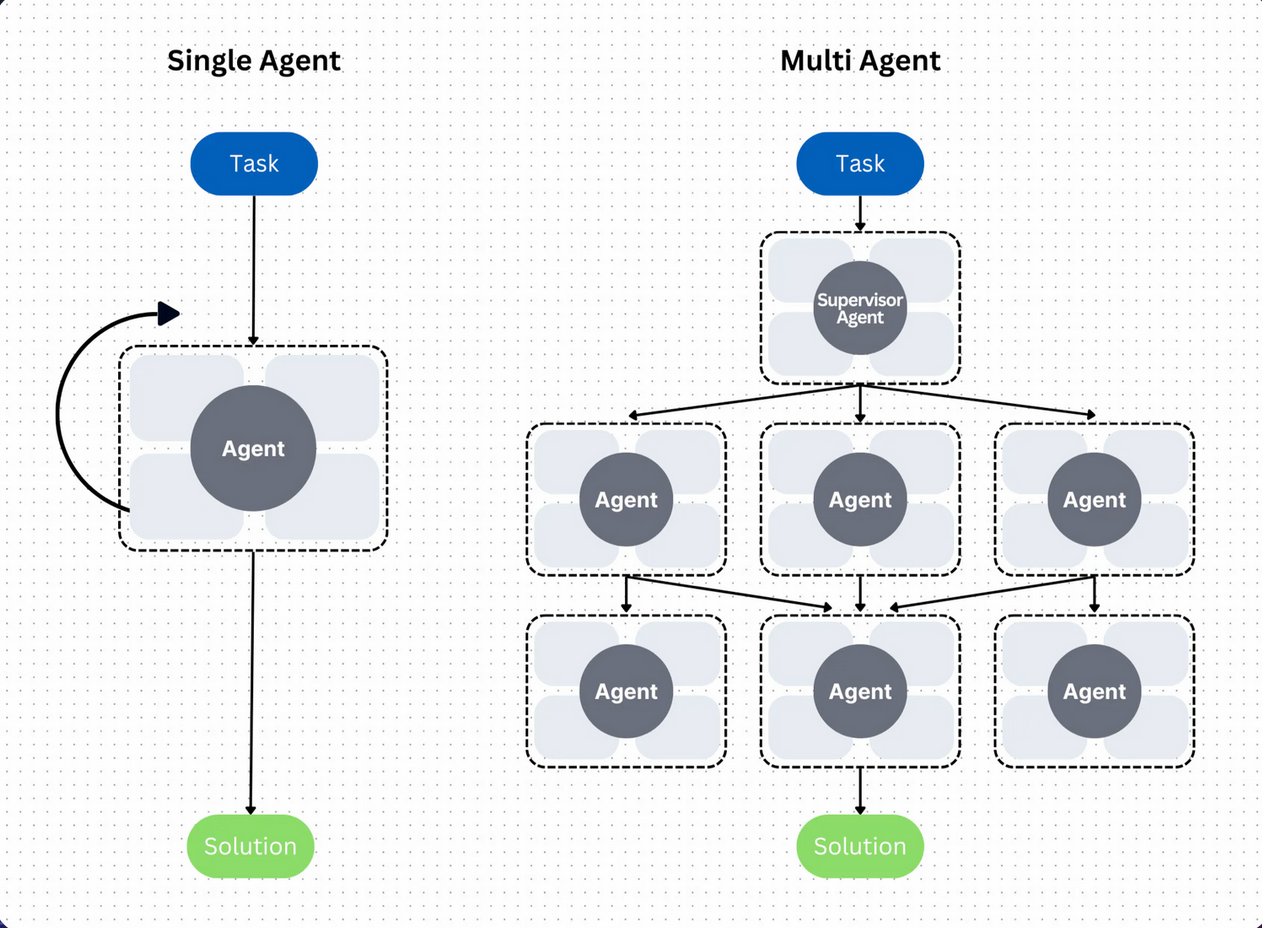

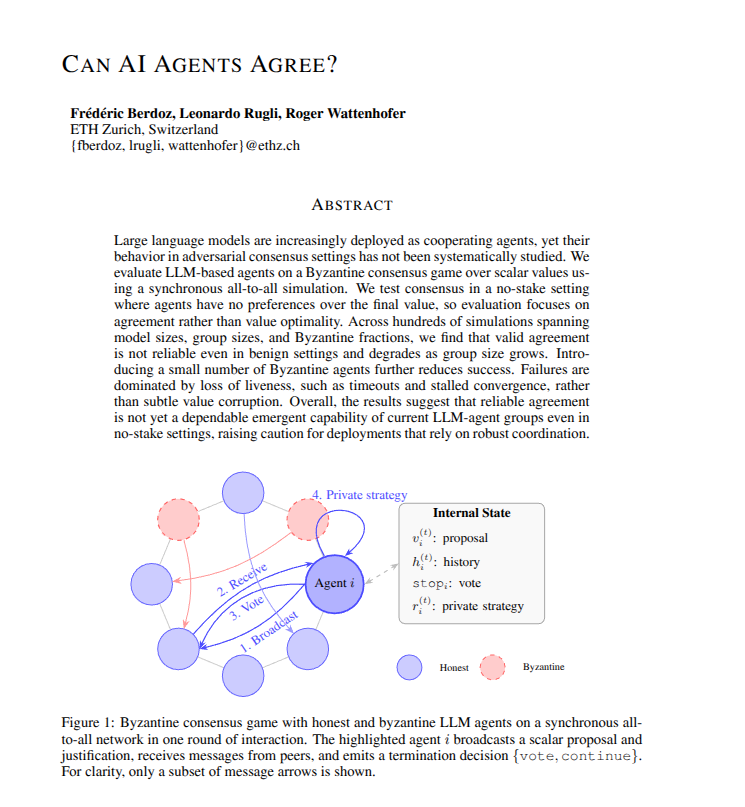

A new, comprehensive research paper highlighted by AI researcher Rohan Paul identifies a critical bottleneck in the development of practical AI agents. The core thesis: AI agents fail not at calling individual tools, but at coordinating many tools reliably over time.

This distinction reframes a fundamental challenge in the field. While significant effort has been spent on improving an agent's ability to correctly invoke a single API or function (tool calling), the paper suggests the real breakdown occurs in the orchestration layer—managing sequences of tool calls, maintaining context across steps, handling errors, and adapting plans over longer horizons.

What the Paper Examines

The paper, which has garnered attention on social platforms, presents a systematic analysis of agent failure modes. It moves beyond evaluating agents on isolated tool-use tasks and instead focuses on complex workflows that require:

- Sequential Tool Use: Chaining multiple, dependent tool calls where the output of one is the input to the next.

- State Management: Maintaining a consistent understanding of the task state and progress across potentially dozens of steps.

- Error Recovery & Replanning: Detecting when a tool call fails or produces an unexpected result, and dynamically adjusting the subsequent plan.

- Long-Horizon Planning: Breaking down a high-level objective into a correct and efficient sequence of low-level tool actions.
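To make these four requirements concrete, here is a minimal sketch of an orchestration loop. It is not taken from the paper: `WorkflowState`, `run_workflow`, and the toy tools are hypothetical names chosen for illustration. It assumes each tool is a plain function whose output feeds the next step, and it uses simple retries as the only recovery strategy (no replanning).

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional

# Hypothetical type: a tool takes the previous step's output and returns its own.
Tool = Callable[[Any], Any]


@dataclass
class WorkflowState:
    """Explicit task state carried across steps (illustrative structure)."""
    outputs: dict = field(default_factory=dict)  # step name -> result
    log: list = field(default_factory=list)      # human-readable trace
    failed_at: Optional[str] = None              # step that exhausted its retries


def run_workflow(steps, initial_input, max_retries=2):
    """Run (name, tool) pairs in order, feeding each output to the next tool.

    A failing tool is retried up to `max_retries` times. If it still fails,
    the workflow stops and records where it stopped.
    """
    state = WorkflowState()
    current = initial_input
    for name, tool in steps:
        for attempt in range(1, max_retries + 2):
            try:
                current = tool(current)
            except Exception as exc:
                state.log.append(f"{name}: failed (attempt {attempt}): {exc}")
            else:
                state.outputs[name] = current
                state.log.append(f"{name}: ok (attempt {attempt})")
                break
        else:
            # Retries exhausted: stop rather than feed bad data downstream.
            state.failed_at = name
            return state
    return state


# Toy tools (assumptions for the demo): one reliable, one that fails once.
_calls = {"double": 0}


def add_one(x):
    return x + 1


def flaky_double(x):
    _calls["double"] += 1
    if _calls["double"] == 1:
        raise TimeoutError("transient failure")
    return x * 2


state = run_workflow([("add_one", add_one), ("double", flaky_double)], 3)
print(state.outputs)
print(state.log)
```

Keeping the state in an explicit object, rather than only in the model's context, is one design choice among several. A real agent would also need to decide when to replan instead of retrying the same call.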

The Implication: A Shift in Research Focus

The argument implies that benchmark suites focusing on single-turn tool calling (e.g., "call this API with these parameters") may not adequately stress-test agents for real-world deployment. The harder problem is orchestration reliability.

For developers, this means an agent that aces a benchmark for function calling might still fail miserably at a task like "analyze this sales data, draft a report, email it to the team, and schedule a follow-up meeting," because it cannot reliably manage the four-tool sequence and the state transitions between them.

The Path Forward

The paper's analysis suggests several directions for improvement:

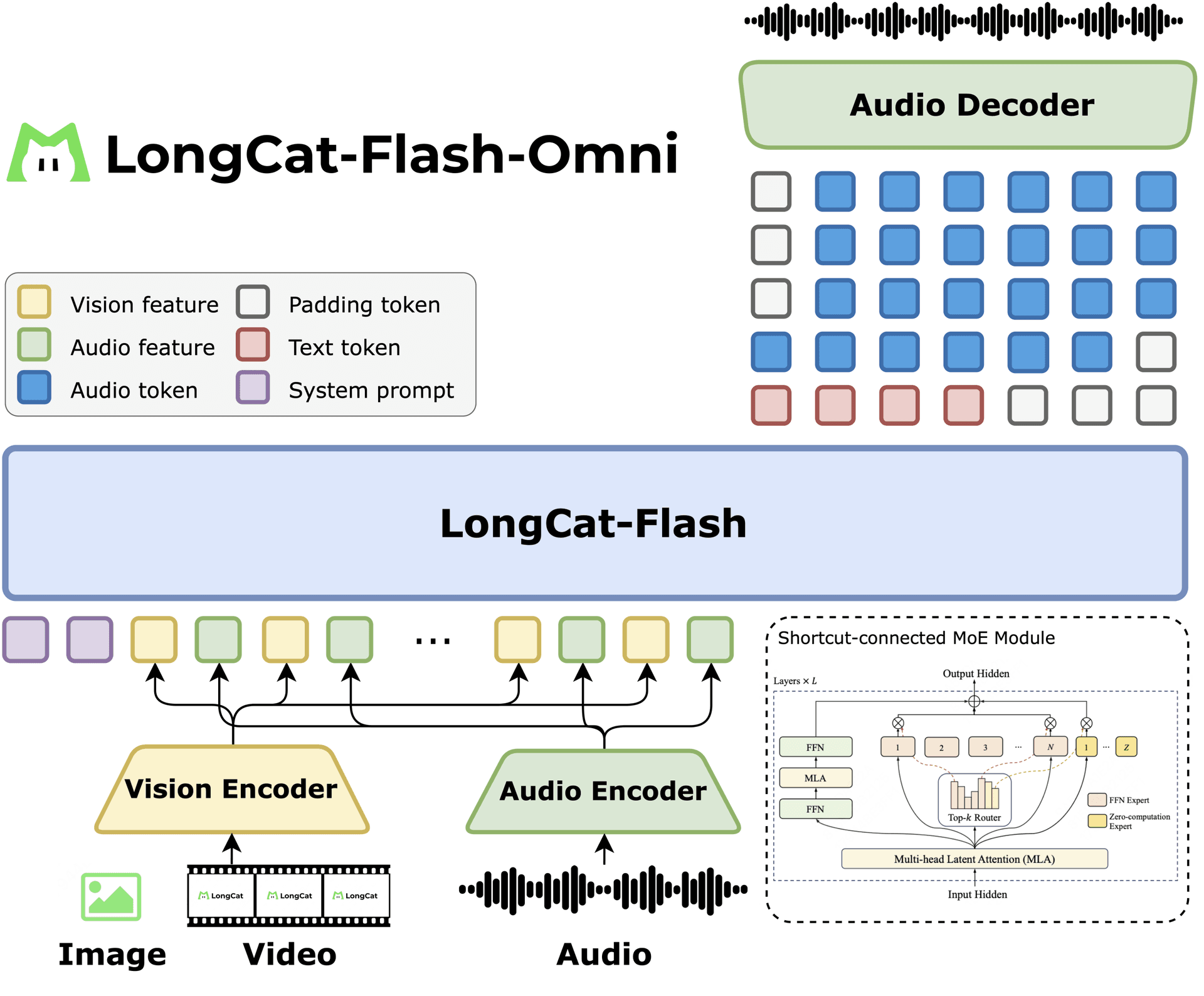

- Advanced Memory & State Architectures: Moving beyond simple context windows to more structured, persistent, and queryable state representations that an agent can reliably reference and update.

- Better Planning Algorithms: Integrating more robust classical planning techniques or learned planners that can generate and revise multi-step plans conditioned on real-time outcomes.

- Benchmarks for Coordination: Developing new evaluation frameworks that specifically measure an agent's ability to complete multi-tool, multi-step workflows with a high success rate.
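As a rough illustration of what a coordination-focused metric could look like, the sketch below scores the same trial records two ways: per-call accuracy and end-to-end workflow success. The `Trial` structure, the function names, and the sample data are all hypothetical and not drawn from the paper. It assumes each step in a trial is graded independently as correct or incorrect.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    """One attempt at a multi-step workflow (hypothetical record format)."""
    step_ok: list  # one bool per step: was that tool call correct?


def per_step_accuracy(trials):
    """Fraction of individual tool calls that were correct, across all trials."""
    total = sum(len(t.step_ok) for t in trials)
    if total == 0:
        raise ValueError("no steps to score")
    return sum(sum(t.step_ok) for t in trials) / total


def end_to_end_success_rate(trials):
    """Fraction of trials in which every step was correct."""
    if not trials:
        raise ValueError("no trials to score")
    return sum(all(t.step_ok) for t in trials) / len(trials)


# Made-up data: four attempts at a four-step workflow.
trials = [
    Trial([True, True, True, True]),
    Trial([True, True, False, True]),
    Trial([True, False, True, True]),
    Trial([True, True, True, False]),
]

print(per_step_accuracy(trials))        # 13 of 16 calls correct
print(end_to_end_success_rate(trials))  # 1 of 4 workflows fully correct
```

In this invented sample, most individual calls are correct while only one workflow completes. A single-call benchmark would hide that gap.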

gentic.news Analysis

This paper touches on the central, unsolved problem of agentic reliability, which has become the defining hurdle for moving AI from a chat-based co-pilot to an autonomous workforce. As we covered in our analysis of Devika and other coding agents, the gap between a promising demo and a production-ready system is vast, and it's precisely this coordination gap that causes most failures.

The focus on multi-step coordination aligns with a broader industry trend we've tracked. In recent months, major players have shifted resources toward workflow automation and stateful agents. This includes efforts from companies like Sierra (founded by Bret Taylor and Clay Bavor), which is building enterprise agents designed for long-running, multi-turn conversations with tool use, and Adept, which continues its foundational work on agents that act across digital interfaces. The paper provides a formal framework for what these companies are empirically discovering: tool calling is solved; tool orchestration is not.

Furthermore, this research direction directly contradicts a simplistic scaling hypothesis—that simply feeding more tool-description data into a larger model will solve agent reliability. It points instead to a need for architectural innovation in how agents reason over state, plan, and recover. This echoes the conclusions from our deep-dive on the SWE-Agent paper, which found that simple, structured scratchpads for planning and editing significantly outperformed raw GPT-4 on software engineering tasks, highlighting the importance of the agent's control loop, not just its underlying LLM.

Frequently Asked Questions

What is an AI agent?

An AI agent is a system that uses a large language model (LLM) as a reasoning engine to perceive its environment (often text-based), make decisions, and take actions by calling tools (APIs, functions, code execution) to achieve a given goal autonomously.

What's the difference between tool calling and tool coordination?

Tool calling is the single action of correctly formatting a request to a specific function or API (e.g., get_weather(location="Paris")). Tool coordination is the higher-order skill of deciding which tools to call and in what order (e.g., Get data → Analyze data → Format chart → Write summary → Email report), based on the evolving state of the task, and of recovering from failures mid-sequence.

Why is coordinating tools over time so hard for AI agents?

It compounds multiple difficult problems: long-horizon planning, maintaining a consistent internal representation of the task state across many steps, and dealing with non-determinism (tools can fail or return unexpected results). Current LLMs, which form the "brain" of most agents, are stateless and prone to reasoning errors over long contexts, making this sequential decision-making unstable.
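A back-of-the-envelope calculation shows why length alone hurts. The sketch below assumes, purely for illustration, that every step succeeds independently with the same probability. Real agents violate that assumption, and the 0.95 figure is made up rather than taken from the paper. The function names are hypothetical.

```python
def workflow_success_probability(step_success_prob, num_steps):
    """Chance that all steps succeed, assuming independent, identical steps."""
    return step_success_prob ** num_steps


def required_step_reliability(target_workflow_prob, num_steps):
    """Per-step success probability needed to hit a target end-to-end rate."""
    return target_workflow_prob ** (1.0 / num_steps)


# Illustrative numbers only: a 95%-reliable step repeated over longer horizons.
for n in (1, 4, 10, 30):
    print(n, round(workflow_success_probability(0.95, n), 3))

# Per-step reliability needed for a 90% success rate on a 10-step workflow.
print(round(required_step_reliability(0.90, 10), 4))
```

Under these assumptions, end-to-end reliability falls geometrically with the number of steps, so even small per-step error rates matter at long horizons.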

Does this mean current AI agents are useless?

No, but it clarifies their limitations. They excel as co-pilots where a human manages the high-level coordination and state, approving each step. They struggle as full autopilots on complex, multi-step workflows. The paper helps researchers and engineers target the right problem to move from the former to the latter.