A quiet revolution in artificial intelligence is creating unexpected turbulence in the consumer electronics market. According to recent reports from industry observers including Rohan Paul, the explosive growth of local AI agent frameworks—particularly OpenClaw—is causing severe inventory issues for Apple's professional hardware lineup. Mac Studio configurations with 128GB or 512GB of unified memory are now facing delivery delays of six weeks or more as developers, researchers, and AI enthusiasts buy up every available high-memory Mac to run sophisticated AI models locally.

The Local AI Revolution Hits Hardware Limits

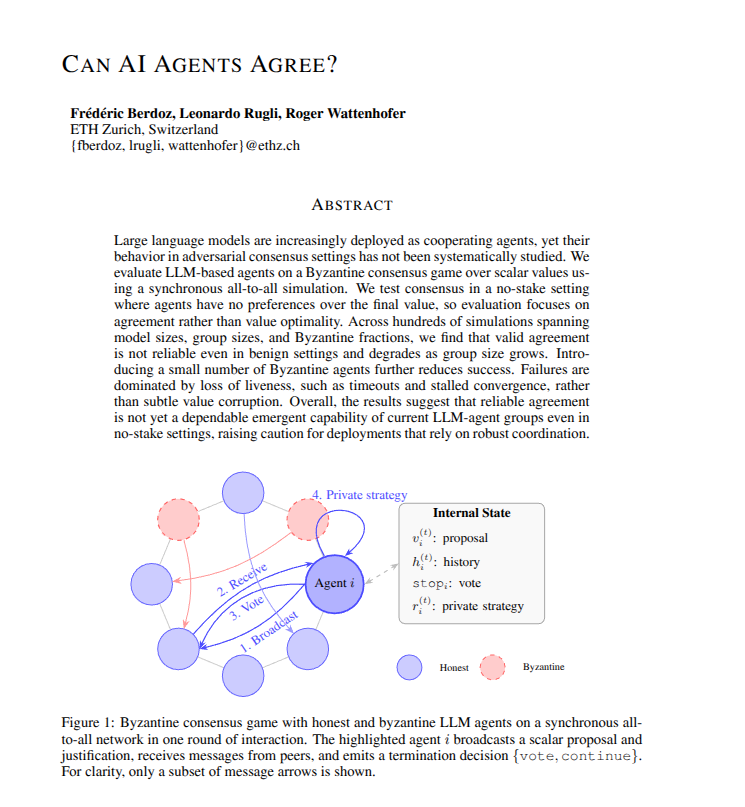

This isn't just another product shortage story. What we're witnessing is the tangible hardware consequence of one of the most significant shifts in AI deployment since the cloud computing era began. For years, running advanced AI models required access to expensive cloud infrastructure from providers like AWS, Google Cloud, or Microsoft Azure. The emergence of frameworks like OpenClaw, which let users run sophisticated AI agents entirely on local hardware, has fundamentally changed this equation.

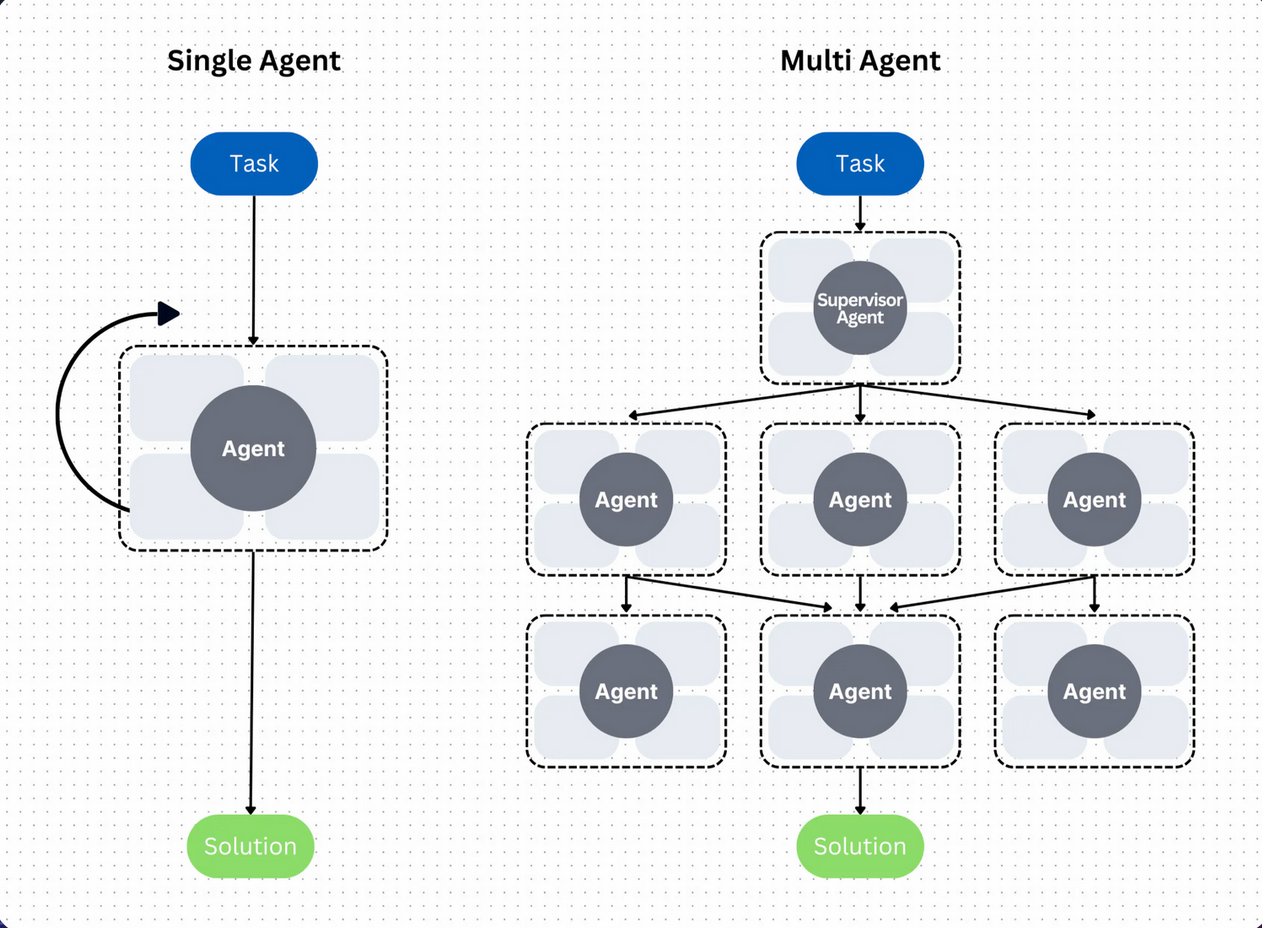

Local AI offers compelling advantages: complete data privacy, no ongoing subscription costs, and immediate responsiveness without network latency. However, these benefits come with substantial hardware requirements. Modern AI models, especially those capable of functioning as autonomous agents, demand significant memory bandwidth and capacity. Apple's unified memory architecture, which allows the CPU and GPU to access the same memory pool, happens to be particularly well-suited for these workloads—making Mac Studio and high-memory Mac mini configurations the unexpected heroes of the local AI movement.
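The capacity requirement can be made concrete with a rough estimate. A model's footprint is roughly its weights (parameter count times bytes per weight) plus a key/value cache that grows with context length. Agents that keep long conversations or tool outputs in context pay for that cache on every layer. The sketch below is a back-of-the-envelope calculator, not any framework's actual accounting. The model shape (`ModelShape`, 80 layers, 8 KV heads, head dimension 128) is an assumed, illustrative 70B-class configuration, and the estimate ignores activations and runtime overhead.

```python
from dataclasses import dataclass

GB = 1e9  # decimal gigabytes, the convention used in hardware spec sheets


@dataclass
class ModelShape:
    """Hypothetical transformer shape; the values used below are illustrative assumptions."""
    params_billion: float  # total parameter count, in billions
    n_layers: int
    n_kv_heads: int        # key/value heads (fewer than query heads under grouped-query attention)
    head_dim: int


def weight_bytes(model: ModelShape, bits_per_weight: float) -> float:
    """Bytes needed to hold the weights at a given quantization level."""
    return model.params_billion * 1e9 * bits_per_weight / 8


def kv_cache_bytes(model: ModelShape, context_tokens: int, bytes_per_value: int = 2) -> int:
    """Cached keys and values for every layer and every token in the context window."""
    # Factor of 2: one cached tensor for keys and one for values.
    return 2 * model.n_layers * model.n_kv_heads * model.head_dim * context_tokens * bytes_per_value


def total_gb(model: ModelShape, bits_per_weight: float, context_tokens: int) -> float:
    """Weights plus KV cache, in GB (activations and runtime buffers are ignored)."""
    return (weight_bytes(model, bits_per_weight) + kv_cache_bytes(model, context_tokens)) / GB


if __name__ == "__main__":
    # Assumed 70B-class shape, not taken from any specific model card.
    model = ModelShape(params_billion=70, n_layers=80, n_kv_heads=8, head_dim=128)
    for bits in (16, 8, 4):
        print(f"{bits:>2}-bit weights + 32k-token context: ~{total_gb(model, bits, 32_768):.1f} GB")
```

Under these assumptions, the model needs roughly 151 GB at 16-bit precision and about 46 GB at 4-bit. That range explains why 128GB and larger configurations attract buyers.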

Why Apple Hardware Became the AI Workhorse

Apple's transition to its own silicon has created ideal conditions for local AI. The M-series chips feature a unified memory architecture that removes the divide between CPU and GPU memory. That is a critical advantage for machine learning workloads, which would otherwise shuffle massive amounts of data between separate memory pools. PCs with discrete graphics cards split memory into VRAM and system RAM, and a model that doesn't fit in VRAM must be partially offloaded to slower system memory. Apple's approach instead lets the GPU draw on most of the shared memory pool, so a single model can use far more memory than a typical graphics card provides.
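The practical difference can be sketched as two fit checks. On a discrete card, a model runs entirely on the GPU only if it fits in VRAM. On a unified-memory machine, the GPU draws from the shared pool minus whatever the operating system keeps in reserve. The 0.75 `gpu_fraction` below is an assumed placeholder, not a documented limit, because the real cap depends on the OS and its settings. The 5 GB overhead allowance and the 24 GB card are likewise illustrative.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Weight footprint in GB: billions of parameters times bytes per parameter."""
    return params_billion * bits_per_weight / 8


def fits_discrete_gpu(required_gb: float, vram_gb: float) -> bool:
    """Discrete GPU: the whole model must fit in VRAM to run fully on the card.
    (Layers can be offloaded to system RAM, but they then run much more slowly.)"""
    return required_gb <= vram_gb


def fits_unified(required_gb: float, total_memory_gb: float, gpu_fraction: float = 0.75) -> bool:
    """Unified memory: the GPU shares the system pool.
    gpu_fraction is an ASSUMED share left after OS and app headroom."""
    return required_gb <= total_memory_gb * gpu_fraction


if __name__ == "__main__":
    overhead_gb = 5.0  # hypothetical allowance for KV cache, activations, and runtime buffers
    for bits in (4, 16):
        need = weights_gb(70, bits) + overhead_gb
        print(
            f"70B @ {bits}-bit needs ~{need:.0f} GB | "
            f"24 GB discrete GPU: {fits_discrete_gpu(need, 24)} | "
            f"128 GB unified: {fits_unified(need, 128)} | "
            f"512 GB unified: {fits_unified(need, 512)}"
        )
```

With these assumptions, a 4-bit 70B model (about 40 GB) is too large for the 24 GB card but fits comfortably on a 128GB machine. A 16-bit copy (about 145 GB) needs the 512GB tier.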

This architectural advantage has turned Mac Studio into something of a "sleeper hit" in the AI community. With configurations offering up to 512GB of unified memory, these machines can handle large language models and complex AI agents that would traditionally require expensive server-grade hardware. The result? A buying frenzy that Apple's supply chain wasn't prepared to handle.
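Capacity decides whether a model fits, but memory bandwidth largely decides how fast it generates text. A common back-of-the-envelope bound applies to single-stream decoding with a dense model: each new token requires streaming every weight from memory once. That caps throughput at roughly bandwidth divided by weight size. The sketch below applies that rule of thumb. The 800 GB/s figure is an illustrative input, not a measured or quoted spec for any particular machine, and real throughput lands below the bound.

```python
def max_decode_tokens_per_s(bandwidth_gb_s: float, params_billion: float, bits_per_weight: float) -> float:
    """Upper bound on single-stream decode speed for a dense model.

    Assumes every generated token reads all weights from memory once, so
    tokens/s <= bandwidth / weight size. Compute limits, KV-cache reads,
    and software overhead push real numbers lower.
    """
    weight_gb = params_billion * bits_per_weight / 8
    return bandwidth_gb_s / weight_gb


if __name__ == "__main__":
    bandwidth = 800.0  # GB/s, illustrative; substitute the spec of the actual machine
    for bits in (16, 8, 4):
        bound = max_decode_tokens_per_s(bandwidth, 70, bits)
        print(f"70B dense @ {bits}-bit: at most ~{bound:.1f} tokens/s")
```

The same arithmetic shows why quantization matters twice over: halving bits per weight halves the memory needed and roughly doubles the ceiling on generation speed. For mixture-of-experts models, only the parameters active per token count toward the bytes read, so the bound is correspondingly higher.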

The Broader Implications for AI Development

The hardware shortage reveals several important trends in the AI landscape:

1. Democratization of AI Development: The ability to run sophisticated AI locally lowers the barrier to entry for developers, researchers, and startups who previously couldn't afford cloud computing costs. This could accelerate innovation as more minds gain access to powerful AI tools.

2. Privacy-First AI Gains Momentum: As concerns about data privacy grow—particularly for enterprise applications—local AI offers a compelling alternative to sending sensitive data to third-party cloud services. The hardware shortage suggests this privacy-conscious approach is resonating with users.

3. Hardware-Aware AI Optimization: The current bottleneck may drive increased optimization of AI models for specific hardware architectures. We're likely to see more frameworks that are specifically tuned for Apple's unified memory or similar architectures from other manufacturers.

4. New Market Opportunities: The shortage creates opportunities for alternative hardware solutions, potentially benefiting competitors with similar unified memory architectures or spurring innovation in specialized AI hardware.

What This Means for Consumers and Developers

For those looking to enter the local AI space, the current shortage presents significant challenges. The six-week wait times for high-memory Mac Studios mean developers may need to:

- Consider alternative hardware configurations

- Explore cloud options for development while waiting for hardware

- Look at the used market for available systems

- Delay projects until hardware becomes available

Apple faces its own challenges: balancing production between consumer-focused devices and professional hardware, managing supply chain constraints for high-capacity memory components, and potentially accelerating development of even more AI-optimized hardware.

The Future of Local AI Hardware

This shortage is likely just the beginning. As AI models continue to grow in capability and complexity, hardware requirements will only increase. We can expect to see:

- More manufacturers adopting unified memory architectures

- Specialized AI accelerators becoming standard in consumer devices

- Increased competition in the professional workstation market

- New form factors optimized specifically for AI workloads

Apple's response will be particularly telling. Will they increase production of high-memory configurations? Develop dedicated AI hardware? Or partner with framework developers to create optimized solutions? The answers to these questions will shape the local AI landscape for years to come.

Conclusion: A Watershed Moment for Consumer AI

The Mac Studio shortage represents more than just a supply chain hiccup—it's evidence of a fundamental shift in how AI is developed and deployed. The rush to buy high-memory Macs signals that local AI has moved from experimental curiosity to practical necessity for a growing community of developers and users.

As frameworks like OpenClaw continue to evolve and more users recognize the benefits of running AI locally, hardware manufacturers will need to adapt. The current shortage may cause temporary frustration, but it ultimately points toward a future where powerful AI is accessible, private, and under user control—a future that's apparently arriving faster than anyone anticipated.

Source: Rohan Paul on X/Twitter (@rohanpaul_ai)