A series of recent academic and corporate publications has triggered a seismic reassessment of the quantum threat to global encryption. The core metric, the number of stable, error-corrected qubits required to crack widely used encryption like RSA, has plummeted by a factor of 100,000 over 14 years. Where experts in 2012 estimated a need for 1 billion qubits, and in 2021 revised that to 20 million, findings from February 2026 suggested 100,000 might be sufficient. Last week, new papers from Caltech, Google Quantum AI, and a startup named Oratomic pointed to a further drop to just 10,000 qubits.

This isn't a distant sci-fi scenario. The public-key algorithms that protect Bitcoin transactions, online banking, and secure messaging rely on the mathematical difficulty of factoring the product of two large primes (RSA) or of computing discrete logarithms on elliptic curves (ECDSA, ECDH). Quantum computers can solve both problems efficiently via Shor's algorithm. The dramatic reduction in the required quantum resources directly shortens the timeline for what cryptographers call "Q-Day": the day current public-key encryption becomes obsolete.
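To make the link between factoring and Shor's algorithm concrete, here is a minimal classical sketch of the number-theoretic reduction the algorithm relies on: once the multiplicative order of a number modulo N is known, a factor of N usually follows from a gcd. The quantum speedup lies entirely in the order-finding step, which is done by brute force below and is therefore only feasible for toy values. The function names are illustrative and not taken from any of the papers.

```python
from math import gcd


def multiplicative_order(a, n):
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    This is the step a quantum computer would perform efficiently.
    """
    if gcd(a, n) != 1:
        raise ValueError("a and n must be coprime")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(a, n):
    """Try to split n using the order of a; return (p, q) or None."""
    r = multiplicative_order(a, n)
    if r % 2 == 1:
        return None  # odd order: retry with a different a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root of 1: retry with a different a
    p = gcd(half - 1, n)
    q = gcd(half + 1, n)
    return (p, q) if 1 < p < n else None


print(factor_from_order(7, 15))  # (3, 5)
```

For a 2048-bit RSA modulus the brute-force loop is hopeless on classical hardware, which is exactly the gap a large enough quantum computer would close.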

What the Papers Report

The source points to a coordinated flurry of activity in late February and early March 2026. Independent teams from a leading academic institution (Caltech), a tech giant's quantum division (Google), and a private quantum startup (Oratomic) all published research within the same week. While the specific details of each paper are not provided in the source, the convergent conclusion is stark: the engineering threshold for building a cryptographically relevant quantum computer (CRQC) is far lower than previously believed.

The timeline illustrates a pattern of accelerating revision:

- 2012 Consensus: ~1 billion physical qubits needed, including error-correction overhead.

- 2021 Revision: Requirement drops to ~20 million qubits.

- February 2026: New estimates center on ~100,000 qubits.

- March 2026 (Last Week): Latest analyses suggest ~10,000 qubits may suffice.

This represents a 100,000x reduction in the estimated requirement over 14 years. Of that, a 2,000x drop (from 20 million to 10,000) has come since 2021, almost all of it in the last few weeks.
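The size of each revision can be checked with a few lines of arithmetic. The sketch below uses only the four figures quoted in the timeline; the variable and function names are illustrative.

```python
# Qubit estimates quoted in the timeline (label, estimated qubits).
estimates = [
    ("2012", 1_000_000_000),
    ("2021", 20_000_000),
    ("Feb 2026", 100_000),
    ("Mar 2026", 10_000),
]


def reduction_factors(points):
    """Return (from_label, to_label, factor) for each consecutive pair."""
    return [
        (a_label, b_label, a_value / b_value)
        for (a_label, a_value), (b_label, b_value) in zip(points, points[1:])
    ]


for start, end, factor in reduction_factors(estimates):
    print(f"{start} -> {end}: {factor:,.0f}x fewer qubits")

total = estimates[0][1] / estimates[-1][1]
print(f"Overall: {total:,.0f}x")
```

The individual steps come out to 50x, 200x, and 10x, which multiply to the overall 100,000x.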

The Technical Implications

The collapse in the required qubit count is not due to quantum processors suddenly becoming 100,000 times more powerful. Instead, it stems from breakthroughs in quantum error correction and algorithmic efficiency. The earlier billion-qubit figure assumed a certain overhead for correcting the inherent noise and errors in quantum systems. Recent advances likely demonstrate more efficient error-correcting codes (like improved surface codes or novel topological approaches) and optimizations to the resource requirements of Shor's algorithm itself.
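To illustrate why error-correction overhead dominates these estimates, here is a rough sketch using a common textbook heuristic for the surface code. It is not the method used in the new papers, whose details the source does not provide. The assumptions, all approximations: the logical error rate scales as `prefactor * (p / p_threshold) ** ((d + 1) / 2)` for code distance `d`, the threshold is about 1%, the prefactor is about 0.1, and one logical qubit costs roughly `2 * d**2` physical qubits.

```python
def logical_error_rate(p_phys, distance, p_threshold=1e-2, prefactor=0.1):
    """Heuristic logical error rate per round for a distance-d surface code.

    The threshold and prefactor are assumed, commonly quoted approximations.
    """
    return prefactor * (p_phys / p_threshold) ** ((distance + 1) / 2)


def min_distance(p_phys, target, p_threshold=1e-2, prefactor=0.1):
    """Smallest odd code distance whose heuristic error rate meets the target."""
    if p_phys >= p_threshold:
        raise ValueError("physical error rate must be below the threshold")
    d = 3
    while logical_error_rate(p_phys, d, p_threshold, prefactor) > target:
        d += 2
    return d


def physical_per_logical(distance):
    """Approximate physical qubits (data plus measurement) per logical qubit."""
    return 2 * distance * distance


for p_phys in (1e-3, 1e-4):
    d = min_distance(p_phys, target=5e-13)
    print(f"p={p_phys:g}: distance {d}, ~{physical_per_logical(d)} physical per logical")
```

Under these assumptions, a tenfold improvement in the physical error rate cuts the overhead per logical qubit by more than a factor of four. That kind of leverage, compounded with more efficient codes and leaner circuits, is how total estimates can fall by orders of magnitude without any jump in raw hardware scale.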

Each order-of-magnitude drop has a cascading effect on development roadmaps. A machine requiring 10,000 error-corrected logical qubits is a vastly different engineering challenge from one requiring 20 million. It moves the goalposts from "maybe this century" to "potentially within a decade or two," a timeframe that overlaps dangerously with the lifespan of today's encrypted data and long-term infrastructure.

Why This Matters Now

This is a policy and security emergency, not just a theoretical computer science update. Governments, corporations, and protocols that have been operating on a "we have decades" timeline must urgently recalibrate. The push for post-quantum cryptography (PQC)—encryption algorithms believed to be secure against both classical and quantum attacks—just received its most urgent mandate yet.

The National Institute of Standards and Technology (NIST) has been standardizing PQC algorithms for years, but widespread adoption has been slow, often seen as a future-proofing exercise. These new estimates reframe PQC migration as a critical, immediate defensive operation. Systems with long deployment cycles (e.g., hardware security modules, blockchain protocols, government communication systems) can no longer afford to wait.
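One common way to frame this urgency is Mosca's inequality: if the time data must stay secret plus the time needed to migrate exceeds the time until a CRQC exists, some data will be exposed. The sketch below applies that rule; the system names and all year figures are hypothetical placeholders, not estimates from the source.

```python
def at_risk(shelf_life_years, migration_years, years_to_crqc):
    """Mosca's inequality: at risk when shelf life + migration > time to CRQC."""
    return shelf_life_years + migration_years > years_to_crqc


def exposure_years(shelf_life_years, migration_years, years_to_crqc):
    """Years during which still-sensitive data would be readable by an attacker."""
    return max(0, shelf_life_years + migration_years - years_to_crqc)


# Hypothetical systems: (name, secrecy shelf life, migration time), in years.
systems = [
    ("hardware security modules", 10, 5),
    ("short-lived web sessions", 1, 2),
]

years_to_crqc = 12  # assumed planning horizon, purely illustrative

for name, shelf, migration in systems:
    risk = at_risk(shelf, migration, years_to_crqc)
    gap = exposure_years(shelf, migration, years_to_crqc)
    print(f"{name}: at risk={risk}, exposure={gap} years")
```

Shortening the assumed horizon flips more systems into the at-risk column, which is why lower qubit estimates translate directly into earlier migration deadlines.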

gentic.news Analysis

This development connects directly to several trends we've been tracking. First, it validates the aggressive investment and hiring spree in the quantum error correction space throughout 2025. Companies like Quantinuum and IBM have been heavily focused on demonstrating longer coherence times and lower logical error rates, which directly feed into these revised resource estimates. The mention of Oratomic is particularly notable. As a startup, its concurrent publication with Caltech and Google suggests it may possess proprietary IP in error correction or algorithmic compilation that challenges even the largest players—a sign of the ferment in the sector.

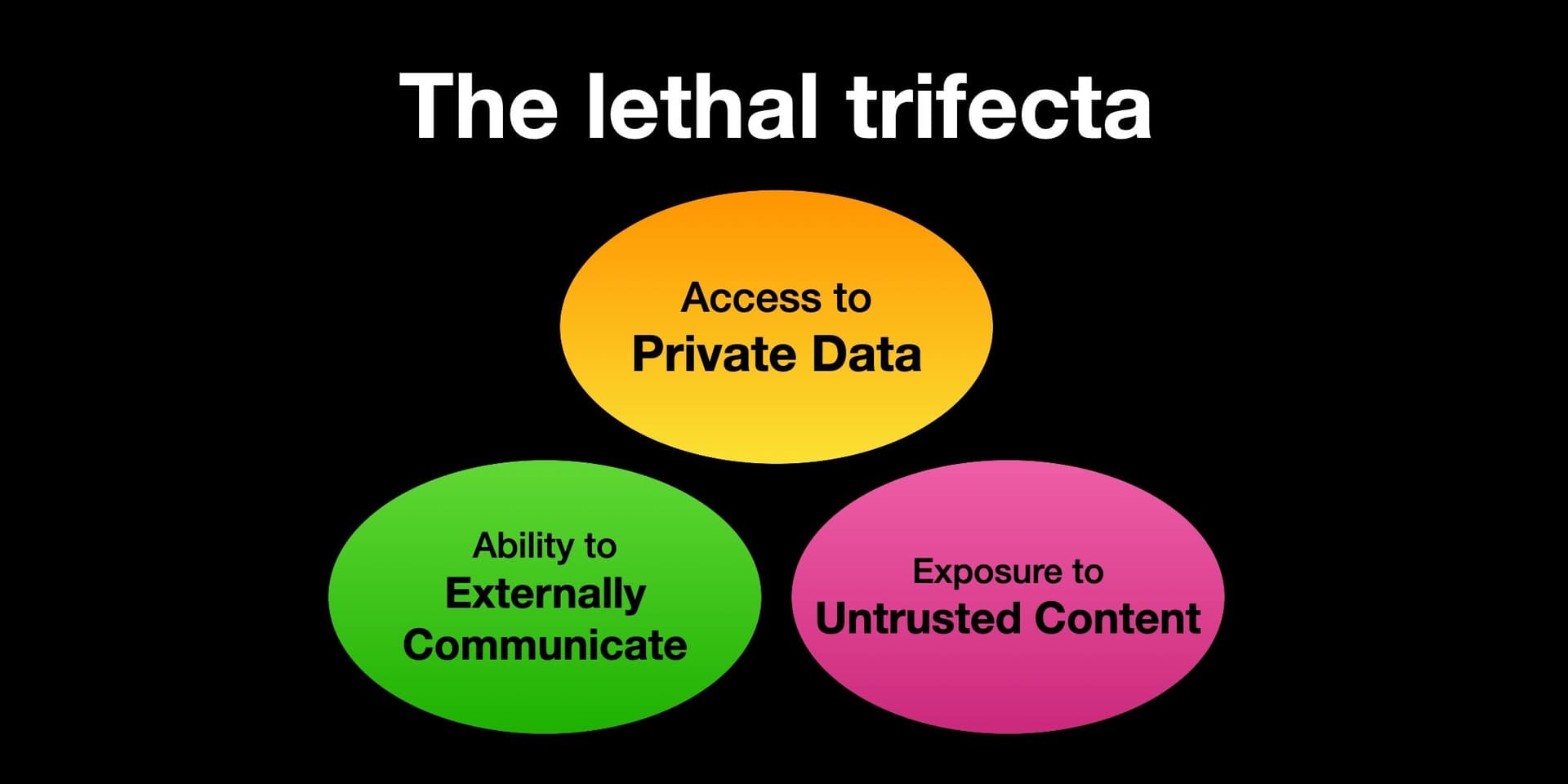

Second, this creates immediate pressure on the AI security ecosystem. As we covered in our analysis of ML-based cryptanalysis, machine learning models are already being used to probe classical encryption weaknesses. A near-term quantum horizon will force a merger of quantum and AI research for both attack and defense. We anticipate a surge in research papers and startup funding at this intersection in the coming months.

Finally, this news functionally sets a clock. If 10,000 error-corrected qubits is the new target, we can map that against the public roadmaps of leading quantum firms. While still a monumental challenge, it is no longer in the realm of pure speculation. Enterprise CISOs and blockchain core developers must now model their migration to PQC with a realistic, aggressive timeline informed by these numbers. The "cryptographic apocalypse" now has a much clearer—and closer—horizon.

Frequently Asked Questions

How soon could a 10,000-qubit quantum computer be built?

Estimates vary widely, but expectations have shifted from "50+ years" to "10-20 years" based on these new resource estimates. Progress is also non-linear: a single major innovation in qubit stability or error correction could compress that timeline further. Leading quantum companies like IBM and Google have public roadmaps extending to 10,000+ physical qubits by 2030, but creating 10,000 error-corrected logical qubits from those is a more complex milestone.

What should individuals do to protect their data now?

For most individuals, immediate action is limited. The priority is on institutions and software providers to adopt post-quantum cryptographic standards. However, you can practice good security hygiene: use password managers, enable multi-factor authentication (preferably using non-SMS methods like authenticator apps or security keys), and stay informed about which services announce PQC upgrades. For long-term data sensitivity (e.g., storing genetic data), investigate quantum-secure storage options that may emerge.

Does this mean Bitcoin and blockchain are immediately broken?

No. A cryptographically relevant quantum computer does not yet exist. However, this news drastically shortens the estimated timeframe for a potential attack. Bitcoin's SHA-256 hashing is relatively quantum-resistant, but the Elliptic Curve Digital Signature Algorithm (ECDSA) it uses to sign transactions is vulnerable to Shor's algorithm. The blockchain community is actively researching quantum-resistant signatures and will need to execute a coordinated network upgrade (such as a "hard fork") well before Q-Day. The urgency for that planning has increased sharply.
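The different exposure of hashing and signatures can be put in rough numbers. Against a hash function, the best known generic quantum attack is Grover's algorithm, which gives only a quadratic speedup. Against elliptic-curve signatures, Shor's algorithm runs in polynomial time, so no meaningful security level remains. The helper names below are illustrative and the figures are standard approximations.

```python
def grover_preimage_bits(hash_bits):
    """Approximate quantum preimage security: Grover needs ~2**(n/2) evaluations."""
    return hash_bits // 2


def classical_ecdlp_bits(curve_bits):
    """Approximate classical security of an n-bit curve (~2**(n/2) generic steps)."""
    return curve_bits // 2


# (primitive, classical security bits, quantum security bits or None if broken)
rows = [
    ("SHA-256 preimage", 256, grover_preimage_bits(256)),
    ("secp256k1 ECDSA", classical_ecdlp_bits(256), None),  # Shor: polynomial time
]

for name, classical, quantum in rows:
    quantum_text = f"~{quantum} bits" if quantum is not None else "broken by Shor"
    print(f"{name}: classical ~{classical} bits, quantum {quantum_text}")
```

A residual level of about 128 bits is why SHA-256 is described as relatively quantum-resistant, while the signature scheme is the part that needs replacing.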

What is post-quantum cryptography (PQC) and is it ready?

PQC refers to cryptographic algorithms designed to be secure against attacks from both classical and quantum computers. NIST has been running a standardization process since 2016, selected its first algorithms in 2022, and published the first three finalized standards (FIPS 203, 204, and 205) in August 2024. Several are considered mature and ready for implementation in protocols like TLS. The challenge is not a lack of algorithms, but the immense global effort required to test, integrate, and deploy them across billions of devices and systems without breaking functionality or security.