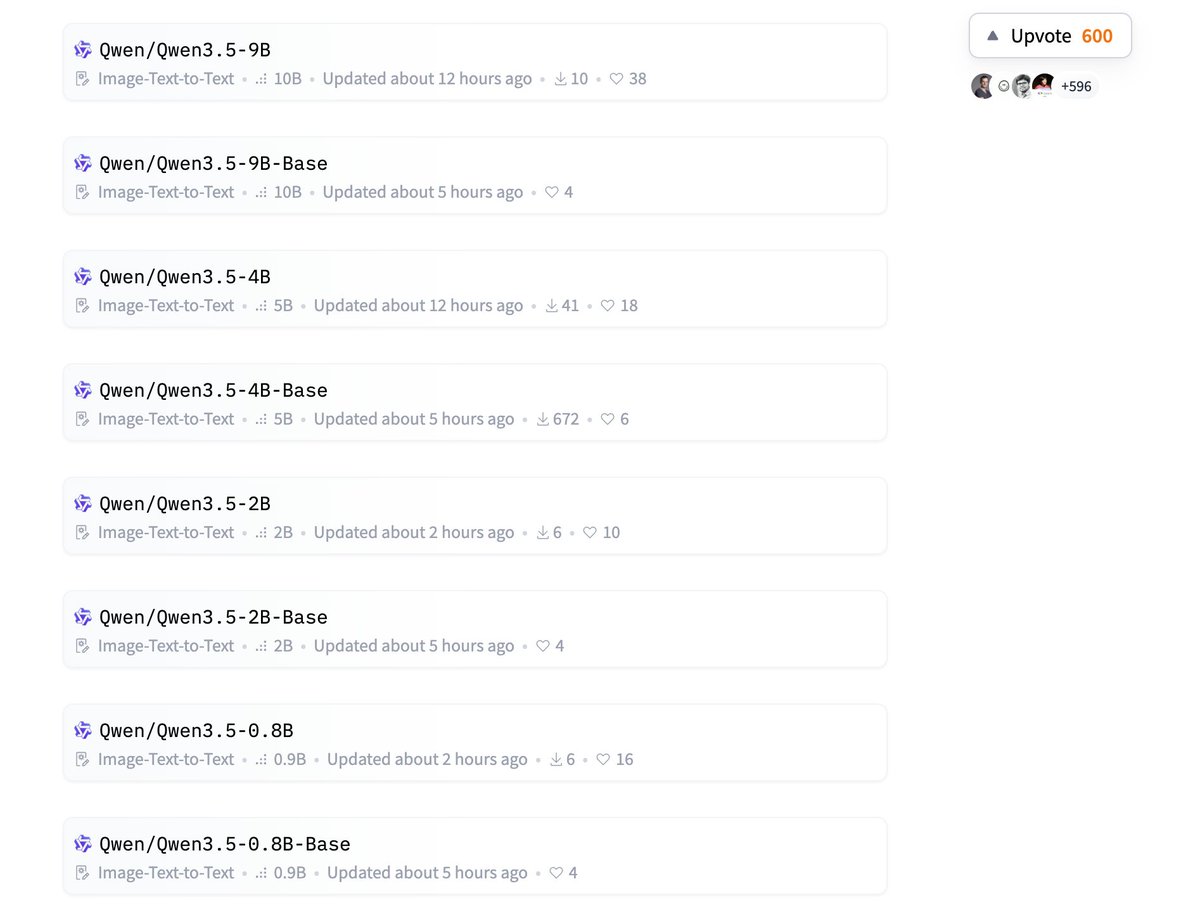

Alibaba's Qwen research team has released Qwen3.5-9B-Base, a foundation model aimed at multilingual and long-context workloads. Available on Hugging Face, the 9-billion-parameter model offers a 1 million token context window and supports 201 languages, which makes it one of the more versatile base models available to the open-source community.

Technical Architecture: Hybrid Innovation

Qwen3.5-9B-Base uses a hybrid architecture that combines DeltaNet and Mixture of Experts (MoE) components. The design departs from previous Qwen models and targets a central deployment problem for large language models: keeping inference affordable as context length grows.

The DeltaNet component is a linear-attention mechanism whose cost scales better with context length than standard softmax attention, while the MoE component activates only the relevant expert pathways during inference. Together they let the model maintain performance across its long context window while keeping computational cost manageable, which is a crucial consideration for practical deployment.
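The article does not describe the layer internals, so the following is only a minimal sketch of the delta-rule update that gives DeltaNet-style linear attention its name, not Qwen's implementation. It illustrates one point: the memory is a fixed-size matrix updated once per token, so its size does not grow with context length. The function names, toy dimensions, and the fixed `beta` value are illustrative assumptions; real implementations learn `beta` per token, normalize keys, and use chunked parallel kernels.

```python
def delta_rule_step(state, k, v, beta):
    """One delta-rule update: S <- S + beta * (v - S k) k^T.

    state is a d_v x d_k matrix stored as a list of rows;
    k has length d_k and v has length d_v.
    """
    # What the memory currently returns for key k: S k
    pred = [sum(s * kj for s, kj in zip(row, k)) for row in state]
    # Move the stored value for k toward v by a fraction beta
    return [
        [s + beta * (vi - pi) * kj for s, kj in zip(row, k)]
        for row, vi, pi in zip(state, v, pred)
    ]


def read(state, q):
    """Query the memory: o = S q."""
    return [sum(s * qj for s, qj in zip(row, q)) for row in state]


d_k, d_v = 2, 2
state = [[0.0] * d_k for _ in range(d_v)]

# Write two key/value pairs (orthonormal keys, beta = 1 means full overwrite)
state = delta_rule_step(state, k=[1.0, 0.0], v=[3.0, 4.0], beta=1.0)
state = delta_rule_step(state, k=[0.0, 1.0], v=[5.0, 6.0], beta=1.0)
# Rewrite the value stored under the first key; the state keeps its shape
state = delta_rule_step(state, k=[1.0, 0.0], v=[7.0, 8.0], beta=1.0)

print(read(state, [1.0, 0.0]))  # [7.0, 8.0]
print(read(state, [0.0, 1.0]))  # [5.0, 6.0]
```

However many tokens are written, `state` stays a `d_v x d_k` matrix, whereas the key/value cache of standard attention grows by one entry per token.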

Multilingual Capabilities: Breaking Language Barriers

With support for 201 languages, Qwen3.5-9B-Base broadens access to AI for a global audience. The model's training corpus includes extensive multilingual data, enabling it to understand and generate content across a diverse linguistic landscape. This matters most for regions and languages that mainstream AI models have traditionally underserved.

The model's multilingual proficiency is meant to extend beyond simple translation to cultural context, idiomatic expressions, and language-specific structures. This makes it useful for applications ranging from cross-cultural communication to localized content generation.

The 1M Context Window: What It Means

The 1 million token context window is a large increase in how much text a model can process in a single interaction. To put it in perspective, at the common rule of thumb of about 0.75 English words per token, 1 million tokens corresponds to approximately 750,000 words, or several full-length novels in a single prompt.
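The word estimate can be reproduced with simple arithmetic. The sketch below assumes a ratio of 0.75 words per token, which is the ratio implied by the figures in this article and a common rule of thumb for English, and a hypothetical novel length of 90,000 words. Actual ratios depend on the tokenizer and vary considerably across languages.

```python
WORDS_PER_TOKEN = 0.75    # assumption: rough average for English text
WORDS_PER_NOVEL = 90_000  # assumption: hypothetical full-length novel


def tokens_to_words(n_tokens: int) -> float:
    """Estimate the number of English words that fit in n_tokens."""
    return n_tokens * WORDS_PER_TOKEN


context_tokens = 1_000_000
words = tokens_to_words(context_tokens)
novels = words / WORDS_PER_NOVEL

print(f"{words:,.0f} words, about {novels:.1f} novels")
# 750,000 words, about 8.3 novels
```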

This extended context capability enables several applications:

- Comprehensive document analysis: Processing entire technical manuals, legal documents, or research papers in one pass

- Long-form content generation: Creating coherent, consistent narratives across thousands of words

- Complex reasoning: Maintaining context across extended chains of thought and multi-step problems

- Memory-intensive applications: Building AI assistants that can remember extensive conversation histories

Efficiency Considerations

Qwen3.5-9B-Base has also been designed with efficiency in mind. The hybrid DeltaNet-MoE architecture allows for selective activation of model components, reducing computational overhead during inference. This makes the model more accessible for researchers and organizations with limited computational resources while retaining competitive performance.
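Selective activation in an MoE layer can be illustrated with a small top-k routing sketch. This is a generic illustration of the technique, not the router used in Qwen3.5-9B-Base; the expert functions, the router scores, and `k=2` are all made-up values chosen for readability.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    order = sorted(range(len(router_logits)),
                   key=lambda i: router_logits[i], reverse=True)
    top = order[:k]
    # Renormalize the gate weights over the selected experts only
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x, experts, router_logits, k=2):
    """Weighted sum of the outputs of the selected experts; the rest never run."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_logits, k))


# Four toy "experts", each a scalar function (hypothetical stand-ins for MLPs)
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
logits = [0.1, 2.0, 2.0, -1.0]  # hypothetical router scores for one token

selected = route_top_k(logits, k=2)
output = moe_forward(3.0, experts, logits, k=2)
print(selected)  # [(1, 0.5), (2, 0.5)]
print(output)    # 7.5
```

Only two of the four experts are evaluated for this token, which is where the inference savings come from: the parameter count reflects all experts, while the compute per token reflects only the selected ones.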

The model's efficiency characteristics are particularly important given the growing concern about the environmental impact and cost of running large AI models. By optimizing for both performance and efficiency, the Qwen team addresses practical deployment considerations that often determine whether advanced AI capabilities reach real-world applications.

Open-Source Implications

By releasing Qwen3.5-9B-Base on Hugging Face, Alibaba continues its commitment to open-source AI development. This move democratizes access to cutting-edge AI capabilities, allowing researchers, developers, and organizations worldwide to experiment with and build upon this technology without prohibitive licensing costs.
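For readers who want to try the model, a minimal loading sketch with the Hugging Face `transformers` library might look like the following. The repository id `Qwen/Qwen3.5-9B-Base` is an assumption based on the model name in this article and should be checked against the actual model page; a recent `transformers` release may be required for a new architecture, and `device_map="auto"` requires the `accelerate` package. Because this is a base model, the sketch uses plain text completion rather than a chat template.

```python
MODEL_ID = "Qwen/Qwen3.5-9B-Base"  # assumed repository id; verify on Hugging Face


def load_model(model_id: str = MODEL_ID):
    """Download (or load from cache) the tokenizer and model weights."""
    # Imported lazily so the module can be read without the dependency installed
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id, device_map="auto")
    return tokenizer, model


def complete(prompt: str, max_new_tokens: int = 64) -> str:
    """Continue `prompt` with the base model and return the decoded text."""
    tokenizer, model = load_model()
    inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)


if __name__ == "__main__":
    print(complete("The three largest cities in Brazil are"))
```

Nothing is downloaded until `complete` or `load_model` is called. Filling the full 1M token window needs far more memory than loading the weights alone, so long-context experiments typically call for a dedicated inference server rather than this direct approach.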

The open-source availability also facilitates transparency and collaborative improvement. As the community tests, fine-tunes, and adapts the model for various applications, we can expect rapid innovation and optimization that benefits all users.

Competitive Landscape

Qwen3.5-9B-Base enters a competitive field of foundation models, but its combination of a 1M context window and extensive multilingual support gives it distinct advantages in specific applications. While larger models may excel in certain benchmarks, Qwen3.5-9B-Base's efficiency and specialized capabilities make it particularly suitable for practical deployments where resource constraints and language diversity are important considerations.

Future Directions and Applications

The release of Qwen3.5-9B-Base opens numerous possibilities for AI applications:

Research Applications:

- Cross-lingual information retrieval and analysis

- Long-context scientific literature review

- Multilingual dataset creation and augmentation

Commercial Applications:

- Global customer support systems

- Multilingual content creation platforms

- International legal and compliance analysis

- Cross-border business intelligence

Educational Applications:

- Language learning tools

- Cross-cultural educational content

- Research assistance for international students

Challenges and Considerations

While Qwen3.5-9B-Base represents a significant technical advance, several challenges remain:

- Evaluation: Developing comprehensive benchmarks for 1M context models

- Deployment: Optimizing inference for practical applications

- Bias mitigation: Ensuring fair representation across 201 languages

- Resource requirements: Balancing capability with accessibility

The Qwen team will need to address these challenges through continued research, community engagement, and iterative improvement.
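As one illustration of the evaluation challenge, long-context models are often probed with "needle in a haystack" tests: a single fact is buried at a chosen depth in filler text and the model is asked to retrieve it. The sketch below only builds such prompts and scores answers; the helper names, the filler text, and the needle are hypothetical, and the call to the model itself is left out.

```python
def build_needle_prompt(filler_sentences, needle, depth, question):
    """Insert `needle` at a relative depth (0.0 = start, 1.0 = end) of the filler."""
    position = round(depth * len(filler_sentences))
    sentences = filler_sentences[:position] + [needle] + filler_sentences[position:]
    return " ".join(sentences) + "\n\nQuestion: " + question + "\nAnswer:"


def is_correct(answer: str, expected: str) -> bool:
    """Lenient scoring: the expected string appears anywhere in the answer."""
    return expected.lower() in answer.lower()


# Toy haystack; a real test would scale this toward the full context window
filler = [f"Filler sentence number {i}." for i in range(10)]
needle = "The secret code is 4271."

prompt = build_needle_prompt(filler, needle, depth=0.5,
                             question="What is the secret code?")

# A full sweep would vary both the context length and the needle depth
depths = [0.0, 0.25, 0.5, 0.75, 1.0]
prompts = [build_needle_prompt(filler, needle, d, "What is the secret code?")
           for d in depths]
```

Retrieval tests like this measure only whether a fact can be found, not whether the model can reason over the whole context, which is part of why comprehensive benchmarks at this scale remain an open problem.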

Conclusion

Qwen3.5-9B-Base is a notable release that combines a very large context capacity with extensive multilingual support in an efficient architecture. As researchers and developers begin exploring its capabilities, we can expect new applications that build on these strengths.

The model's availability on Hugging Face ensures that these advanced capabilities will be accessible to a broad community, potentially accelerating progress in multilingual AI, long-context understanding, and efficient model deployment. As the AI field continues to evolve, models like Qwen3.5-9B-Base demonstrate how technical innovation can expand what's possible while addressing practical considerations of efficiency and accessibility.

Source: Hugging Papers on X