Alibaba's Qwen team has released Qwen3.5-Omni, a new multimodal model now available via open access on Hugging Face. The model introduces several novel capabilities focused on interpreting multimodal inputs rather than generating them.

What's New: Interpreting, Not Generating

According to the announcement, Qwen3.5-Omni's primary advancement is its enhanced ability to understand and describe complex multimodal inputs. The key features highlighted are:

- Script-Level Captioning: The model can generate detailed, narrative-style descriptions for videos or image sequences, moving beyond simple object labeling to create a coherent story or script.

- Audio-Visual Vibe Coding: This refers to the model's ability to interpret the combined "mood" or atmosphere from both audio and visual inputs simultaneously. For example, given a video clip, the model would describe not just what is seen and heard but also the overall emotional or aesthetic tone.

- Real-Time Web Search Built-In: The model integrates the ability to perform live web searches, allowing it to pull in current information to augment its responses.

The announcement includes a significant caveat: "Omni" in this context refers to omnimodal understanding, not creation. The model is designed to interpret images, audio, and video, but it does not generate these media types itself. This clarifies its position as an advanced analysis and reasoning tool rather than a competitor to image or audio generation models like Stable Diffusion or Suno.

Technical Details & Access

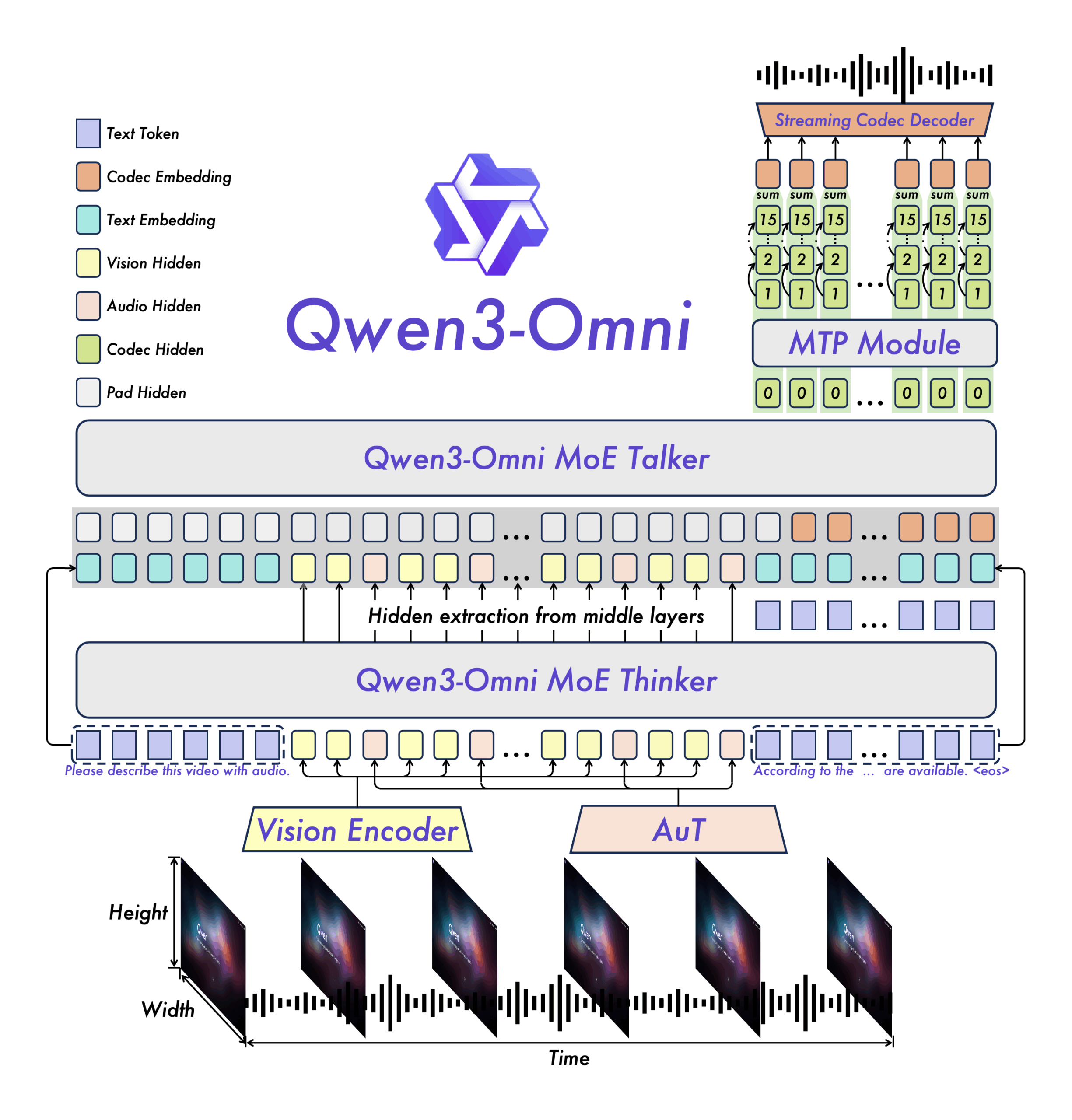

Qwen3.5-Omni is available for download and experimentation on the Hugging Face Hub. This follows the Qwen team's established pattern of releasing open-access models, continuing the lineage from Qwen2.5. The model is presumed to be an extension of the Qwen3.5 language model architecture, retrofitted with robust multimodal encoders for vision and audio.

How It Compares

This release places Qwen3.5-Omni in a competitive space with other large multimodal models (LMMs) that prioritize understanding, such as Google's Gemini 1.5 Pro and OpenAI's GPT-4V. Its differentiating features are the specific emphasis on narrative "script" generation and combined audio-visual sentiment analysis ("vibe coding").

| Model | Primary Focus | Generates Media? | Key Strengths |
|---|---|---|---|
| Qwen3.5-Omni | Multimodal Interpretation | No | Script-level captioning, audio-visual "vibe" analysis |
| GPT-4V / Gemini 1.5 Pro | General Multimodal Reasoning | No | Very long context, strong generalist performance |
| Midjourney / Stable Diffusion | Image Generation | Yes (Images) | High-fidelity visual creation |
| Sora / Luma Dream Machine | Video Generation | Yes (Video) | Photorealistic video synthesis |

What to Watch

The practical utility of "vibe coding" and script generation will need validation through user testing and benchmarks. The built-in web search is a pragmatic feature for real-time knowledge, but how deeply it is integrated and how accurately it cites sources are key details to examine. As an open-weight model, its performance relative to closed-source giants like GPT-4V will be a major point of community evaluation.

gentic.news Analysis

This release is a strategic move by Alibaba Cloud to solidify its position in the open-source multimodal arena. By focusing on interpretation, Qwen3.5-Omni carves a distinct niche that avoids direct competition with state-of-the-art generative models from OpenAI and Google, while still addressing a high-demand capability: making sense of the world's growing volume of audio-visual data.

The emphasis on "script-level" narrative understanding suggests targeting applications in automated content moderation, video indexing for archives, and advanced accessibility tools. The integrated web search points towards use cases in real-time analysis, such as interpreting live news feeds or social media streams.

This follows Alibaba's consistent strategy of using open-source releases to build developer mindshare and ecosystem traction, a playbook also employed effectively by Meta with its Llama series. The Qwen team's rapid iteration from Qwen2.5 to this Omni model demonstrates a focused effort to keep pace in the multimodal race, even if specializing in a specific lane. The caveat about non-generation is both an honest limitation and a clever positioning: it sets clear expectations and frames the model as a precision tool rather than a creative one.

Frequently Asked Questions

What can Qwen3.5-Omni actually do?

Qwen3.5-Omni is designed to understand and describe images, audio, and video. It can generate detailed narrative captions for videos (script-level captioning), interpret the combined mood from audio and visual inputs (vibe coding), and use real-time web search to inform its responses. It does not create new images, audio, or video.

How do I try Qwen3.5-Omni?

The model is available for download and use on the Hugging Face Hub. Developers can access it through the Hugging Face transformers library, following the standard workflow for loading and running Qwen models.
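
As a rough sketch of that workflow, the snippet below builds a chat-style request that pairs a video with a text instruction, following the message layout shown in model cards for earlier Qwen multimodal releases. The model ID, the file path, and the helper name `build_caption_request` are placeholders rather than values confirmed by the announcement, so check the model card for the real identifiers and loading classes.

```python
# Hypothetical placeholder: the announcement does not give the exact repo ID.
MODEL_ID = "Qwen/<model-id-from-the-model-card>"


def build_caption_request(video_path: str, instruction: str) -> list[dict]:
    """Build a single-turn chat request pairing one video with a text prompt."""
    return [
        {
            "role": "user",
            "content": [
                {"type": "video", "video": video_path},
                {"type": "text", "text": instruction},
            ],
        }
    ]


messages = build_caption_request(
    "clip.mp4",  # placeholder path to a local video file
    "Describe this clip as a scene-by-scene script.",
)

# With transformers installed, the next steps would typically look like this
# (an assumption; verify against the model card before relying on it):
#   processor = AutoProcessor.from_pretrained(MODEL_ID)
#   prompt = processor.apply_chat_template(
#       messages, tokenize=False, add_generation_prompt=True
#   )
print(messages[0]["content"][0]["type"])
```

Keeping the request construction in a small function makes it easy to swap the instruction, for example to ask for a mood description instead of a script, without touching the loading code.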

How is this different from GPT-4V or Gemini?

While all are multimodal understanding models, Qwen3.5-Omni emphasizes specific capabilities like generating cohesive storylines from video and analyzing combined audio-visual sentiment. It is also fully open-access, unlike GPT-4V and Gemini, which are available only through closed APIs. Its web search is built in, whereas other models may require separate tool-calling to achieve the same result.
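
To make the contrast concrete, here is a minimal sketch of the application-side tool-calling loop that is typically needed when search is not built into a model. Everything in it is hypothetical: `fake_model` and `fake_search` are stand-ins rather than real APIs, and nothing here describes how Qwen3.5-Omni implements its own search.

```python
def fake_search(query: str) -> str:
    """Stand-in for a real search API call (hypothetical)."""
    return f"[stub results for: {query}]"


def fake_model(messages: list[dict]) -> dict:
    """Stand-in for a model: requests one search, then answers from its result."""
    if not any(m["role"] == "tool" for m in messages):
        return {"tool_call": {"name": "search", "query": messages[-1]["content"]}}
    return {"content": "Answer based on " + messages[-1]["content"]}


def answer_with_tools(question: str, max_steps: int = 3) -> str:
    """Application-side loop: execute tool calls until the model returns text."""
    messages = [{"role": "user", "content": question}]
    for _ in range(max_steps):
        reply = fake_model(messages)
        if "tool_call" not in reply:
            return reply["content"]
        # The application, not the model, runs the search and feeds it back.
        result = fake_search(reply["tool_call"]["query"])
        messages.append({"role": "tool", "content": result})
    raise RuntimeError("model did not produce a final answer")


print(answer_with_tools("latest Qwen release"))
```

With built-in search, this orchestration would presumably happen inside the model's serving stack, so the caller sends one request and receives one answer.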

What does "vibe coding" mean?

"Vibe coding" is an informal term used in the announcement to describe the model's ability to analyze and articulate the overall atmosphere, emotion, or aesthetic tone derived from both the sound and visuals of a piece of media simultaneously. It goes beyond listing objects and sounds to synthesize a holistic interpretation.