Hermes Agent crossed 140,000 GitHub stars in under three months, according to Nvidia. The Nous Research framework is now the most-used agent on OpenRouter and is optimized for local inference on Nvidia RTX GPUs and DGX Spark.

Key facts

- Hermes Agent: 140K GitHub stars in under three months.

- Most-used agent on OpenRouter as of last week.

- Qwen 3.6 35B: runs on roughly 20GB of memory, beats 120B models.

- Qwen 3.6 27B: matches accuracy of 400B-parameter models.

- Nvidia RTX, RTX PRO, DGX Spark as recommended hardware.

Agentic AI frameworks are proliferating, but Hermes Agent stands apart on two vectors: reliability and self-improvement. Developed by Nous Research, Hermes is provider- and model-agnostic and designed for always-on local use. Its GitHub adoption (140K stars in under three months) reflects a community hungry for agents that work without constant debugging.

Self-Evolving Skills and Contained Sub-Agents

Hermes writes and refines its own skills. When it encounters a complex task or receives feedback, it saves what it learned as a skill, which lets it adapt over time. Sub-agents are short-lived, isolated workers, each focused on one sub-task with its own dedicated context and tools. This keeps task organization tidy and allows Hermes to run with smaller context windows, which is critical for local models with limited memory.
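To make the pattern concrete, here is a minimal Python sketch of isolated sub-agents reporting back to a parent that records skills. It is not Hermes's actual API: `SkillStore`, `SubAgent`, and `orchestrate` are hypothetical names invented for illustration, and the "tools" are plain functions standing in for real tool calls.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class SkillStore:
    """Hypothetical in-memory store of learned skills (name -> notes)."""
    skills: Dict[str, str] = field(default_factory=dict)

    def save(self, name: str, notes: str) -> None:
        # Refine an existing skill by appending to it, or create a new one.
        if name in self.skills:
            self.skills[name] += "\n" + notes
        else:
            self.skills[name] = notes


@dataclass
class SubAgent:
    """Hypothetical short-lived worker with its own context and tools."""
    task: str
    tools: Dict[str, Callable[[str], str]]
    context: List[str] = field(default_factory=list)

    def run(self) -> str:
        # All intermediate output stays in this worker's private context.
        self.context.append(f"task: {self.task}")
        for name, tool in self.tools.items():
            self.context.append(f"{name}: {tool(self.task)}")
        # Only a short summary is returned; the context is then discarded.
        return f"{self.task} -> {len(self.tools)} tool call(s)"


def orchestrate(subtasks: List[str],
                tools: Dict[str, Callable[[str], str]],
                store: SkillStore) -> List[str]:
    """Run one fresh sub-agent per sub-task and keep only the summaries."""
    parent_context: List[str] = []
    for task in subtasks:
        worker = SubAgent(task=task, tools=tools)
        parent_context.append(worker.run())
        store.save("subtask-notes", f"completed: {task}")
    return parent_context
```

Because the parent keeps only one summary line per sub-task, its own context grows slowly even when each worker produces a lot of intermediate output. That is the property the article ties to smaller context windows.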

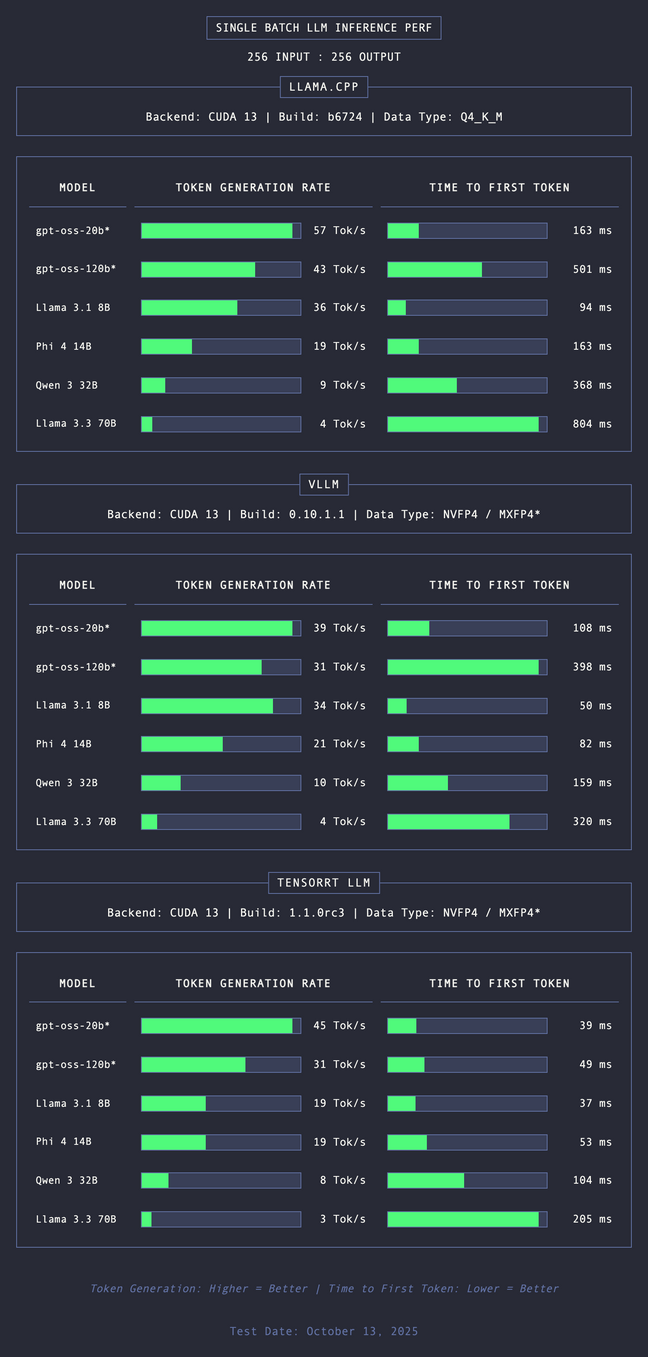

Nvidia positions Hermes as the ideal workload for RTX PCs, RTX PRO workstations, and DGX Spark. The hardware accelerates inference, enabling persistent agents rather than task-by-task execution. According to Nvidia, developer comparisons show Hermes outperforming other frameworks when running identical models.

Qwen 3.6: Parameter Efficiency Leap

Alibaba’s Qwen 3.6 series, released alongside the Hermes announcement, provides the underlying intelligence. The 35B model runs on roughly 20GB of memory while surpassing 120B-parameter predecessors that require 70GB+. The 27B dense model matches the accuracy of 400B-parameter models, per the source. This parameter efficiency makes local agentic AI feasible on consumer-grade hardware.
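As a rough sanity check on these memory figures, the sketch below estimates the memory needed for model weights alone from the parameter count. The 4-bit quantization level is an assumption, not something the source states, and the estimate ignores KV cache and runtime overhead. The function name `weight_memory_gb` is illustrative.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float = 4.0) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes.

    bits_per_weight defaults to 4, an assumed quantization level.
    """
    bytes_total = params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


if __name__ == "__main__":
    for name, size in [("35B", 35), ("120B", 120)]:
        gb = weight_memory_gb(size)
        print(f"{name}: ~{gb:.1f} GB of weights at 4-bit (assumed)")
```

Under that assumption the weights come to about 17.5 GB for a 35B model and 60 GB for a 120B model. That is broadly in line with the roughly 20GB and 70GB+ figures above once cache and runtime overhead are added.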

Unique Take: Framework Over Model

The narrative around AI agents often fixates on model size. Hermes demonstrates that orchestration-layer design matters more: the framework itself drives reliability, not the raw parameter count. This mirrors the trend seen in our earlier coverage of AMD's MI355X cluster for OSS maintainers, where hardware and software co-design, not model scale, is the bottleneck.

Historical Context

Hermes builds on the momentum of OpenClaw, another open-source agent framework that saw rapid adoption. Nvidia’s investment in agentic AI infrastructure, from Blackwell GPUs to DGX Spark, aligns with its broader strategy of owning the inference stack. The company recently open-sourced MRC, the RDMA protocol powering OpenAI's Blackwell clusters.

Key Takeaways

- Hermes Agent hit 140K GitHub stars and is the most-used agent on OpenRouter.

- Runs locally on Nvidia RTX with self-evolving skills and Qwen 3.6 models that beat prior 120B-parameter models.

What to watch

Watch for Qwen 3.6 model downloads on Hugging Face and whether Hermes maintains its OpenRouter usage lead as competitors like Claude Code and OpenClaw iterate. Also track Nvidia's next DGX Spark pricing disclosure.