What Happened

The source article is a technical deep-dive from an engineering team documenting their experience deploying a 35-billion parameter large language model (LLM), specifically the Qwen3.5–35B model, on an NVIDIA DGX Spark cluster equipped with the new GB10 Blackwell GPUs. The author frames it as a "complete, no-fluff guide," promising to detail the dead ends, hardware bugs, and unexpected hurdles encountered during the process, alongside the successful outcomes.

While the full article is behind a Medium paywall, the snippet and title clearly indicate its nature: a post-mortem or case study from the trenches of high-stakes AI infrastructure deployment. The focus is on the practical, often unglamorous, work of getting a state-of-the-art model running on cutting-edge, enterprise-grade hardware.

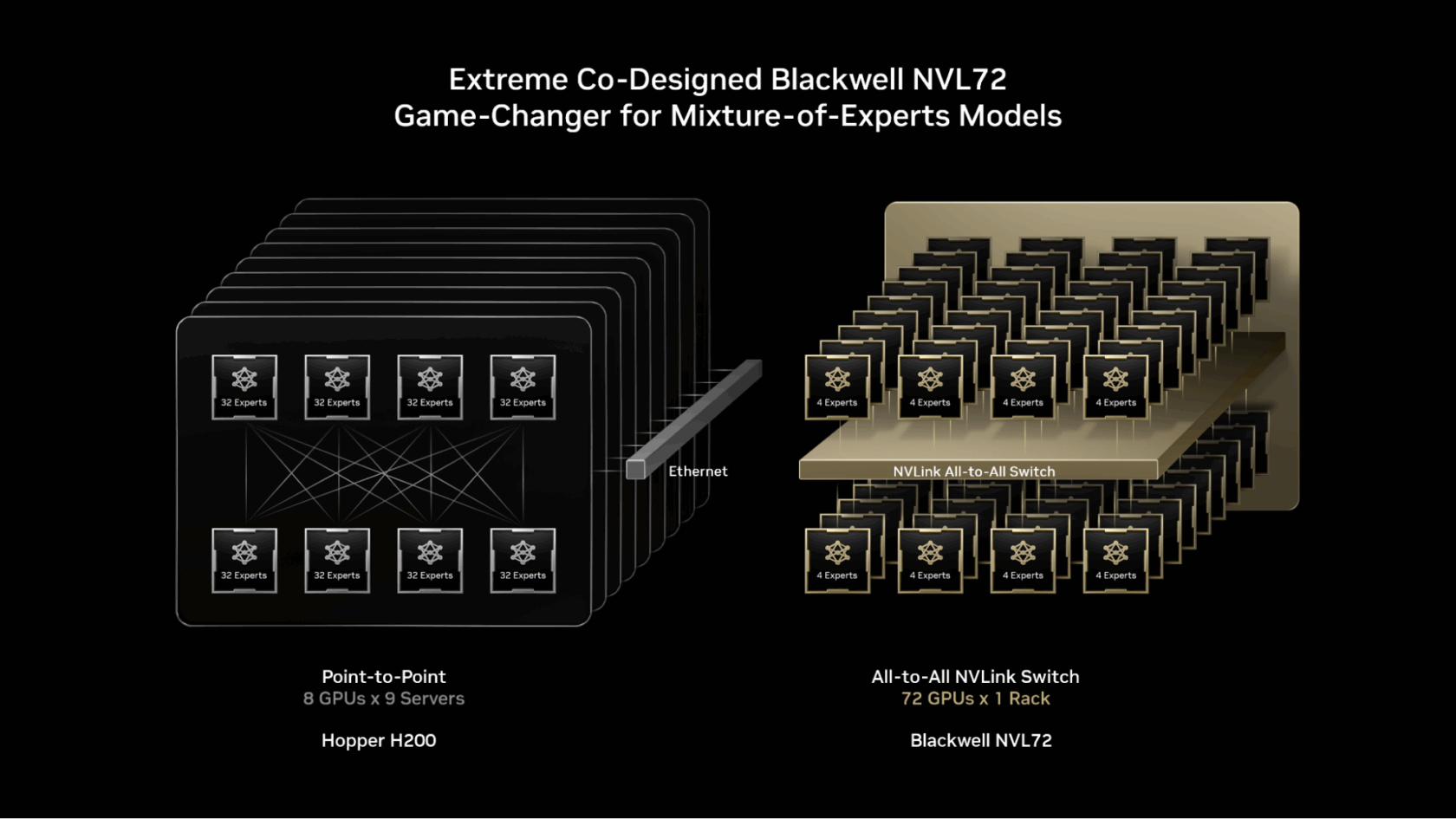

Technical Details: The Blackwell Frontier

The key hardware component here is NVIDIA's GB10 Blackwell. This is part of the first wave of products based on NVIDIA's next-generation Blackwell architecture, which is designed to succeed the current Hopper architecture (powering H100 GPUs). The DGX Spark is a pre-configured, rack-scale AI system designed for scalable training and inference.

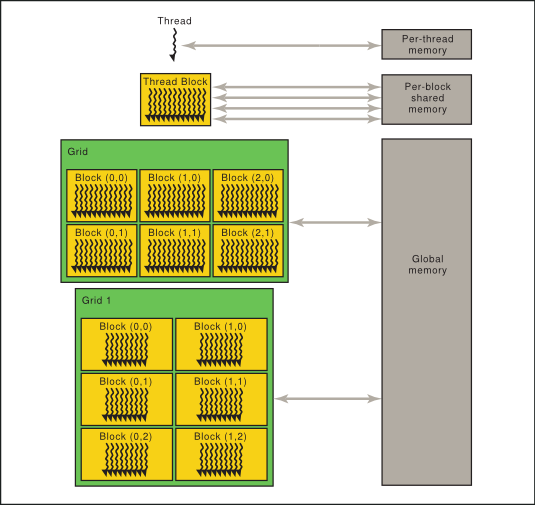

Deploying a 35B parameter model is a significant undertaking. Models of this size typically require model parallelism (splitting the model across multiple GPUs) and sophisticated inference optimization techniques like quantization, continuous batching, and optimized attention kernels to achieve usable latency and throughput. The "everything that went wrong" suggests the team likely grappled with:

- Driver and CUDA compatibility issues with early Blackwell hardware.

- Memory constraints and optimizing model sharding across GPUs.

- Integration challenges between the model serving software (e.g., vLLM, TensorRT-LLM, TGI) and the new GPU architecture.

- Performance tuning to achieve the desired tokens-per-second rate.

The fact that they were deploying Qwen3.5, a leading open-source model from Alibaba's Qwen team, is also notable. It indicates a move away from purely proprietary APIs (like GPT-4) towards controllable, on-premise open-weight models for enterprises that demand data sovereignty, customization, and predictable inference costs.

Retail & Luxury Implications

This technical case study is highly applicable to retail and luxury brands building serious, in-house AI capabilities. The relevance is not about a specific retail use case, but about mastering the infrastructure foundation that enables those use cases.

For a luxury conglomerate, running a 35B model on-premise or in a private cloud could be the engine for:

- Hyper-Personalized Content Generation: Creating unique, brand-perfect marketing copy, product descriptions, or client communications at scale, without sending sensitive brand or client data to a third-party API.

- Internal Knowledge Agents: Deploying a sophisticated, company-wide assistant trained on internal design documents, material science research, supply chain logs, and legacy clienteling notes.

- Advanced Product & Trend Analysis: Running complex, multi-step analysis on global trend reports, social media sentiment, and sales data to inform design and merchandising decisions.

The journey documented in the source article is a precursor to all of this. It answers the critical question: "How do we actually run these powerful models ourselves?" The lessons learned about hardware bugs, deployment pitfalls, and performance tuning are invaluable for any enterprise AI team about to make a major hardware investment, such as procuring Blackwell-based systems.

Adopting this technology stack represents a shift from being an AI consumer (using SaaS tools) to an AI operator. It grants full control over data, model behavior, and cost structure but demands significant MLOps and infrastructure expertise. The article serves as a stark reminder that the path to private, powerful AI is paved with technical complexity.