Key Takeaways

- Pyptx lets developers write and launch hand-tuned Nvidia PTX kernels directly from Python, supporting Hopper (sm_90a) and Blackwell (sm_100a).

- It provides explicit control over registers, shared memory, and advanced features like WGMMA and TMA, with dispatch through JAX, PyTorch eager, and torch.compile.

What's New

Pyptx is a new open-source Python DSL for writing Nvidia PTX kernels targeting Hopper (sm_90a) and Blackwell (sm_100a) GPUs. Developed by Patrick Toulme, it allows developers to write low-level GPU assembly in Python, with each function call mapping to exactly one PTX instruction. There is no optimizer, autotuner, or tensor IR between the Python function and the emitted PTX.

The project is available on GitHub and provides a real PTX parser, emitter, and transpiler. It can round-trip 218+ real PTX files byte-identically, supporting kernels from CUTLASS, Triton, fast.cu, DeepGEMM, ThunderKittens, and LLVM tests.

Technical Details

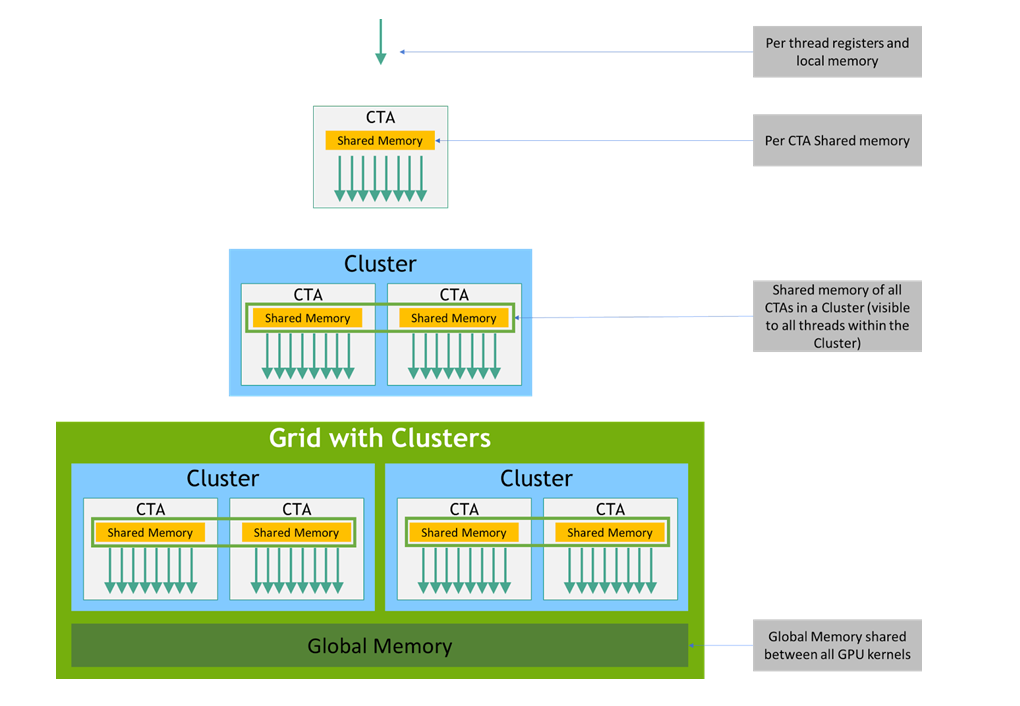

Pyptx supports explicit registers, predicates, barriers, and shared memory. For Hopper (sm_90a), it enables WGMMA (warp group matrix multiply-accumulate), TMA 2D/3D with multicast, mbarriers, and cluster launch. For Blackwell (sm_100a), it supports tcgen05.mma, tcgen05.ld, TMEM, SMEM descriptors, and warp specialization.

The kernel object can be called from three execution environments:

- PyTorch eager: Direct dispatch through

torch.library.custom_op - torch.compile: Compilation path using the same custom_op mechanism

- JAX jit: Lowering through typed FFI via

jax.ffi.ffi_call

Under the hood, the PTX is JIT-compiled through cuModuleLoadData and registered with a ~150-line C++ launch shim. Dispatch overhead ranges from approximately 4 µs for CUDA graph replay to 14 µs for cached C++ extension and 14–22 µs for the torch.compile path.

A minimal GEMM kernel example:

from pyptx import kernel, reg, smem, ptx, Tile

from pyptx.types import bf16, f32

@kernel(

in_specs=(Tile("M", "K", bf16), Tile("K", "N", bf16)),

out_specs=(Tile("M", "N", f32),),

grid=lambda M, N, K: (N // 64, M // 64),

block=(128, 1, 1),

arch="sm_90a",

)

def gemm(A, B, C):

sA = smem.wgmma_tile(bf16, (64, 16), major="K")

sB = smem.wgmma_tile(bf16, (16, 64), major="MN")

acc = reg.array(f32, 32)

# TMA loads + ptx.wgmma.mma_async(...)

How It Compares

Pyptx occupies a specific niche between high-level kernel frameworks like Triton and raw CUDA C++ with inline PTX. Unlike Triton, which abstracts away many GPU details through a compiler, Pyptx gives developers exact control over every instruction. Unlike writing CUDA C++ with inline PTX, Pyptx keeps the kernel in Python and integrates with existing Python ML frameworks.

The project also includes a PTX-to-Python transpiler. Running python -m pyptx.codegen kernel.ptx --sugar --name my_kernel > my_kernel.py converts existing PTX output from nvcc, Triton, or Pallas into Python code. The --sugar flag demangles names, raises spin-loops into ptx.loop(...), collapses mbarrier-wait blocks, and groups expression chains.

What to Watch

Pyptx is currently a single-developer project. The documentation at pyptx.dev includes examples for Hopper (RMS norm, grouped GEMM, high-performance 815 TFLOPS GEMM) and Blackwell (13 isolated tcgen05 primitives). The project requires pip install ninja for the PyTorch C++ extension to JIT-build on first launch.

While the project demonstrates impressive technical depth, its adoption will depend on whether the developer community finds value in writing direct PTX versus using higher-level abstractions. The 815 TFLOPS Hopper GEMM example shows what's possible, but real-world usage will require developers to invest in learning the PTX instruction set.

gentic.news Analysis

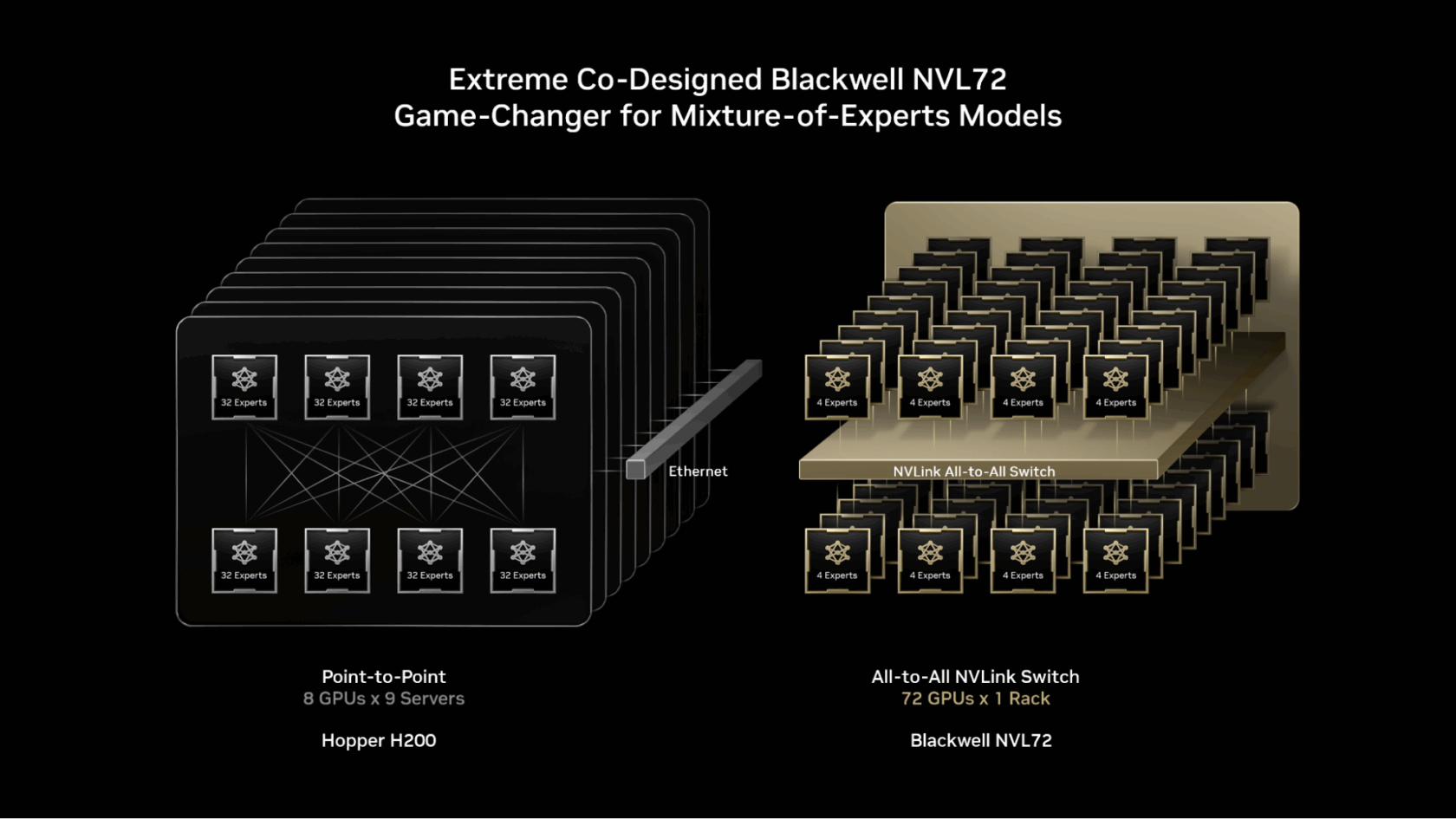

Pyptx arrives at a moment when the GPU programming landscape is fragmenting. Nvidia's Hopper and Blackwell architectures introduced increasingly specialized instructions — WGMMA, TMA, tcgen05 — that are difficult to target from high-level frameworks. Triton and CUDA provide coverage, but developers pushing for peak performance often find themselves reading PTX dumps to understand what the compiler actually emitted.

This project flips that script. Instead of writing Python and hoping the compiler generates the right PTX, Pyptx lets you write the PTX directly and stay in Python. It's a return to the ethos of hand-tuned GPU kernels, but with modern ergonomics. The transpiler feature is particularly interesting: you can take optimized PTX from CUTLASS or DeepGEMM, convert it to Python, and then modify or extend it without fighting the compiler.

The timing is notable given Nvidia's recent trajectory. On April 26, 2026, Nvidia invested $2 billion in Marvell Technology for NVLink Fusion interconnect development, signaling continued investment in GPU infrastructure. On April 25, Nvidia trained a billion-parameter LLM without backpropagation. These developments suggest that the hardware capabilities are outpacing the software stack's ability to exploit them. Tools like Pyptx that give developers direct hardware access may become increasingly valuable as architectures grow more complex.

However, Pyptx faces an adoption challenge. Most ML engineers work at the PyTorch or JAX level and have no desire to write PTX. The project's audience is a narrow slice of performance engineers working on kernels for inference serving, MoE routing, or custom attention mechanisms. For them, Pyptx could be a productivity boost over writing CUDA C++ with inline assembly.

Frequently Asked Questions

What is PTX?

PTX (Parallel Thread Execution) is Nvidia's low-level virtual machine and instruction set architecture for GPU programming. It sits between high-level languages like CUDA C++ and the actual machine code (SASS) that runs on the GPU. PTX provides a stable instruction set that Nvidia guarantees forward compatibility across GPU generations.

How does Pyptx differ from Triton?

Triton is a Python-based DSL that compiles to efficient GPU code through its own compiler and optimizer. Pyptx, by contrast, emits exact PTX instructions with no optimization layer. Triton abstracts away register allocation, memory tiling, and instruction scheduling. Pyptx gives the developer explicit control over all of these, at the cost of requiring more expertise.

Can I use Pyptx with existing PyTorch models?

Yes. Pyptx kernels can be called from PyTorch eager mode and through torch.compile. They integrate as custom operations. You would typically use Pyptx to replace specific performance-critical kernels within a larger PyTorch model, not to rewrite the entire model.

What GPU architectures does Pyptx support?

Pyptx targets Hopper (sm_90a) and Blackwell (sm_100a) architectures. It specifically supports the "a" variants of these architectures, which include the full set of advanced features like WGMMA, TMA, and tcgen05 instructions. Older architectures like Ampere are not supported.