What Happened

The source material presents a case study outlining a typical trajectory for machine learning (ML) systems within companies. It begins with a common origin story: an ML model is rarely born as a large-scale enterprise project. Instead, it often starts as a small experiment—a weekend project or a proof-of-concept built by a data scientist using familiar tools like a Jupyter notebook and a simple Flask or FastAPI wrapper for serving predictions.

This initial, simplistic setup works for demonstration and early testing. However, as the model proves its value and business demand grows, the system hits a wall. The article details how this "hacky" initial serving layer becomes a bottleneck, struggling with issues like:

- Performance & Latency: Inability to handle concurrent requests efficiently, leading to slow response times.

- Scalability: Difficulty scaling the service up or down to match traffic patterns.

- Model Management: Difficulty updating models without downtime or rolling them out gradually (A/B tests, canary deployments), and difficulty supporting multiple model types (e.g., PyTorch, TensorFlow, ONNX) within a unified serving architecture.

- Resource Utilization: Poor GPU/CPU usage, leading to wasted infrastructure costs.

The case study posits that at this critical juncture—where the ML system must graduate from a prototype to a reliable, scalable production service—companies often converge on a dedicated inference server solution. The article specifically highlights NVIDIA's Triton Inference Server as a leading choice to solve these problems.

Technical Details

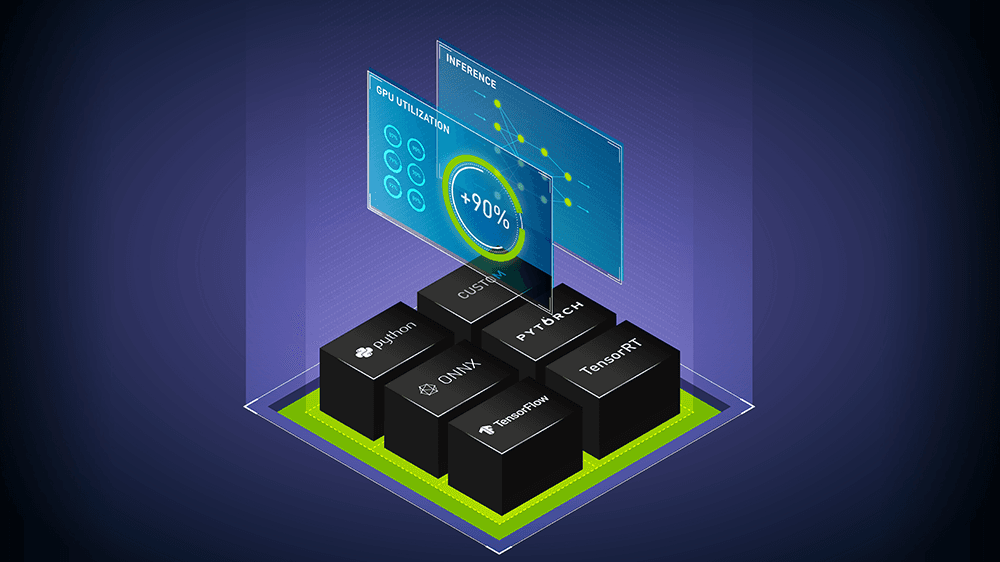

Triton Inference Server is an open-source software solution designed to streamline the deployment of AI models at scale. The case study implies its adoption is driven by several key technical capabilities that address the pain points of homegrown serving systems:

Multi-Framework & Multi-Model Support: Triton can serve models from most major frameworks and runtimes (TensorFlow, PyTorch, ONNX Runtime, TensorRT, custom Python code, etc.) simultaneously from a single server. This is crucial for retail companies that may have computer vision models for visual search (PyTorch), demand forecasting models (TensorFlow), and NLP models for customer service (ONNX) all needing to be served from the same platform.
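As a rough illustration of what "the same platform" means in practice, Triton loads models from a model repository: one directory per model, holding a `config.pbtxt` and numbered version subdirectories. The sketch below scaffolds such a layout for the three retail examples above. The model names are hypothetical, the generated configs are deliberately minimal (a real config usually also declares input and output tensors), and the backend names and default file names should be checked against the Triton documentation for the release in use.

```python
import tempfile
from pathlib import Path
from typing import Dict, List

# Hypothetical model names mapped to the Triton backend that would serve them.
DEMO_MODELS: Dict[str, str] = {
    "visual_search": "pytorch",        # expects <version>/model.pt
    "demand_forecast": "tensorflow",   # expects <version>/model.savedmodel/
    "support_nlp": "onnxruntime",      # expects <version>/model.onnx
}


def scaffold_model(repo: Path, name: str, backend: str,
                   version: int = 1, max_batch_size: int = 8) -> Path:
    """Create <repo>/<name>/config.pbtxt and an empty <repo>/<name>/<version>/ directory.

    The model file itself is not created; it would be copied into the
    version directory by a training or export job.
    """
    model_dir = repo / name
    (model_dir / str(version)).mkdir(parents=True, exist_ok=True)
    config = (
        f'name: "{name}"\n'
        f'backend: "{backend}"\n'
        f"max_batch_size: {max_batch_size}\n"
    )
    (model_dir / "config.pbtxt").write_text(config)
    return model_dir


def build_demo_repo(root: Path) -> List[str]:
    """Scaffold every demo model and return the resulting relative paths."""
    for name, backend in DEMO_MODELS.items():
        scaffold_model(root, name, backend)
    return sorted(p.relative_to(root).as_posix() for p in root.rglob("*"))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        for path in build_demo_repo(Path(tmp)):
            print(path)
```

The point of the layout is that adding a fourth model, whatever its framework, is a matter of adding another directory rather than another serving stack.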

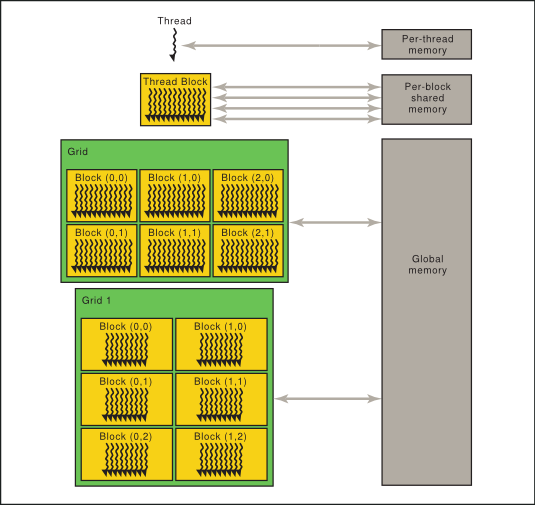

Dynamic Batching: This is a core feature for optimizing throughput. Instead of processing inference requests one by one, Triton can group multiple incoming requests into a single batch for more efficient computation on GPU or CPU. This significantly improves hardware utilization and throughput in high-volume scenarios. An individual request may wait briefly in the queue while a batch forms, and that delay is configurable, so the trade-off between throughput and per-request latency can be tuned.
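The grouping idea can be sketched in a few lines. This is a simplified offline model, not Triton's actual scheduler: it takes request arrival times and groups them using two limits, a maximum batch size and a maximum time the oldest request in a batch may wait. The parameter name `max_queue_delay_us` is chosen to echo Triton's `max_queue_delay_microseconds` setting; the function itself is hypothetical.

```python
from typing import List


def form_batches(arrivals_us: List[int], max_batch_size: int,
                 max_queue_delay_us: int) -> List[List[int]]:
    """Group request arrival times (microseconds) into batches.

    A batch is closed when it reaches max_batch_size, or when the next
    request arrives more than max_queue_delay_us after the oldest
    request already waiting in the batch.
    """
    batches: List[List[int]] = []
    current: List[int] = []
    for t in sorted(arrivals_us):
        # The oldest queued request has waited too long: dispatch what we have.
        if current and t - current[0] > max_queue_delay_us:
            batches.append(current)
            current = []
        current.append(t)
        # The batch is full: dispatch immediately.
        if len(current) == max_batch_size:
            batches.append(current)
            current = []
    if current:
        batches.append(current)
    return batches


if __name__ == "__main__":
    arrivals = [0, 100, 200, 5000, 5100]
    print(form_batches(arrivals, max_batch_size=4, max_queue_delay_us=500))
    # [[0, 100, 200], [5000, 5100]]
    print(form_batches(arrivals, max_batch_size=2, max_queue_delay_us=500))
    # [[0, 100], [200], [5000, 5100]]
```

Five requests become two or three model executions instead of five. A larger delay window yields fuller batches but makes the first request in each batch wait longer.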

Model Orchestration & Pipelines: Triton allows the creation of inference pipelines (ensembles), where the output of one model becomes the input of another. This enables complex, multi-stage AI workflows—like a fashion attribute detector feeding into a recommendation engine—to be deployed as a single, optimized service.
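The data flow of such a pipeline can be sketched in plain Python. Each step names which pipeline tensors feed its inputs and where its outputs go, loosely mirroring the input and output maps of a Triton ensemble definition. The two stage functions and the tiny catalog are hypothetical stand-ins for real models, and nothing here calls Triton itself.

```python
from typing import Callable, Dict, List, Tuple

Tensors = Dict[str, object]
# (stage function, {stage input name: pipeline tensor}, {stage output name: pipeline tensor})
Step = Tuple[Callable[[Tensors], Tensors], Dict[str, str], Dict[str, str]]


def run_ensemble(steps: List[Step], inputs: Tensors) -> Tensors:
    """Run the steps in order, passing tensors between them by name."""
    pool: Tensors = dict(inputs)
    for fn, input_map, output_map in steps:
        step_in = {stage_name: pool[tensor] for stage_name, tensor in input_map.items()}
        step_out = fn(step_in)
        for stage_name, tensor in output_map.items():
            pool[tensor] = step_out[stage_name]
    return pool


def detect_attributes(t: Tensors) -> Tensors:
    # Stand-in for a vision model: the "image" is already a list of tags.
    return {"attributes": sorted(set(t["image"]))}


def recommend(t: Tensors) -> Tensors:
    # Stand-in for a recommender: look attributes up in a toy catalog.
    catalog = {"silk": "scarf-01", "leather": "bag-07"}
    return {"items": [catalog[a] for a in t["attributes"] if a in catalog]}


PIPELINE: List[Step] = [
    (detect_attributes, {"image": "RAW_IMAGE"}, {"attributes": "ATTRS"}),
    (recommend, {"attributes": "ATTRS"}, {"items": "RECOMMENDATIONS"}),
]

if __name__ == "__main__":
    result = run_ensemble(PIPELINE, {"RAW_IMAGE": ["silk", "red", "silk"]})
    print(result["RECOMMENDATIONS"])  # ['scarf-01']
```

In a served ensemble the intermediate tensor (`ATTRS` here) stays on the server, so the client makes one request instead of two.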

Production-Grade Features: It provides essential operational features out of the box, including metrics export in Prometheus format (for monitoring with Prometheus/Grafana), liveness and readiness health checks, concurrent model execution, and shared-memory transfer of input and output tensors, eliminating the need to build this plumbing from scratch.
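To make the metrics point concrete, the sketch below parses a small sample of Prometheus-format text and derives the average batch size per model execution, a quick indicator of whether batching is paying off. The sample text is made up for illustration. The metric names `nv_inference_count` and `nv_inference_exec_count` follow Triton's documented naming, but treat them as an assumption to verify against the metrics a given deployment actually exposes.

```python
import re
from typing import Dict, Tuple

# Illustrative sample, not captured from a real server.
SAMPLE = """\
# HELP nv_inference_count Number of inferences performed
# TYPE nv_inference_count counter
nv_inference_count{model="visual_search",version="1"} 480
# HELP nv_inference_exec_count Number of model executions performed
# TYPE nv_inference_exec_count counter
nv_inference_exec_count{model="visual_search",version="1"} 60
"""

LINE = re.compile(r'^(\w+)\{([^}]*)\}\s+(\S+)$')
LABEL = re.compile(r'(\w+)="([^"]*)"')


def parse_metrics(text: str) -> Dict[Tuple[str, str], float]:
    """Return {(metric name, model label): value} for labelled sample lines."""
    out: Dict[Tuple[str, str], float] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        match = LINE.match(line)
        if not match:
            continue  # skip lines this simple parser does not understand
        name, labels, value = match.groups()
        model = dict(LABEL.findall(labels)).get("model", "")
        out[(name, model)] = float(value)
    return out


def average_batch_size(metrics: Dict[Tuple[str, str], float], model: str) -> float:
    """Inferences per model execution; 0.0 if the model has not executed."""
    execs = metrics.get(("nv_inference_exec_count", model), 0.0)
    if execs == 0:
        return 0.0
    return metrics.get(("nv_inference_count", model), 0.0) / execs


if __name__ == "__main__":
    print(average_batch_size(parse_metrics(SAMPLE), "visual_search"))  # 8.0
```

In production this arithmetic would normally live in a Prometheus query or Grafana panel rather than in application code; the sketch only shows what the exported numbers mean.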

In essence, the article frames Triton as the industrial-grade engine that replaces the "duct-tape and glue" initial serving setup, providing the scalability, performance, and manageability required for business-critical AI applications.

Retail & Luxury Implications

The journey described in the case study is exceptionally relevant for retail and luxury brands scaling their AI initiatives. The initial "weekend project" phase mirrors how many brands first experiment with AI: a data scientist might build a prototype for personalized product tagging, a markdown optimization model, or a chatbot for styling advice, served via a simple API.

The problems that emerge at scale are magnified in retail due to traffic spikes (e.g., during launches, sales, or holidays), the need for real-time latency in customer-facing applications (like visual search or virtual try-on), and the growing portfolio of diverse AI models.

Adopting a standardized inference serving platform like Triton offers concrete advantages for this sector:

- Unified AI Platform: A luxury house running separate, siloed serving systems for its recommendation engine (PyTorch), counterfeit detection vision model (TensorRT), and sustainability analytics (ONNX) faces operational chaos. Triton can consolidate these into a single, managed service layer, simplifying MLOps and infrastructure management.

- Handling Peak Loads: During a major e-commerce sale or a high-profile product drop, inference demand can skyrocket. Triton's dynamic batching and efficient resource utilization help the AI services that power the customer experience remain responsive and cost-effective under load.

- Accelerating Experimentation & Deployment: Triton's model versioning lets several versions of a model be served side by side and new versions be loaded without downtime, which makes A/B tests between model versions much easier to run. The traffic split itself is decided by the client or a routing layer in front of Triton. This allows merchandising and marketing teams to innovate faster, testing new ranking algorithms or visual search models with minimal engineering friction.

- Optimizing Costly Hardware: Luxury brands investing in high-end GPU clusters for design and AI need to maximize their return. Triton's performance optimizations help this expensive infrastructure be used efficiently, improving the ROI of AI projects.
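For the A/B testing point in the list above, a minimal sketch of how a caller might split traffic between two model versions: each user is hashed into a stable bucket so they always see the same version, and the request URL targets that version explicitly. The URL pattern follows the KServe v2 inference protocol that Triton implements. The function names, the model name, the host, and the 90/10 split are hypothetical.

```python
import hashlib
from typing import Dict


def pick_version(user_id: str, split: Dict[str, int]) -> str:
    """Deterministically assign a user to a model version.

    `split` maps version -> percentage of traffic; percentages must sum to 100.
    """
    if sum(split.values()) != 100:
        raise ValueError("percentages must sum to 100")
    # Stable bucket in [0, 100) derived from the user id.
    bucket = int(hashlib.sha256(user_id.encode("utf-8")).hexdigest(), 16) % 100
    cumulative = 0
    for version, pct in split.items():
        cumulative += pct
        if bucket < cumulative:
            return version
    raise AssertionError("unreachable when percentages sum to 100")


def infer_url(base: str, model: str, version: str) -> str:
    """Build the versioned inference URL for an HTTP request."""
    return f"{base}/v2/models/{model}/versions/{version}/infer"


if __name__ == "__main__":
    split = {"1": 90, "2": 10}  # 90% on the current model, 10% on the candidate
    version = pick_version("customer-42", split)
    print(infer_url("http://localhost:8000", "visual_search", version))
```

Hashing rather than random choice keeps a customer on the same variant across requests, which matters when the experiment measures session-level outcomes such as conversion.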

The gap between the case study's general narrative and retail production is small, because the challenges of scaling model serving are universal. For a retail AI leader, this article serves as validation that investing in a professional inference serving architecture is not premature optimization. It is a necessary step in moving AI from promising experiments to reliable, scalable business assets.