Alibaba's Qwen team has quietly released what might be one of the most strategically important AI models of 2024: Qwen2-VL-2B, a 2-billion-parameter multimodal model with a native context length of 262K tokens that can be extended to 1 million tokens. Available now on Hugging Face, this compact model challenges common assumptions about AI scaling while offering practical capabilities for resource-constrained environments.

Breaking the Scaling Paradigm

For years, the AI community has operated under what seemed like an iron law: bigger models deliver better performance. From GPT-3's 175 billion parameters to recent models pushing past the trillion-parameter mark, the scaling hypothesis has dominated AI development. Qwen2-VL-2B represents a deliberate counter-narrative—a model that prioritizes efficiency, accessibility, and specialized capabilities over brute-force scaling.

What makes this release particularly noteworthy is its multimodal nature. Unlike text-only models of similar size, Qwen2-VL-2B can process both images and text, understanding their relationships and generating coherent responses. This multimodal capability at just 2 billion parameters suggests significant architectural innovations in how the Qwen team has structured their model.

The Context Window Revolution

The model's context capabilities are arguably its most impressive feature. With a native context length of 262K tokens (approximately 200,000 English words in a single pass) and extensibility to 1 million tokens (roughly 750,000 words), Qwen2-VL-2B offers long-context capabilities previously reserved for much larger models.
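The word counts quoted above correspond to a rule of thumb of about 0.75 English words per token. The sketch below reproduces that arithmetic; the ratio is an assumption that varies with tokenizer and language, and reading "262K" as 2**18 = 262,144 tokens is also an assumption.

```python
# Rule-of-thumb conversion from a token budget to English words.
# WORDS_PER_TOKEN is an assumed average, not a property of any tokenizer.
WORDS_PER_TOKEN = 0.75


def tokens_to_words(num_tokens: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Estimate how many English words fit in a given token budget."""
    return round(num_tokens * words_per_token)


native_context = 262_144      # "262K" read as 2**18 tokens (assumption)
extended_context = 1_000_000  # the article's "1 million tokens"

print(tokens_to_words(native_context))    # 196608, i.e. roughly 200,000 words
print(tokens_to_words(extended_context))  # 750000
```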

This extended context window enables practical applications that were previously impossible for smaller models:

- Document analysis: Processing entire technical manuals, legal documents, or research papers

- Long-form content creation: Maintaining coherence across book chapters or extensive reports

- Complex reasoning: Following extended chains of thought across multiple documents

- Multimodal narratives: Understanding relationships between images and lengthy accompanying text

Technical Architecture and Innovations

While full technical details await the expected research paper, several architectural choices likely contribute to Qwen2-VL-2B's capabilities:

Efficient Attention Mechanisms: The model likely employs attention optimizations such as sliding window attention, sparse attention patterns, or hybrid approaches that maintain performance while reducing computational cost on long sequences.
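To illustrate why such optimizations matter, here is a minimal sketch of a causal sliding-window attention mask. It is a generic illustration of the technique, not a description of Qwen2-VL-2B's actual attention; the function names and the tiny sizes are hypothetical.

```python
def sliding_window_mask(seq_len: int, window: int) -> list[list[bool]]:
    """mask[i][j] is True when query position i may attend to key position j.

    Each position sees itself and at most (window - 1) earlier positions,
    and never a later position (causal).
    """
    return [
        [0 <= i - j < window for j in range(seq_len)]
        for i in range(seq_len)
    ]


def attended_pairs(mask: list[list[bool]]) -> int:
    """Count the query/key pairs that must actually be computed."""
    return sum(sum(row) for row in mask)


# A window as long as the sequence is ordinary causal attention.
full = sliding_window_mask(seq_len=8, window=8)
local = sliding_window_mask(seq_len=8, window=3)

print(attended_pairs(full))   # 36 pairs: grows quadratically with seq_len
print(attended_pairs(local))  # 21 pairs: grows linearly with seq_len
```

With a fixed window, the work per token stays constant as the sequence grows, which is the property that makes very long contexts tractable.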

Multimodal Integration Strategy: The efficient fusion of visual and textual information suggests innovations in how visual features are processed and aligned with language representations, possibly through lightweight adapters or shared embedding spaces.

Parameter Efficiency: At just 2 billion parameters, the model likely employs techniques like knowledge distillation from larger models, careful architectural pruning, or innovative parameter sharing strategies that maximize capability per parameter.

Context Extension Methodology: The extensibility to 1M tokens suggests either sophisticated positional encoding schemes (like RoPE extensions) or retrieval-augmented approaches that maintain performance beyond the native context window.
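One well-known RoPE extension is position interpolation, which rescales positions so that a longer sequence maps back into the range seen during training. The sketch below shows that idea in pure Python; it is an assumption that anything like this applies to Qwen2-VL-2B, and the base, vector size, and helper names are hypothetical.

```python
import math


def rope_angles(position: float, head_dim: int, base: float = 10000.0) -> list[float]:
    """Rotation angle for each pair of dimensions at a given position."""
    return [position / base ** (2 * i / head_dim) for i in range(head_dim // 2)]


def apply_rope(vec: list[float], position: float, scale: float = 1.0,
               base: float = 10000.0) -> list[float]:
    """Rotate consecutive pairs of vec; scale > 1 compresses positions."""
    angles = rope_angles(position / scale, len(vec), base)
    rotated = []
    for i, theta in enumerate(angles):
        x, y = vec[2 * i], vec[2 * i + 1]
        rotated.append(x * math.cos(theta) - y * math.sin(theta))
        rotated.append(x * math.sin(theta) + y * math.cos(theta))
    return rotated


NATIVE = 262_144     # native window, "262K" read as 2**18 (assumption)
TARGET = 1_000_000   # extended window from the article
scale = TARGET / NATIVE

query = [1.0, 0.0, 0.5, -0.5]  # toy 4-dimensional vector
last = apply_rope(query, TARGET - 1, scale=scale)

# After scaling, even the final position falls inside the native range.
print((TARGET - 1) / scale < NATIVE)
```

Interpolation keeps every rotation angle within the trained range at the cost of packing neighbouring positions closer together, which is one reason quality can degrade near the extended limit.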

Practical Implications and Applications

Qwen2-VL-2B's compact size and substantial capabilities open doors for applications previously limited by computational constraints:

Edge Computing Deployment: The model's modest size makes it suitable for deployment on edge devices, local servers, or in environments with limited computational resources, enabling AI capabilities without cloud dependency.
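A back-of-the-envelope estimate shows why a 2-billion-parameter model is plausible on modest hardware. The sketch below counts weight storage only, at a few common numeric precisions; it assumes exactly 2 billion parameters and ignores activations, the KV cache, and runtime overhead.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gib(num_params: int, precision: str) -> float:
    """Memory needed for the weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[precision] / 2**30


NUM_PARAMS = 2_000_000_000  # "2 billion" taken literally (assumption)

for precision in BYTES_PER_PARAM:
    print(f"{precision}: {weight_memory_gib(NUM_PARAMS, precision):.2f} GiB")
```

At 16-bit precision the weights come to under 4 GiB, and 4-bit quantization brings them below 1 GiB, before any memory needed for long inputs.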

Cost-Effective Scaling: Organizations can deploy multiple instances of Qwen2-VL-2B for specialized tasks at a fraction of the cost of larger models, enabling more granular and cost-effective AI integration.

Research and Experimentation: The model provides an accessible platform for researchers and developers to experiment with multimodal AI, long-context applications, and specialized fine-tuning without requiring massive computational resources.

Specialized Enterprise Applications: Industries with specific multimodal needs—such as medical imaging with reports, technical documentation with diagrams, or e-commerce with product images and descriptions—can fine-tune the model for their particular use cases.

The Competitive Landscape

Qwen2-VL-2B enters a competitive field where efficiency has become increasingly important:

Against Larger Models: While it won't outperform GPT-4 or Claude 3 on general benchmarks, its efficiency and specialized capabilities make it competitive for specific applications where cost, latency, or privacy are concerns.

In the Compact Model Space: It competes with other efficient models like Microsoft's Phi series, Google's Gemma models, and Meta's Llama variants, but distinguishes itself through its native multimodal capabilities and exceptional context length.

Open Source Advantage: As an open model available on Hugging Face, Qwen2-VL-2B benefits from community development, fine-tuning, and integration that proprietary models cannot match.

Challenges and Limitations

Despite its impressive specifications, Qwen2-VL-2B faces inherent limitations:

Capability Trade-offs: The 2-billion parameter count necessarily limits the breadth of knowledge and reasoning capabilities compared to larger models, particularly for complex, multi-step reasoning tasks.

Quality at Scale: While the extended context window is technically impressive, maintaining high-quality attention and coherence across 1 million tokens presents significant engineering challenges that may affect performance at the extremes.
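Part of that engineering challenge is memory: with standard attention, the key/value cache grows linearly with the number of tokens. The sketch below estimates its size for a hypothetical configuration (28 layers, 2 key/value heads, head dimension 128, 16-bit values); these figures are illustrative assumptions, not published specifications of Qwen2-VL-2B.

```python
def kv_cache_gib(seq_len: int, num_layers: int, num_kv_heads: int,
                 head_dim: int, bytes_per_value: int = 2) -> float:
    """Size of the key/value cache for one sequence, in GiB.

    The leading factor 2 accounts for storing both keys and values.
    Assumes every token is cached in every layer, with no compression.
    """
    bytes_per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return seq_len * bytes_per_token / 2**30


# Hypothetical architecture, chosen only to make the arithmetic concrete.
HYPOTHETICAL_CONFIG = dict(num_layers=28, num_kv_heads=2, head_dim=128)

print(kv_cache_gib(262_144, **HYPOTHETICAL_CONFIG))              # 7.0
print(round(kv_cache_gib(1_000_000, **HYPOTHETICAL_CONFIG), 1))  # 26.7
```

Under these assumptions, the cache at 1 million tokens is several times larger than the 16-bit weights of a 2-billion-parameter model, so long-context serving can be bound by cache memory rather than model size.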

Multimodal Depth: The model's visual understanding, while impressive for its size, likely lacks the nuanced comprehension of specialized vision-language models or larger multimodal systems.

Future Directions and Industry Impact

The release of Qwen2-VL-2B signals several important trends in AI development:

Specialization Over Generalization: We may see increasing development of smaller, specialized models optimized for particular tasks or domains rather than continued scaling of general-purpose models.

Efficiency as Competitive Advantage: As AI deployment becomes more widespread, efficiency metrics (capability per parameter, inference speed, memory footprint) may become as important as benchmark performance.

Democratization of Advanced AI: Models like Qwen2-VL-2B make advanced capabilities (multimodal understanding, long-context processing) accessible to organizations and researchers without massive computational resources.

Hybrid Architectures: Future systems may combine multiple specialized smaller models (like Qwen2-VL-2B for certain tasks) with larger models for more complex reasoning, creating more efficient overall systems.
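The hybrid idea can be sketched as a simple router that sends each request to a small or a large model. Everything here is hypothetical: the `Request` fields, the tier names, and the routing rule are illustrative assumptions rather than a description of any existing system.

```python
from dataclasses import dataclass


@dataclass
class Request:
    prompt_tokens: int
    needs_multistep_reasoning: bool


def route(request: Request, small_context_limit: int = 262_144) -> str:
    """Pick a model tier for a request (hypothetical rule).

    Hard reasoning tasks, and prompts beyond the small model's native
    window, go to the large tier; everything else stays on the small one.
    """
    if request.needs_multistep_reasoning:
        return "large"
    if request.prompt_tokens > small_context_limit:
        return "large"
    return "small"


print(route(Request(prompt_tokens=5_000, needs_multistep_reasoning=False)))  # small
print(route(Request(prompt_tokens=5_000, needs_multistep_reasoning=True)))   # large
```

In practice the routing signal might come from a classifier or from the small model's own confidence; a boolean flag is used here only to keep the sketch short.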

Conclusion

Qwen2-VL-2B represents more than just another model release: it challenges fundamental assumptions about how we build and deploy AI systems. By delivering multimodal capabilities and a context window extensible to 1 million tokens in a 2-billion-parameter package, Alibaba's Qwen team has shown that thoughtful architecture and optimization can sometimes substitute for brute-force scaling.

As the model becomes available to the broader community through Hugging Face, we can expect to see innovative applications, fine-tuned variants, and new insights into what's possible with efficient AI design. In an industry often focused on ever-larger models, Qwen2-VL-2B serves as a valuable reminder that sometimes, less really can be more—especially when that "less" comes with sophisticated capabilities previously reserved for models orders of magnitude larger.

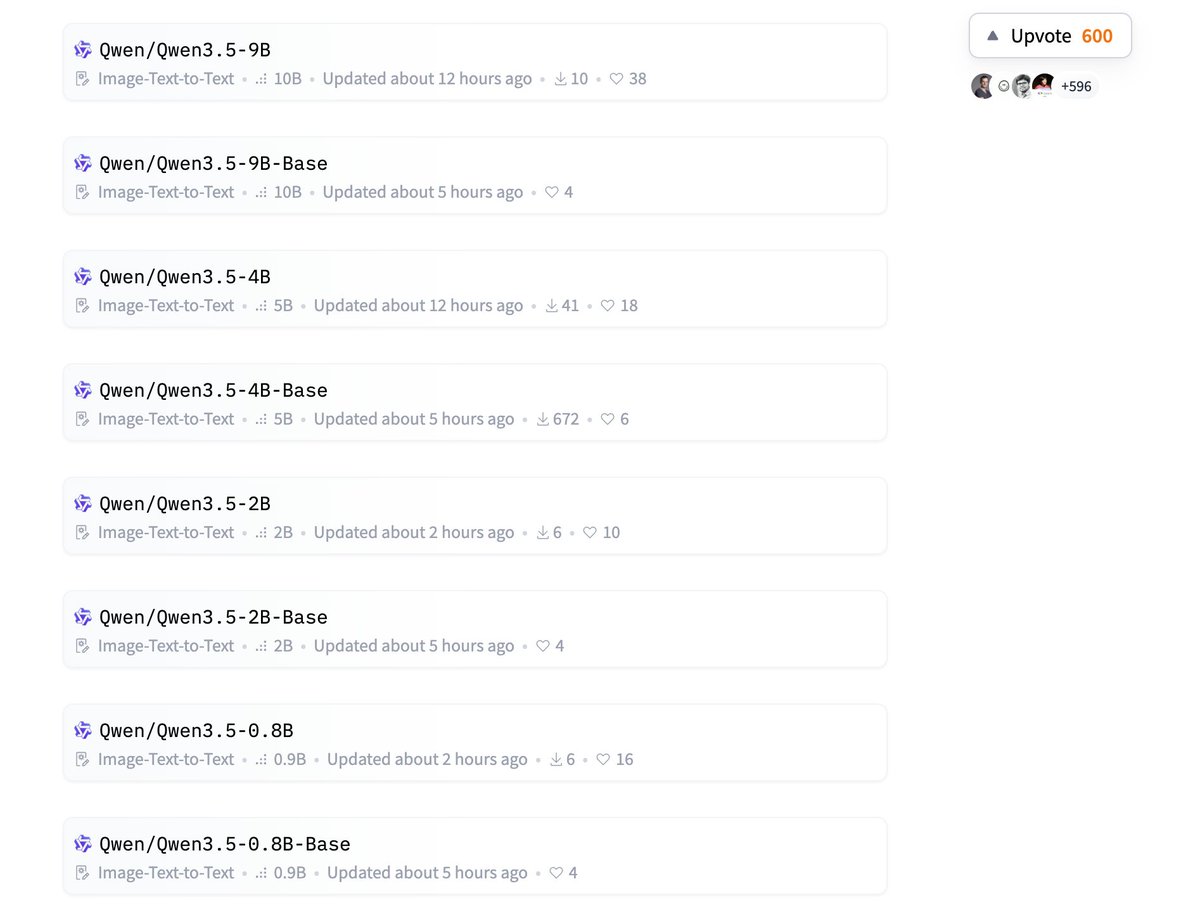

Source: Hugging Papers announcement on X/Twitter (https://x.com/HuggingPapers/status/2030066066152398943)