What Happened: Reframing the RAG Failure Mode

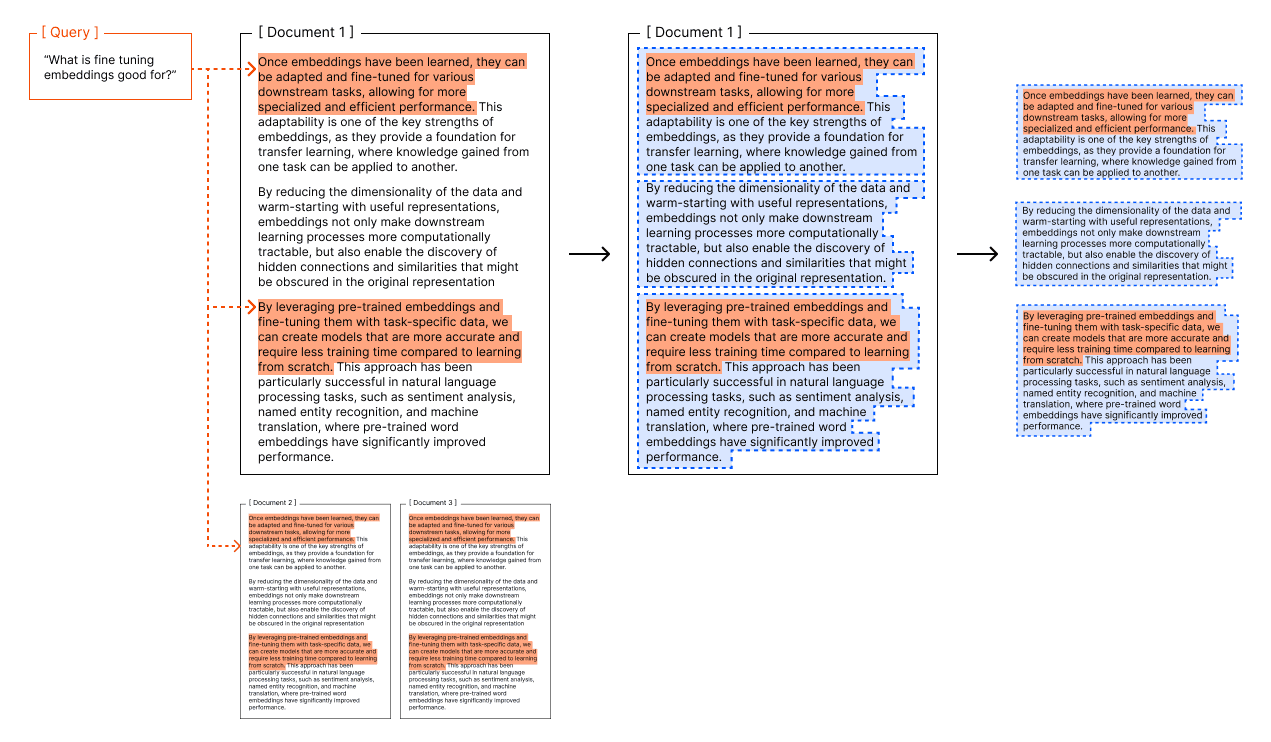

A recent analysis advances a thesis that AI engineers often overlook: Retrieval-Augmented Generation (RAG) systems typically fail at their data boundaries, not during the search phase. The common narrative focuses on improving embedding models, tuning similarity scores, or implementing complex re-ranking strategies. The article argues instead that the root cause of many RAG failures, such as missing crucial information or giving incomplete answers, lies upstream, in how source knowledge is prepared and presented to the system.

The core premise is that before a vector search ever runs, the informational "playing field" has already been defined and limited by three key architectural decisions:

- Chunking Strategy: How long-form documents (PDFs, manuals, transcripts) are split into discrete, searchable segments.

- Context Window Limits: The maximum amount of text (in tokens) that can be fed into the LLM for generation after retrieval.

- Source Segmentation: How different knowledge sources (e.g., product catalog, customer service logs, brand guidelines) are isolated or connected.

If the answer to a user's query depends on information that straddles two chunks, sits in a source excluded from the search index, or needs more context than the LLM's window allows, the most sophisticated search algorithm in the world cannot recover it. The failure is predetermined at the boundary.

Technical Details: The Boundary Problem Explained

The Chunking Conundrum

Chunking is necessary to make dense vector retrieval efficient, but it's inherently lossy. Semantic boundaries in text (the end of a concept, a product feature list, a policy clause) rarely align neatly with arbitrary token counts. A simple sliding window or recursive character split can sever the connection between a question and its answer. For instance, a query about "the return policy for limited-edition items" might require synthesizing a clause from a general returns chunk and an exception noted in a separate limited-edition terms chunk. If these are not retrieved together, the LLM cannot provide the correct, nuanced answer.
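
The lossiness described above can be illustrated with a minimal sketch. The policy text, the function names, and the size limits are all invented for illustration; they are not from the article. The sketch compares a fixed-size character split with a sentence-aware split and checks which chunks still contain a clause intact.

```python
import re


def fixed_size_chunks(text: str, size: int) -> list[str]:
    """Cut every `size` characters, ignoring sentence boundaries."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def sentence_chunks(text: str, max_chars: int) -> list[str]:
    """Greedily pack whole sentences into chunks of at most `max_chars`.

    A single sentence longer than `max_chars` is kept whole rather than cut.
    """
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    chunks: list[str] = []
    current = ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = sentence
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


def chunks_containing(chunks: list[str], *terms: str) -> list[str]:
    """Return the chunks in which every term appears verbatim."""
    return [c for c in chunks if all(t in c for t in terms)]


# Hypothetical policy text, invented for illustration.
policy = (
    "Items may be returned within 30 days. "
    "Limited-edition items are final sale and cannot be returned."
)

fixed = fixed_size_chunks(policy, size=50)
by_sentence = sentence_chunks(policy, max_chars=80)

# The fixed split cuts "Limited-edition" in half, so no chunk holds the clause.
print(chunks_containing(fixed, "Limited-edition", "final sale"))
# The sentence-aware split keeps the exception clause in one chunk.
print(chunks_containing(by_sentence, "Limited-edition", "final sale"))
```

Sentence-aware splitting only addresses clauses cut mid-phrase. The general rule and its exception still land in separate chunks here, so answering the limited-edition question correctly still depends on both being retrieved together.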

The Context Window Bottleneck

Even if the perfect set of relevant chunks is retrieved, they must fit within the LLM's context window alongside the user's query and the system prompt. Modern LLMs offer large windows (128K+ tokens), but practical deployments often use smaller models or strict limits for cost and latency reasons. This creates a hard boundary: if the retrieved evidence exceeds the limit, the system cannot consider all of it, and the truncation or prioritization that follows may omit critical details.
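
A rough sketch of that bottleneck, under stated assumptions: `Chunk`, `estimate_tokens`, and `pack_context` are hypothetical names, the word-count tokenizer is a crude stand-in for a real one, and greedy packing by score is just one possible prioritization policy. The point is that the packer reports what it had to leave out instead of truncating silently.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    score: float  # retrieval similarity; higher means more relevant


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def pack_context(
    chunks: list[Chunk], window: int, reserved: int
) -> tuple[list[Chunk], list[Chunk]]:
    """Greedily keep the highest-scoring chunks that fit the remaining budget.

    `reserved` covers the system prompt, the user query, and the answer.
    Returns (kept, dropped) so the caller can see which evidence was omitted.
    """
    budget = window - reserved
    if budget < 0:
        raise ValueError("reserved tokens exceed the context window")
    kept: list[Chunk] = []
    dropped: list[Chunk] = []
    used = 0
    for chunk in sorted(chunks, key=lambda c: c.score, reverse=True):
        cost = estimate_tokens(chunk.text)
        if used + cost <= budget:
            kept.append(chunk)
            used += cost
        else:
            dropped.append(chunk)
    return kept, dropped


# Hypothetical retrieved chunks, invented for illustration.
retrieved = [
    Chunk("returns accepted within thirty days of delivery", 0.9),
    Chunk("limited edition items are final sale", 0.8),
    Chunk("refunds go to original payment", 0.7),
]

kept, dropped = pack_context(retrieved, window=22, reserved=10)
print([c.text for c in kept])
print([c.text for c in dropped])
```

In this toy run the second-ranked chunk, which carries the limited-edition exception, is the one that does not fit. Logging the `dropped` list is one way to notice context saturation before users do.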

The Source Segmentation Silo

In enterprise settings, knowledge is fragmented across systems. A RAG pipeline might only have access to the current season's lookbook PDFs but not the internal CRM notes from the buying team explaining the inspiration behind a collection. The system's boundary is defined by what data is indexed. A query about "the design narrative for the Spring '25 collection" will fail not because of search, but because the relevant narrative exists in an un-indexed source.
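
One way to make this boundary visible is to write down which sources each kind of question needs and compare that against what is actually indexed. The sketch below assumes a hand-maintained mapping; the source names (`lookbook_pdfs`, `crm_buying_notes`) and the helper `unindexed_sources` are hypothetical and chosen only to mirror the example above.

```python
def unindexed_sources(
    required: dict[str, set[str]], indexed: set[str]
) -> dict[str, set[str]]:
    """For each query type, list the sources it needs that are not in the index."""
    gaps = {}
    for query_type, needs in required.items():
        missing = needs - indexed
        if missing:
            gaps[query_type] = missing
    return gaps


# Hypothetical mapping of query types to the sources they depend on.
required_sources = {
    "design narrative": {"lookbook_pdfs", "crm_buying_notes"},
    "product details": {"lookbook_pdfs"},
}
indexed_sources = {"lookbook_pdfs"}

gaps = unindexed_sources(required_sources, indexed_sources)
print(gaps)
```

Any query type that shows up in `gaps` will fail regardless of how well retrieval is tuned, because part of its answer is outside the index.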

The article suggests that diagnosing RAG issues should start with an audit of these boundaries (checking for split concepts, testing context saturation, and mapping knowledge silos) before diving into search optimization.
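
The "split concepts" check can be sketched as a small audit over the chunk store. This is an assumption about how such an audit might look, not the article's method: `classify_boundary` is a hypothetical helper, and matching evidence by case-insensitive substring is a deliberate simplification.

```python
def classify_boundary(chunks: list[str], evidence: list[str]) -> str:
    """Classify where the evidence for one test question sits in the chunk store.

    "missing": at least one evidence phrase appears in no chunk
               (un-indexed source, or a phrase cut in half by chunking).
    "intact":  a single chunk contains every evidence phrase.
    "split":   every phrase is present, but no single chunk holds them all.
    """
    if not evidence:
        raise ValueError("evidence must contain at least one phrase")
    hits = [
        {i for i, chunk in enumerate(chunks) if phrase.lower() in chunk.lower()}
        for phrase in evidence
    ]
    if any(not h for h in hits):
        return "missing"
    if set.intersection(*hits):
        return "intact"
    return "split"


# Hypothetical chunk store, invented for illustration.
store = [
    "Returns are accepted within 30 days.",
    "Limited-edition items are final sale.",
]

print(classify_boundary(store, ["within 30 days"]))
print(classify_boundary(store, ["within 30 days", "final sale"]))
print(classify_boundary(store, ["design narrative"]))
```

Running this over a set of test questions before touching the retriever separates failures that search tuning could plausibly fix ("intact", and sometimes "split") from those it cannot ("missing").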

Retail & Luxury Implications

For retail and luxury brands deploying RAG for customer service, internal knowledge bases, or personalized shopping assistants, this boundary-focused diagnosis is highly applicable.

- Product Knowledge & Cross-Selling: A customer asks, "Can I wear this silk dress from the 'Ethereal' collection to a garden party?" A complete answer requires retrieving chunk A (the dress's material care instructions), chunk B (the 'Ethereal' collection's thematic inspiration about lightness), and possibly chunk C (stylist notes on versatility). If these live in separate chunks or sources and are not retrieved together, the AI may give a generic, incomplete response.

- Complex Policy Queries: Luxury retail often involves intricate policies (customization, international shipping, authentication, repairs). A question like, "What is the process and cost to repair a vintage handbag purchased in Europe if I now live in the US?" likely pulls information from multiple policy documents and geographic service terms. Standard chunking can easily fragment this multi-part answer.

- Personalization & Client History: Truly personalized service requires merging a client's purchase history (from a CRM system) with current inventory and trend data. If the RAG system's boundary excludes the live CRM connection, it can only answer based on public inventory, missing the opportunity to say, "Based on your past purchases of tailored blazers, the new double-breasted version from this season would complement your existing wardrobe."

The implication is clear: the value of a luxury RAG system is determined by how its knowledge boundaries are drawn. Investing in intelligent, semantic-aware chunking (using models to find natural breakpoints), designing pipelines to dynamically pull from multiple source systems, and carefully managing context allocation are as critical as selecting the right embedding model. The search merely fetches what is inside the fence; the real strategic work is deciding where to build the fence and installing gates between different knowledge gardens.
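
As a rough illustration of such a "gate", the sketch below merges ranked results from several sources in round-robin order so that no single source fills the whole context. The function `interleave_sources`, the source names, and the result labels are hypothetical, and round-robin is only one simple allocation policy among many.

```python
from itertools import zip_longest


def interleave_sources(
    results_by_source: dict[str, list[str]], limit: int
) -> list[str]:
    """Take the best result from each source in turn, up to `limit` items.

    Each list is assumed to be already ranked, best first, by its own retriever.
    """
    merged: list[str] = []
    for tier in zip_longest(*results_by_source.values()):
        for item in tier:
            if item is not None and len(merged) < limit:
                merged.append(item)
    return merged


# Hypothetical per-source retrieval results, invented for illustration.
results = {
    "catalog": ["catalog-1", "catalog-2", "catalog-3"],
    "crm": ["crm-1"],
    "policy": ["policy-1", "policy-2"],
}

context = interleave_sources(results, limit=4)
print(context)
```

With a plain top-k over a single merged index, a large catalog could crowd out the lone CRM result; interleaving guarantees each connected source a slot before any source gets a second one.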