Redis, the company behind the popular in-memory data platform, has announced a significant expansion of its machine learning (ML) capabilities with the launch of Redis Feature Form. This new product is positioned as an enterprise-grade feature store, a critical piece of infrastructure for companies moving machine learning models from experimentation to production.

Key Takeaways

- Redis announced the launch of Redis Feature Form, a new enterprise feature store designed to manage and serve machine learning features in production.

- This move positions Redis to compete in the critical MLOps infrastructure layer, helping companies operationalize AI models more reliably.

What Happened: A New Tool for the MLOps Stack

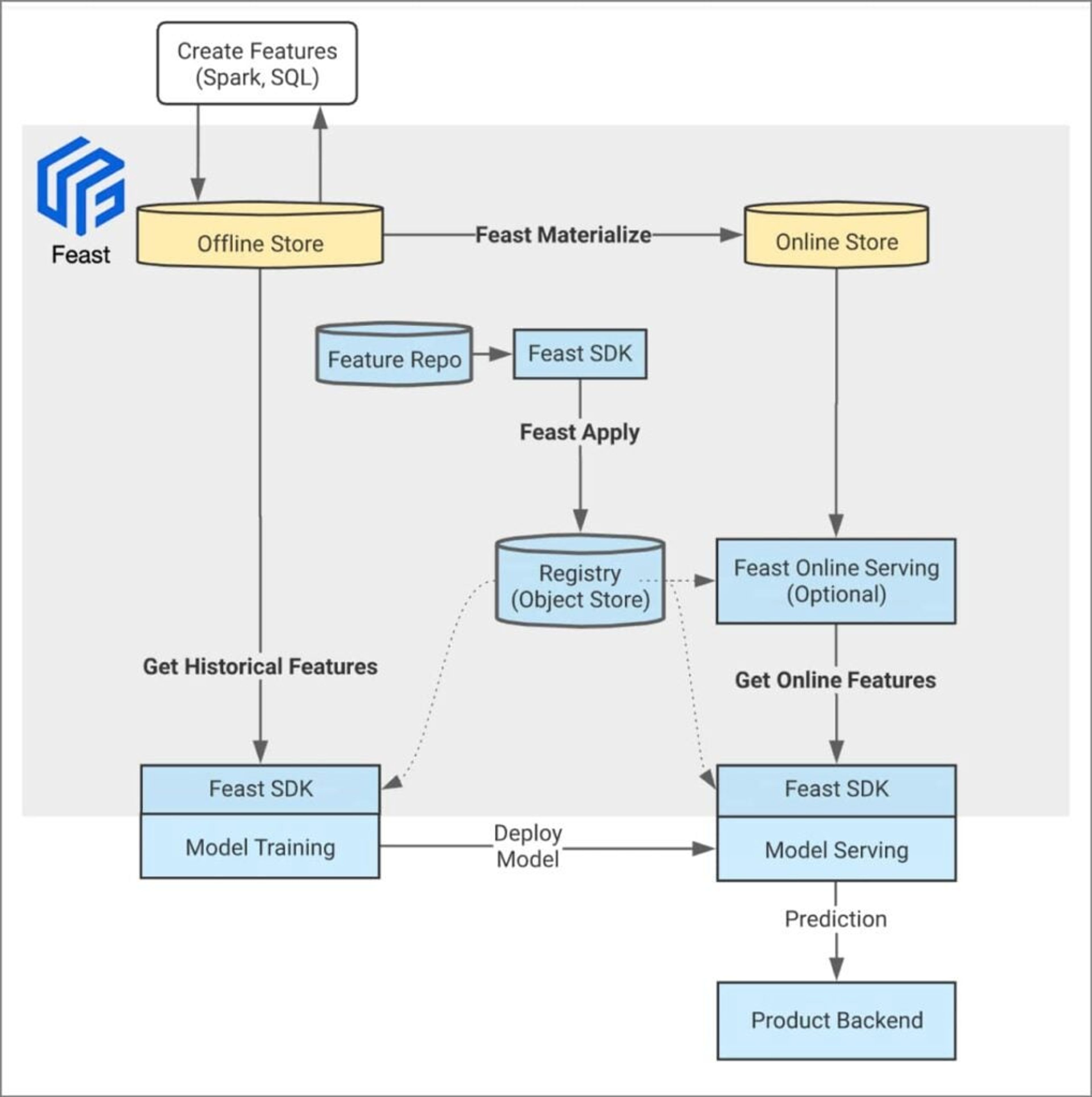

The source material is limited, but the core announcement is clear: Redis is entering the competitive market for feature stores. A feature store is a centralized repository for storing, managing, and serving standardized features (the reusable data inputs) used to train and power machine learning models.

The primary value proposition of a feature store is to solve the "training-serving skew" problem, where the data used to train a model differs from the data fed to it in production, leading to degraded performance. By providing a single source of truth for features, companies can ensure consistency, improve collaboration between data scientists and engineers, and accelerate the deployment of ML applications.
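As a rough illustration of how a single feature definition avoids training-serving skew, the sketch below computes a feature with the same function on both the training path and the serving path. The function name `avg_order_value_30d`, the order tuples, and the dates are hypothetical and are not taken from the Redis announcement.

```python
from datetime import datetime, timedelta

def avg_order_value_30d(orders, as_of):
    """Mean order amount in the 30 days up to and including `as_of` (0.0 if none).

    `orders` is a list of (timestamp, amount) tuples. This is a hypothetical
    feature definition used only to illustrate the idea.
    """
    window_start = as_of - timedelta(days=30)
    amounts = [amount for ts, amount in orders if window_start < ts <= as_of]
    return sum(amounts) / len(amounts) if amounts else 0.0

orders = [
    (datetime(2024, 1, 5), 120.0),
    (datetime(2024, 1, 20), 80.0),
    (datetime(2024, 3, 1), 300.0),
]

# Training path: compute the feature as of a historical label timestamp.
training_value = avg_order_value_30d(orders, as_of=datetime(2024, 1, 31))

# Serving path: the same function, evaluated at request time.
serving_value = avg_order_value_30d(orders, as_of=datetime(2024, 3, 2))

print(training_value, serving_value)  # 100.0 300.0
```

Because both paths call one definition, a change to the window length or the aggregation cannot reach training without also reaching serving.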

Technical Details: Building on the Redis Core

The source does not provide architectural details, but the likely foundation can be inferred. Redis Feature Form is presumably built on Redis's core strengths: low-latency in-memory data access, high throughput, and a rich set of data structures. That would make it a natural extension of the existing platform.

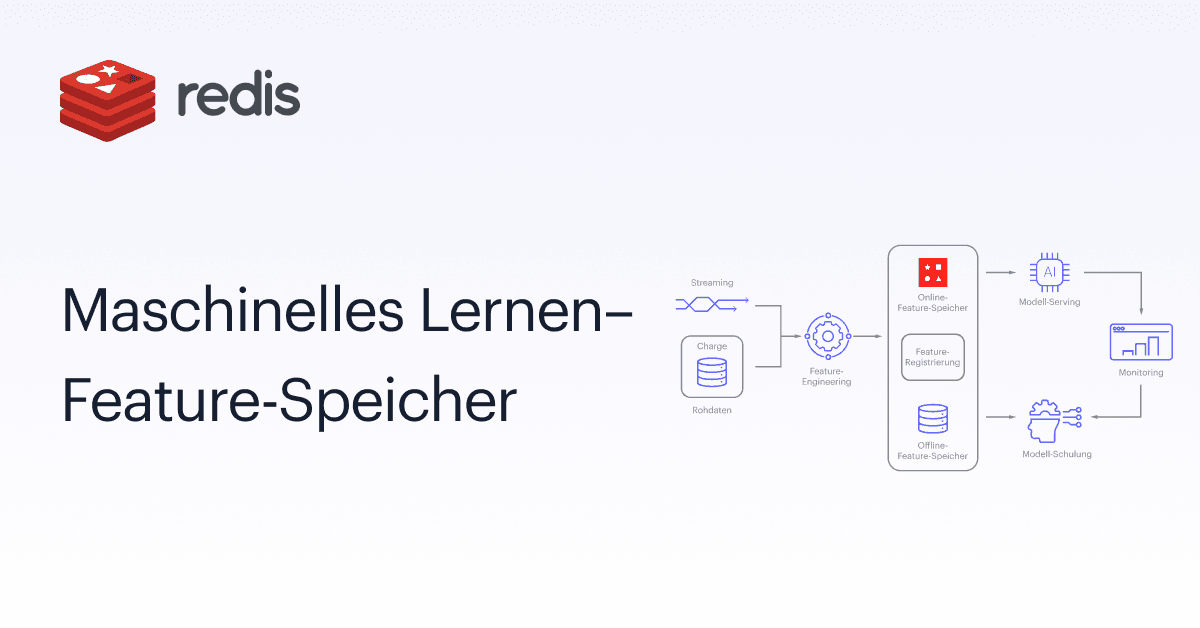

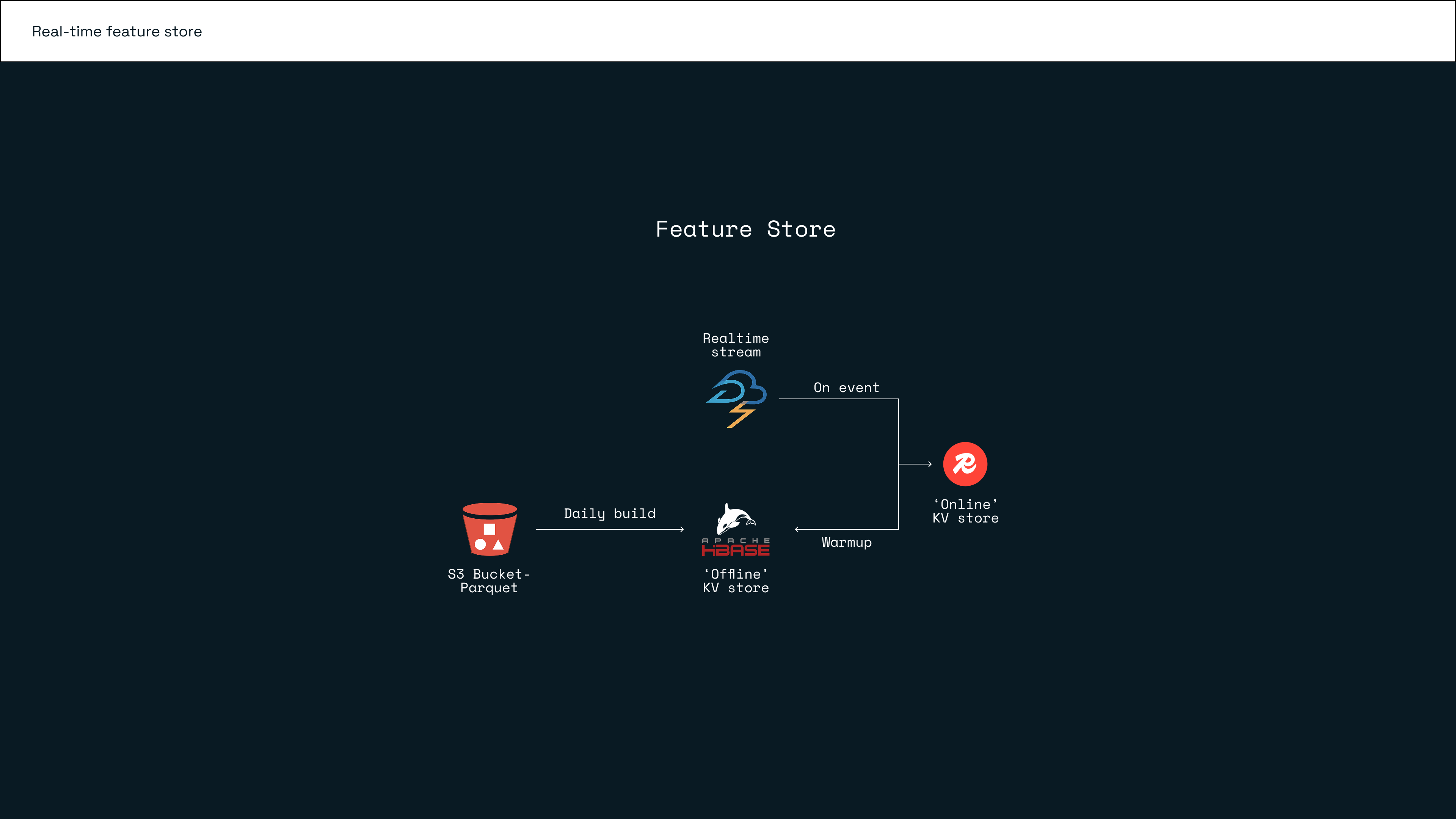

Feature stores typically handle two primary workloads:

- Offline/Historical Feature Serving: Providing large batches of feature values for model training and batch inference.

- Online/Real-time Feature Serving: Delivering the latest feature values for a specific entity (e.g., a customer, a product) with millisecond latency for real-time inference (e.g., next-best-offer, fraud detection).

Redis's in-memory architecture is well suited to the demanding online serving use case, which is often the bottleneck in real-time ML applications. The new "Feature Form" layer likely adds the abstractions, metadata management, and governance tools expected of an enterprise feature store on top of the raw performance of Redis.
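The split between the two workloads can be sketched with a toy in-memory store. This is not the Redis Feature Form API, which the source does not describe: the class `TinyFeatureStore`, its method names, and the integer timestamps are all assumptions, and a plain dictionary stands in for the low-latency online store.

```python
from collections import defaultdict

class TinyFeatureStore:
    """Toy store: a latest-value map for online reads, an append-only log for offline reads."""

    def __init__(self):
        self._online = {}                   # (entity_id, feature) -> (ts, latest value)
        self._offline = defaultdict(list)   # (entity_id, feature) -> [(ts, value), ...]

    def write(self, entity_id, feature, value, ts):
        key = (entity_id, feature)
        self._offline[key].append((ts, value))
        current = self._online.get(key)
        # Only overwrite the online value if this write is at least as recent.
        if current is None or ts >= current[0]:
            self._online[key] = (ts, value)

    def get_online(self, entity_id, feature):
        """Latest value for one entity, as needed for real-time inference."""
        entry = self._online.get((entity_id, feature))
        return None if entry is None else entry[1]

    def get_historical(self, entity_id, feature, as_of):
        """Value that was current at `as_of`, as needed to build training sets."""
        rows = [(ts, v) for ts, v in self._offline.get((entity_id, feature), []) if ts <= as_of]
        return max(rows, key=lambda row: row[0])[1] if rows else None

store = TinyFeatureStore()
store.write("cust_1", "aov_30d", 100.0, ts=1)
store.write("cust_1", "aov_30d", 140.0, ts=5)

latest = store.get_online("cust_1", "aov_30d")              # 140.0
past = store.get_historical("cust_1", "aov_30d", as_of=3)   # 100.0
```

The historical lookup returns the value as it stood at the requested time, which keeps later values from leaking into training data.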

Retail & Luxury Implications: Operationalizing Real-Time AI

For retail and luxury brands investing in personalization, dynamic pricing, inventory forecasting, and fraud detection, the operationalization of ML models is a major hurdle. Redis Feature Form, if it delivers on its promise, addresses a key pain point.

Concrete Application Scenarios:

- Unified Customer Profile for Real-Time Personalization: A feature store can maintain a live, computed profile for each customer, aggregating features like "average order value last 30 days," "preferred category," "time since last session," and "real-time browsing intent." When a customer loads a product page, the recommendation engine can fetch this complete, consistent profile from the feature store in milliseconds to generate a hyper-personalized suggestion.

- Consistent Dynamic Pricing Models: Pricing algorithms rely on features like competitor price, inventory levels, demand forecasts, and product affinity. A feature store ensures the model in production uses the exact same logic to calculate "30-day demand trend" as the model used during training, preventing pricing errors.

- Streamlined Fraud Detection: Features for fraud models, such as "transaction velocity" or "device history," need to be computed and served in real-time. A low-latency feature store like Redis Feature Form is critical for evaluating transactions before they are approved.
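To make a feature like "transaction velocity" concrete, here is a minimal sketch of a sliding-window counter. The class `TransactionVelocity`, the 60-second window, and the assumption that timestamps arrive in non-decreasing order per card are all illustrative choices, not details from the announcement.

```python
from collections import deque

class TransactionVelocity:
    """Counts transactions per card within a trailing time window (in seconds)."""

    def __init__(self, window_seconds):
        self.window_seconds = window_seconds
        self._events = {}  # card_id -> deque of timestamps, oldest first

    def record(self, card_id, ts):
        """Record a transaction and return the card's count inside the window.

        Assumes timestamps for a given card arrive in non-decreasing order.
        """
        timestamps = self._events.setdefault(card_id, deque())
        timestamps.append(ts)
        # Evict events that have fallen out of the trailing window.
        while timestamps and timestamps[0] <= ts - self.window_seconds:
            timestamps.popleft()
        return len(timestamps)

velocity = TransactionVelocity(window_seconds=60)
counts = [velocity.record("card_42", ts) for ts in (0, 10, 30, 65, 200)]
print(counts)  # [1, 2, 3, 3, 1]
```

In a production setting the per-card state would live in the online store rather than in process memory, so that every serving replica sees the same counts.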

The Gap to Consider: The announcement is only an announcement. The maturity, specific capabilities, and ease of integration with existing retail data stacks (e.g., Snowflake, Databricks, cloud data warehouses) are not detailed. Retail AI teams should evaluate it against established players like Tecton, Feast (an open-source feature store), and cloud-native solutions (Amazon SageMaker Feature Store, Google Cloud Vertex AI Feature Store) based on their latency and scale requirements and their existing vendor commitments.

Implementation & Governance

Adopting a feature store represents a significant step in ML maturity. It requires:

- Technical Integration: Wiring it into existing data pipelines for feature computation and into model serving endpoints for feature retrieval.

- Organizational Shift: Encouraging data scientists to write reusable feature definitions and engineers to rely on the store as a central service.

- Governance: Establishing lineage tracking (where did this feature come from?), access controls, and monitoring for feature drift (when the statistical properties of the input data change over time, potentially breaking the model).

A successful implementation can shorten the path from a trained model to a production deployment and make models more reliable once deployed, which translates into better customer experiences and operational efficiency.

gentic.news Analysis

This launch is a strategic move by Redis up the value chain, from foundational data store to higher-level AI/ML infrastructure provider. It follows a broader industry trend in which database and data platform vendors are expanding aggressively into the MLOps space to capture more of the AI lifecycle budget. The announcement positions Redis directly against Databricks (with its Lakehouse AI platform and feature store capabilities) and pure-play MLOps vendors.

For our audience of retail and luxury AI leaders, the key takeaway is the continued maturation and productization of core MLOps components. The market is moving from building these tools in-house to evaluating commercial, enterprise-grade options. While the feature store concept is not new, its availability from a performance-centric vendor like Redis validates its necessity for real-time use cases. Retailers with advanced, latency-sensitive AI applications—such as real-time personalization engines or fraud detection at point-of-sale—should add Redis Feature Form to their evaluation shortlist. Its success will hinge on how well it integrates with the complex, hybrid data estates typical of large retailers, not just its raw speed.

Ultimately, tools like this lower the barrier to reliable, production-grade AI. The competitive edge in luxury retail will increasingly come from who can operationalize these models fastest and most reliably, making the underlying infrastructure a strategic concern.