Stanford University has released a comprehensive set of free, open-source cheatsheets designed to distill the complex field of large language models (LLMs) and transformers into accessible, technical references. The collection, announced via social media, aims to serve as a practical toolkit for engineers, researchers, and students.

What's in the Cheatsheets?

The cheatsheets cover a wide array of foundational and advanced topics central to modern LLM development and deployment. According to the announcement, the materials span from core architectural components to popular efficiency and application techniques.

Key areas covered include:

- Core Architecture: Self-attention mechanisms, the fundamental building block of transformers.

- Efficiency & Optimization Techniques: Flash Attention (for faster attention computation), LoRA (Low-Rank Adaptation for parameter-efficient fine-tuning), Mixture of Experts (MoE), model distillation, and quantization.

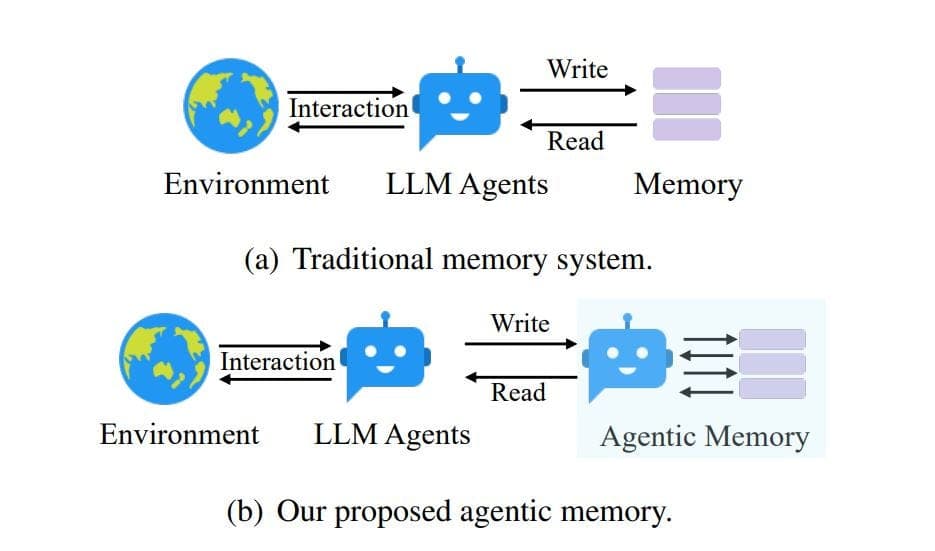

- Application & Alignment Methods: Supervised Fine-Tuning (SFT), Retrieval-Augmented Generation (RAG), AI agents, and LLM-as-a-judge evaluation setups.

This collection effectively maps the journey from a model's internal mechanics (self-attention) to training adaptations (LoRA, SFT), inference optimizations (quantization), and final application patterns (RAG, agents).
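For readers who have not met self-attention, the first item on the list, the following is a minimal sketch of scaled dot-product attention in plain Python. It is not taken from the cheatsheets. The function names and toy inputs are illustrative assumptions, and it omits the learned query, key, and value projections and the multiple heads that a real transformer layer uses.

```python
import math

def softmax(row):
    # Subtract the max for numerical stability before exponentiating.
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors.

    Illustrative helper (hypothetical name): no learned projections, no masking.
    """
    d_k = len(keys[0])
    output = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Each output vector is a weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        output.append(out)
    return output

# Toy "token embeddings"; in self-attention the same sequence supplies
# the queries, keys, and values.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = self_attention(tokens, tokens, tokens)
```

Each row of `attended` mixes information from all three tokens, with the mixing weights determined by how similar the tokens are to one another.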

Access and Philosophy

The resource is described as "100% Free and Open Source," aligning with a trend of academic institutions providing public educational tools for the rapidly evolving AI field. The cheatsheet format suggests a focus on concise explanations, diagrams, and key equations rather than textbook-length treatment, aiming for immediate utility for practitioners looking up a concept or implementing a technique.

gentic.news Analysis

This release by Stanford is a significant contribution to AI literacy and practical education. It systematizes knowledge that has previously been scattered across hundreds of arXiv papers, blog posts, and GitHub repositories into a single, curated reference. For an engineer deciding between LoRA and full fine-tuning, or a researcher implementing a RAG pipeline, a trusted cheatsheet can dramatically reduce the initial research overhead.
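To make the LoRA versus full fine-tuning decision concrete, here is a rough back-of-the-envelope sketch of trainable parameter counts for a single weight matrix. The helper names, the 4096 x 4096 layer size, and the rank of 8 are assumptions chosen for illustration, not figures from the cheatsheets. The arithmetic relies only on LoRA's standard formulation, in which the weight update is the product of two low-rank matrices.

```python
def full_finetune_params(d_out, d_in):
    # Full fine-tuning updates every entry of the d_out x d_in weight matrix.
    return d_out * d_in

def lora_params(d_out, d_in, rank):
    # LoRA trains two small matrices: B (d_out x rank) and A (rank x d_in).
    # The original weight matrix stays frozen.
    return rank * (d_out + d_in)

# Hypothetical layer size and rank, chosen only for illustration.
full = full_finetune_params(4096, 4096)   # 16,777,216 trainable parameters
lora = lora_params(4096, 4096, rank=8)    # 65,536 trainable parameters
reduction = full / lora                   # 256x fewer trainable parameters
```

Under these assumed numbers, LoRA trains about 0.4% of the parameters that full fine-tuning would for that layer. Whether that trade-off is acceptable depends on the task and on how much adaptation quality matters.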

The choice of topics is a precise snapshot of the 2024-2025 LLM stack. Including Flash Attention and LoRA highlights the industry's intense focus on compute cost and fine-tuning efficiency. The presence of RAG and "LLM-as-a-judge" underscores the shift from building massive base models to practical techniques for grounding outputs and evaluating performance, both dominant themes in enterprise AI adoption.

This move follows a pattern of leading AI institutions releasing educational infrastructure. It echoes DeepLearning.AI's short courses and Hugging Face's tutorials but in a more condensed, reference-oriented format. By making it open-source, Stanford invites community contributions and updates, which will be crucial to maintain relevance as the field progresses. For the technical community, the real test will be the depth, accuracy, and clarity of the cheatsheets—if successful, they could become as ubiquitous as the famous "Stanford CS229" machine learning notes.

Frequently Asked Questions

Where can I download the Stanford LLM cheatsheets?

The original announcement was made on social media (X). The downloadable cheatsheets are likely hosted on a Stanford-affiliated website or GitHub repository. Searching for "Stanford LLM cheatsheets" or monitoring the university's official AI lab websites (like the Stanford Center for Research on Foundation Models) would be the best way to locate the direct download links.

Are these cheatsheets suitable for beginners in AI?

While designed to be concise, the cheatsheets likely assume a foundational understanding of machine learning and neural networks. Concepts like self-attention and quantization are intermediate to advanced topics. They are probably most valuable for practitioners, students in advanced courses, or engineers who have encountered these terms and need a clear, technical summary.

How is this different from other online AI guides?

The key differentiators are curation, authority, and format. This is a curated set from a leading research institution (Stanford), which lends a degree of authority and quality assurance. The cheatsheet format is specifically designed for quick reference, consolidation, and review, unlike longer-form tutorials, blog posts, or video courses that teach a concept from the ground up.

Will the cheatsheets be updated with new techniques?

As an open-source project, there is potential for community maintenance and updates. However, the long-term update cycle will depend on the sponsoring lab or group at Stanford. Given the pace of change in LLMs, for the resource to remain valuable, a process for incorporating significant new techniques (like new attention variants or fine-tuning methods) will be necessary.