In artificial intelligence, where breakthroughs seem to arrive weekly and investment figures keep climbing, one might expect fundamental definitions and governance structures to shift along with the technology. Yet according to recent clarifications from the two companies, Microsoft and OpenAI have kept a stable approach to defining and recognizing Artificial General Intelligence (AGI), the long-sought goal of AI systems with human-level or superhuman capabilities across diverse domains.

Although the companies have announced new funding initiatives, expanded their partnership, and built computational infrastructure on an unprecedented scale, the contractual definition of AGI and the formal process for determining when it has been achieved remain exactly as established in their partnership agreements. This consistency in the governance framework suggests a deliberate strategy to maintain stability amid technological turbulence.

The AGI Definition: Economic Performance as the Benchmark

At the heart of this framework lies a specific, economically focused definition of AGI:

"A system is being developed that can perform most economically valuable tasks better than humans, and whose development is officially declared AGI by the OpenAI board."

This definition contains two critical components. First, it establishes a performance threshold: the system must outperform humans on "most economically valuable tasks." This moves beyond narrow benchmarks or specific capabilities to encompass the broad spectrum of work that drives economic value. Second, it establishes an institutional process: the official declaration must come from the OpenAI board, creating a formal governance mechanism rather than relying on technical metrics alone.

Governance Stability Amid Technological Change

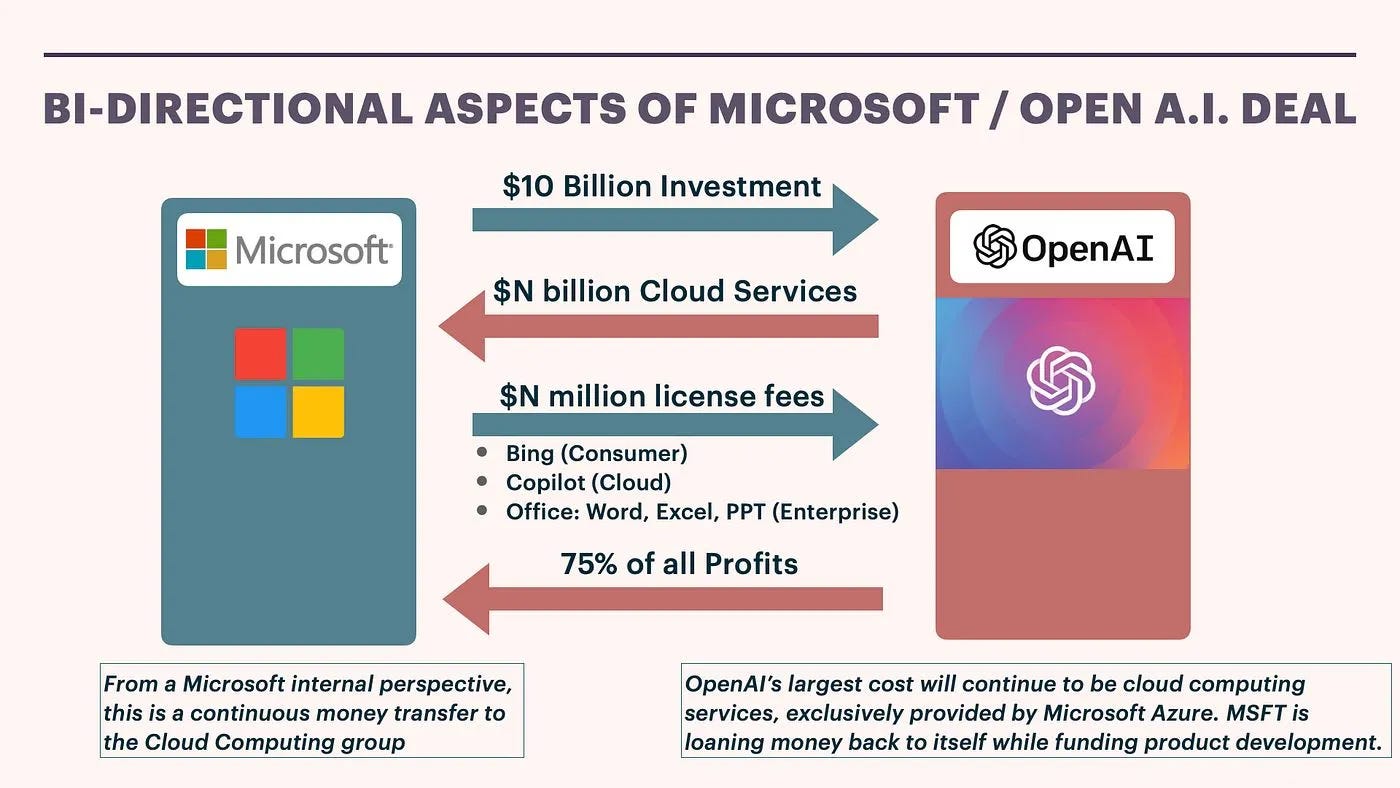

What makes this development particularly noteworthy is the explicit statement that "nothing alters the governance framework around how AGI is defined or recognized" despite significant changes in other areas of the partnership. Microsoft has reportedly invested billions in OpenAI, with recent expansions including specialized AI data centers and cloud infrastructure. The companies have formed increasingly integrated partnerships across product development, research, and deployment.

Yet the AGI governance framework remains untouched. This suggests several strategic considerations:

Contractual Certainty: By maintaining stable definitions, both companies preserve the original intent and balance of their partnership agreements.

Regulatory Preparedness: A consistent definition provides clearer parameters for regulatory discussions and ethical frameworks.

Investor Confidence: Stability in fundamental definitions may reassure investors concerned about mission drift or governance changes.

The Board's Role as AGI Arbiter

The requirement that a system's development be "officially declared AGI by the OpenAI board" places significant responsibility on a single governance body. This structure raises important questions about transparency, accountability, and the criteria the board will use to make such a consequential declaration.

Will the board rely on specific technical benchmarks? Economic impact assessments? Societal readiness evaluations? The framework as described leaves these implementation details unspecified, potentially giving the board substantial discretion in determining when the threshold has been crossed.

Implications for the AI Ecosystem

This clarified governance framework may have ripple effects throughout the AI industry:

For Competitors: Other organizations pursuing AGI may need to decide whether to adopt a similar definition or establish an alternative framework. The economic-performance focus may influence how other companies benchmark their own progress.

For Regulators: Policymakers now have a clearer target for discussions about AGI governance, though the board-centric declaration process may raise questions about democratic accountability.

For Researchers: The definition emphasizes economically valuable tasks, potentially shaping research priorities toward practical applications rather than theoretical capabilities.

For Society: The economic framing suggests that AGI's arrival will be measured primarily by its impact on labor markets and productivity rather than by philosophical definitions of intelligence or consciousness.

Historical Context and Future Trajectory

OpenAI's structure has changed considerably since the organization was founded in 2015. Originally established as a nonprofit with a mission to ensure AGI benefits all of humanity, OpenAI moved to a "capped-profit" structure in 2019 while keeping its original mission under a nonprofit governing body. The Microsoft partnership, announced that same year, included provisions for AGI development and commercialization.

This latest clarification suggests that despite the organization's structural evolution and the scaling of its ambitions, certain foundational agreements remain intact. As AI systems grow more capable, with models demonstrating proficiency across language, reasoning, coding, and creative tasks, the question of when they cross the AGI threshold becomes more pressing.

The Economic Focus: Strengths and Limitations

The emphasis on "economically valuable tasks" gives the definition a more concrete anchor than abstract notions of intelligence, but it leaves key terms undefined. Which tasks count as "economically valuable"? How is "most" quantified? Does the definition adequately capture the broader societal implications of AGI beyond economic metrics?

Some critics might argue that this definition is overly narrow, potentially missing important aspects of general intelligence that don't directly translate to economic value. Others might counter that economic impact provides the most objective and consequential measure of AGI's arrival.

Looking Ahead: The AGI Declaration Process

As AI capabilities advance, attention will increasingly focus on the declaration process itself. How will the OpenAI board approach this responsibility? What evidence will they require? Will the declaration be a sudden announcement or a gradual acknowledgment?

The board's composition, which includes technology leaders, researchers, and policy experts, suggests a multidisciplinary approach to this determination. However, because no public criteria for the declaration have been described, it remains uncertain how transparent the process will be.

Conclusion: Stability as Strategy

In an industry characterized by rapid change and hyperbolic claims, Microsoft and OpenAI's decision to maintain their AGI governance framework represents a strategic choice for stability. By keeping the definition and declaration process constant despite massive investments and technological progress, they create a fixed point around which other developments can be understood.

This approach balances the need for flexibility in development with the need for certainty in governance, an equilibrium that will be tested as AI systems approach the threshold the companies have defined. The world will be watching not just for technological breakthroughs, but for the board's declaration that those breakthroughs have crossed into the territory of Artificial General Intelligence.

Source: Based on clarification from Microsoft and OpenAI regarding their contractual AGI definition and governance framework.