Autonomous computer-use agents (CUAs) can now navigate desktop environments to complete tasks from natural-language instructions, and they are improving quickly. That raises a fundamental question: how do we reliably evaluate their performance at scale? A study posted to arXiv, "CUAAudit: Meta-Evaluation of Vision-Language Models as Auditors of Autonomous Computer-Use Agents," reveals both the promise and the peril of using AI to evaluate AI, uncovering systematic limitations that could affect real-world deployment.

The Evaluation Crisis in Autonomous Computing

Computer-use agents represent a paradigm shift in human-computer interaction. Unlike traditional automation tools, they perceive and operate graphical user interfaces much as humans do, executing complex multi-step tasks across applications. As their capabilities expand, traditional evaluation methods (static benchmarks, rule-based checks, and manual inspection) have proven inadequate: they are brittle, costly, and poorly aligned with how these agents actually perform in diverse real-world environments.

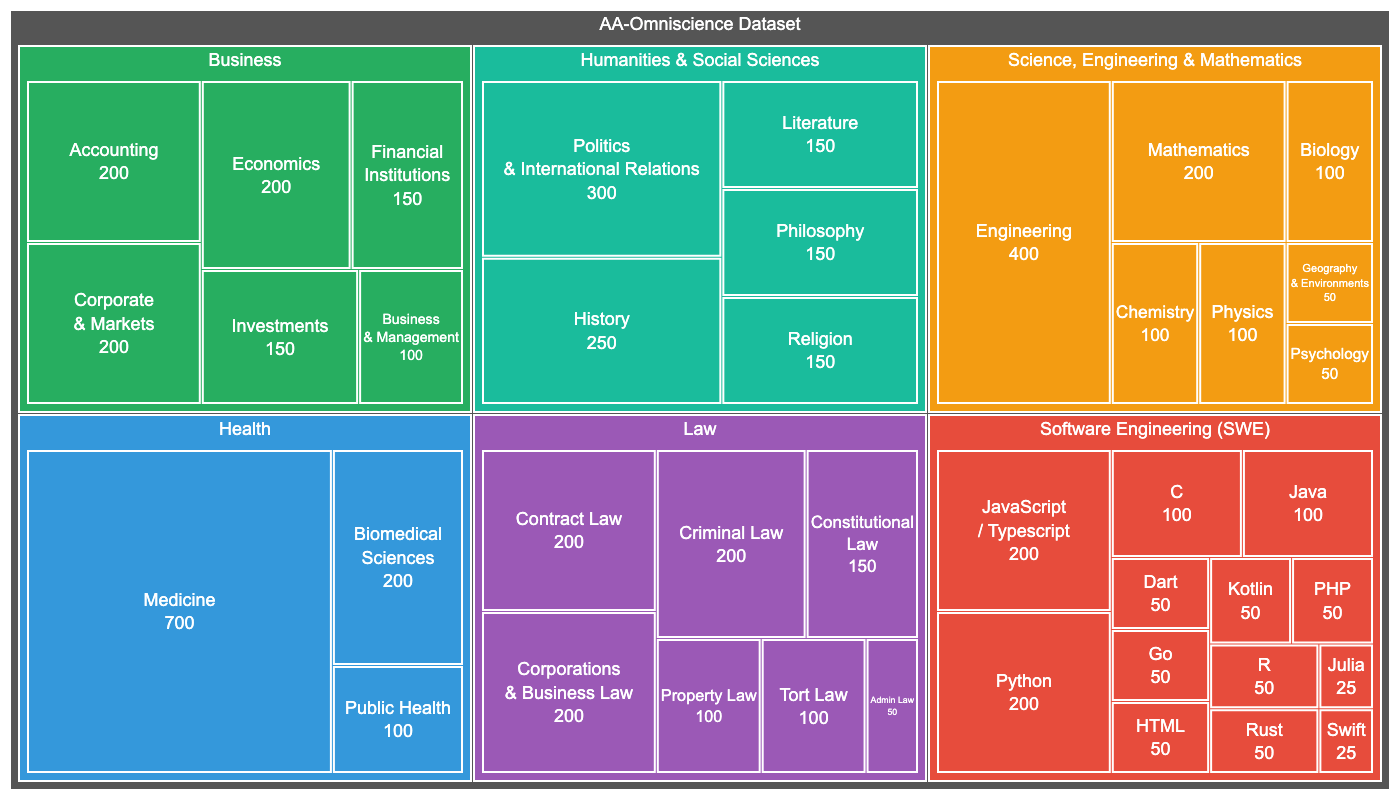

The CUAAudit researchers study an appealing alternative: using vision-language models (VLMs) as autonomous auditors. In principle, these multimodal systems can analyze a CUA's final environment state (captured as screenshots) alongside the original instruction and decide whether the task succeeded, creating a scalable evaluation pipeline. The study is a large-scale meta-evaluation of this approach, testing five state-of-the-art VLMs across three established CUA benchmarks spanning macOS, Windows, and Linux environments.
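To make the auditing setup concrete, here is a minimal Python sketch of a single auditor call. It assumes a generic `call_vlm(prompt, image_bytes)` client and a JSON reply containing a success probability; the prompt wording, the `p_success` field, the 0.5 threshold, and all function names are illustrative assumptions, not the paper's protocol.

```python
import json
from dataclasses import dataclass
from typing import Callable

# Hypothetical VLM client signature: (prompt, screenshot bytes) -> raw text reply.
VLMCall = Callable[[str, bytes], str]


@dataclass(frozen=True)
class AuditVerdict:
    p_success: float  # auditor's stated probability that the task was completed

    @property
    def success(self) -> bool:
        # Binary verdict obtained by thresholding the stated probability at 0.5.
        return self.p_success >= 0.5


def build_audit_prompt(instruction: str) -> str:
    """Illustrative auditor prompt (not the prompt used in the paper)."""
    return (
        "You are auditing a computer-use agent.\n"
        f"Task instruction: {instruction}\n"
        "The attached screenshot shows the final state of the desktop.\n"
        'Reply only with JSON of the form {"p_success": <probability between 0 and 1>}.'
    )


def parse_verdict(raw_reply: str) -> AuditVerdict:
    """Parse the auditor's JSON reply, rejecting out-of-range probabilities."""
    p = float(json.loads(raw_reply)["p_success"])
    if not 0.0 <= p <= 1.0:
        raise ValueError(f"p_success out of range: {p}")
    return AuditVerdict(p_success=p)


def audit(instruction: str, screenshot_png: bytes, call_vlm: VLMCall) -> AuditVerdict:
    """Ask one VLM auditor whether the final screenshot satisfies the instruction."""
    reply = call_vlm(build_audit_prompt(instruction), screenshot_png)
    return parse_verdict(reply)


# Usage with a stubbed VLM that always returns the same reply.
fake_vlm: VLMCall = lambda prompt, image: '{"p_success": 0.85}'
verdict = audit("Rename report.txt to final.txt", b"<png bytes>", fake_vlm)
print(verdict.success, verdict.p_success)  # True 0.85
```

Asking for a probability rather than a bare yes/no keeps the confidence signal available for the calibration analysis the study emphasizes.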

Three Dimensions of Auditor Reliability

The researchers didn't just measure accuracy. They analyzed auditor behavior across three complementary dimensions that collectively determine evaluation reliability:

Accuracy: How often auditors correctly identify task completion versus failure.

Calibration: Whether an auditor's confidence estimates ("I'm 90% sure this succeeded") match how often it is actually right; among judgments made with 90% confidence, roughly 90% should be correct.

Inter-model Agreement: How consistently different VLMs make the same judgments on identical tasks.

This multidimensional approach reveals nuances that simple accuracy metrics would miss, providing a more complete picture of evaluation system reliability.
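As a rough illustration of the first two dimensions, the sketch below computes accuracy and an equal-width-bin expected calibration error (ECE) from auditor probabilities and ground-truth outcomes. ECE is one common calibration measure; the function names, the 0.5 threshold, the bin count, and the toy data are assumptions rather than the paper's exact metrics or results.

```python
from typing import Sequence


def accuracy(p_success: Sequence[float], labels: Sequence[bool], threshold: float = 0.5) -> float:
    """Fraction of runs where the thresholded verdict matches the ground-truth outcome."""
    correct = sum((p >= threshold) == y for p, y in zip(p_success, labels))
    return correct / len(labels)


def expected_calibration_error(
    p_success: Sequence[float], labels: Sequence[bool], n_bins: int = 10
) -> float:
    """Equal-width-bin ECE on the predicted success probability.

    Within each bin, compare the mean stated probability with the observed
    success rate, then average the gaps weighted by bin size.
    """
    bins: list[list[tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for p, y in zip(p_success, labels):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes into the last bin
        bins[idx].append((p, y))
    n = len(labels)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        mean_conf = sum(p for p, _ in bucket) / len(bucket)
        success_rate = sum(y for _, y in bucket) / len(bucket)
        ece += len(bucket) / n * abs(mean_conf - success_rate)
    return ece


# Toy data: four audited runs with the auditor's stated p_success and true outcomes.
probs = [0.9, 0.8, 0.3, 0.6]
truth = [True, True, False, False]
print(accuracy(probs, truth))                    # 0.75
print(expected_calibration_error(probs, truth))  # ≈ 0.3
```

With only a handful of runs per bin, ECE is noisy; on a real benchmark one would use far more audited runs or fewer bins.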

Promising Results with Critical Caveats

The findings present a complex picture. State-of-the-art VLMs demonstrated "strong accuracy and calibration" in controlled conditions, suggesting the approach has genuine merit. However, all auditors exhibited "notable performance degradation in more complex or heterogeneous environments." This environmental sensitivity poses a significant challenge, as real-world desktop environments are inherently complex and varied.

Perhaps most concerning was the finding that "even high-performing models show significant disagreement in their judgments." When different VLMs evaluated the same CUA run, they often reached different conclusions about success or failure. This inter-model disagreement reveals fundamental inconsistencies in how current AI systems interpret task completion, raising questions about evaluation objectivity.
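Raw agreement can look high simply because most runs share one outcome, so chance-corrected statistics such as Cohen's kappa are a common way to quantify inter-model agreement. The sketch below computes pairwise kappa across auditors; the auditor names and verdicts are toy values, and the paper may use a different agreement measure.

```python
from itertools import combinations
from typing import Dict, Sequence, Tuple


def cohens_kappa(a: Sequence[bool], b: Sequence[bool]) -> float:
    """Chance-corrected agreement between two auditors' binary verdicts on the same runs."""
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    p_a, p_b = sum(a) / n, sum(b) / n  # each auditor's rate of "success" verdicts
    expected = p_a * p_b + (1 - p_a) * (1 - p_b)  # agreement expected by chance
    if expected == 1.0:
        return 1.0  # both auditors gave the same constant verdict; kappa is undefined
    return (observed - expected) / (1 - expected)


def pairwise_kappa(verdicts: Dict[str, Sequence[bool]]) -> Dict[Tuple[str, str], float]:
    """Cohen's kappa for every pair of auditors."""
    return {
        (m1, m2): cohens_kappa(verdicts[m1], verdicts[m2])
        for m1, m2 in combinations(sorted(verdicts), 2)
    }


# Toy verdicts from three hypothetical auditors on the same four runs.
verdicts = {
    "auditor_a": [True, True, False, False],
    "auditor_b": [True, False, False, False],
    "auditor_c": [False, True, True, False],
}
for pair, kappa in pairwise_kappa(verdicts).items():
    print(pair, round(kappa, 2))
```

In this toy data, auditors a and b agree moderately (kappa 0.5), a and c agree no better than chance (0.0), and b and c agree less often than chance would predict (-0.5).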

The Real-World Implications

These findings arrive at a critical moment in AI development. Just days before this research was published, Meta acquired Moltbook, a social network for AI agents, signaling accelerated investment in autonomous agent technology. As companies like Meta, OpenAI, and others race to deploy increasingly capable CUAs, reliable evaluation becomes not just an academic concern but a practical necessity for safe, effective deployment.

The CUAAudit results suggest that simply using the most accurate VLM as an auditor isn't sufficient. Evaluation systems must account for:

- Environmental context: Auditor performance varies significantly across operating systems and application ecosystems

- Uncertainty quantification: Confidence estimates must be properly calibrated to be useful

- Evaluator variance: Different models may legitimately interpret success criteria differently

- Task complexity: Simple tasks are evaluated more reliably than complex, multi-step operations
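One practical way to act on the first and last points is to report auditor accuracy stratified by environment and by a complexity proxy instead of as a single number. The sketch below assumes a hypothetical `AuditRecord` with an environment label and a step count standing in for task complexity; the records are toy data, not results from the study.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Dict, Iterable


@dataclass(frozen=True)
class AuditRecord:
    environment: str  # e.g. "macOS", "Windows", "Linux"
    n_steps: int      # hypothetical proxy for task complexity
    predicted: bool   # auditor's verdict
    label: bool       # ground-truth task outcome


def accuracy_by(records: Iterable[AuditRecord], key: Callable[[AuditRecord], str]) -> Dict[str, float]:
    """Auditor accuracy within each group defined by `key`."""
    hits: Dict[str, int] = defaultdict(int)
    totals: Dict[str, int] = defaultdict(int)
    for r in records:
        group = key(r)
        totals[group] += 1
        hits[group] += r.predicted == r.label  # bool counts as 0 or 1
    return {g: hits[g] / totals[g] for g in totals}


# Toy records, not results from the study.
records = [
    AuditRecord("Linux", 3, True, True),
    AuditRecord("Linux", 12, True, False),
    AuditRecord("Windows", 4, False, False),
    AuditRecord("Windows", 15, False, True),
    AuditRecord("macOS", 2, True, True),
]
print(accuracy_by(records, key=lambda r: r.environment))
# {'Linux': 0.5, 'Windows': 0.5, 'macOS': 1.0}
print(accuracy_by(records, key=lambda r: "short" if r.n_steps <= 5 else "long"))
# {'short': 1.0, 'long': 0.0}
```

Passing the grouping as a `key` function lets the same helper slice results by platform, application, or any other complexity proxy.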

Toward More Robust Evaluation Frameworks

The research doesn't suggest abandoning VLM-based auditing but rather highlights the need for more sophisticated approaches. Potential solutions include:

- Ensemble methods: Combining judgments from multiple VLMs with different architectures

- Uncertainty-aware evaluation: Treating auditor judgments as probabilities rather than binary pass/fail verdicts

- Environment-specific calibration: Adjusting evaluation criteria based on platform characteristics

- Human-in-the-loop verification: Using automated auditing for initial screening with human oversight for edge cases
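The first, second, and fourth ideas can be combined in a simple triage rule: average the success probabilities from several auditors (a linear opinion pool), and send a run to a human when the ensemble is uncertain or the auditors disagree sharply. This is a minimal sketch; the thresholds `low`, `high`, and `max_spread` are hypothetical and would need tuning, ideally per environment, which is one way to approach environment-specific calibration.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Sequence


@dataclass(frozen=True)
class TriageDecision:
    p_success: float     # ensemble mean of the auditors' success probabilities
    disagreement: float  # spread (max - min) of the auditors' probabilities
    route: str           # "auto_pass", "auto_fail", or "human_review"


def triage(
    p_by_auditor: Sequence[float],
    low: float = 0.3,
    high: float = 0.7,
    max_spread: float = 0.4,
) -> TriageDecision:
    """Pool auditor probabilities and escalate uncertain or contested runs to a human."""
    p = mean(p_by_auditor)  # linear opinion pool with equal weights
    spread = max(p_by_auditor) - min(p_by_auditor)
    if spread > max_spread or low < p < high:
        route = "human_review"  # auditors disagree, or the ensemble itself is unsure
    elif p >= high:
        route = "auto_pass"
    else:
        route = "auto_fail"
    return TriageDecision(p, spread, route)


# Toy probabilities from three hypothetical auditors for four runs.
for probs in ([0.9, 0.85, 0.95], [0.9, 0.2, 0.8], [0.6, 0.5, 0.55], [0.1, 0.05, 0.2]):
    print(probs, triage(probs).route)
```

Averaging probabilities only makes sense if each auditor is reasonably calibrated, so in practice one would calibrate each auditor first (for example with per-environment Platt scaling or isotonic regression) before pooling.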

As the paper concludes, these results "expose fundamental limitations of current model-based auditing approaches and highlight the need to explicitly account for evaluator reliability, uncertainty, and variance when deploying autonomous CUAs in real-world settings."

The Broader Context of AI Evaluation

This research contributes to a growing recognition within the AI community that evaluation methodologies haven't kept pace with model capabilities. Recent arXiv publications on topics ranging from consumer rating systems to recommendation algorithms reflect increasing attention to how we assess AI performance. The CUAAudit study extends this concern to the emerging domain of autonomous computer-use agents, where evaluation challenges are particularly acute due to the open-ended nature of desktop interactions.

As AI systems become more autonomous and integrated into daily workflows, the question of who audits the auditors—and how—will only grow in importance. This research provides both a methodology for investigating these questions and sobering evidence that reliable AI evaluation remains an unsolved challenge.

Source: arXiv:2603.10577v1 "CUAAudit: Meta-Evaluation of Vision-Language Models as Auditors of Autonomous Computer-Use Agents" (Submitted March 11, 2026)