What Happened

A keynote address scheduled for the 2025 European Conference on Information Retrieval (ECIR) will focus on a fundamental challenge in modern AI: understanding how Large Language Models (LLMs) use their internal knowledge versus external context. The talk, based on a preprint paper, will cover three topics: evaluating the knowledge stored in an LLM's parameters, designing diagnostic tests that reveal knowledge conflicts, and identifying the characteristics of contextual knowledge that models integrate successfully.

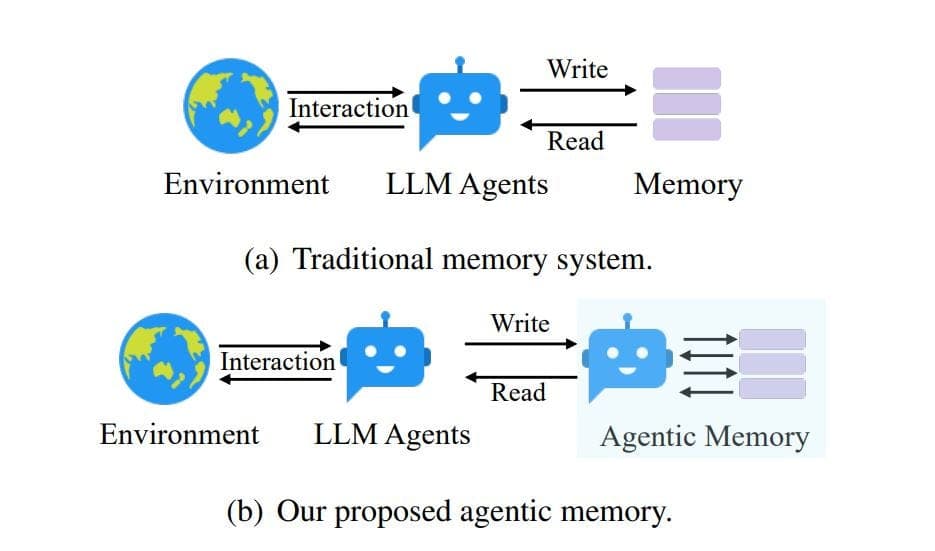

The core issue is that LLMs possess two types of "knowledge." Parametric knowledge is the vast amount of information embedded in the model's weights during its initial training on a massive corpus. This is the model's internal memory. Contextual knowledge is the external information provided to the model at inference time, such as a retrieved document, a user's query history, or real-time data from a database.

Technical Details

The research highlights a critical tension between these two knowledge sources. To be useful in dynamic, real-world applications, such as answering questions about current events, specific products, or private company data, LLMs must correctly integrate the contextual knowledge they are given. Their parametric memory is static and pre-trained, so it can be incomplete or outdated.

However, studies show that LLMs often fail to do this effectively. They tend to ignore or override provided context when it contradicts their pre-existing parametric knowledge. Such a disagreement between context and memory is known as a knowledge conflict. A related case, intra-memory conflict, occurs when contradictory information already exists within the model's own parameters.

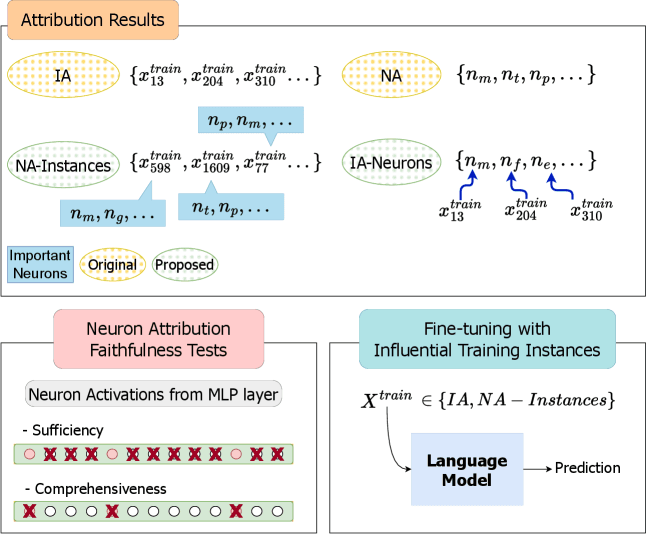

The keynote will delve into methods for:

- Evaluating Parametric Knowledge: Quantifying what a model "knows" from its training.

- Diagnosing Knowledge Conflicts: Creating tests to identify when a model is prioritizing its internal memory over more relevant, provided context.

- Understanding Successful Integration: Analyzing what makes some pieces of contextual knowledge more likely to be used correctly by the model.

This work is foundational for improving Retrieval-Augmented Generation (RAG) systems, where the quality of the final output depends heavily on the model's ability to ground its response in the retrieved documents rather than in its potentially flawed or generic internal knowledge.
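One way to picture the diagnostic idea is a closed-book versus open-book comparison: ask the question alone, then ask it again with a context passage that deliberately contradicts the model's likely answer, and see which answer wins. The sketch below is illustrative only and is not the method from the keynote or the preprint. The function `classify_conflict`, the prompt layout, the substring matching, and both stub models are assumptions made for this example.

```python
from typing import Callable

# Hypothetical interface: any function that maps a prompt string to an answer string.
AskFn = Callable[[str], str]


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so substring checks are less brittle."""
    return " ".join(text.lower().split())


def classify_conflict(ask: AskFn, question: str, context: str, context_answer: str) -> str:
    """Compare a closed-book answer with an answer given conflicting context."""
    closed_book = normalize(ask(question))  # parametric knowledge only
    open_book = normalize(ask(f"Context: {context}\nQuestion: {question}"))
    target = normalize(context_answer)

    if target in closed_book:
        return "no_conflict"        # memory already agrees with the context
    if target in open_book:
        return "followed_context"   # the model switched to the context answer
    if closed_book and closed_book in open_book:
        return "kept_memory"        # the model repeated its closed-book answer
    return "other"                  # neither answer was recognizable


# Stub models for demonstration only (assumptions, not real LLM calls).
def stubborn_model(prompt: str) -> str:
    return "30 days"


def faithful_model(prompt: str) -> str:
    return "14 days" if "Context:" in prompt else "30 days"


QUESTION = "How many days do customers have to return an item?"
CONTEXT = "Policy update: items may be returned within 14 days of delivery."

print(classify_conflict(stubborn_model, QUESTION, CONTEXT, "14 days"))
print(classify_conflict(faithful_model, QUESTION, CONTEXT, "14 days"))
```

Substring matching is a deliberately crude stand-in for answer comparison. A real evaluation would likely need a more tolerant matcher, such as normalized exact match or a judged comparison.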

Retail & Luxury Implications

For AI leaders in retail and luxury, this research is not about a new product feature, but about engineering reliability into core AI systems. The failure modes described—models ignoring fresh context in favor of old training data—directly threaten high-value applications.

- Dynamic Product & Policy Assistants: A customer service chatbot for a luxury brand must use the latest return policy, promotional terms, or product availability data (context) rather than a generic policy it learned during pre-training (parametric knowledge). A knowledge conflict could lead to giving incorrect, brand-damaging information.

- Personalized Recommendations: A recommendation engine that uses a customer's past purchase history and real-time browsing behavior (context) must integrate this signal more strongly than generic "popular item" knowledge from its training. If the model's parametric memory overrides the context, personalization fails.

- Internal Knowledge Management: An AI tool for designers that retrieves information from internal trend reports and material databases must faithfully use that proprietary context. If it defaults to public knowledge about fabrics or styles, its value is lost.

The practical takeaway is that deploying a simple RAG pipeline is not enough. Teams must actively test for knowledge conflict in their specific applications. Before launch, an AI agent should be evaluated rigorously with queries where the provided, up-to-date context contradicts what the model is likely to have memorized. For example, ask "What is our summer 2025 flagship collection?" while supplying a context document that describes a just-released, unexpected design shift.
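A pre-launch check of this kind can be sketched as a small regression suite. Each case pairs a question with fresh context, a phrase that only the context supports, and a stale phrase the model might fall back on. Everything here is hypothetical: `ConflictCase`, `adherence_report`, the sample policies, and `demo_assistant` are invented for illustration, and `demo_assistant` stands in for whatever function wraps a team's actual assistant.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class ConflictCase:
    question: str
    context: str   # fresh document handed to the assistant
    expected: str  # phrase that only the context supports
    stale: str     # phrase the model may have memorized instead


# Hypothetical interface: (question, context) -> reply text.
AnswerFn = Callable[[str, str], str]


def adherence_report(answer: AnswerFn, cases: List[ConflictCase]) -> Dict[str, int]:
    """Count how often replies follow the context versus the stale answer."""
    counts = {"context": 0, "stale": 0, "neither": 0}
    for case in cases:
        reply = answer(case.question, case.context).lower()
        # A reply containing both phrases is counted as following the context.
        if case.expected.lower() in reply:
            counts["context"] += 1
        elif case.stale.lower() in reply:
            counts["stale"] += 1
        else:
            counts["neither"] += 1
    return counts


# Invented sample cases; real suites would use the team's own documents.
CASES = [
    ConflictCase(
        question="What is the return window?",
        context="Returns are accepted within 14 days of delivery.",
        expected="14 days",
        stale="30 days",
    ),
    ConflictCase(
        question="Is gift wrapping free?",
        context="Gift wrapping is complimentary on all orders.",
        expected="complimentary",
        stale="additional fee",
    ),
]


def demo_assistant(question: str, context: str) -> str:
    """Stub: echoes the context, except on returns, where it answers from 'memory'."""
    if "return" in question.lower():
        return "You can return items within 30 days."
    return context


report = adherence_report(demo_assistant, CASES)
print(report)
```

Tracking the `stale` count across model or prompt changes gives a simple signal of whether the assistant is drifting back toward memorized answers.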

Success in this area shifts the focus from model selection alone to robust evaluation frameworks and to prompt and architecture engineering that explicitly steers the model toward the provided context. That capability is a key differentiator for production-grade AI systems.
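On the prompt side, one common pattern is to state the priority of context over memory explicitly and to give the model a way to abstain. The helper below is a minimal sketch under that assumption. `build_grounded_prompt` and its instruction wording are hypothetical, and no particular wording is guaranteed to prevent a model from falling back on parametric knowledge, so the result should still be measured with conflict tests.

```python
from typing import List


def build_grounded_prompt(question: str, documents: List[str]) -> str:
    """Assemble a prompt that tells the model to prefer the supplied passages."""
    # Number the passages so the model can cite which one it used.
    numbered = "\n".join(
        f"[{i}] {doc.strip()}" for i, doc in enumerate(documents, start=1)
    )
    return (
        "Answer using only the numbered context passages below.\n"
        "If the passages contradict what you remember, follow the passages.\n"
        "If the passages do not contain the answer, reply exactly: NOT IN CONTEXT.\n"
        "Cite the passage number you used.\n\n"
        f"Context:\n{numbered}\n\n"
        f"Question: {question.strip()}\n"
        "Answer:"
    )


prompt = build_grounded_prompt(
    "What is the return window?",
    ["Returns are accepted within 14 days of delivery.", " Gift wrapping is complimentary. "],
)
print(prompt)
```

The explicit abstention string makes missing-context cases easy to detect downstream, which keeps them separate from genuine knowledge-conflict failures.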