Key Takeaways

- Zed's parallel agents cut refactoring time by 60% (about 45 to 18 minutes) on independent modules, but they produced inconsistent test mocks where services shared a dependency.

- The bottleneck isn't speed; it's context window limits.

What Changed — Zed's Parallel Agents Launch

Zed hit 229 points on Hacker News with its new parallel agents feature. The pitch is straightforward: launch multiple agent instances against the same codebase simultaneously, each with its own context window, working on separate branches or files. Results get integrated afterward. It's a "fork and merge" model applied to inference.

Developer Juanchi Torchia tested it against his real production setup — Claude Code + CrabTrap + Railway on a Next.js/TypeScript/PostgreSQL stack — and published the raw numbers.

What It Means For You — The Real Bottleneck Isn't Speed

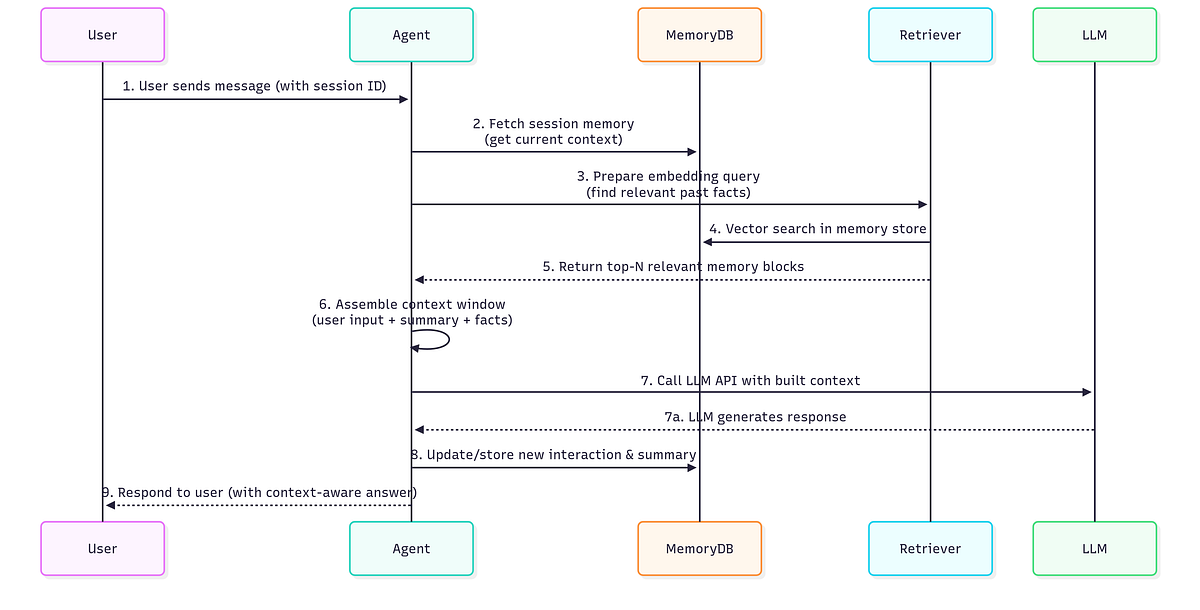

Torchia's thesis before testing: parallelization solves the wrong problem if your bottleneck is context, not speed. His data backs it up.

Scenario 1: Independent Modules — Real Speed Gains

He migrated three modules (auth, metrics, database client) from `any` to strict types. None of them depended directly on the others. Sequential Claude Code took ~45 minutes; Zed's parallel agents took ~18 minutes, a 60% reduction in wall-clock time.

This works because each agent gets a clean context window. No accumulated garbage from previous turns. Each agent sees only its module.
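The Scenario 1 figures can be checked from the two timings alone. The last term is an added assumption, not a result from the article: it supposes the three modules took roughly equal time when run sequentially and that running them in parallel adds no overhead, which gives a rough bound to compare the measured ~18 minutes against.

$$
\text{reduction} = \frac{45 - 18}{45} = \frac{27}{45} = 0.60
\qquad
\text{speedup} = \frac{45}{18} = 2.5\times
\qquad
T_{\text{ideal}} \approx \frac{45}{3} = 15\ \text{min}
$$

Under that assumption, the parallel run lands within about 3 minutes of an ideal three-way split.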

Scenario 2: Cross-Dependent Code — The Context Problem Bites

He asked two parallel agents to write tests for two services that share a validation helper. The result: inconsistent mocks. Agent 1 mocked `validatePayload` one way, Agent 2 another, and integrating the two test suites required manual reconciliation.

The parallel agents couldn't coordinate because they couldn't see each other's context. This is the fundamental limitation: parallelization doesn't expand the pipe — it just adds more pumps.

Try It Now — When to Use Parallel Agents vs. Claude Code

Use parallel agents when:

- Tasks are truly independent (no shared files, no shared dependencies)

- You're doing separate refactors across modules

- You need to generate tests for unrelated components

Stick with sequential Claude Code when:

- Tasks share utilities, types, or business logic

- You need consistent mocking or styling across files

- The task requires understanding the full codebase context
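One rough way to sanity-check the "truly independent" condition before splitting work is to compare what each module imports. The helpers below are hypothetical (not part of Zed or Claude Code) and only a heuristic: they match `from '...'` specifiers textually, so relative paths written differently, re-exports through index files, and `require()` calls can slip past. They will also list shared third-party packages, which are usually harmless; the entries worth worrying about are your own shared helpers, types, and fixtures.

```bash
# Hypothetical helpers: a rough check for shared imports between two
# TypeScript module directories. A textual heuristic, not a dependency graph.

# list_imports DIR: print sorted, unique specifiers taken from `from '<spec>'`
# clauses in .ts/.tsx files under DIR. Prints nothing if none are found.
list_imports() {
  { grep -rhoE "from ['\"][^'\"]+['\"]" --include='*.ts' --include='*.tsx' "$1" || true; } \
    | sed -E "s/^from ['\"]([^'\"]+)['\"]\$/\1/" \
    | LC_ALL=C sort -u
}

# shared_imports DIR_A DIR_B: print specifiers imported under BOTH directories.
# Any output suggests the tasks share dependencies; empty output is not proof
# of independence.
shared_imports() {
  LC_ALL=C comm -12 <(list_imports "$1") <(list_imports "$2")
}
```

Usage: `shared_imports src/auth src/payments` before handing those two directories to separate agents.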

For Claude Code users, the lesson is practical: you can approximate parallel agents by running multiple `claude` sessions in separate terminals, each with a focused prompt. You'll still hit the same cross-context issues.

```bash
# Terminal 1: refactor module A
claude "Refactor auth module to strict types"

# Terminal 2: refactor module B
claude "Refactor metrics module to strict types"
```

This works for independent tasks. For anything shared, you're better off with a single session and careful prompt engineering to stay within context limits.
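The terminal-per-task approach can also be scripted. This is a minimal sketch, not part of Torchia's setup: it uses Claude Code's `-p` (print) mode to run each prompt non-interactively in the background and then waits for all of them. `CLAUDE_BIN` and `run_parallel` are hypothetical names; `CLAUDE_BIN` exists only so the script can be dry-run with a stub. Because print-mode sessions cannot stop to ask for permission, you may need to pre-approve file edits in your Claude Code settings or via its permission flags (check `claude --help` for your version). If sessions might touch overlapping files, giving each its own checkout, for example with `git worktree add`, keeps their edits apart; it does not fix the cross-context inconsistencies described in Scenario 2.

```bash
# Hypothetical override: defaults to the real `claude` CLI.
CLAUDE_BIN="${CLAUDE_BIN:-claude}"

# run_parallel MODULE... : start one print-mode session per module in the
# background, send each session's output to refactor-<module>.log, then wait.
# Returns non-zero if any session exited with an error.
run_parallel() {
  local module pid status=0
  local -a pids=()
  for module in "$@"; do
    "$CLAUDE_BIN" -p "Refactor $module module to strict types" \
      > "refactor-$module.log" 2>&1 &
    pids+=("$!")
  done
  for pid in "${pids[@]}"; do
    wait "$pid" || status=1
  done
  return "$status"
}

# Usage (only for modules with no shared files or dependencies):
#   run_parallel auth metrics
```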

gentic.news Analysis

Torchia's results fit a pattern we've seen across the AI coding tools landscape: a gap between marketing promises and real-world workflow integration. Zed's parallel agents are a genuine innovation for specific scenarios, but they don't solve the core challenge Claude Code users face daily: context window management.

Torchia's data is particularly valuable because he measures token consumption per turn. Within a single session, input tokens grow from 8k to 22k across four turns. That growth is the real bottleneck. Parallel agents avoid it by starting each agent with a fresh context, but they introduce coordination problems.
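Only the endpoints are given here: 8k input tokens on turn 1 and 22k on turn 4. Assuming roughly linear growth between them (an assumption; the intermediate turns are not reported), the per-turn increase and the session total work out as:

$$
\Delta = \frac{22\text{k} - 8\text{k}}{4 - 1} \approx 4.7\text{k tokens per turn}
\qquad
\sum_{i=1}^{4} t_i = \frac{4\,(8\text{k} + 22\text{k})}{2} = 60\text{k}
$$

For comparison, if each fresh context cost about as much as the first turn (again an assumption), four fresh turns would total 4 × 8k = 32k input tokens.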

The takeaway for Claude Code users: invest in your CLAUDE.md file and prompt engineering to keep context lean. That's how you actually "widen the pipe" — not by adding more pumps.
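As one hedged illustration of keeping that file lean, the snippet below writes a short CLAUDE.md from the shell. Every rule in it, including the mock path, is a hypothetical example for the Next.js/TypeScript stack in this article, not Torchia's actual file, and running it overwrites any existing CLAUDE.md in the current directory. Because Claude Code loads the project's CLAUDE.md at the start of each session, it is also a single place to pin conventions such as how to mock a shared helper like `validatePayload`. That can narrow the kind of drift seen in Scenario 2, but it does not let parallel sessions see each other's edits.

```bash
# Write a deliberately short project memory file at the repo root.
# All rules below are hypothetical examples (the mock path included);
# replace them with your own project's conventions.
cat > CLAUDE.md <<'EOF'
# Project conventions
- Stack: Next.js, TypeScript (strict mode), PostgreSQL.
- Do not introduce `any`; prefer `unknown` plus type narrowing.
- Tests: import the shared validatePayload mock from test/mocks/validation.ts;
  never redefine it inside a test file.
- Only read or edit files named in the prompt unless asked otherwise.
EOF
```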