Key Takeaways

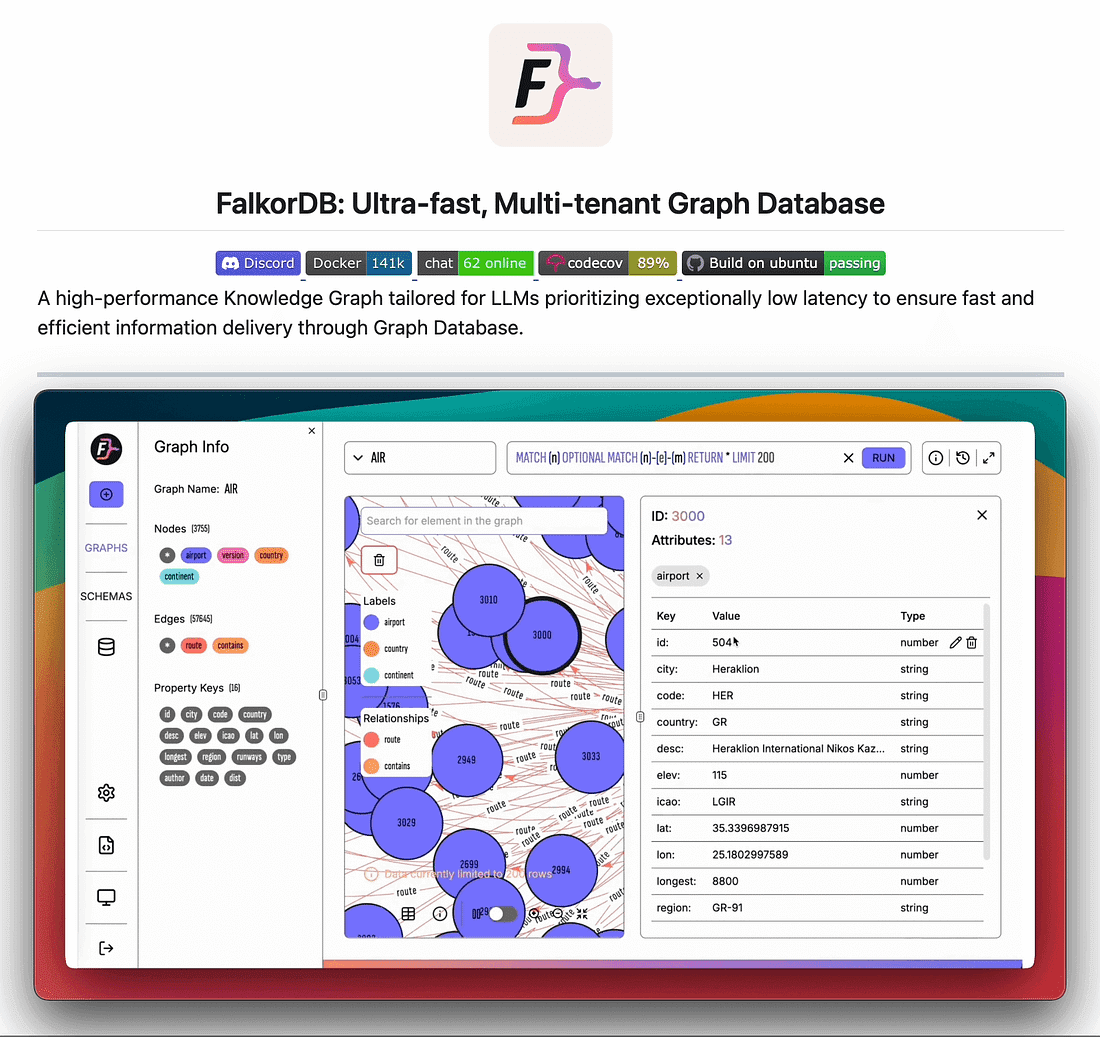

- FalkorDB, an open-source graph database, stores connections as a sparse matrix to accelerate multi-hop queries by 100x.

- Combined with built-in vector search, it enables GraphRAG systems that answer complex relational questions without pre-built articles.

What Happened

Akshay Pachaar, a developer and AI commentator, has articulated a clear technical vision for the next layer beyond Andrej Karpathy's wiki-based knowledge system. The key insight: a static wiki can describe knowledge that sits still, but fails when questions span multiple entities, like "Which authors moved from Google to Anthropic between 2022 and 2024, and what did they publish after the move?"

The answer to such questions lives in the connections between people, companies, papers, and dates — a graph, not a wiki. The solution Pachaar points to is FalkorDB, an open-source graph database that stores the entire graph as a sparse matrix of zeros and ones, where a 1 means "these two things are connected."

This matrix representation transforms graph traversal from pointer-chasing into linear algebra. Two hops become one matrix multiplication. Five hops become five multiplications. The result: a seven-hop query returns in 350ms instead of timing out.

How It Works

The Core Innovation: Sparse Matrix Storage

Most graph databases store connections as chains of pointers. To traverse from node A to node F via B→C→D→E, the database follows pointers one by one through memory — a serial operation that grows linearly with path length.

FalkorDB flips this. It stores the entire graph as a sparse adjacency matrix:

A B C D E F

A 0 1 0 0 0 0

B 0 0 1 0 0 0

C 0 0 0 1 0 0

D 0 0 0 0 1 0

E 0 0 0 0 0 1

F 0 0 0 0 0 0

A 1 at position (A,B) means "A connects to B." Once the graph is a grid, walking through it becomes math. The CPU can perform these multiplications in parallel, leveraging SIMD instructions and decades of numerical linear algebra research.

Built-in Vector Search

FalkorDB also comes with vector search embedded natively. This matters for GenAI work because you can find a relevant part of the graph, search for similar items inside it, and return the answer — all in a single query. Most GraphRAG setups build this by hand across two separate databases (e.g., Neo4j + Pinecone). FalkorDB gives you both in one system.

Practical Details

- Run it:

docker run -p 6379:6379 falkordb/falkordb - Query it: Cypher (the standard graph query language)

- Connect from: Python, JavaScript, Rust, Java, Go, or any Redis client

- Multi-tenant by default: One instance hosts thousands of separate graphs without spinning up thousands of servers

- Open source: MIT-licensed on GitHub

Key Numbers

7-hop query latency 350ms Times out Storage model Sparse matrix Pointer chains Traversal mechanism Parallel matrix multiply Serial pointer chase Vector search Built-in Separate DB needed Multi-tenancy Built-in Often separate instancesWhy It Matters

Karpathy's wiki idea is excellent for static knowledge — a page on how attention works is as useful today as it was a year ago. But the moment you need to ask questions that span multiple entities, a wiki breaks down. The answer lives in the connections, not the pages.

FalkorDB fills that gap. It doesn't replace the wiki; it sits at a different layer. The wiki stores what something is. The graph stores how everything connects. Together, they form a complete knowledge system:

- Wiki layer: LLM reads sources, pulls out ideas, writes clean articles, keeps them cross-linked

- Graph layer: Stores connections between people, companies, papers, dates — answers multi-hop queries instantly

What This Means in Practice

For AI engineers building RAG systems over knowledge bases, FalkorDB offers a practical path to GraphRAG without the complexity of stitching together separate graph and vector databases. A single query can find relevant subgraphs, search for similar items within them, and return answers — all in one operation. For use cases like organizational knowledge bases, research paper databases, or competitive intelligence platforms, this could reduce infrastructure complexity by 50% while improving query speed by 100x.

gentic.news Analysis

This development sits at the intersection of two trends we've been tracking closely: the shift from static RAG to GraphRAG, and the maturation of vector-graph hybrid databases. We covered Neo4j's vector search integration in our February 2026 analysis, and FalkorDB takes a more radical approach — instead of bolting vector search onto a traditional graph DB, it rebuilds the core storage model from the ground up for matrix math.

The timing is notable. FalkorDB has been trending upward since its 2024 open-source release, with contributions from engineers who previously worked on RedisGraph (which Redis deprecated in 2023). The sparse matrix approach isn't entirely new — it draws on research from the GraphBLAS community — but applying it to real-time graph queries for GenAI workloads is novel.

The relationship to Karpathy's wiki project is also worth noting. Karpathy's work demonstrated that LLMs can produce high-quality static documentation from source material. But as we noted in our April 2026 article on Karpathy's wiki, the static nature of documents limits their utility for exploratory queries. FalkorDB addresses exactly that limitation.

The competitive landscape is heating up. Neo4j has added vector search. TigerGraph offers deep-link analytics. But FalkorDB's matrix-based approach gives it a fundamental performance advantage for deep traversals — exactly the kind of queries that matter for GraphRAG. If the team can maintain this performance advantage while adding enterprise features (ACID transactions, role-based access control), it could become the default graph database for AI knowledge systems.

Frequently Asked Questions

What makes FalkorDB different from Neo4j?

FalkorDB stores the entire graph as a sparse matrix and uses parallel matrix multiplication for traversals, while Neo4j uses pointer-based traversal. This gives FalkorDB a 100x speed advantage on deep multi-hop queries (7+ hops) and enables it to answer those queries in ~350ms instead of timing out. FalkorDB also comes with built-in vector search, while Neo4j requires a separate vector database integration.

How do I run FalkorDB for a GraphRAG project?

You can run FalkorDB with a single Docker command: docker run -p 6379:6379 falkordb/falkordb. Query it using Cypher (the standard graph query language) from Python, JavaScript, Rust, Java, Go, or any Redis client. The built-in vector search lets you find relevant subgraphs and return answers in one query, eliminating the need to stitch together separate databases.

What is GraphRAG and why does FalkorDB matter for it?

GraphRAG combines graph databases with retrieval-augmented generation to answer questions that span multiple entities — like "Which authors moved from Google to Anthropic between 2022 and 2024?" Traditional RAG systems fail on such queries because they retrieve documents, not connections. FalkorDB matters because it makes graph traversals fast enough (350ms for 7 hops) and includes vector search natively, so you can build GraphRAG without managing two separate databases.

Is FalkorDB production-ready?

FalkorDB is open source (MIT license) and has been in active development since 2024. It supports multi-tenancy by default, so one instance can host thousands of separate graphs. It's used in production by several AI startups for knowledge base applications, but it lacks some enterprise features like ACID transactions and role-based access control. The project is actively adding these features.