Developer Akshay Pachaar has released Claude-Mem, a free, open-source plugin designed to add persistent memory functionality to Anthropic's Claude Code. The tool addresses a core limitation of current AI coding assistants by maintaining context across sessions, capturing tool usage patterns, and implementing a production-ready retrieval system that reportedly reduces token consumption by up to 10x.

Key Takeaways

- Developer Akshay Pachaar released Claude-Mem, a free plugin that adds persistent memory across Claude Code sessions.

- It captures tool usage and implements a 3-layer retrieval system that reportedly cuts token consumption by up to 10x.

What the Plugin Does

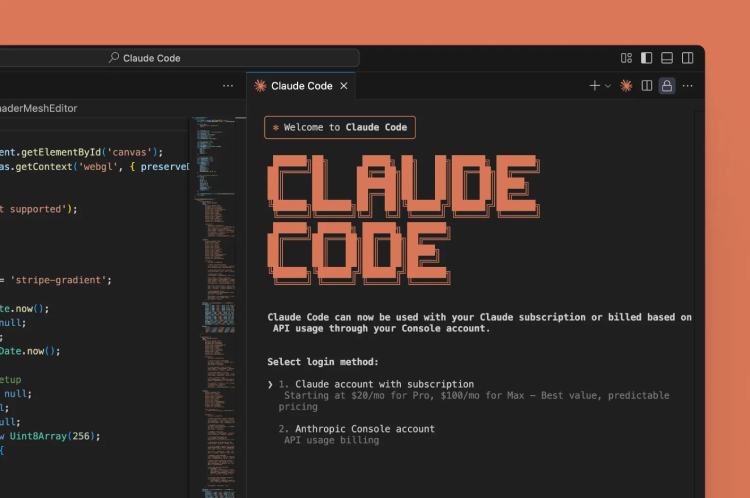

Claude-Mem operates as a plugin that sits alongside Claude Code, Anthropic's agentic coding tool that runs in the terminal. Its primary function is to persist memory between separate sessions. In standard usage, when a developer ends a Claude Code session, the accumulated context (previous code snippets, decisions, and tool calls) is not carried over into a fresh session by default. Claude-Mem captures this information, allowing a user to "start where you left off" in a new session.

A key technical feature is its ability to capture tool usage. As developers use Claude Code to call linters, run tests, or execute shell commands via tools, Claude-Mem logs these interactions. This creates a memory of the developer's workflow and the model's responses, which can be referenced later to avoid repeating steps or explanations.
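To make the idea of logging tool interactions concrete, here is a minimal sketch of a local tool-usage log. It is not Claude-Mem's actual implementation: the table name `tool_events`, the functions `open_store`, `record_tool_use`, and `recall`, and the choice of SQLite are all assumptions made for illustration.

```python
import json
import sqlite3
import time


def open_store(path=":memory:"):
    """Open (or create) a hypothetical local store for tool events."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS tool_events ("
        "id INTEGER PRIMARY KEY, session TEXT, tool TEXT, "
        "input TEXT, output TEXT, ts REAL)"
    )
    return conn


def record_tool_use(conn, session, tool, tool_input, output):
    """Append one tool call (e.g. a shell command and its result) to the log."""
    conn.execute(
        "INSERT INTO tool_events (session, tool, input, output, ts) "
        "VALUES (?, ?, ?, ?, ?)",
        (session, tool, json.dumps(tool_input), output, time.time()),
    )
    conn.commit()


def recall(conn, tool, limit=5):
    """Return the most recent calls to a given tool, newest first."""
    rows = conn.execute(
        "SELECT session, input, output FROM tool_events "
        "WHERE tool = ? ORDER BY id DESC LIMIT ?",
        (tool, limit),
    ).fetchall()
    return [(s, json.loads(i), o) for s, i, o in rows]
```

In a later session, a call such as `recall(conn, "Bash")` would surface the last few shell commands and their results, which is the kind of workflow memory the announcement describes.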

Technical Architecture: The 3-Layer Retrieval System

The plugin's efficiency stems from its production-ready, 3-layer retrieval system. While the source tweet does not detail the exact layers, a typical architecture for such a system might involve:

- Embedding & Vector Search: Converting code snippets, error messages, and tool outputs into vector embeddings for fast similarity search.

- Metadata Filtering: Indexing sessions by project, file type, or timestamp to narrow retrieval scope.

- Relevance Ranking: A final layer that scores and ranks retrieved memories based on the current query's context to return the most useful prior interactions.

This structured approach to retrieving past context is what enables the claimed up to 10x reduction in token usage. Instead of a developer manually re-pasting large blocks of code or re-explaining complex project architecture in each new session, Claude-Mem can inject the relevant prior context automatically, saving the tokens that would have been spent on re-transmission and re-explanation.
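The three hypothetical layers listed above can be sketched in a few lines. This is an illustration of the general pattern only, not Claude-Mem's code: the `Memory` record, the `retrieve` function, the recency weighting, and the bag-of-words `embed` function (a toy stand-in for a real embedding model) are all assumptions.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    project: str
    timestamp: float  # seconds since epoch


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9_]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, memories, project, now, top_k=3, half_life=86400.0):
    # Metadata filtering: keep only memories from the current project.
    candidates = [m for m in memories if m.project == project]

    query_vec = embed(query)
    scored = []
    for m in candidates:
        # Vector search: similarity between the query and the stored memory.
        sim = cosine(query_vec, embed(m.text))
        if sim == 0.0:
            continue
        # Relevance ranking: blend similarity with an exponential recency decay.
        recency = 0.5 ** ((now - m.timestamp) / half_life)
        scored.append((sim * (0.5 + 0.5 * recency), m))

    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [m for _, m in scored[:top_k]]
```

Only the top-ranked memories would be injected into the prompt, which is where the token savings in a design like this would come from. A production system would replace `embed` with a real embedding model and a vector index.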

Availability and Integration

Claude-Mem is described as 100% open-source, with its codebase available on GitHub (link provided in the source tweet). Being free and open-source significantly lowers the barrier to adoption for individual developers and teams. Integration is likely achieved through Claude Code's plugin system, though the specific installation steps are not detailed in the brief announcement.

Why Persistent Memory Matters for AI Coding

The lack of persistent, project-aware memory is a well-known friction point with AI coding assistants. Developers often work on the same codebase across multiple days or sessions. The need to repeatedly re-establish context with the AI assistant is inefficient and costly, especially as context windows grow and token usage adds up.

Claude-Mem tackles this directly. By making the AI assistant "remember" past work, it moves closer to the ideal of a persistent pair programmer that understands the full history of a project, not just the last 200,000 tokens of the current conversation.

gentic.news Analysis

This release taps directly into the accelerating trend of community-driven tooling built atop foundational AI models. While Anthropic, OpenAI, and Google refine their core models, a vibrant ecosystem of developers is creating the specialized plugins and workflows that define real-world utility. Claude-Mem follows a pattern we've seen with tools like aider and Cursor, which wrap LLMs with additional capabilities tailored for developers. It represents a productization of the RAG (Retrieval-Augmented Generation) pattern for a highly specific, high-value use case: software development.

The focus on token efficiency is particularly shrewd. As context windows expand into the millions of tokens, the cost and latency of processing full context histories become significant. A smart retrieval layer that serves only the relevant slices of memory is a more scalable and economical solution than brute-force feeding an entire project history into every prompt. If the 10x token savings claim holds in practice, it would translate to direct cost reduction for developers who pay for Claude Code usage by the token through the API.

However, the success of such a tool hinges on the accuracy and relevance of its retrieval. Hallucinated or mis-retrieved code context could lead to confusing or incorrect suggestions. The "production-ready" claim suggests the developer has considered this, but the real test will be in widespread community adoption and stress-testing across diverse codebases. This development underscores that for AI coding assistants, the next frontier of improvement may lie as much in the orchestration and memory systems built around them as in the raw capabilities of the underlying models themselves.

Frequently Asked Questions

How do I install the Claude-Mem plugin?

The plugin is open-source and available on GitHub. Installation likely involves cloning the repository and following setup instructions to integrate it with your Claude Code environment, potentially as a local server or extension. Check the project's README for specific steps.

Does Claude-Mem work with regular Claude Chat, or only Claude Code?

The announcement specifically mentions making "Claude Code" more powerful, suggesting the plugin is designed for Anthropic's coding tool rather than the general chat product. Its features, like capturing tool usage, are most relevant for a coding assistant. It may not be compatible with the general-purpose Claude chat interface.

What does "saves up to 10x tokens" mean?

It means the plugin's retrieval system can dramatically reduce the number of tokens you need to send to Claude Code in a new session. Instead of you manually copying and pasting old code or explanations (which consumes tokens), the plugin automatically fetches and injects only the relevant prior context. A 10x reduction means sending one tenth of the original tokens, so in the best case the token count of your prompts drops by up to 90%.
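The relationship between an "Nx" reduction and a percentage saving is simple arithmetic, sketched below. The function names and the 50,000-token baseline are hypothetical values chosen for illustration, not figures from the announcement.

```python
def savings_fraction(reduction_factor):
    """Fraction of tokens saved by an N-fold reduction (N >= 1)."""
    if reduction_factor < 1:
        raise ValueError("reduction_factor must be at least 1")
    return 1.0 - 1.0 / reduction_factor


def tokens_after(baseline_tokens, reduction_factor):
    """Tokens still sent after an N-fold reduction, rounded to whole tokens."""
    return round(baseline_tokens / reduction_factor)


# Hypothetical example: a 50,000-token context re-sent every session.
baseline = 50_000
remaining = tokens_after(baseline, 10)   # 5,000 tokens
saved = savings_fraction(10)             # 0.9, i.e. 90%
```

Note that "up to 10x" is a ceiling: a 2x reduction, for example, would save only 50%.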

Is my code sent to an external server when using Claude-Mem?

As an open-source plugin, it can likely be run entirely locally. The memory storage and retrieval processes would happen on your machine or within your controlled infrastructure, keeping your code private. Always verify this in the project's documentation and code.