Researchers from ETH Zurich and Anthropic have developed an automated AI system that can link anonymous social media accounts to real-world identities using only raw text posts, re-identifying 67% of targeted users at 90% precision for just $1-4 per person. The researchers report roughly a 450x improvement over a previous deanonymization method, a result that fundamentally challenges the viability of online pseudonymity.

What the System Does

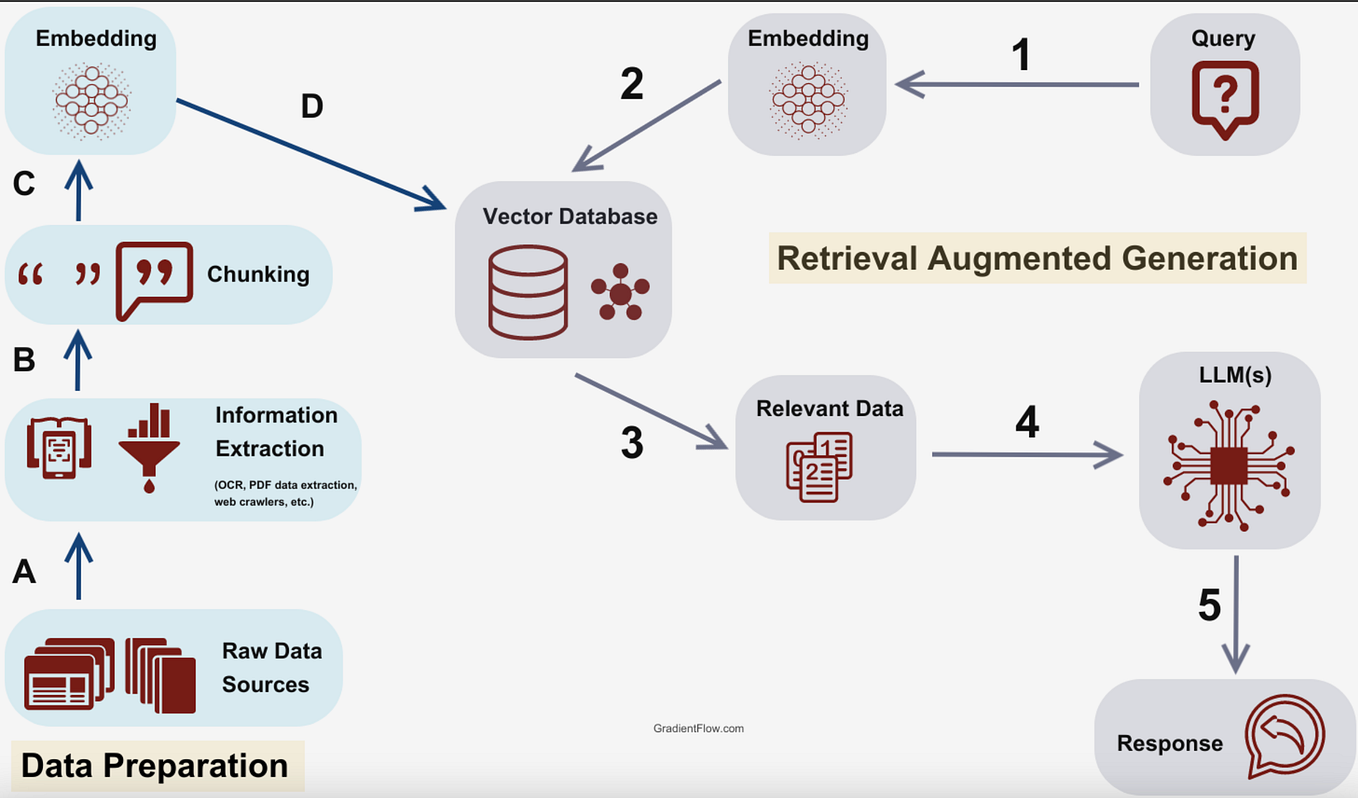

The attack pipeline uses three publicly available AI models in sequence:

- GPT-5.2 for reasoning: Analyzes a user's posting history to build a detailed psychological and demographic profile

- Grok 4.1 Fast for shortlisting: Searches the internet for potential matches based on the profile

- Gemini for matching: Compares the anonymous profile against candidate real identities to make final matches

The system operates fully automatically, with no human intervention, and works exclusively on unstructured text. It does not need structured metadata, IP addresses, or browser fingerprints.

Key Results: The Numbers That Matter

| Platform | Test setup | Re-identification rate | Precision | Cost per person | Prior-method rate |
| --- | --- | --- | --- | --- | --- |
| Hacker News | 338 users with LinkedIn links | 67.0% | 90% | $1-4 | 0.1% |
| Reddit | 5,000 users, split 1-year histories | 67.3% | 90% | Not specified | 0.4% |
| Hacker News | 1-in-10,000 match scenario | 9.0% | 90% | Not specified | Near 0% |

The 450x improvement: The researchers compared their AI system against a method based on the famous Netflix Prize attack (which used collaborative filtering to re-identify users). Where the traditional method found 0.1% of people, the AI system found 45.1% at 99% precision.
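To make figures such as "67% at 90% precision" concrete: the matcher attaches a confidence to each proposed identity, and the reported rate is the share of all targets correctly identified when only guesses confident enough to keep precision at the stated level are accepted. The following is a minimal sketch of that calculation on made-up data. The function name and the toy scores are illustrative assumptions, not the researchers' evaluation code.

```python
from typing import List, Tuple


def recall_at_precision(
    guesses: List[Tuple[float, bool]],  # (confidence, guess_is_correct)
    total_targets: int,                 # all targets, including those with no guess
    min_precision: float,
) -> float:
    """Best recall over confidence cutoffs whose precision is >= min_precision.

    Simplification: tied confidences are treated as separate cutoffs.
    """
    ranked = sorted(guesses, key=lambda g: g[0], reverse=True)
    best_recall = 0.0
    correct = 0
    for accepted, (_, is_correct) in enumerate(ranked, start=1):
        correct += int(is_correct)
        precision = correct / accepted
        if precision >= min_precision:
            best_recall = max(best_recall, correct / total_targets)
    return best_recall


# Hypothetical example: 10 targets, the matcher offers a guess for 6 of them.
toy_guesses = [
    (0.99, True), (0.95, True), (0.90, True),
    (0.80, False), (0.70, True), (0.60, False),
]
print(recall_at_precision(toy_guesses, total_targets=10, min_precision=0.90))  # 0.3
print(recall_at_precision(toy_guesses, total_targets=10, min_precision=0.75))  # 0.4

# The headline ratio from the article: 45.1% found versus 0.1% for the older method.
print(round(45.1 / 0.1))  # 451, i.e. roughly 450x
```

Demanding higher precision forces the matcher to abstain more often, which is why the toy recall falls from 0.4 to 0.3 as the precision requirement rises from 75% to 90%.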

The correlation with activity: Reddit users who discussed 10 or more movies across different communities were identified 48.1% of the time at 90% precision. The more distinctive content a user posts, the more vulnerable they become.

How the Attack Works in Practice

The researchers collected 338 Hacker News users who had publicly linked their LinkedIn profiles, then stripped all identifying information from their accounts—removing usernames, profile pictures, and any explicit personal details. The AI system was then tasked with re-linking these anonymized accounts to their corresponding LinkedIn profiles.

The system works by:

- Profile extraction: GPT-5.2 reads through a user's posts and comments, identifying interests, writing style, technical expertise, opinions, and implicit demographic clues

- Search generation: The profile is converted into search queries that look for real people matching the characteristics

- Candidate retrieval: Grok 4.1 Fast performs web searches and returns potential matches

- Verification: Gemini compares the writing style and content between the anonymous account and candidate real identities
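The data flow of the stages above can be sketched as three pluggable functions plus a confidence cutoff. This is only a structural illustration on synthetic strings: the names `link_account`, `toy_profile`, `toy_candidates`, and `toy_score` are hypothetical, no model or web search is called, and the word-overlap scoring stands in for steps the article attributes to language models.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Set


@dataclass
class Match:
    candidate: str
    confidence: float


def link_account(
    posts: List[str],
    extract_profile: Callable[[List[str]], Set[str]],
    find_candidates: Callable[[Set[str]], List[str]],
    score_match: Callable[[Set[str], str], float],
    threshold: float,
) -> Optional[Match]:
    """Run profile extraction, candidate retrieval, and verification in order.

    Returns the best-scoring candidate, or None (abstain) if nothing
    reaches the confidence threshold.
    """
    profile = extract_profile(posts)
    candidates = find_candidates(profile)
    scored = [Match(c, score_match(profile, c)) for c in candidates]
    if not scored:
        return None
    best = max(scored, key=lambda m: m.confidence)
    return best if best.confidence >= threshold else None


# --- Toy stand-ins on synthetic data (assumptions for illustration only) ---
SYNTHETIC_BIOS = [
    "rust compiler engineer in zurich",
    "python data analyst in london",
]


def toy_profile(posts: List[str]) -> Set[str]:
    # Stand-in for profile extraction: the set of lowercase words used.
    return {word for post in posts for word in post.lower().split()}


def toy_candidates(profile: Set[str]) -> List[str]:
    # Stand-in for web search: always returns the same synthetic shortlist.
    return list(SYNTHETIC_BIOS)


def toy_score(profile: Set[str], candidate: str) -> float:
    # Stand-in for verification: Jaccard overlap between word sets.
    words = set(candidate.lower().split())
    union = profile | words
    return len(profile & words) / len(union) if union else 0.0


posts = ["I maintain a rust compiler plugin", "moved to zurich last year"]
print(link_account(posts, toy_profile, toy_candidates, toy_score, threshold=0.2))
print(link_account(posts, toy_profile, toy_candidates, toy_score, threshold=0.5))  # None
```

The abstain branch is the design point worth noting: raising the threshold trades fewer identifications for fewer wrong ones, which is how a system of this kind can report results at a fixed precision.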

All components use standard APIs available to any developer. The total computational cost ranges from $1 to $4 per identification attempt.
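A quick back-of-the-envelope calculation shows what the $1-4 figure implies at scale. The sketch below assumes every attempt costs the same whether or not it succeeds, and it applies the 67% Hacker News success rate to derive a cost per successful identification; both are simplifying assumptions, and the function names are illustrative.

```python
from typing import Tuple


def cost_range(num_accounts: int, low: float = 1.0, high: float = 4.0) -> Tuple[float, float]:
    """Total cost bounds in dollars for attempting num_accounts identifications."""
    return num_accounts * low, num_accounts * high


def cost_per_success(cost_per_attempt: float, success_rate: float) -> float:
    """Average spend per correct identification, assuming failed attempts cost the same."""
    return cost_per_attempt / success_rate


# The 338-user Hacker News experiment at $1-4 per attempt.
print(cost_range(338))  # (338.0, 1352.0)

# Cost per successful identification at a 67% success rate.
print(round(cost_per_success(1.0, 0.67), 2))  # 1.49
print(round(cost_per_success(4.0, 0.67), 2))  # 5.97
```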

Why This Matters: The End of Pseudonymity

The researchers state unequivocally: "Pseudonymity does not provide meaningful protection online." They further warn: "Users who post under persistent usernames should assume that adversaries can link their accounts to real identities."

This isn't a theoretical vulnerability requiring nation-state resources. The attack uses:

- Publicly available AI models (GPT-5.2, Gemini, Grok 4.1 Fast)

- Standard APIs anyone can access

- Less than $5 per identification

Immediate applications:

- Governments tracking activists or dissidents

- Corporations building detailed consumer profiles for hyper-targeted advertising

- Stalkers or harassers finding real identities of pseudonymous users

- Background check companies augmenting their databases

Limitations and Caveats

The system works best on users with substantial posting histories (dozens to hundreds of posts). Users who post very little, or who post exclusively on narrow technical topics without personal opinions, are harder to identify. However, the system still re-identified 9% of targets at 90% precision when only 1 in 10,000 users in the database had a possible match, which shows it can find needles in haystacks.
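The 1-in-10,000 result is notable because of base rates: when almost no queried account has a true match, even a tiny false-match rate would swamp the correct answers. The sketch below works out how rarely a matcher may wrongly claim a match and still hold 90% precision. It assumes one reading of the scenario (1 in 10,000 queried accounts has a true match, and each non-matching account produces at most one false claim); the function name is illustrative.

```python
def max_false_match_rate(prior: float, recall: float, precision: float) -> float:
    """Largest per-account false-match rate compatible with the target precision.

    prior:     fraction of queried accounts that truly have a match
    recall:    fraction of those true matches the system finds
    precision: required fraction of claimed matches that are correct
    """
    true_positives = prior * recall                       # per queried account
    allowed_false_positives = true_positives * (1 - precision) / precision
    return allowed_false_positives / (1 - prior)          # spread over non-matching accounts


rate = max_false_match_rate(prior=1 / 10_000, recall=0.09, precision=0.90)
print(f"{rate:.2e}")  # 1.00e-06
```

Under these assumptions the matcher can afford roughly one false claim per million non-matching accounts, which is why abstaining on uncertain cases matters so much in this setting.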

The research hasn't yet been peer-reviewed, though it comes from reputable institutions (ETH Zurich and Anthropic). The methodology appears robust, using real-world data from Hacker News, Reddit, and LinkedIn.

gentic.news Analysis

This research represents a watershed moment for online privacy, effectively rendering persistent pseudonymity obsolete. The combination of three different foundation models—each from competing AI labs (OpenAI's GPT-5.2, Google's Gemini, and xAI's Grok 4.1 Fast)—creates a system more powerful than any single model could achieve alone. This aligns with the trend we've covered of emergent capabilities through model composition, where chaining specialized models produces results exceeding individual model performance.

The timing is particularly significant given Anthropic's recent focus on AI safety and alignment research. For Anthropic researchers to publish such a powerful attack vector suggests they view the privacy implications as sufficiently urgent to warrant disclosure despite potential misuse. This follows their pattern of transparent risk assessment we documented in their previous work on model evaluation frameworks.

The $1-4 cost makes this attack accessible to virtually any motivated actor, from corporate marketing departments to individual stalkers. This democratization of surveillance capability mirrors what we saw with deepfake technology in 2024-2025, where tools that once required specialized expertise became available to anyone with a credit card.

From a technical perspective, the most concerning aspect is the system's ability to work on raw text alone. Previous deanonymization attacks typically required structured data, metadata, or behavioral patterns. The fact that GPT-5.2 can extract such rich profiles from unstructured discourse suggests foundation models have developed sophisticated theory of mind capabilities—they're not just processing language but inferring personality traits, background, and identity markers.

This development will likely accelerate several trends we've been tracking: increased adoption of ephemeral messaging (messages that delete automatically), growth of federated learning approaches that keep data local, and renewed interest in differential privacy techniques for social platforms. It also raises urgent questions about whether platforms should implement automatic writing style obfuscation or provide tools for users to periodically change their pseudonyms while maintaining community reputation.

Frequently Asked Questions

How can I protect myself from this type of AI deanonymization?

The most effective protection is to avoid using persistent pseudonyms across multiple platforms. Consider creating separate identities for different types of content (technical discussions vs. personal interests vs. political opinions). Use different writing styles and vocabulary for each identity. Limit the personal information, including indirect clues, that you reveal in any single account. For high-risk activities, use truly anonymous platforms with no persistent identity, or regularly create new accounts.

Does this mean all anonymous posting is now useless?

For users with extensive posting histories under a single persistent username, yes: assume your identity can be discovered for roughly $1-4. For new accounts with limited activity, some protection remains, but the AI system still re-identified 9% of targets at 90% precision in the 1-in-10,000 match scenario. The researchers' conclusion is clear: pseudonymity (a consistent alternate identity) provides minimal protection, though complete anonymity (no persistent identity) remains somewhat effective.

Which platforms are most vulnerable to this attack?

Platforms where users build reputation over time through extensive posting are most vulnerable: Reddit, Hacker News, specialized forums, and even comment sections on news sites. Platforms with shorter-form, less substantive content (like some social media) may offer slightly more protection, but the AI's ability to extract patterns from even limited text makes all persistent identities vulnerable.

Are there legal protections against this type of identification?

Most jurisdictions have weak protections against this type of inference-based identification. Since the system uses publicly available information and doesn't hack into systems, it likely falls into legal gray areas. The European Union's AI Act may eventually regulate such uses, but current laws in most countries don't specifically prohibit compiling publicly available information to infer identities.

Will AI companies restrict access to prevent this misuse?

The models used (GPT-5.2, Gemini, Grok 4.1 Fast) are already subject to usage policies that prohibit harassment and stalking. However, enforcement is challenging since the same capabilities can be used for legitimate purposes like academic research or finding experts on topics. This creates the same dilemma we've seen with other dual-use technologies: restricting access harms legitimate uses while failing to restrict enables harmful ones.