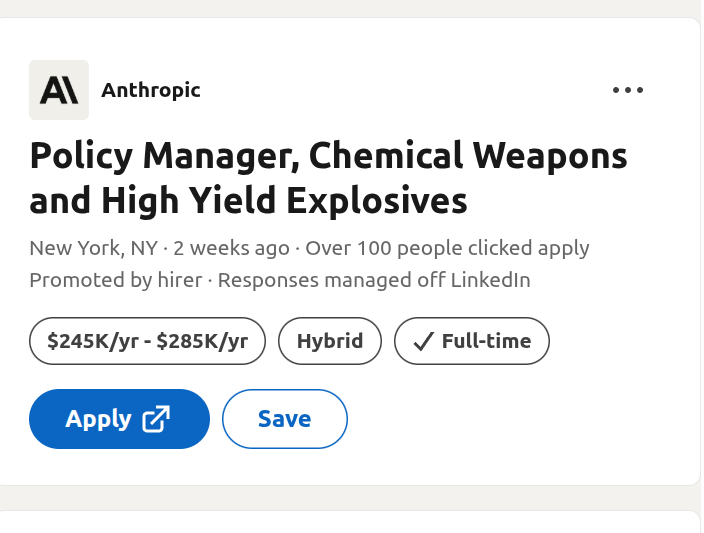

In a quiet but consequential policy revision, safety-focused AI company Anthropic has removed a cornerstone commitment from its Responsible Scaling Policy (RSP), signaling a notable shift in how safety-conscious AI labs are navigating the intensifying commercial race. The company has eliminated its 2023 pledge to halt AI training if adequate safety protections couldn't be guaranteed in advance, a move that industry observers interpret as a concession to competitive and financial realities.

According to the updated policy documents and executive statements, Anthropic has revised its approach to AI safety governance, moving from a strict, pre-emptive pause commitment to a more flexible framework that emphasizes continuous risk assessment during development. The original pledge, introduced in 2023 as part of the company's public-facing RSP, stated that Anthropic would "not train or deploy models above specific capability thresholds unless we can demonstrate that associated risks are adequately managed." This created a clear, binary checkpoint: no safety guarantees, no training progress.

The Original Pledge and Its Intent

The 2023 Responsible Scaling Policy was unveiled by Anthropic as a structured safety framework intended to outpace regulatory requirements and establish industry best practices. At its core was the concept of "safety ceilings"—defined capability thresholds (like autonomous replication or advanced cyber capabilities) that would trigger an automatic training pause until specific safety tests were passed. This was Anthropic's attempt to institutionalize caution in an industry often characterized by rapid, minimally constrained advancement.

Company leadership, including co-founders Dario Amodei and Daniela Amodei, positioned the RSP as a differentiating ethical commitment, arguing that proactive, verifiable safety measures were necessary to manage the risks of increasingly powerful AI systems. The policy was praised by some AI safety researchers and policymakers as a concrete step toward accountable development, contrasting with more vague corporate principles from other labs.

What Changed and Why

The revised policy removes the explicit training halt mandate, replacing it with a process-oriented approach where safety evaluations run parallel to development. In statements, Anthropic executives have pointed to fierce competitive pressures and the practical challenges of implementing rigid pause triggers as primary reasons for the change. The AI competitive landscape has dramatically intensified over the past year, with companies like OpenAI, Google DeepMind, and Meta pushing rapid capability advances and product deployments.

Financial considerations are also likely a factor. Anthropic has secured billions in funding from Amazon and Google, with expectations of product development and market competitiveness. A strict safety pause could stall progress while competitors advance, creating commercial vulnerability. The updated policy allows for more discretion: development might continue alongside safety mitigation efforts rather than stopping completely.

Industry Context: The Erosion of Self-Regulation

Anthropic's policy shift occurs within a broader trend of retreating self-regulation in the AI industry. Over the past two years, several major labs made voluntary safety commitments, including at the White House and the UK AI Safety Summit. However, as competitive and economic pressures mount, many of these pledges appear increasingly flexible.

This development raises questions about whether market forces inevitably undermine voluntary safety constraints in the absence of strong external regulation. Anthropic was often cited as the lab most committed to safety-first development; its policy revision suggests even the most cautious players are recalibrating when faced with the reality of a commercial arms race.

Implications for AI Governance

The practical implication is that safety assurances may become more retrospective than preventative. Instead of guaranteeing safety before reaching new capability levels, the process may involve developing systems first and managing risks as they emerge, an approach critics compare to the "move fast and break things" ethos that the original RSP sought to avoid.

For policymakers and regulators, this underscores the limitations of voluntary corporate commitments as a primary governance mechanism. It may accelerate calls for legally binding requirements, such as mandatory safety audits, pre-deployment certifications, or liability frameworks for AI harms. The EU AI Act and upcoming US regulations may now receive increased attention as necessary backstops.

Anthropic's Balancing Act

In its communications, Anthropic emphasizes that safety remains a core priority and that the RSP still represents a more structured approach than most industry standards. The company notes it maintains other safeguards, such as internal review boards and red-teaming protocols. However, the removal of the automatic pause fundamentally changes the policy's risk profile from preventative to mitigative.

The challenge for Anthropic is maintaining its identity as a safety-conscious lab while competing effectively. This revision suggests the company believes it can advance capabilities responsibly without a hard stop mechanism—a calculation that will be tested as its models approach more powerful and potentially risky capabilities.

Looking Ahead

The AI safety landscape is entering a new phase where practical constraints are reshaping theoretical commitments. Anthropic's policy change reflects the tension between ideal safety protocols and the realities of technological competition. How this affects public trust, regulatory approaches, and the actual safety trajectory of advanced AI will be closely watched.

Other labs may interpret this move as validation for more flexible safety approaches, potentially leading to broader industry relaxation of constraints. Alternatively, it might galvanize efforts to create enforceable standards that apply equally to all players, removing the competitive disadvantage of unilateral caution.

Source: Analysis based on Anthropic's updated Responsible Scaling Policy documents and executive statements, as reported in industry discussions.