A brief update from Anthropic indicates a significant, quiet shift in how artificial intelligence safety research itself is conducted. According to the company, its automated alignment researchers are now "already outperforming human scientists" and are "discovering ideas that humans would not have considered, thus broadening our exploration space in science."

What Happened

Anthropic, the AI safety and research company behind the Claude models, says that its internally developed AI systems for alignment research (the study of how to make AI systems safe, controllable, and aligned with human intent) are achieving better results than their human counterparts. The claim has two parts: these AI researchers are more effective than human scientists, and they are generative, proposing novel scientific directions and hypotheses that fall outside typical human conceptual frameworks.

This suggests the tools are not merely automating literature review or data analysis but are actively participating in the creative, hypothesis-forming layer of scientific discovery for AI safety.

Context

This development is a direct evolution of Anthropic's long-stated research direction. The company has consistently emphasized mechanistic interpretability and scalable oversight as pillars of its alignment strategy. Creating AI assistants that can help humans solve alignment problems has been a stated goal. This update implies that goal is being realized, with the assistants now operating at a level that exceeds human baseline performance in specific research domains.

The concept of using AI to accelerate AI safety research is not new, but a public claim of superhuman performance in the core, creative scientific process marks a notable milestone. It shifts the role of AI from an analysis tool to an active lead researcher capable of expanding the field's intellectual frontier.

gentic.news Analysis

This announcement, while sparse on technical detail, points to a profound inflection point in the AI feedback loop. If validated, it means the primary tool for understanding and controlling advanced AI systems is becoming those very systems themselves. This creates a recursive dynamic: we are using increasingly capable AI to research the alignment of even more capable AI. The key question shifts from "Can we solve alignment?" to "Can we trust and interpret the solutions proposed by an AI that operates outside human cognitive patterns?"

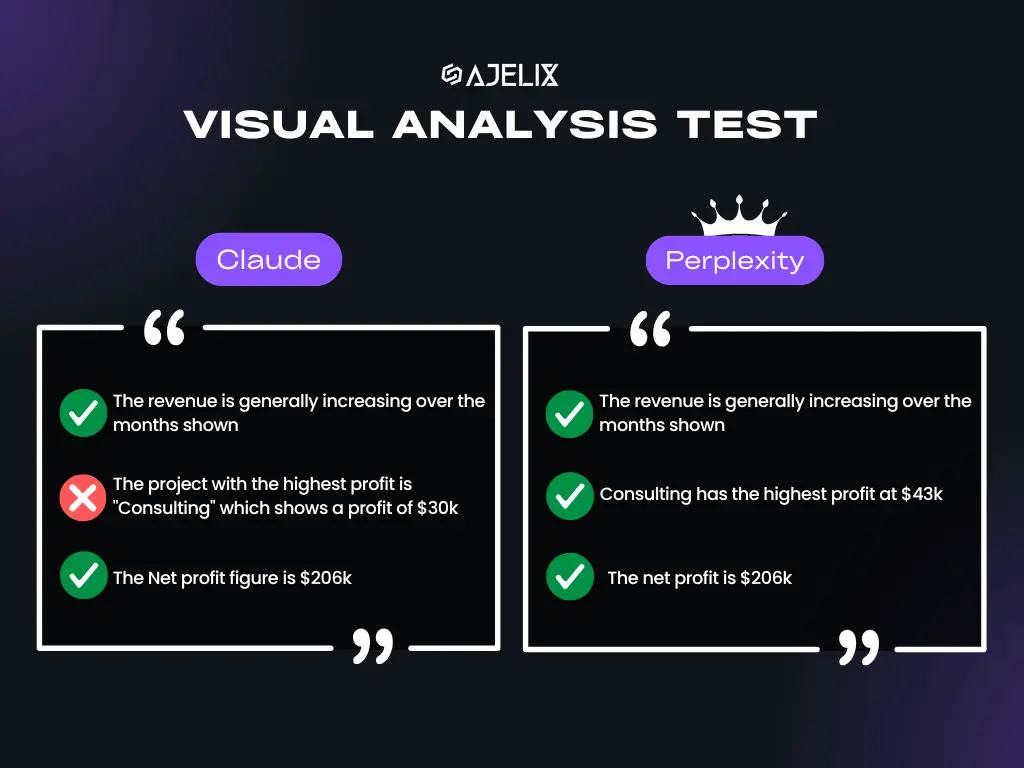

This development aligns with a broader industry trend toward AI-for-Science (AI4S) and automated research, but uniquely applies it to the meta-problem of AI's own safety. It also follows Anthropic's established focus on constitutional AI and model self-critique. That history suggests these internal "researchers" may be highly specialized instances or fine-tunes of Claude, trained on vast corpora of alignment literature and equipped with advanced reasoning and simulation capabilities. The lack of published benchmarks or peer-reviewed methodology is typical for Anthropic's early-stage announcements, but it leaves the community awaiting concrete evidence of what "outperforming" entails: faster literature synthesis, more robust mathematical proofs, or the generation of verifiably novel safety paradigms.

For practitioners, the immediate implication is the potential for dramatically accelerated progress in interpretability and robustness research. The long-term implication is more philosophical: it may require new frameworks for scientific collaboration with non-human intelligence, in which the human researcher does not fully understand the provenance of an AI partner's insights.

Frequently Asked Questions

What are Anthropic's automated alignment researchers?

They are AI systems, likely based on or related to Anthropic's Claude model family, that have been specifically tasked with conducting research into AI alignment and safety. Their function is to generate hypotheses, design experiments, analyze results, and propose new directions for making AI systems safer and more controllable.

How can an AI outperform a human scientist?

The claim likely refers to metrics such as the volume of testable hypotheses generated, the speed of iterating through theoretical frameworks, or the ability to identify complex, non-intuitive patterns in large datasets of AI behavior that humans might miss. It does not necessarily mean the AI possesses broader understanding, but that it excels at specific, measurable research tasks within the constrained domain of alignment science.

What does "discovering ideas humans would not have considered" mean?

Human scientific exploration is bounded by cognitive biases, existing paradigms, and the limits of intuition. An AI, trained on a vast and diverse corpus and not subject to the same biases, can combine concepts in novel ways or explore parameter spaces that a human researcher might intuitively dismiss or never conceive of. This can broaden the "exploration space" of possible solutions to alignment problems.

Has Anthropic published papers from this AI research?

The source announcement does not mention specific publications. Typically, Anthropic releases significant research findings as arXiv papers or technical reports. This tweet appears to be a high-level status update on an internal capability. The scientific community will be looking for subsequent publications that detail the methods and present evidence for the claims of novel discovery and superhuman performance.