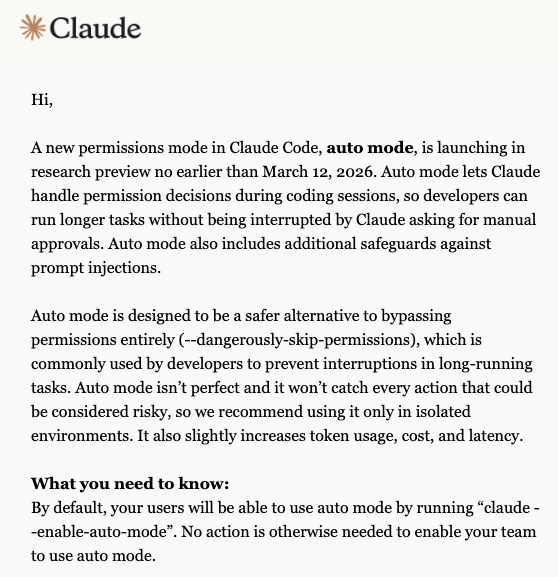

Anthropic is advancing its push into agentic AI by expanding the preview of Claude Code, its command-line coding tool. The core change is an enhanced "Auto Mode," a feature that lets Claude act autonomously on a user's computer (editing files, running commands, managing processes) once a built-in safety classifier has judged the task safe.

This move represents a concrete step beyond conversational AI and into the realm of AI agents that can perform multi-step operations on a real system with minimal human intervention. It is the latest release in a growing wave of "personal agent" applications designed to offload complex, time-consuming digital work.

What's New: From Assistant to Autonomous Agent

The expanded Auto Mode transforms Claude Code from a tool that suggests code or commands into an agent that can execute them. According to the report, the system works in three stages:

- Task Analysis & Safety Classification: A user describes a task (e.g., "refactor this module," "set up a local dev environment for project X"). Claude Code analyzes the requested actions and runs them through a safety classifier.

- Autonomous Execution: If the task is classified as safe, Claude Code can proceed to execute the necessary terminal commands, file edits, and system interactions autonomously, without requiring step-by-step approval from the user.

- Hands-Off Operation: This enables a "set it and forget it" workflow for approved tasks, where the AI agent can work in the background to accomplish a defined objective.
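
The classify-then-execute flow described in the three stages above can be sketched in a few lines of Python. This is a toy illustration only, not Anthropic's implementation: the deny-list in `UNSAFE_MARKERS`, the `classify_safe` function, and the `run_gated` helper are all hypothetical names invented for this example, and a real safety classifier would be far more sophisticated than substring matching.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical deny-list standing in for a real safety classifier.
UNSAFE_MARKERS = ("rm -rf", "sudo ", "mkfs")


@dataclass
class StepResult:
    command: str
    executed: bool
    reason: str


def classify_safe(command: str) -> bool:
    """Toy classifier: a command is 'safe' if it contains no risky marker."""
    return not any(marker in command for marker in UNSAFE_MARKERS)


def run_gated(commands: List[str], execute: Callable[[str], None]) -> List[StepResult]:
    """Run each command only if the classifier approves; record the rest as blocked."""
    results = []
    for command in commands:
        if classify_safe(command):
            execute(command)
            results.append(StepResult(command, True, "classified safe"))
        else:
            results.append(StepResult(command, False, "blocked, needs manual approval"))
    return results


if __name__ == "__main__":
    executed_log: List[str] = []  # stands in for a real shell executor
    plan = ["ls src", "rm -rf /tmp/build", "pytest -q"]
    for result in run_gated(plan, executed_log.append):
        print(result)
```

In this sketch the executor is injected as a callable, so the gate can be exercised without touching a real shell; the blocked step falls back to manual approval rather than aborting the whole plan, which is one possible design choice among several.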

This capability builds directly on the preview of Claude Code's Auto Mode that Anthropic launched in late March 2026, which introduced the initial safety-gated autonomous execution framework.

Technical Context: Built on MCP and Claude Opus

Claude Code is not a standalone application but an ecosystem. Its agentic capabilities are powered by:

- Model Context Protocol (MCP): An open protocol, introduced by Anthropic, that allows AI models to connect to and interact with external data sources and tools. Claude Code uses MCP servers to gain context and take actions within a developer's environment. As we covered in "Stop Debugging MCP Servers Through Claude Code. Use This Inspector Instead.", robust MCP tooling is critical for these advanced workflows.

- Claude Opus 4.6: Anthropic's most capable frontier model, which provides the complex reasoning and planning required to break down high-level user requests into a secure sequence of system actions.
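
To make the MCP layer more concrete: MCP messages are JSON-RPC 2.0, and a client asks a server to run a tool with a `tools/call` request. The sketch below only builds such a request as a string. The tool name `read_file` and its `path` argument are hypothetical, and nothing here is meant to describe how Claude Code issues these calls internally.

```python
import json
from itertools import count

# Simple incrementing request IDs, as JSON-RPC requires an id per request.
_request_ids = count(1)


def make_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP-style JSON-RPC 2.0 'tools/call' request."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


if __name__ == "__main__":
    # 'read_file' is a hypothetical tool exposed by some MCP server.
    print(make_tool_call("read_file", {"path": "README.md"}))
```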

The architecture suggests a focus on security and control. The safety classifier acts as a gatekeeper, and the entire system operates within the permissions and sandbox of the user's local or configured environment.

Market Position and Competition

Anthropic is executing a clear strategy to own the AI-powered developer workflow space. Claude Code, alongside the multi-agent Claude Agent framework, positions Anthropic directly against GitHub Copilot (from Microsoft/GitHub) and OpenAI's ChatGPT with advanced code execution features.

The key differentiator is the degree of autonomy and system integration. While Copilot focuses on code completion and ChatGPT on conversational assistance, Claude Code's expanded Auto Mode aims for end-to-end task completion. This aligns with the trend our readers have seen in articles like "How to Build a Multi-Agent Dev System: One Developer's 40-Commit Field Report," which detailed the productivity gains from configuring such autonomous systems.

Limitations and Practical Considerations

- Safety Classifier Reliance: The entire promise of safe autonomy hinges on the accuracy and robustness of the underlying safety classifier. False positives (blocking safe tasks) hinder productivity, while false negatives (allowing unsafe tasks) pose security risks.

- Preview Status: This is an expanded preview, not a general availability release. It is likely limited to a select group of users or specific use cases as Anthropic gathers data on real-world performance and edge cases.

- Complexity of Real-World Environments: Developer environments are unique and often brittle. Autonomous agents must navigate unexpected errors, missing dependencies, and conflicting system states—a significant challenge for current AI systems.
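
The false-positive and false-negative trade-off in the first point can be quantified once a classifier's decisions are compared against ground truth. The sketch below follows the article's convention that a "positive" means the classifier blocked the task. The counts are invented purely for illustration and do not come from Anthropic or any measurement.

```python
from dataclasses import dataclass


@dataclass
class Counts:
    blocked_unsafe: int  # true positives: unsafe tasks correctly blocked
    blocked_safe: int    # false positives: safe tasks wrongly blocked
    allowed_safe: int    # true negatives: safe tasks correctly allowed
    allowed_unsafe: int  # false negatives: unsafe tasks wrongly allowed


def false_positive_rate(c: Counts) -> float:
    """Share of all safe tasks that were blocked (productivity cost)."""
    return c.blocked_safe / (c.blocked_safe + c.allowed_safe)


def false_negative_rate(c: Counts) -> float:
    """Share of all unsafe tasks that were allowed through (security risk)."""
    return c.allowed_unsafe / (c.allowed_unsafe + c.blocked_unsafe)


if __name__ == "__main__":
    # Illustrative, made-up counts for 1,000 evaluated tasks.
    counts = Counts(blocked_unsafe=48, blocked_safe=30, allowed_safe=920, allowed_unsafe=2)
    print(f"false positive rate: {false_positive_rate(counts):.1%}")
    print(f"false negative rate: {false_negative_rate(counts):.1%}")
```

With these made-up numbers, 30 of 950 safe tasks are blocked and 2 of 50 unsafe tasks slip through. The two rates pull against each other: a stricter classifier lowers the second at the cost of raising the first.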

gentic.news Analysis

This expansion is not an isolated feature drop but a deliberate next step in Anthropic's well-documented agentic roadmap. The Knowledge Graph data shows Claude Code was mentioned in 142 articles in the past week alone, a sign of intense market and developer attention to this product category. The expansion follows directly from the late March 2026 launch of the Auto Mode preview and the subsequent release of the /dream command for memory consolidation, which together form a coherent package for creating persistent, autonomous AI workers.

Historically, Anthropic has competed with OpenAI on model capabilities, but with Claude Code and the Claude Agent framework, it is competing on system integration and workflow ownership. The entity relationship showing Claude Agent → uses → Claude Code is telling: Anthropic is building a layered ecosystem where Code is the execution engine for higher-level multi-agent systems. This strategic build-out occurs as Anthropic is projected to surpass OpenAI in annual recurring revenue by mid-2026, suggesting its product-led growth strategy around practical AI tools is gaining significant traction.

The critical technical dependency here is the Model Context Protocol (MCP), referenced in 24 prior sources. MCP is the plumbing that makes this autonomy possible. As covered in our guide "How to Deploy Claude Code at Scale," managing MCP servers and skills is the key operational challenge for teams adopting this technology. The success of Auto Mode will depend as much on the maturity of the MCP ecosystem as on the reasoning of Claude Opus.

For practitioners, the takeaway is that the era of AI assistants that merely make suggestions is rapidly closing. The next phase is execution agents. The question is no longer "Can the AI understand my task?" but "Do I trust the AI to perform my task safely and correctly?" Anthropic's answer, for now, is a gated "yes" for classified-safe operations, pushing the industry toward a new standard for human-AI collaboration.

Frequently Asked Questions

What is Claude Code's Auto Mode?

Auto Mode is a feature within Anthropic's Claude Code command-line tool that allows the Claude AI to autonomously execute commands and control parts of your computer to complete a task. It first analyzes the user's request with a safety classifier. If the task is deemed safe, Claude Code can proceed to run terminal commands, edit files, and manage processes without requiring further manual approval for each step.

Is it safe to let Claude Code take control of my computer?

Anthropic's approach centers on a built-in safety classifier designed to block unsafe or destructive operations before they are executed. The system operates within the permissions of your user environment and is currently in a controlled preview phase to gather data and refine safety measures. However, as with any autonomous software, there is an inherent risk, and users should exercise caution, especially in critical production environments.

How does Claude Code differ from GitHub Copilot?

GitHub Copilot is primarily an AI-powered code completion tool that suggests snippets and lines within your IDE. Claude Code is an agentic tool designed for task execution. It can understand a high-level objective (like "set up a database container and connect the app"), formulate a plan, and then execute the necessary terminal commands, file creations, and edits to accomplish it, moving beyond suggestion to autonomous action.

What do I need to use Claude Code's Auto Mode?

You need access to the Claude Code tool, which is part of Anthropic's developer ecosystem. It relies on the Model Context Protocol (MCP) to connect to your system's resources and likely requires the latest Claude Opus 4.6 model for complex reasoning. As of this writing, the expanded Auto Mode is in preview, so access may be limited to certain users or require a specific enrollment.