Anthropic has released an "auto mode" for Claude Code, its agentic coding tool, that lets Claude make permission decisions autonomously while it works on a task. The announcement, highlighted by developer @kimmonismus on X, is another rapid iteration in the increasingly competitive AI coding assistant space.

What Happened

According to the social media post, Anthropic has enabled a new "auto mode" for Claude's coding functionality. The key feature mentioned is that Claude can now make "permission decisions on your behalf" during code execution. The source did not link to technical documentation, but the announcement points to a shift toward more autonomous AI coding agents that can act without explicit user approval at every step.

Context: The AI Coding Assistant Arms Race

This development comes amid intense competition in the AI coding space. Anthropic's Claude competes directly with GitHub Copilot, Amazon Q Developer (formerly CodeWhisperer), and increasingly capable open-source models like those from the BigCode project. The ability to make autonomous decisions is a potential differentiator in this crowded market.

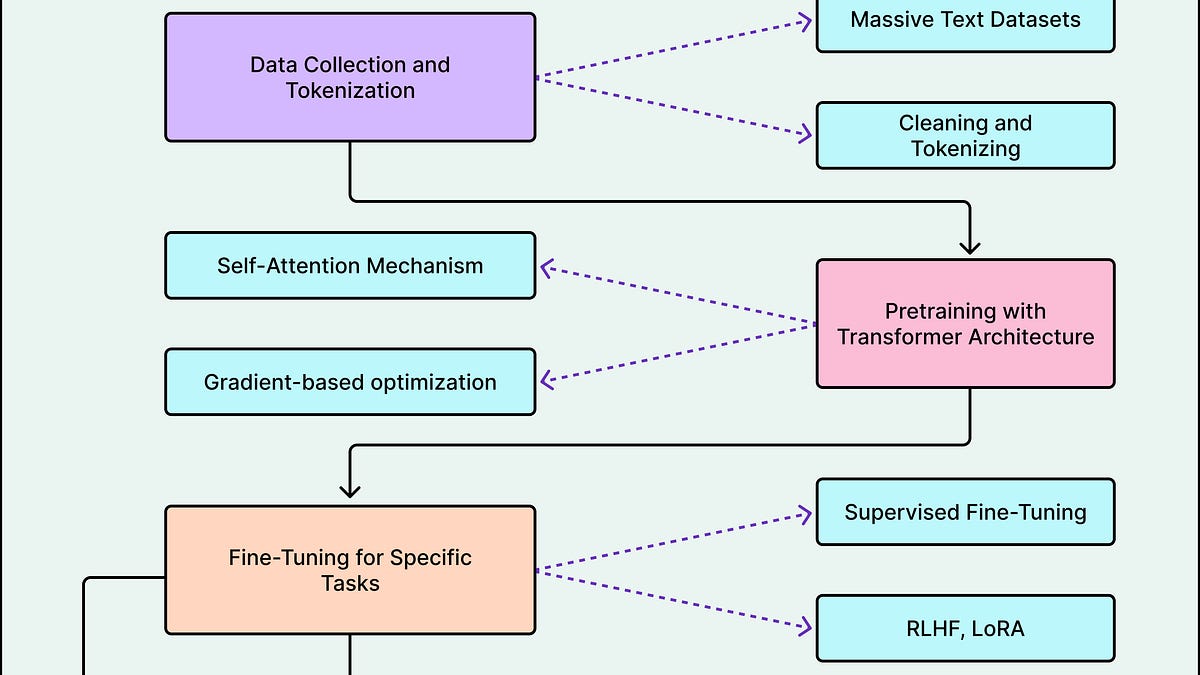

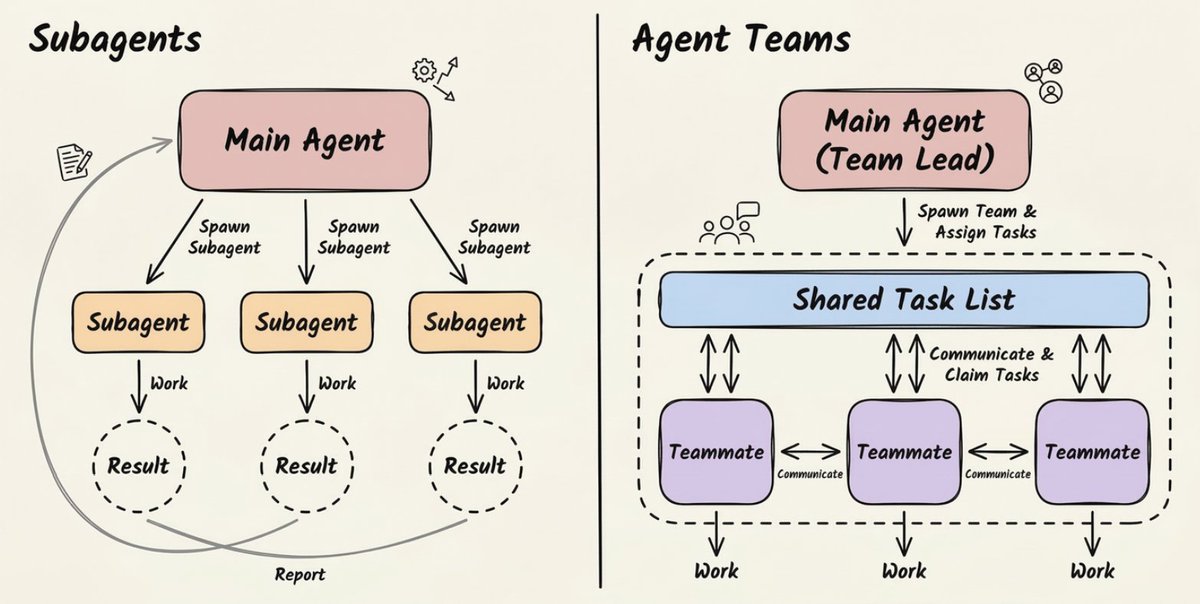

Traditional AI coding assistants operate primarily in a suggestion mode – they propose code completions, fixes, or explanations, but require explicit user approval before any action is taken. Claude Code Auto Mode appears to move beyond this paradigm toward a more agentic approach, where the AI can make certain decisions independently during the coding workflow.

Technical Implications

While the source doesn't provide detailed technical specifications, the phrase "permission decisions on your behalf" suggests several possible implementations:

- File system access decisions: The AI might determine when to read, write, or modify files

- Package installation permissions: Automatically deciding when to install dependencies

- API call authorization: Making decisions about when to call external services

- Code execution safety checks: Determining whether code is safe to run in different environments

This functionality would likely require sophisticated safety guardrails to prevent unintended consequences. Anthropic, known for its focus on AI safety through Constitutional AI, would presumably implement this feature with careful constraints.
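To make the idea of guardrails more concrete, here is a minimal sketch of how a permission gate of this kind could be structured. Everything in it is an assumption for illustration: the `Action` categories, the `DEFAULT_POLICY` table, the `DENIED_SUBSTRINGS` list, and the `decide` function are hypothetical names, not Anthropic's implementation or any documented Claude Code API.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"   # proceed without asking the user
    ASK = "ask"       # fall back to explicit user approval
    DENY = "deny"     # never proceed


@dataclass(frozen=True)
class Action:
    # Hypothetical action categories mirroring the list above.
    kind: str      # e.g. "read_file", "write_file", "install_package"
    target: str    # path, package name, URL, or command string


# Hypothetical default policy: routine file operations are automatic,
# anything with wider side effects still goes to the user.
DEFAULT_POLICY = {
    "read_file": Decision.ALLOW,
    "write_file": Decision.ALLOW,
    "install_package": Decision.ASK,
    "network_call": Decision.ASK,
    "run_command": Decision.ASK,
}

# Hypothetical hard guardrail: targets that are refused outright.
DENIED_SUBSTRINGS = ("rm -rf", ".env", "id_rsa")


def decide(action: Action, policy=DEFAULT_POLICY) -> Decision:
    """Return a decision for one action; guardrails win over the policy."""
    if any(marker in action.target for marker in DENIED_SUBSTRINGS):
        return Decision.DENY
    # Unknown action kinds are never auto-approved.
    return policy.get(action.kind, Decision.ASK)


if __name__ == "__main__":
    for a in (
        Action("read_file", "src/main.py"),
        Action("write_file", ".env"),
        Action("install_package", "requests"),
    ):
        print(a.kind, a.target, "->", decide(a).value)
```

The design choice worth noting is the ordering: the deny check runs before the policy lookup, and anything the policy does not recognize defaults to asking the user rather than allowing.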

What This Means for Developers

For developers using Claude, this could mean:

- Reduced cognitive load: Fewer interruptions for routine permission decisions

- Faster iteration cycles: More seamless flow between ideation and execution

- New trust paradigms: Developers must trust the AI's judgment on permission decisions

- Potential productivity gains: Less context switching between thinking and approving actions

However, it also raises questions about:

- Safety boundaries: What constraints prevent the AI from making dangerous decisions?

- Auditability: Can developers review and understand why certain permission decisions were made?

- Customization: Can developers configure what types of decisions the AI can make autonomously?
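The auditability and customization questions can be illustrated with a short sketch. It assumes a hypothetical `AutoModeConfig` that lists which action kinds may be decided automatically and a hypothetical `PermissionLog` that records every decision with a reason. None of these names come from the announcement or from Anthropic's documentation.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AutoModeConfig:
    # Hypothetical setting: action kinds the AI may approve on its own.
    auto_allow: set = field(default_factory=lambda: {"read_file"})


@dataclass
class AuditEntry:
    action: str
    target: str
    decision: str
    reason: str


@dataclass
class PermissionLog:
    config: AutoModeConfig
    entries: list = field(default_factory=list)

    def record(self, action: str, target: str) -> str:
        """Decide on an action and keep a reviewable trace of why."""
        if action in self.config.auto_allow:
            decision = "allow"
            reason = f"'{action}' is listed in auto_allow"
        else:
            decision = "ask"
            reason = f"'{action}' is not listed in auto_allow"
        self.entries.append(AuditEntry(action, target, decision, reason))
        return decision

    def to_json(self) -> str:
        """Export the trail so a developer can review it later."""
        return json.dumps([asdict(e) for e in self.entries], indent=2)


if __name__ == "__main__":
    # A developer widens the defaults to include file writes.
    log = PermissionLog(AutoModeConfig(auto_allow={"read_file", "write_file"}))
    log.record("write_file", "src/app.py")
    log.record("install_package", "requests")
    print(log.to_json())
```

In this sketch the configuration answers the customization question and the exported log answers the auditability question. Whether the actual feature exposes either is not stated in the source.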

gentic.news Analysis

This announcement continues the pattern of rapid-fire releases in the AI coding assistant space that @kimmonismus noted with "They literally drop every freaking day." Just last month, we covered GitHub's Copilot Workspace announcement, which introduced a more agentic approach to coding tasks. Claude Code Auto Mode appears to be Anthropic's direct response to this trend toward more autonomous coding agents.

What's particularly notable is Anthropic's focus on permission decisions specifically. This suggests they're targeting one of the key friction points in current AI coding workflows – the constant need for human approval of routine actions. By automating these decisions, Anthropic may be betting that developers are ready to trade some control for significantly increased velocity.

This development also aligns with the broader industry trend toward AI agents rather than just AI assistants. While assistants suggest, agents execute. The distinction is crucial for productivity tools, as execution often requires making numerous small decisions that can bottleneck workflows if each requires human approval.

However, the success of this feature will depend heavily on its implementation. If it is too permissive, developers won't trust it with their codebases. If it is too restrictive, the productivity gains will be minimal. Anthropic's Constitutional AI approach, which trains models to align with predefined principles, may give the company an advantage in striking this balance safely.

Frequently Asked Questions

What is Claude Code Auto Mode?

Claude Code Auto Mode is a new feature from Anthropic that allows the Claude AI assistant to make autonomous permission decisions during code execution. Instead of requiring explicit user approval for every action, Claude can now determine when certain permissions should be granted, potentially speeding up development workflows.

How does Claude Code Auto Mode differ from traditional AI coding assistants?

Traditional AI coding assistants like GitHub Copilot primarily operate in suggestion mode – they propose code but require explicit user approval before any action is taken. Claude Code Auto Mode represents a shift toward more autonomous, agentic behavior where the AI can make certain decisions independently during the coding process.

Is Claude Code Auto Mode safe to use with sensitive codebases?

While specific safety details weren't provided in the initial announcement, Anthropic is known for its strong focus on AI safety through its Constitutional AI approach. The feature likely includes guardrails to prevent dangerous decisions, but developers should exercise appropriate caution when using any autonomous AI tool with sensitive or production codebases.

Can developers customize what decisions Claude can make autonomously?

The source announcement didn't provide details about customization options. Typically, such features include configuration settings that allow users to define boundaries for autonomous decision-making. We expect Anthropic will release more detailed documentation about customization capabilities as the feature rolls out more broadly.