The Problem You're Already Feeling

You've experienced it: Claude Code generates a 200-line feature in seconds, but reviewing it takes 30 minutes. You can't ask "why did you choose this approach?" You're left reverse-engineering assumptions. According to Anthropic's research, this verification gap is the real bottleneck in AI-assisted development.

The issue isn't Claude's code quality—it's that our current workflow treats AI as a junior developer. We give vague prompts, get code back, and then debug the implementation. But bugs in AI-generated code are usually specification failures, not coding errors. The model implemented exactly what you asked for, but what you asked for was ambiguous.

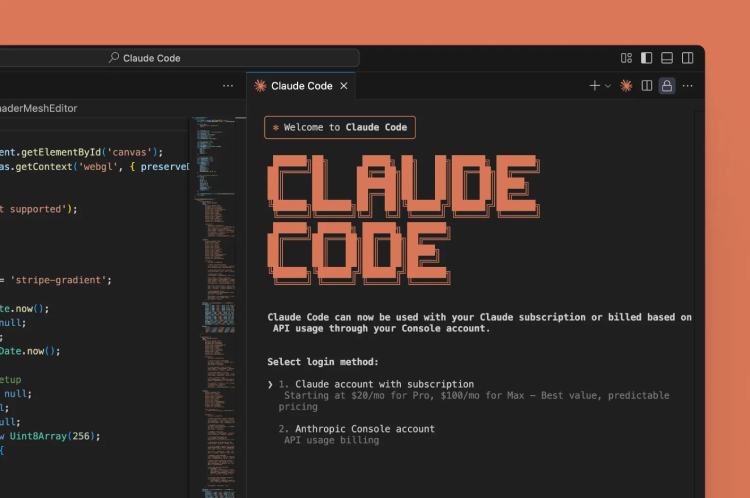

The Claude Code Workflow Shift: From Prompts to Specifications

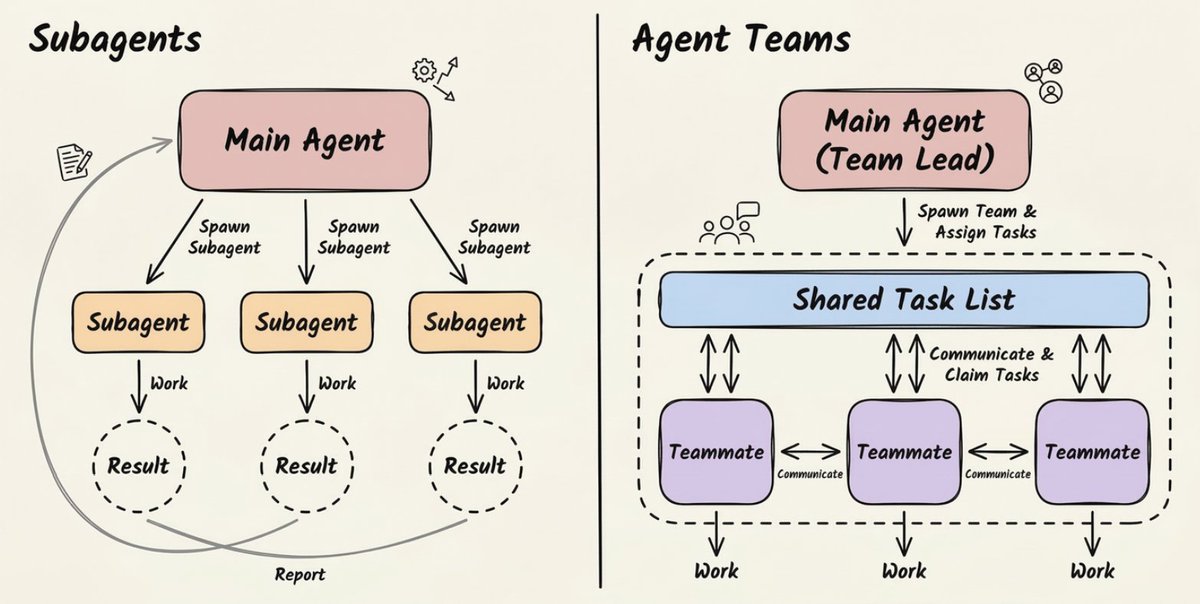

Anthropic's vision—backed by their development of Claude Agent and Claude Cowork—is that engineers should become AI system architects. Your primary output shifts from code to two things:

- Machine-readable specifications (not Jira tickets)

- Complete verification setups (tests that certify correctness)

For Claude Code users, this means fundamentally changing how you use the tool. Instead of typing "add user authentication," you need to write a specification that leaves no room for misinterpretation.

How To Apply This Today in Your CLAUDE.md

Your CLAUDE.md file should evolve from a context document to a specification framework. Here's what to add:

## Specification Format

When requesting new features, use this template:

### Component: [Name]

- **Inputs:** [Exact data structure, types, validation rules]

- **Outputs:** [Expected return structure, error formats]

- **Behavior:** [Step-by-step logic, edge cases handled]

- **Constraints:** [Performance requirements, security rules, dependencies]

- **Tests Required:** [List of specific test cases to generate]
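The template is plain markdown with fixed labels, so a spec can be checked mechanically before it reaches Claude Code. The sketch below is a hypothetical helper, not part of Claude Code. It assumes each field is written as a `- **Field:**` bullet exactly as in the template, and it flags any field that is missing or still starts with a `[placeholder]`.

```python
import re

# Field labels taken from the specification template above.
REQUIRED_FIELDS = ["Inputs", "Outputs", "Behavior", "Constraints", "Tests Required"]


def spec_problems(spec_text: str) -> list[str]:
    """List missing or unfilled template fields in a spec (hypothetical helper).

    Assumes each field is a bullet written as "- **Field:** value". A value that
    still starts with "[" counts as an unfilled placeholder; an empty value is
    allowed because details may follow as nested bullets.
    """
    problems = []
    for field in REQUIRED_FIELDS:
        pattern = rf"^\s*-\s*\*\*{re.escape(field)}:\*\*(.*)$"
        match = re.search(pattern, spec_text, re.MULTILINE)
        if match is None:
            problems.append(f"missing field: {field}")
        elif match.group(1).strip().startswith("["):
            problems.append(f"unfilled placeholder: {field}")
    return problems
```

Running it over every file in `specs/` before generation turns "leaves no room for misinterpretation" into a check that can fail, at least for the structural part.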

## Verification First

Always generate tests BEFORE implementation code. Use this command:

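```bash
# Step 1: ask Claude Code (non-interactive print mode) for tests only
claude -p "Read component_spec.md and write a test suite for it in tests/. Do not write implementation code." --permission-mode acceptEdits
```

Then review and augment the test suite. Only after the tests are approved:

```bash
# Step 2: implement against the approved tests without changing them
claude -p "Implement the component in component_spec.md under src/ so that the tests in tests/ pass. Do not modify anything in tests/." --permission-mode acceptEdits
```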

## Example: From Vague Prompt to Precise Specification

**Bad (what you're probably doing):**

"Create a function that validates email addresses"

**Good (the new way):**

### Component: Email Validator

- **Inputs:** String `email`, optional Boolean `strict_mode` (default: `false`)

- **Outputs:** Object `{valid: boolean, reason: string|null, suggestions: string[]}`

- **Behavior:**
  - Check format against RFC 5322 (use the `regex` library)
  - If `strict_mode=true`, also verify that MX records exist
  - Detect common typos and suggest a correction (e.g. `gmial.com` → `gmail.com`)
  - Reject disposable email domains from an internal list

- **Constraints:**
  - Timeout: 100ms for DNS lookups
  - No external API calls unless `strict_mode=true`

- **Tests Required:**
  - Valid standard emails
  - Invalid formats (missing @, multiple @)
  - Disposable domains
  - MX record existence (mock DNS)
  - Timeout handling
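To show how directly a spec like this maps to code, here is a minimal Python sketch of an implementation. It is illustrative, not what Claude Code would generate. Assumptions: it swaps in a deliberately simplified pattern using the standard `re` module instead of a full RFC 5322 grammar via the `regex` library; the typo and disposable-domain tables are hypothetical stand-ins for the "internal list"; and the MX lookup is an injected `resolve_mx` callable so tests can mock DNS, run on a worker thread to enforce the 100ms timeout.

```python
import concurrent.futures
import re
from typing import Callable, Optional

# Simplified stand-in for RFC 5322; the full grammar is far larger.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9-]+(?:\.[A-Za-z0-9-]+)*\.[A-Za-z]{2,}")

# Hypothetical stand-ins for the spec's typo table and internal disposable list.
COMMON_TYPOS = {"gmial.com": "gmail.com", "yaho.com": "yahoo.com"}
DISPOSABLE_DOMAINS = {"mailinator.com", "10minutemail.com"}

DNS_TIMEOUT_S = 0.1  # 100ms limit from the Constraints section

# Lookups run on worker threads so a hung resolver cannot block the caller.
_DNS_POOL = concurrent.futures.ThreadPoolExecutor(max_workers=4)


def _result(valid: bool, reason: Optional[str] = None,
            suggestions: Optional[list] = None) -> dict:
    return {"valid": valid, "reason": reason, "suggestions": suggestions or []}


def validate_email(email: str, strict_mode: bool = False,
                   resolve_mx: Optional[Callable[[str], list]] = None) -> dict:
    """Validate an address following the Email Validator spec (simplified sketch)."""
    if email.count("@") != 1:
        return _result(False, "address must contain exactly one @")
    if not EMAIL_RE.fullmatch(email):
        return _result(False, "invalid format")

    local, domain = email.split("@")
    domain = domain.lower()
    suggestions = []
    if domain in COMMON_TYPOS:
        suggestions.append(f"{local}@{COMMON_TYPOS[domain]}")
    if domain in DISPOSABLE_DOMAINS:
        return _result(False, "disposable email domain", suggestions)

    # The only network access (the MX lookup) happens in strict mode.
    if strict_mode:
        if resolve_mx is None:
            return _result(False, "strict_mode requires an MX resolver")
        future = _DNS_POOL.submit(resolve_mx, domain)
        try:
            records = future.result(timeout=DNS_TIMEOUT_S)
        except concurrent.futures.TimeoutError:
            return _result(False, "DNS lookup timed out", suggestions)
        except Exception as exc:
            return _result(False, f"DNS lookup failed: {exc}", suggestions)
        if not records:
            return _result(False, "no MX records for domain", suggestions)

    return _result(True, None, suggestions)
```

Writing even this sketch exposes a gap: the spec never says whether a likely typo makes an address invalid. Here `jane@gmial.com` is reported as valid with a suggestion, which is exactly the kind of decision the spec, not the model, should make.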

## Your New Review Process

Stop reviewing 500 lines of generated code line-by-line. Instead:

1. **Review the specification** in your `CLAUDE.md` or separate spec file

2. **Review the generated tests**—do they cover all edge cases?

3. **Run the tests** against the generated code

4. **Only examine the code** if tests fail or performance metrics are off

This follows Anthropic's own development pattern with Claude Agent, where the system is designed to work from structured specifications rather than free-form prompts.

## Handling Legacy Code

The biggest challenge? Existing codebases. Here's your migration strategy:

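```bash
# Step 1: Generate specifications FROM existing code
claude -p "Read the code in src/legacy/ and write one specification per component to specs/legacy/, using the Specification Format from CLAUDE.md." --permission-mode acceptEdits

# Step 2: Review and refine the generated specs by hand

# Step 3: Regenerate components with tests
claude -p "Using specs/legacy/auth.spec.md, write the tests first, then a new implementation under src/refactored/ that passes them." --permission-mode acceptEdits
```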

This creates a virtuous cycle: legacy code → specifications → tested, maintainable replacements.
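For Python codebases, some of what Step 1 asks for is already written down in signatures and type hints. The hypothetical helper below (not part of Claude Code) uses `inspect` and `typing.get_type_hints` to pre-fill Inputs and Outputs in the template's format, leaving Behavior, Constraints, and Tests Required as TODOs for the model-generated draft and the review in Step 2.

```python
import inspect
from typing import get_type_hints


def _type_name(annotation) -> str:
    """Readable name for a resolved type hint; 'untyped' when there is none."""
    if annotation is None:
        return "untyped"
    # Plain classes print as their name; generics such as list[str] print in full.
    if isinstance(annotation, type) and not hasattr(annotation, "__origin__"):
        return annotation.__name__
    return str(annotation)


def spec_skeleton(func) -> str:
    """Draft the template fields that a function's signature already answers."""
    hints = get_type_hints(func)
    inputs = []
    for name, param in inspect.signature(func).parameters.items():
        entry = f"{name}: {_type_name(hints.get(name))}"
        if param.default is not inspect.Parameter.empty:
            entry += f" (default: {param.default!r})"
        inputs.append(entry)
    return "\n".join([
        f"### Component: {func.__name__}",
        f"- **Inputs:** {', '.join(inputs) or 'none'}",
        f"- **Outputs:** {_type_name(hints.get('return'))}",
        "- **Behavior:** [TODO: describe from the code and its callers]",
        "- **Constraints:** [TODO]",
        "- **Tests Required:** [TODO]",
    ])
```

Untyped legacy code shows up as `untyped`, which is itself a useful list of what the reviewed spec must pin down.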

The Tooling Gap (And How To Bridge It)

Anthropic acknowledges current models aren't deterministic compilers. The same spec might generate different code. Your defense:

- Version your specifications alongside your code

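- Pin the model version with Claude Code's `--model` flag, and commit generated code once it passes review; Claude Code has no seed option, so regenerating from the same spec is not guaranteed to reproduce the same code

- Implement snapshot testing for generated code

```bash
# Pinned-model generation. CLAUDE_MODEL is a placeholder: set it to the full model ID your team standardizes on
claude -p "Implement the component in api.spec.md under src/api/." --model "$CLAUDE_MODEL" --permission-mode acceptEdits

# Snapshot check: exit non-zero if regeneration changed the last committed, reviewed code
git diff --exit-code HEAD -- src/api/
```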
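Snapshot testing can target behavior rather than source text: record what the reviewed code returns for a fixed set of inputs, then flag any regeneration that changes it. A minimal sketch, assuming a hypothetical `check_snapshot` helper and one JSON file per snapshot; pytest plugins such as syrupy and Jest's `toMatchSnapshot` provide the same idea with update workflows built in.

```python
import json
from pathlib import Path


def check_snapshot(name: str, value, snapshot_dir: Path) -> bool:
    """Compare a JSON-serializable value with its stored snapshot.

    On the first run the snapshot is written and True is returned; afterwards
    True means unchanged behavior and False means the regenerated code drifted.
    """
    snapshot_dir.mkdir(parents=True, exist_ok=True)
    path = snapshot_dir / f"{name}.json"
    # sort_keys makes the comparison independent of dict key order.
    serialized = json.dumps(value, sort_keys=True, indent=2)
    if not path.exists():
        path.write_text(serialized)
        return True
    return path.read_text() == serialized
```

In practice `value` would be the response of a regenerated `src/api/` handler for a fixed request; a `False` result means the new generation needs review even if its diff looks harmless.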

Start Tomorrow Morning

- Create a `specs/` directory in your project

- Write ONE specification for your next feature (not a prompt)

- Generate tests first, review them, then generate code

- Notice how much less time you spend debugging

This isn't futuristic thinking—it's the optimal way to use Claude Code today. The engineers who master specification-first development will ship faster with higher quality, while everyone else struggles with AI-generated technical debt.