Ethan Mollick, a professor at Wharton studying AI's economic impact, has highlighted a key takeaway from the release of Anthropic's Claude Opus 4.7: despite debates over implementation and "personality," the models keep getting better at tasks that matter for business and productivity, and the pace of improvement shows no signs of slowing.

What Happened

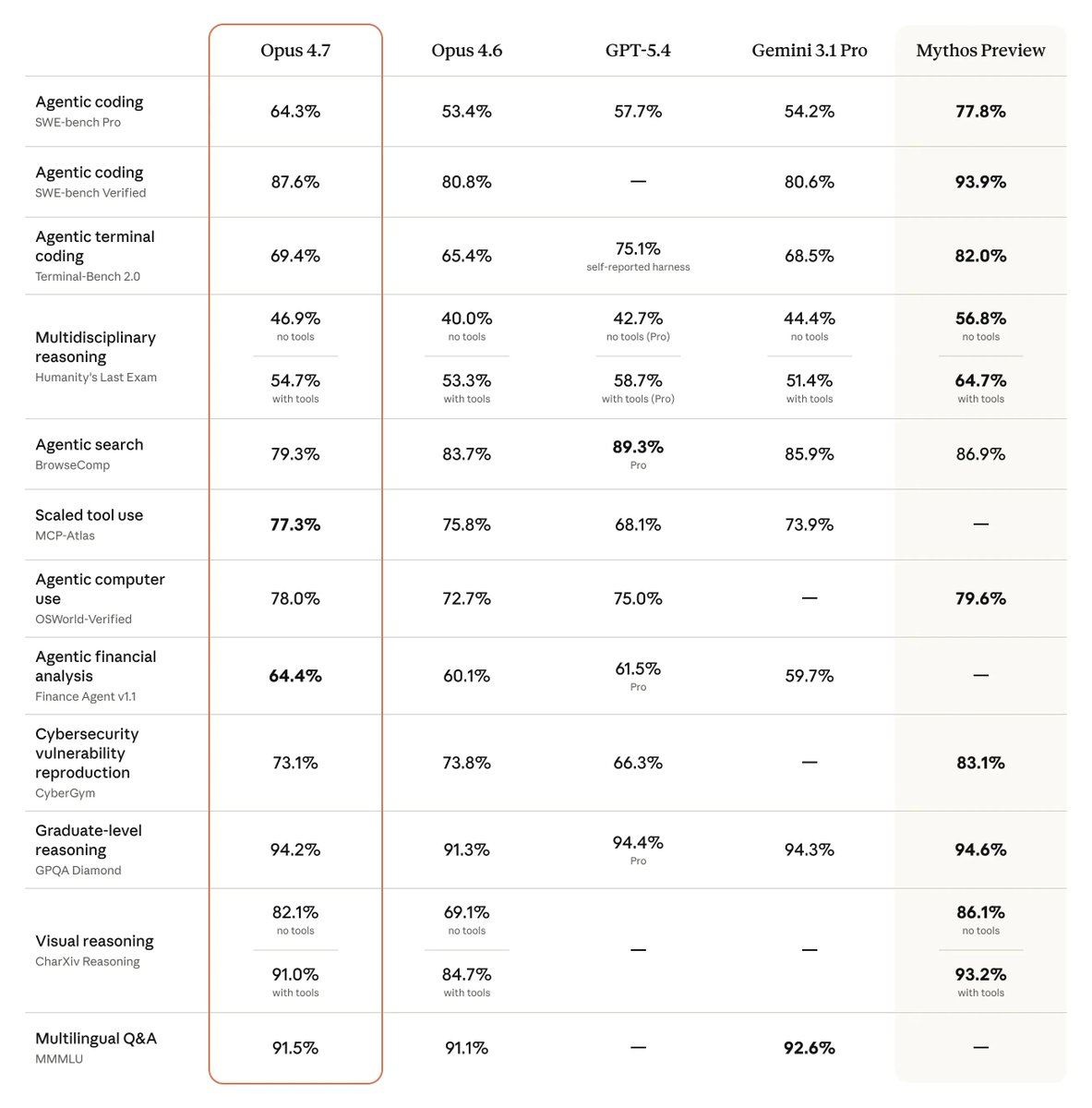

In a recent observation, Mollick pointed out that the release of Claude Opus 4.7, which follows the Opus 4.6 release by just two months, demonstrates a clear pattern of measurable performance improvements on economically important tasks with each iteration. This rapid cadence and consistent gain challenge any narrative of a plateau in frontier model capabilities, at least in the near term.

The core argument is that while the AI community often engages in heated discussions about model design choices, safety approaches, and even the perceived "personality" of assistants like Claude, these debates can obscure the fundamental, bottom-line trend: the raw capability on tasks that translate to economic value is increasing steadily and predictably.

Context: The Rapid Release Cadence

Anthropic's release strategy for its flagship Claude Opus model has accelerated. The jump from Opus 4.6 to 4.7 in approximately two months represents a faster pace than earlier cycles in the Claude 3 model family. This suggests Anthropic's research and engineering pipelines are highly optimized, allowing them to integrate advancements—whether in training techniques, data curation, or scaling—into production models more frequently.

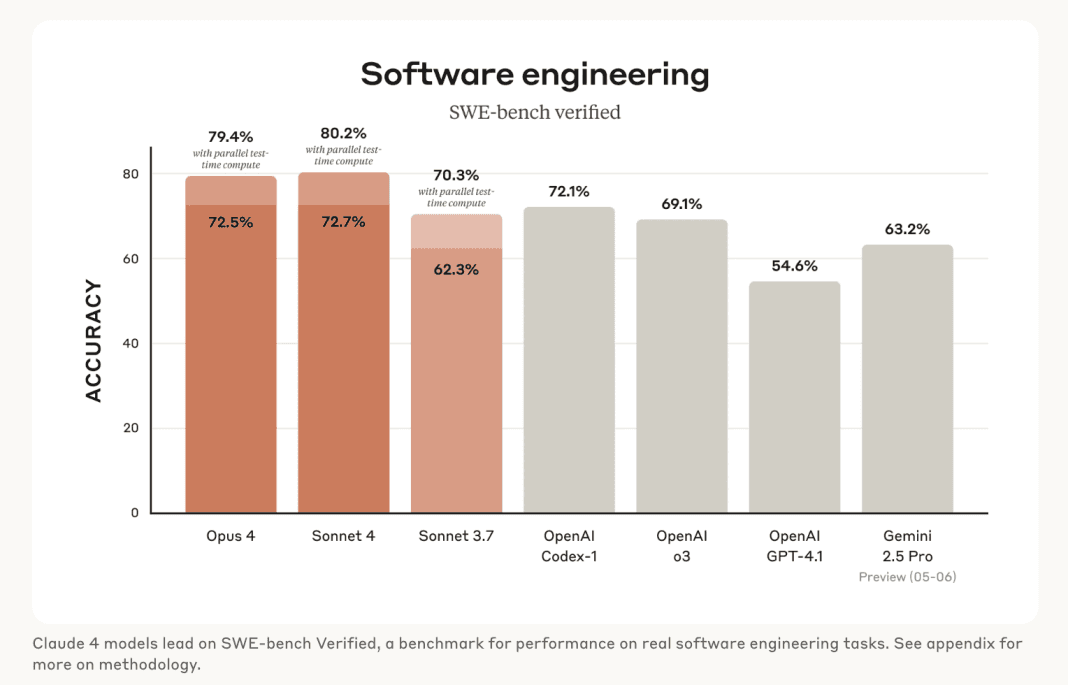

Mollick's focus on "economically important tasks" is significant. This likely refers to benchmarks and real-world evaluations in areas like complex reasoning, code generation, data analysis, strategic planning, and nuanced instruction following—skills directly applicable in professional and enterprise settings. Improvements here have immediate implications for productivity, automation potential, and the return on investment for companies integrating these models.

The Bigger Picture: No Slowdown in Sight

The most striking part of Mollick's statement is the conclusion: "with no signs of slowdown." This observation runs counter to some predictions that the scaling of large language models would face diminishing returns. While theoretical limits exist, the practical frontier, as demonstrated by Anthropic, OpenAI (with its GPT-4 series and o1 models), and Google DeepMind (with Gemini), continues to move forward at a remarkable clip.

For developers and businesses, this creates both an opportunity and a challenge. The opportunity is access to ever-more-capable tools. The challenge is the operational complexity of continuously evaluating and integrating new models, and the strategic uncertainty of building on a platform whose core capabilities are a rapidly moving target.

gentic.news Analysis

Mollick's observation fits into a clear trend we've been tracking: the industrialization of AI model development. This isn't about sporadic research breakthroughs anymore; it's about disciplined, continuous integration and deployment (CI/CD) for trillion-parameter models. Anthropic's two-month release cycle for its top-tier Opus model is evidence of this shift from a research lab to a product engineering mindset. It mirrors the rapid iteration we've seen from OpenAI with its GPT-4 turbo updates and the GPT-4o launch, which also focused on measurable performance and latency improvements.

This context connects directly to our previous coverage on the economics of AI inference. As models improve on "economically important tasks," the cost-benefit analysis for deploying them shifts. A model that is 10% better at legal document review or financial analysis can justify a significantly higher inference cost, which in turn fuels the revenue needed for the next training run. This creates a potential flywheel effect for well-funded frontier labs.

However, this rapid pace also raises critical questions about evaluation. When models update every two months, static academic benchmarks become less relevant. The real evaluation is happening in real-time, in the workflows of millions of users and enterprise clients. Mollick, by focusing on economic utility, is pointing to the metric that ultimately matters for the sustainability of the AI industry: can it do valuable work better than the last version? So far, for Opus, the answer keeps being yes.

Frequently Asked Questions

What are "economically important tasks" for AI models?

These are tasks where improved AI performance directly translates to business value or labor productivity. Examples include accurate code generation and debugging, complex data synthesis and report writing, sophisticated market and legal analysis, advanced customer support reasoning, and strategic planning assistance. Improvements in these areas can automate higher-value work and augment expert professionals.

How does Claude Opus 4.7's release cadence compare to competitors?

Anthropic's two-month cycle between Opus 4.6 and 4.7 is aggressive. OpenAI's major model releases (e.g., GPT-4 to GPT-4 Turbo) have had longer intervals, though they frequently update underlying systems. Google's Gemini releases have also been periodic. This rapid pace suggests Anthropic is prioritizing frequent, incremental capability gains to stay competitive and meet enterprise client demands for continuous improvement.

Does this mean AI model capabilities will keep improving forever?

No. Physical limits in compute, data, and algorithms will eventually apply. However, Mollick's point is that there is no observable slowdown yet. We are likely still on the steep part of the scaling curve for transformer-based LLMs, with improvements coming from better training techniques, novel architectures like mixture-of-experts, and higher-quality data, not just sheer scale. The plateau is theoretical; the current trajectory is still upward.

What should developers do in response to such rapid model updates?

Developers should architect applications for model agility. This means abstracting model calls through APIs, designing evaluation suites that test for their application's specific "economically important tasks," and being prepared to swap or compare models as new versions are released. Building rigid dependencies on a specific model's idiosyncrasies is riskier than ever. The focus should be on the task capability, not the model version.