Anthropic has announced a ban on using OAuth tokens, including those from its Agent SDK, in third-party tools. The change, detailed in Anthropic's updated legal and compliance documentation, tightens security controls as the company positions Claude Code for broader enterprise adoption, and it reflects growing security concerns around enterprise AI deployment.

The Policy Change: What's Changing

According to Anthropic's updated documentation, the company has explicitly prohibited the use of OAuth tokens obtained through its systems in third-party applications and tools. This restriction extends to the Claude Agent SDK, which developers have been using to build custom AI-powered applications. The policy applies across all usage tiers, from free users to enterprise clients, though commercial agreements may include specific provisions for enterprise customers.

The documentation clarifies that whether users access Claude Code directly (first-party) or through platforms like Amazon Bedrock or Google Cloud Vertex AI (third-party), existing commercial agreements govern usage unless mutually agreed otherwise. This suggests that while the policy applies broadly, enterprise clients may have some flexibility through negotiated terms.

Context: Why Now?

This security tightening comes at a pivotal moment for Anthropic. Recent developments provide crucial context:

Enterprise Expansion: Just days before this policy announcement, Anthropic revealed a strategic partnership with Infosys to develop custom AI agents for enterprise markets. This partnership signals Anthropic's aggressive push into corporate AI solutions, where security and compliance are paramount.

Commercial Pressure: CEO Dario Amodei recently acknowledged the tension between safety principles and commercial pressures, suggesting the company is navigating complex trade-offs as it scales. The OAuth token ban appears to be one manifestation of this balancing act: it prioritizes security even as commercial opportunities expand.

Government Scrutiny: Contract renewal negotiations with the Pentagon reportedly stalled over demands for additional safeguards, indicating heightened sensitivity around security protocols. This policy change may be partly responsive to government and enterprise demands for more stringent security measures.

Investment Influx: Anthropic recently received investment from Abu Dhabi's MGX, suggesting international expansion and the need for globally compliant security frameworks.

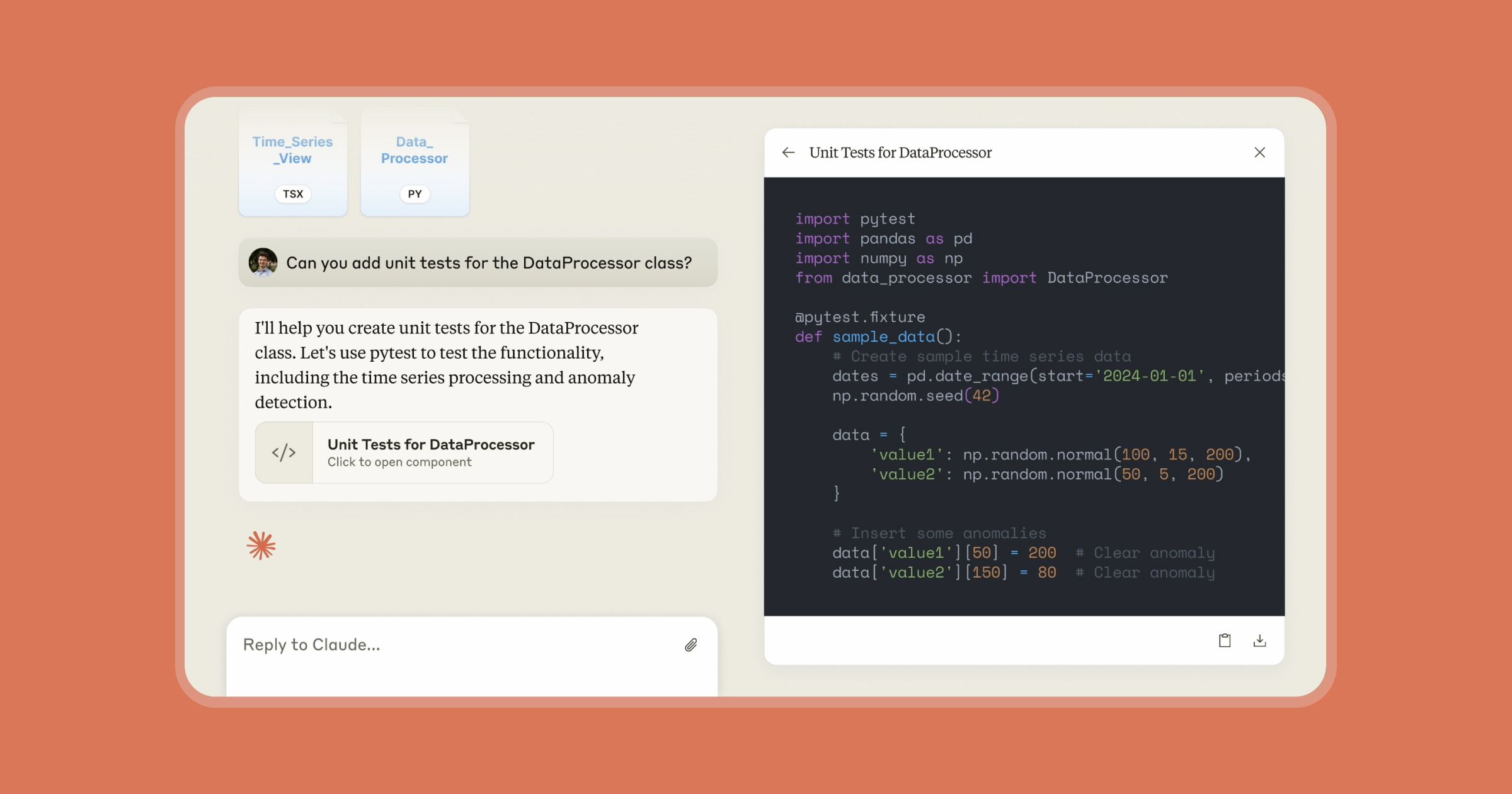

Technical Implications for Developers

For developers building on Anthropic's platform, this change represents a significant shift in how they can integrate Claude's capabilities:

Third-Party Tool Disruption: Tools that previously leveraged Anthropic's OAuth tokens for authentication will need to be reconfigured or may become incompatible.

SDK Limitations: Because the restriction extends to the Claude Agent SDK, OAuth tokens can no longer be used with the SDK in third-party tools, potentially affecting development timelines and deployment strategies.

Authentication Overhaul: Developers will need to implement alternative authentication methods, likely increasing development complexity and potentially affecting user experience.

Compliance Considerations: The policy explicitly addresses healthcare compliance (Business Associate Agreements), suggesting Anthropic is particularly focused on regulated industries where data security is critical.
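To make the authentication overhaul above more concrete, here is a minimal sketch of what moving away from OAuth tokens might look like. It assumes the alternative is an API key sent in the `x-api-key` header of Anthropic's public Messages API; the policy text summarized here does not prescribe this approach. The helper name `build_request`, the `ANTHROPIC_API_KEY` environment variable lookup, and the caller-supplied model ID are illustrative assumptions. The function only builds the request and does not send it.

```python
import json
import os
import urllib.request
from typing import Optional

API_URL = "https://api.anthropic.com/v1/messages"  # public Messages API endpoint
API_VERSION = "2023-06-01"  # value sent in the anthropic-version header


def build_request(prompt: str, model: str, api_key: Optional[str] = None) -> urllib.request.Request:
    """Build (but do not send) a Messages API request authenticated with an API key.

    Hypothetical helper for illustration: no OAuth bearer token is attached.
    """
    # Assumption: the key is passed in explicitly or read from the environment.
    key = api_key or os.environ.get("ANTHROPIC_API_KEY")
    if not key:
        raise RuntimeError("Set ANTHROPIC_API_KEY or pass api_key explicitly")

    body = {
        "model": model,  # caller-supplied model ID; none is hard-coded here
        "max_tokens": 256,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": key,  # API key header, not "Authorization: Bearer ..."
            "anthropic-version": API_VERSION,
            "content-type": "application/json",
        },
        method="POST",
    )
```

A tool would pass the returned object to `urllib.request.urlopen` to make the call. Keeping construction separate from sending makes it easy to confirm in tests that no `Authorization` header is attached.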

Industry Context: The Security Arms Race

Anthropic's move reflects broader trends in the AI industry:

Competitive Dynamics: As Anthropic competes with OpenAI and other AI developers, security has become a key differentiator. The company's emphasis on AI safety, evident in its development of the "Claude Constitution" ethical framework, extends to security protocols that reassure enterprise clients.

Regulatory Preparation: With increasing scrutiny of AI systems globally, companies are proactively implementing stricter controls to anticipate regulatory requirements. The European Union's AI Act and similar legislation worldwide are pushing companies toward more conservative security postures.

Enterprise Demands: Large organizations, particularly in finance, healthcare, and government sectors, are demanding more robust security guarantees before adopting AI solutions. Anthropic's policy appears designed to meet these demands head-on.

Strategic Implications

This policy change suggests several strategic priorities for Anthropic:

Enterprise First: By tightening security, Anthropic signals its commitment to enterprise clients who require stringent security protocols. This aligns with their partnership with Infosys and expansion into corporate markets.

Risk Management: The move likely represents proactive risk management, reducing potential attack vectors and limiting liability in case of security breaches involving third-party tools.

Platform Control: By restricting third-party tool integration, Anthropic maintains greater control over the user experience and security posture of applications built on its platform.

Compliance Enablement: The explicit mention of healthcare compliance suggests Anthropic is positioning Claude Code for adoption in heavily regulated industries where security protocols are non-negotiable.

Looking Ahead: The Future of AI Security

Anthropic's policy shift is likely just the beginning of broader security tightening across the AI industry. As AI systems become more integrated into critical business processes and handle increasingly sensitive data, we can expect:

- More granular access controls and authentication requirements

- Increased scrutiny of third-party integrations

- Industry-standard security certifications for AI platforms

- Tighter regulatory frameworks specifically addressing AI security

For developers and enterprises, this means security considerations will become increasingly central to AI adoption decisions. Companies that can demonstrate robust security protocols while maintaining usability will have a competitive advantage in the enterprise market.

Source: Anthropic Legal and Compliance Documentation, Hacker News Discussion