Few-shot learning, in which a model must recognize new concepts from just a handful of examples, remains one of the harder problems in artificial intelligence. A recent preprint argues that existing approaches are limited by the very geometry they use to represent information. Its authors propose replacing flat Euclidean space with hyperbolic geometry, and they report state-of-the-art results across 11 benchmarks.

The Geometry Problem in Few-Shot Learning

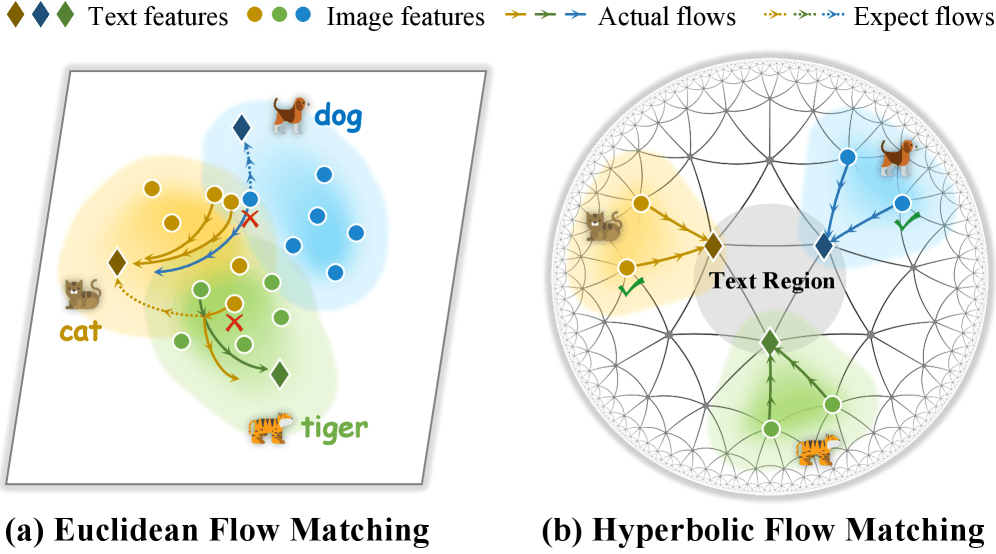

Recent advances in cross-modal few-shot adaptation have treated visual-semantic alignment as a continuous feature transport problem using Flow Matching (FM). This approach essentially moves features through a geometric space to align visual inputs with their semantic descriptions. However, as detailed in the arXiv preprint "Path-Decoupled Hyperbolic Flow Matching for Few-Shot Adaptation" (submitted February 24, 2026), Euclidean-based FM suffers from fundamental limitations inherent to flat geometry.
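To make the "feature transport" idea concrete, here is a minimal sketch of the Euclidean setting using the common straight-line formulation of flow matching. The function names and toy vectors are illustrative assumptions, not taken from the preprint, and the baselines it discusses may be set up differently.

```python
def fm_training_pair(x0, x1, t):
    """Return the point at time t on the straight path x0 -> x1, plus the velocity target."""
    x_t = [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]
    v_target = [b - a for a, b in zip(x0, x1)]  # constant along a straight line
    return x_t, v_target


def euler_transport(x0, velocity_fn, steps=10):
    """Integrate dx/dt = velocity_fn(x, t) from t=0 to t=1 with fixed Euler steps."""
    x = list(x0)
    dt = 1.0 / steps
    for k in range(steps):
        v = velocity_fn(x, k * dt)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x


# Toy usage with made-up 2-D features (hypothetical values).
visual = [0.0, 2.0]
semantic = [1.0, -1.0]
_, velocity = fm_training_pair(visual, semantic, t=0.5)
# A learned network would normally stand in for this exact velocity.
moved = euler_transport(visual, lambda x, t: velocity)
```

In training, a network is fit to predict `v_target` from `x_t` and `t`. At inference, integrating the learned velocity field moves a visual feature toward its semantic counterpart.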

The core problem is polynomial volume growth: in n-dimensional Euclidean space, the volume of a ball grows only as the n-th power of its radius. This relatively slow expansion creates what the researchers describe as "severe path entanglement," where the feature trajectories of different classes become intertwined. Imagine organizing a library where books can only be placed along straight lines that inevitably cross: eventually, nothing can be found without confusion.
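The growth rates can be written down explicitly. The formulas below are standard results for a ball of radius r in n dimensions (n ≥ 2), not taken from the preprint. The hyperbolic case assumes curvature -1 and is included for comparison with the geometry the paper turns to.

$$
\mathrm{Vol}\big(B_{\mathbb{R}^n}(r)\big) = \frac{\pi^{n/2}}{\Gamma\!\left(\frac{n}{2} + 1\right)}\, r^{n},
\qquad
\mathrm{Vol}\big(B_{\mathbb{H}^n}(r)\big) = \omega_{n-1} \int_0^r \sinh^{n-1}(s)\, ds
\;\sim\; \frac{\omega_{n-1}}{(n-1)\, 2^{\,n-1}}\, e^{(n-1) r} \quad (r \to \infty),
$$

where $\omega_{n-1} = 2\pi^{n/2} / \Gamma(n/2)$ is the surface area of the unit sphere $S^{n-1}$. For $n = 2$ these reduce to $\pi r^2$ and $2\pi(\cosh r - 1)$: the Euclidean disc grows quadratically, while the hyperbolic disc grows roughly like $e^{r}$.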

Enter Hyperbolic Geometry

The proposed solution, Path-Decoupled Hyperbolic Flow Matching (HFM), leverages the exponential expansion of the Lorentz manifold (the hyperboloid model of hyperbolic space) to restructure how features are organized and transported. Unlike Euclidean space, where volume grows polynomially with radius, hyperbolic space expands exponentially as you move away from the center, providing vastly more "room" to separate different classes of information.
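For readers unfamiliar with the Lorentz model, the sketch below shows its basic operations using the textbook definitions with curvature fixed at -1. The function names are illustrative assumptions. The preprint may use a different curvature or parameterization.

```python
import math


def minkowski_dot(x, y):
    """Lorentzian inner product: -x0*y0 + sum_i xi*yi."""
    return -x[0] * y[0] + sum(a * b for a, b in zip(x[1:], y[1:]))


def exp_map_origin(u):
    """Map a Euclidean vector u (a tangent vector at the origin) onto the hyperboloid."""
    norm = math.sqrt(sum(a * a for a in u))
    if norm == 0.0:
        return [1.0] + [0.0] * len(u)  # the origin of the hyperboloid
    scale = math.sinh(norm) / norm
    return [math.cosh(norm)] + [scale * a for a in u]


def lorentz_distance(x, y):
    """Geodesic distance arccosh(-<x, y>_L), clamped to guard against rounding."""
    return math.acosh(max(1.0, -minkowski_dot(x, y)))


# Two features at the same radius but in orthogonal directions (toy values).
a = exp_map_origin([3.0, 0.0])
b = exp_map_origin([0.0, 3.0])
separation = lorentz_distance(a, b)  # larger than the Euclidean gap between the inputs
```

Points placed far from the origin in different directions end up much farther apart than their Euclidean counterparts, which is the extra "room" referred to above.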

This geometric shift addresses the volume problem directly. Where Euclidean space forces features into crowded arrangements, hyperbolic space naturally creates hierarchical structures with ample separation between categories. The researchers describe this as creating "isolated class-specific geodesic corridors"—essentially dedicated pathways that keep different semantic categories from interfering with each other during the learning process.

Two Key Innovations

HFM incorporates two crucial design elements that make hyperbolic geometry work effectively for few-shot learning:

1. Centripetal Hyperbolic Alignment

This approach constructs what the researchers call a "centripetal hierarchy" by anchoring textual roots and pushing visual leaves toward the boundary. This initialization creates orderly flows from the start, preventing the chaotic entanglement that plagues Euclidean methods. The textual anchors serve as stable reference points around which visual features can organize themselves naturally.

2. Path-Decoupled Objective

Acting as a "semantic guardrail," this objective rigidly confines trajectories within their designated geodesic corridors through step-wise supervision. Rather than allowing features to wander freely through the geometric space, HFM provides continuous guidance that maintains separation between classes throughout the transport process. This prevents the cross-contamination of semantic information that typically degrades few-shot learning performance.
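The idea of supervising a trajectory step by step against a geodesic can be sketched as follows. This is an illustrative stand-in, not the preprint's objective: the loss form and the names `geodesic_point` and `stepwise_corridor_loss` are assumptions. Only the geodesic interpolation formula on the hyperboloid is standard.

```python
import math


def minkowski_dot(x, y):
    """Lorentzian inner product: -x0*y0 + sum_i xi*yi."""
    return -x[0] * y[0] + sum(a * b for a, b in zip(x[1:], y[1:]))


def lorentz_distance(x, y):
    """Geodesic distance on the hyperboloid (curvature -1)."""
    return math.acosh(max(1.0, -minkowski_dot(x, y)))


def geodesic_point(x, y, t):
    """Point at fraction t along the geodesic from x to y on the hyperboloid."""
    d = lorentz_distance(x, y)
    if d < 1e-12:
        return list(x)
    wx = math.sinh((1.0 - t) * d) / math.sinh(d)
    wy = math.sinh(t * d) / math.sinh(d)
    return [wx * a + wy * b for a, b in zip(x, y)]


def stepwise_corridor_loss(trajectory, start, target):
    """Mean squared geodesic distance between each step and its reference point.

    Hypothetical loss: step k of the trajectory is compared with the point at
    fraction k / len(trajectory) on the geodesic from start to target.
    """
    steps = len(trajectory)
    total = 0.0
    for k, point in enumerate(trajectory, start=1):
        reference = geodesic_point(start, target, k / steps)
        total += lorentz_distance(point, reference) ** 2
    return total / steps
```

A trajectory that follows the geodesic from a visual feature to its class anchor incurs essentially zero loss, while one that drifts toward another class's corridor is penalized at every step rather than only at the endpoint.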

Adaptive Stopping Mechanism

A notable addition in HFM is an adaptive diameter-based stopping criterion. This mechanism prevents "over-transportation" into the crowded origin region based on each concept's intrinsic semantic scale. Different concepts naturally occupy different amounts of "semantic space": "animal" encompasses more variation than "tabby cat." The stopping rule accounts for these differences and adjusts the transport distance accordingly, so that features travel far enough to align with their class but not so far that they collapse toward the origin.
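One way to picture a diameter-based stopping rule is sketched below. The preprint's actual criterion is not reproduced here. The rule, the `ratio` parameter and the function names are hypothetical, and the metric is passed in as `dist` (in the HFM setting it would be the hyperbolic geodesic distance).

```python
def class_diameter(points, dist):
    """Largest pairwise distance among a class's support features."""
    return max(
        (dist(p, q) for i, p in enumerate(points) for q in points[i + 1:]),
        default=0.0,
    )


def transport_with_stopping(x, anchor, step_fn, dist, diameter, ratio=0.5, max_steps=100):
    """Apply step_fn until x is within ratio * diameter of the anchor.

    Hypothetical rule: broader classes (larger diameter) stop farther from the anchor.
    Returns the final position and the number of steps taken.
    """
    threshold = ratio * diameter
    for steps_taken in range(max_steps):
        if dist(x, anchor) <= threshold:
            return x, steps_taken
        x = step_fn(x)
    return x, max_steps


# Toy 1-D usage: a broad class and a narrow class transported toward an anchor at 0.
distance = lambda a, b: abs(a - b)
broad = class_diameter([8.0, 10.0, 12.0], distance)
narrow = class_diameter([9.5, 10.0, 10.5], distance)
step = lambda x: x - 1.0
broad_stop = transport_with_stopping(10.0, 0.0, step, distance, broad)
narrow_stop = transport_with_stopping(10.0, 0.0, step, distance, narrow)
```

In this toy setup the broad class halts earlier and farther from the anchor than the narrow one, which mirrors the intuition that "animal" should keep more spread than "tabby cat."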

Performance Breakthrough

According to the preprint, extensive ablations on 11 benchmarks show that HFM establishes a new state of the art, consistently outperforming its Euclidean counterparts. The benchmarks are not named here, but they presumably cover standard few-shot learning settings across diverse domains.

What makes these results particularly significant is their consistency across different datasets and conditions. Few-shot learning methods often show impressive results on specific benchmarks but fail to generalize. HFM's geometric foundation appears to provide more robust improvements that transfer across different problem domains.

Implications for AI Development

This research represents more than just another incremental improvement in few-shot learning. It suggests a fundamental shift in how we think about representing information in AI systems. For decades, Euclidean geometry has been the default assumption in most machine learning architectures, from simple linear classifiers to complex neural networks.

HFM challenges this assumption directly, demonstrating that alternative geometries—particularly hyperbolic geometry—may be better suited to certain classes of problems. This opens up exciting possibilities for rethinking other AI architectures that might benefit from non-Euclidean representations.

The timing of this breakthrough is particularly significant as AI systems increasingly need to learn from limited data. In real-world applications, from medical diagnosis to rare event detection, we rarely have millions of labeled examples. Methods that can learn effectively from few examples will be crucial for deploying AI in domains where data is scarce or expensive to obtain.

Future Directions

The researchers have indicated they will release their code and models, which should accelerate further exploration in this direction. Several promising avenues for future research emerge from this work:

- Hybrid geometric approaches: Combining Euclidean and hyperbolic representations where each excels

- Dynamic geometry selection: Systems that learn which geometric representation works best for different types of data

- Beyond hyperbolic geometry: Exploring other non-Euclidean spaces like spherical geometry for different problem types

- Architectural implications: How hyperbolic representations might influence neural network design more broadly

Conclusion

Path-Decoupled Hyperbolic Flow Matching represents a paradigm shift in few-shot learning, moving beyond the limitations of flat Euclidean geometry to leverage the exponential expansion properties of hyperbolic space. By creating orderly hierarchies and maintaining strict separation between semantic categories, HFM achieves state-of-the-art performance while addressing fundamental limitations of previous approaches.

As AI systems continue to advance toward more human-like learning capabilities—particularly the ability to learn quickly from limited examples—geometric innovations like HFM will play an increasingly important role. This research not only provides a practical solution to a specific problem but also points toward a broader reimagining of how we represent knowledge in artificial intelligence systems.

Source: arXiv:2602.20479v1 "Path-Decoupled Hyperbolic Flow Matching for Few-Shot Adaptation" (Submitted February 24, 2026)