Google Chrome is experimenting with a new capability that could significantly alter how interactive web content is integrated into 3D environments. The experimental feature, called html-in-canvas, allows developers to take any Document Object Model (DOM) element—such as a live webpage, a video player, or a complex UI component—and render it as a live, updating texture within a WebGL or WebGPU canvas.

What Happened

The feature was highlighted in a social media post from the technical account @HowToAI_. The core idea is straightforward: a webpage, or a subsection of one, no longer has to be captured as a static image or pre-rendered sprite before it can appear in a 3D scene. Instead, it becomes a dynamic texture that updates in real time as the underlying HTML changes. This means a live video stream, a scrolling social media feed, or an interactive data dashboard could be mapped onto the surface of a 3D object in a browser-based experience.

Technical Details & Context

Traditionally, integrating web content into a WebGL scene required workarounds: rendering the HTML to a 2D canvas, converting that canvas to an image data URL, and then loading the resulting static image as a texture. The process is manual, the result is a non-interactive snapshot, and every change to the HTML requires a fresh capture and upload, which adds latency. The html-in-canvas API aims to automate and streamline this pipeline directly within the browser's rendering engine.
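To make the older workaround concrete, here is a minimal TypeScript sketch of one common variant of it. It swaps in an SVG `<foreignObject>` snapshot rather than a 2D-canvas capture library, and the function names `htmlToSvgDataUrl` and `uploadSnapshot` are illustrative only; they are not part of html-in-canvas or any Chrome API. It assumes the markup is well-formed XHTML with inline styles, since external resources are generally not loaded inside an SVG image.

```typescript
// Sketch of the snapshot-based workaround (not the html-in-canvas API).
// Function names are hypothetical and chosen for illustration.

/** Wrap an XHTML fragment in an SVG <foreignObject> and return it as a data URL. */
function htmlToSvgDataUrl(xhtml: string, width: number, height: number): string {
  const svg =
    `<svg xmlns="http://www.w3.org/2000/svg" width="${width}" height="${height}">` +
    `<foreignObject width="100%" height="100%">` +
    `<div xmlns="http://www.w3.org/1999/xhtml">${xhtml}</div>` +
    `</foreignObject></svg>`;
  return "data:image/svg+xml;charset=utf-8," + encodeURIComponent(svg);
}

/** Browser-only: rasterize the snapshot and upload it into an existing WebGL texture. */
function uploadSnapshot(
  gl: WebGLRenderingContext,
  texture: WebGLTexture,
  dataUrl: string,
  onDone: (error?: Error) => void
): void {
  const img = new Image();
  img.onload = () => {
    gl.bindTexture(gl.TEXTURE_2D, texture);
    // Copies the pixels once; the texture is a frozen snapshot from here on.
    gl.texImage2D(gl.TEXTURE_2D, 0, gl.RGBA, gl.RGBA, gl.UNSIGNED_BYTE, img);
    // Settings that also work for non-power-of-two sizes in WebGL 1.
    gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MIN_FILTER, gl.LINEAR);
    gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_WRAP_S, gl.CLAMP_TO_EDGE);
    gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_WRAP_T, gl.CLAMP_TO_EDGE);
    onDone();
  };
  img.onerror = () => onDone(new Error("snapshot image failed to load"));
  img.src = dataUrl;
}
```

Every visual change to the HTML means calling both functions again, and clicks or key presses on the 3D surface never reach the original elements. Those are the costs a native bridge would be expected to remove.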

Although the source material is only a brief announcement, the technical implication is clear. The feature sits at the intersection of two major web rendering stacks:

- WebGL / WebGPU: For hardware-accelerated 2D and 3D rendering.

- The DOM & CSS: For structured, styled, and interactive document rendering.

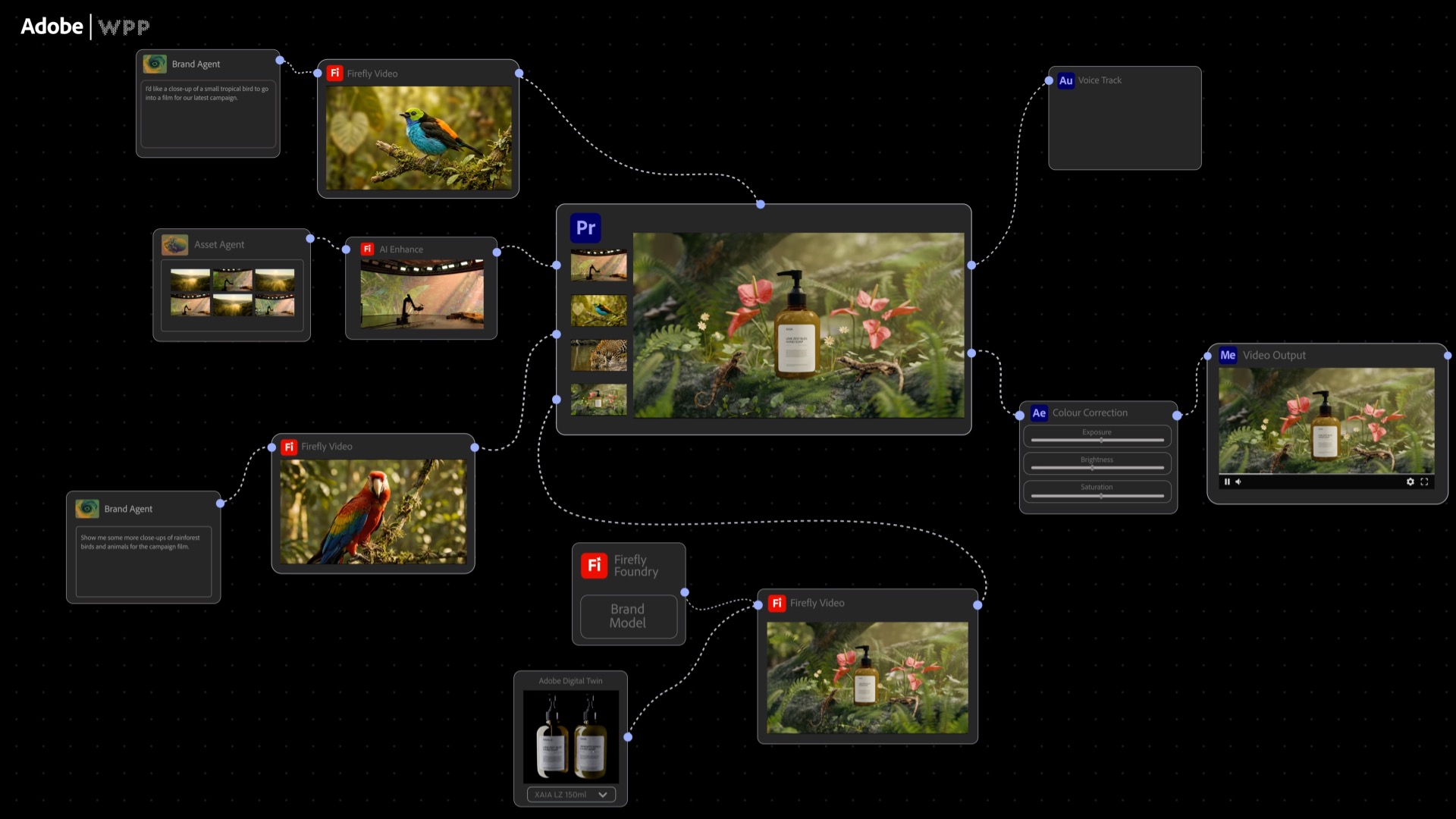

Bridging these two stacks natively could unlock new forms of hybrid web applications, where immersive 3D visualizations or game environments seamlessly contain fully functional web widgets.

Potential Applications & Implications

For AI and ML practitioners, this development is particularly relevant for data visualization and human-computer interaction (HCI) research conducted in the browser.

- Immersive Dashboards: A 3D data exploration environment could have live model training metrics, TensorBoard charts, or data tables rendered on in-world screens.

- Interactive AI Demos: A demo of a computer vision model could show the live camera feed and bounding boxes within a 3D scene, with control panels as HTML overlays on 3D panels.

- Research Prototyping: Rapid prototyping of mixed-reality or augmented reality interfaces that blend traditional web UI components with 3D contexts becomes more feasible directly in the browser, without needing a full game engine.

Because the feature is experimental, it is available only to users who enable it through Chrome's flags page (chrome://flags). There is no announced timeline for a stable release, and its performance characteristics, security model, and API surface are subject to change.

gentic.news Analysis

This move by Google's Chrome team is a logical step in the long-term convergence of document-based and scene-based web rendering. It follows Chrome's pattern of adopting advanced graphics capabilities early, such as its support for WebGPU, the successor to WebGL. WebGPU provides lower-level access to GPU hardware and matters for demanding machine learning inference in the browser through frameworks like TensorFlow.js. The html-in-canvas feature can be seen as a complementary effort: it reduces the friction of building complex, application-like experiences that were previously the domain of native apps or game engines.

This development also aligns with broader industry trends in AI-powered digital twins and simulation. As companies like NVIDIA (with Omniverse) and Unity push for more interconnected, real-time 3D collaboration platforms, the web browser remains a crucial, zero-install deployment target. Enabling live web content inside 3D browser scenes makes the web a more viable platform for lightweight versions of these immersive simulation interfaces. For the AI engineering community, which increasingly relies on sophisticated visual tools for model monitoring, data labeling, and result interpretation, browser-based 3D is becoming a more capable and integrated option.

Frequently Asked Questions

What is html-in-canvas?

html-in-canvas is an experimental Chrome feature that allows a live HTML element (part of the page's DOM) to be rendered directly as a texture within a WebGL or WebGPU canvas. The HTML content updates in real time and can be mapped onto 3D objects.

How is this different from past methods?

Previously, developers had to manually capture HTML as a static image (e.g., using html2canvas or rendering to a 2D canvas) and then load that image as a texture. This process was slow, broke all interactivity (like clicking links or playing video), and had to be repeated for every update. html-in-canvas aims to be a native, performant bridge between the two rendering systems.

Can I use html-in-canvas today?

Not for production. It is currently an experimental feature hidden behind flags in Chrome Canary or Beta channels. Developers can enable it to test and provide feedback, but the API is unstable and subject to change or removal.

What does this mean for AI/ML development?

It primarily enables richer, browser-based visualization and demo tools. AI researchers and engineers could build interactive 3D environments in which live training data, model outputs, or control panels are built with standard web technologies (HTML/JS) and rendered as textures within the scene, creating more immersive and intuitive interfaces for complex systems.