What Happened

A new research paper, "Controllable Evidence Selection in Retrieval-Augmented Question Answering via Deterministic Utility Gating," was posted to the arXiv preprint server. The work addresses a core weakness in modern Retrieval-Augmented Generation (RAG) systems for question answering.

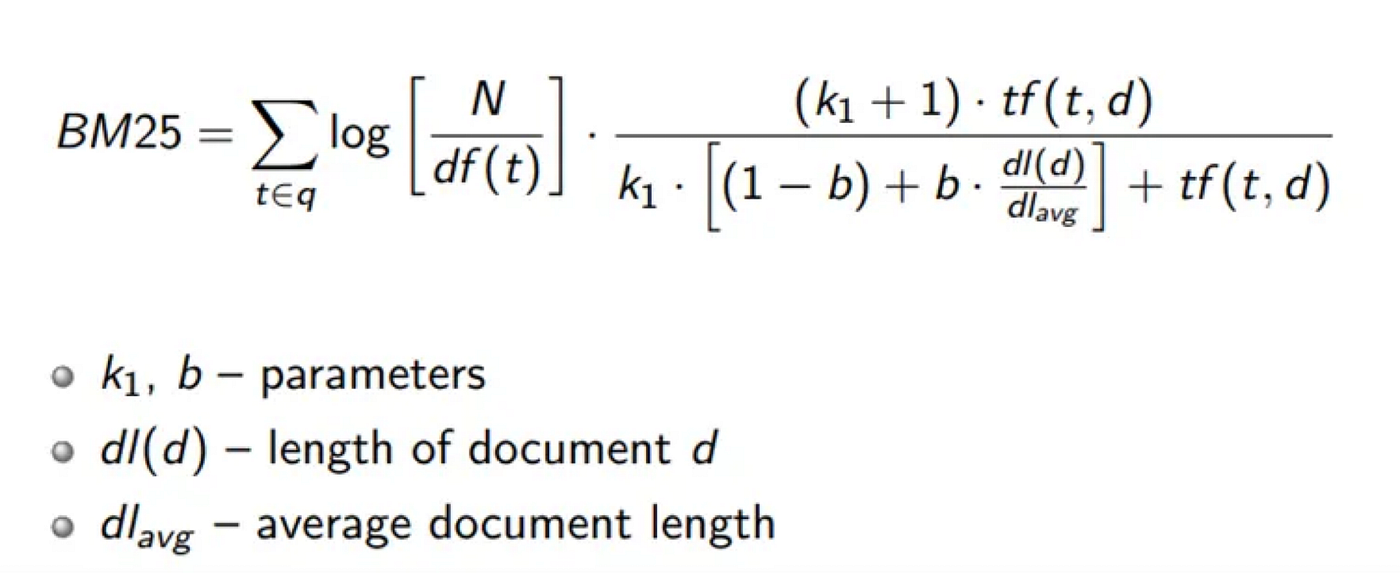

Current RAG pipelines typically convert a knowledge base and a user query into vector embeddings, then retrieve the text passages with the highest similarity scores. While effective for finding topically related content, this approach conflates relevance with utility. Two sentences can be semantically similar to a query but differ critically: one may state a required fact explicitly, while another might discuss a related concept, provide an incomplete detail, or be redundant with other retrieved passages. Relying solely on similarity scores can lead to answers based on incomplete, contradictory, or verbose evidence.
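The gap between relevance and utility can be shown with a toy similarity-only retriever. This is a minimal sketch, not a real embedding pipeline: it assumes a bag-of-words cosine similarity as a stand-in for a learned embedding model, and the helper names (`tokens`, `cosine`, `top_k`) and the example passages are hypothetical.

```python
import math
import re
from collections import Counter


def tokens(text):
    """Lowercase word tokens; a crude stand-in for an embedding model."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between the bag-of-words vectors of two strings."""
    ca, cb = Counter(tokens(a)), Counter(tokens(b))
    dot = sum(ca[t] * cb[t] for t in ca)
    norm_a = math.sqrt(sum(v * v for v in ca.values()))
    norm_b = math.sqrt(sum(v * v for v in cb.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query, passages, k=2):
    """Similarity-only retrieval: rank by score, keep the k best."""
    return sorted(passages, key=lambda p: cosine(query, p), reverse=True)[:k]


# Hypothetical query and passages, for illustration only.
query = "water resistance leather strap"
passages = [
    "Do not submerge models with a leather strap.",
    "Gold is a soft metal.",
    "Leather strap water resistance varies by leather strap type.",
]
for passage in top_k(query, passages):
    print(round(cosine(query, passage), 3), passage)
```

In this toy data the passage that merely repeats the query's vocabulary ranks above the one that states the actual rule, because the score measures word overlap and not whether the passage answers the question.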

Technical Details

The proposed framework introduces a deterministic gating mechanism that sits between the retrieval and generation stages. Its goal is to establish a clear, auditable boundary between retrieved text and usable evidence. The process involves two fixed scoring procedures:

Meaning-Utility Estimation (MUE): This evaluates each retrieved text unit (e.g., a sentence or short passage) independently. It scores the unit based on explicit signals like:

- Semantic Relatedness: Does it connect to the core meaning of the query?

- Term Coverage: Does it contain the key entities or conditions mentioned in the query?

- Conceptual Distinctiveness: Does it introduce a necessary, unique piece of information?

Diversity-Utility Estimation (DUE): This step controls for redundancy. A unit is admitted only if it contributes conceptual information not already covered by previously accepted units.
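The two scoring procedures described above could be sketched roughly as follows. The paper's actual scoring functions are not reproduced here, so everything in this block is an assumption made for illustration: the stopword list, the Jaccard and set-overlap stand-ins for each signal, the weights, the thresholds, and the function names (`mue`, `due`, `select_units`).

```python
import re

# Small fixed stopword list (an assumption; a real system would tune this).
STOPWORDS = frozenset({
    "a", "an", "the", "is", "are", "of", "in", "to", "for",
    "with", "and", "or", "not", "do", "can", "i", "my",
})


def content_terms(text):
    """Lowercased word set with stopwords removed."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {w for w in words if w not in STOPWORDS}


def jaccard(a, b):
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def mue(query, unit, weights=(0.4, 0.4, 0.2)):
    """Meaning-utility stand-in: fixed weighted sum of three explicit signals."""
    q, u = content_terms(query), content_terms(unit)
    if not q or not u:
        return 0.0
    relatedness = jaccard(q, u)          # proxy for semantic relatedness
    coverage = len(q & u) / len(q)       # share of query terms the unit contains
    distinctiveness = len(u - q) / len(u)  # share of unit terms beyond the query
    w_rel, w_cov, w_dis = weights
    return w_rel * relatedness + w_cov * coverage + w_dis * distinctiveness


def due(unit, accepted):
    """Diversity-utility stand-in: 1 minus the highest overlap with accepted units."""
    if not accepted:
        return 1.0
    u = content_terms(unit)
    return 1.0 - max(jaccard(u, content_terms(a)) for a in accepted)


def select_units(query, units, mue_min=0.3, due_min=0.5):
    """Visit units in descending MUE order; keep those passing both thresholds."""
    accepted = []
    # sorted() is stable, so ties keep their input order and runs are repeatable.
    for unit in sorted(units, key=lambda u: mue(query, u), reverse=True):
        if mue(query, unit) >= mue_min and due(unit, accepted) >= due_min:
            accepted.append(unit)
    return accepted
```

Because every step is a fixed function of the input text, the same query and units always yield the same selection, which is the property the framework relies on. With these illustrative settings, an exact duplicate of an accepted unit scores a DUE of 0 and is rejected.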

Crucially, the system employs a strict admissibility rule: a unit is accepted as evidence only if it independently and explicitly states the fact, rule, or condition required by the query. Units are not merged or paraphrased, and missing information is not inferred. If no single retrieved unit meets this bar, the system returns no answer rather than generating a potentially unsupported response.
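The admissibility rule and its abstain behavior might look like the sketch below. It assumes a deliberately simple test for "explicitly states": a unit passes only if it contains every required term on its own. The `required_terms` input and the function names are hypothetical, and the paper's actual criterion may differ.

```python
import re
from typing import Optional, Sequence


def words(text: str) -> set:
    """Lowercased single-word tokens of a text unit."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def admissible(unit: str, required_terms: Sequence[str]) -> bool:
    """True only if this one unit contains every required (single-word) term."""
    present = words(unit)
    return all(term.lower() in present for term in required_terms)


def gate(units: Sequence[str], required_terms: Sequence[str]) -> Optional[str]:
    """Return the first unit that is sufficient by itself, else None (no answer).

    Units are checked one at a time and never combined, so two partial
    units cannot jointly satisfy the rule.
    """
    for unit in units:
        if admissible(unit, required_terms):
            return unit
    return None


required = ["leather", "submersion"]

# Each unit covers one required term; together they would cover both,
# but the gate does not merge them and therefore abstains.
partial = [
    "Leather straps need regular conditioning.",
    "Avoid submersion of the watch.",
]
print(gate(partial, required))  # None

# A single unit that states the rule explicitly is admitted as evidence.
explicit = partial + ["Models with leather straps are not suitable for submersion."]
print(gate(explicit, required))
```

Returning `None` instead of a best guess makes the abstain case explicit, so the caller has to decide what to do when no unit qualifies.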

The authors emphasize that this is a deterministic, rule-based framework. It requires no model training or fine-tuning. The utility scores are calculated using fixed functions, making the evidence selection process completely transparent and reproducible.

Retail & Luxury Implications

While the paper is a technical contribution to general QA research, its principles have direct, high-stakes applications in retail and luxury AI systems where accuracy, auditability, and brand integrity are paramount.

1. High-Precision Customer Service & Product Q&A: Luxury brands operate knowledge-intensive customer service channels, both human and automated. A query like "Can I submerge my 18k gold watch with a leather strap in water?" requires a precise, correct answer. A standard RAG system might retrieve passages about water resistance, 18k gold properties, and leather care, potentially leading to a conflated or vague answer. The deterministic gating framework would admit only a sentence that explicitly states the rule, e.g., "Models with leather straps are not suitable for submersion." This keeps the answer from being assembled out of loosely related passages, which reduces the risk of hallucination, and it gives the agent or customer clear, citable evidence.

2. Auditable Compliance and Policy Engines: Retailers must navigate complex policies on returns, warranties, international shipping, and sustainability claims. An internal tool for staff needs to provide definitive answers backed by policy documents. This framework ensures that any generated answer is directly traceable to a specific, sufficient clause in a policy PDF, creating an audit trail for compliance.

3. Curation of Brand Narrative and Heritage: For heritage brands, communicating a consistent and accurate historical narrative is key. An AI tool used by marketing or sales associates to pull historical anecdotes must be prevented from merging unrelated events or inferring unsupported connections. The strict "independent sufficiency" rule acts as a guardrail, ensuring every stated "fact" in an output is directly attested in a single source.

The Gap Between Research and Production: This is a research prototype. Implementing it in a production retail environment would require significant engineering: defining the precise utility scoring functions for a specific domain (e.g., product manuals vs. marketing copy), integrating it with existing vector databases and LLMs, and designing fallback behaviors for cases where a "no answer" result is unacceptable. However, the core concept of applying deterministic, explainable filters to LLM inputs is a highly relevant design pattern for the industry.