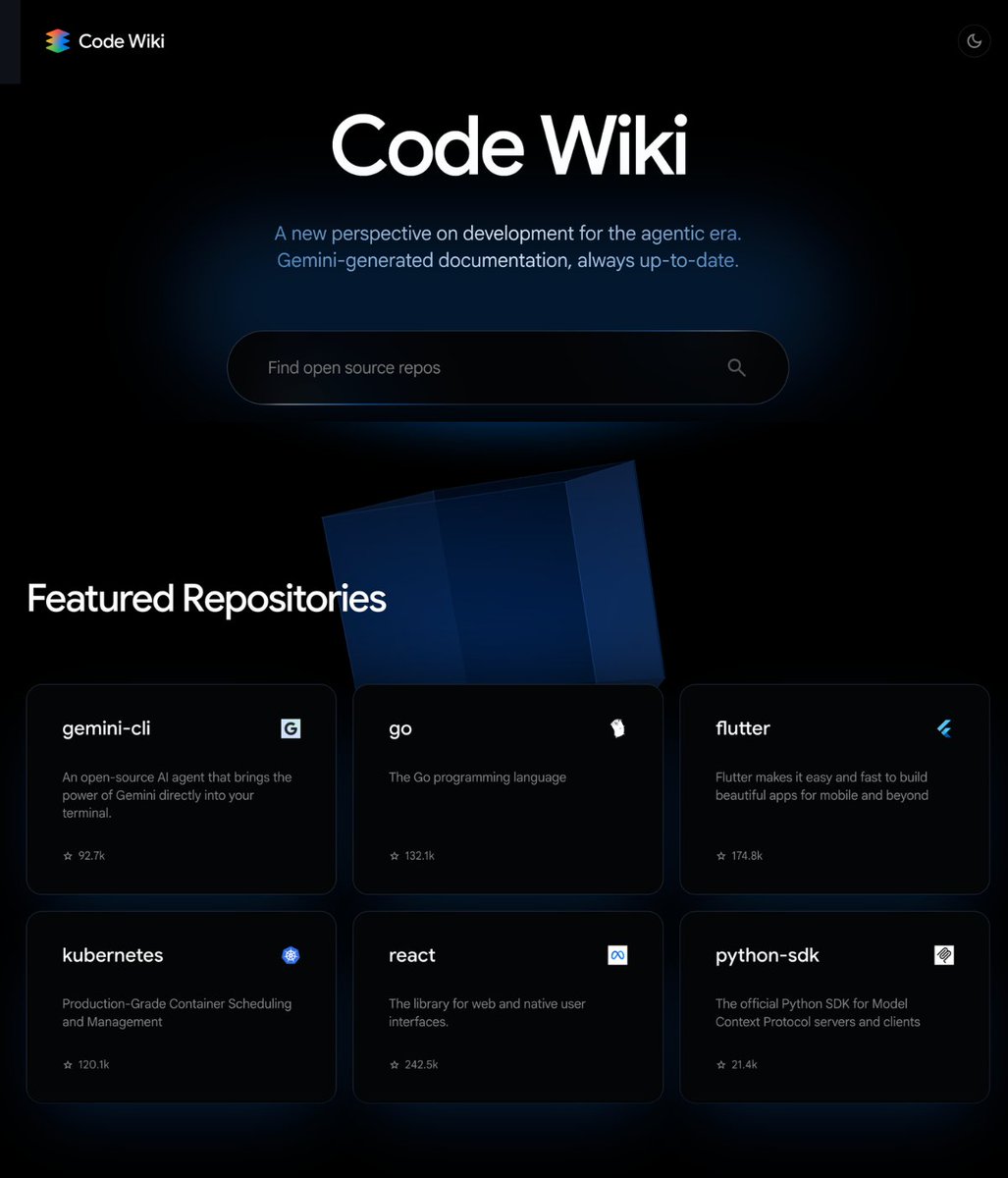

A GitHub repository described as a "real prompt engineering playbook," compiling research from Stanford, Google, and MIT, has gained significant traction with over 1,700 stars and 758 commits. The repository, maintained by the research lab EgoAlpha, aggregates and implements advanced prompting techniques published in recent academic papers, positioning itself as a free, open-source alternative to commercially sold prompt guides and packs.

What's in the Repository

The repository is structured as a living document and codebase, updated daily with implementations and summaries of cutting-edge research. According to the source, its contents are distinctly different from popular "YouTube guru tricks," focusing instead on methods actively used in top research labs and shipped products.

Key documented areas include:

- In-Context Learning (ICL): Techniques for improving a model's performance via examples provided within the prompt itself, based on the latest published papers.

- Chain-of-Thought (CoT) Methods: Advanced reasoning frameworks that guide models through step-by-step logic, moving beyond basic prompting.

- Retrieval-Augmented Generation (RAG) Architectures: Implementations of systems that combine language models with external knowledge retrieval, as deployed in production systems.

- Agent Frameworks: Research-backed designs for autonomous AI agents that can plan and execute multi-step tasks.

- Evaluation Methods: Frameworks for systematically testing and comparing the effectiveness of different prompts and techniques.
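
To make the first two items concrete, here is a minimal sketch of a few-shot prompt that also carries worked reasoning. It is not taken from the repository; the `Example` class, the `build_few_shot_cot_prompt` function, and the `Q:` / `Reasoning:` / `A:` labels are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Example:
    """One worked demonstration placed in the prompt (hypothetical structure)."""
    question: str
    reasoning: str  # step-by-step working shown to the model
    answer: str


def build_few_shot_cot_prompt(examples: list[Example], question: str) -> str:
    """Assemble an in-context-learning prompt whose examples include reasoning."""
    parts = []
    for ex in examples:
        parts.append(
            f"Q: {ex.question}\n"
            f"Reasoning: {ex.reasoning}\n"
            f"A: {ex.answer}"
        )
    # The new question ends on an open "Reasoning:" cue so the model is
    # nudged to write its own steps before committing to an answer.
    parts.append(f"Q: {question}\nReasoning:")
    return "\n\n".join(parts)


demos = [
    Example(
        question="A shelf holds 3 boxes with 4 books each. How many books?",
        reasoning="Each box has 4 books and there are 3 boxes, so 3 * 4 = 12.",
        answer="12",
    ),
]
prompt = build_few_shot_cot_prompt(
    demos, "A crate holds 5 bags with 6 apples each. How many apples?"
)
print(prompt)
```

The resulting string would then be sent to whichever model API is in use; that call is left out because it depends on the provider.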

The core value proposition is accessibility: providing a centralized, open-source hub for the engineering principles that are advancing the field, rather than surface-level tips.

The Open-Source vs. Commercial Prompt Market

This development highlights a growing divide in the AI tools ecosystem. While a market has emerged for paid "prompt packs," templates, and courses, this repository represents a counter-movement grounded in published academic research. Its popularity suggests demand among developers and engineers for depth and methodological rigor over packaged solutions.

The repository's activity (758 commits and daily updates) indicates it is actively maintained to track the fast-moving research landscape in prompt engineering and LLM application design.

gentic.news Analysis

This repository's traction is a direct symptom of the maturation phase in LLM application development. As we covered in our analysis of Anthropic's Prompt Library release in late 2025, there is a clear industry push to systematize and professionalize prompt craft, moving it from an art to a reproducible engineering discipline. EgoAlpha's playbook takes this a step further by anchoring the practice directly in research from institutions like Stanford, Google, and MIT, whose research labs (like Google's Brain team, now part of Google DeepMind) are consistently trending entities in our knowledge graph for LLM innovation.

The compilation aligns with a broader trend we've tracked: the "democratization of advanced R&D." Previously, the implementation gap between a paper published on arXiv and a usable code module could be weeks or months. Curated, living repositories like this one accelerate the adoption cycle, allowing engineers at smaller firms or independent developers to build with state-of-the-art techniques much faster. This may indirectly pressure commercial API providers (like OpenAI and Anthropic) and model vendors to innovate more rapidly, as techniques that once differentiated their products are quickly reproduced and disseminated.

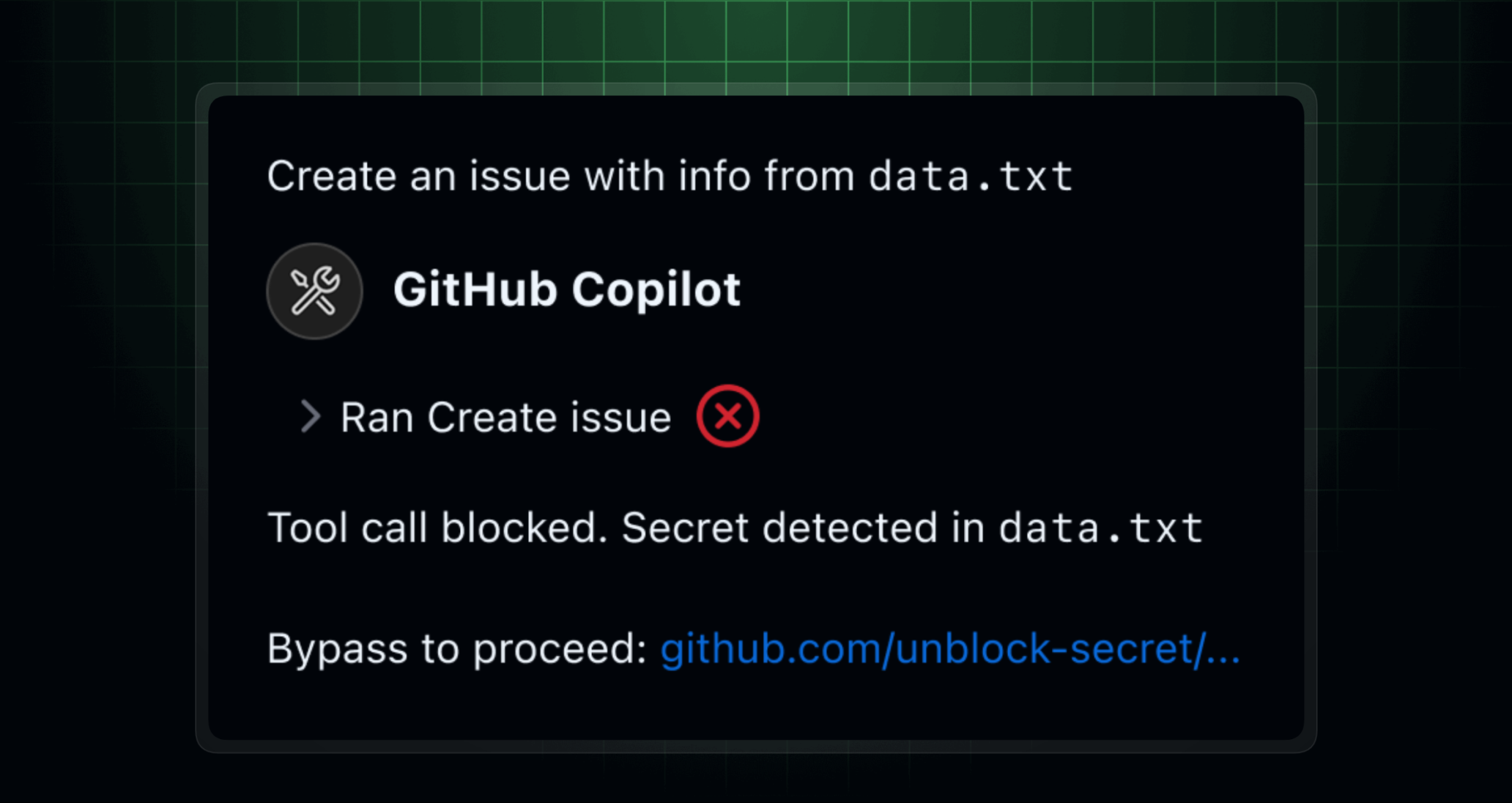

However, a critical question for practitioners is the translation from research to robust production systems. While the repository provides the "playbook," successful deployment still requires significant expertise in system design, evaluation, and monitoring, areas where commercial platforms still provide integrated advantages. The real impact may be in raising the floor for what's considered competent prompt engineering, potentially disrupting the low-end market for generic prompt packs.

Frequently Asked Questions

Where can I find the EgoAlpha Prompt Engineering Playbook?

The playbook is hosted as a public repository on GitHub. You can find it by searching for "EgoAlpha" and "prompt engineering playbook" or related terms on the platform. The repository has over 1,700 stars and is frequently updated.

Is this repository suitable for beginners in AI?

The repository is compiled from advanced research papers from institutions like Stanford, Google, and MIT. While it is an invaluable resource, it is likely geared towards developers, ML engineers, and researchers with a foundational understanding of large language models, APIs, and software engineering principles, rather than complete beginners.

How is this different from buying prompt packs online?

Commercial prompt packs often sell pre-written prompts for specific tasks. This repository focuses on teaching the underlying engineering methods and frameworks (like Chain-of-Thought, RAG architectures, and evaluation techniques) derived from academic research. It aims to provide the principles needed to design effective prompts and systems yourself, rather than offering static templates.
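
As a rough illustration of what "evaluation techniques" can mean in practice, the sketch below scores two prompt templates against the same small dataset using exact-match accuracy. It is not the repository's code. The `fake_model` function is a hypothetical stand-in for a real LLM call, and the dataset and template names are invented for the example.

```python
from typing import Callable


def exact_match_accuracy(
    model: Callable[[str], str],
    template: str,
    dataset: list[tuple[str, str]],
) -> float:
    """Fraction of items where the model output equals the expected answer."""
    correct = 0
    for question, expected in dataset:
        output = model(template.format(question=question))
        if output.strip().lower() == expected.strip().lower():
            correct += 1
    return correct / len(dataset)


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call, for illustration only.

    It replies with a bare answer only when asked for one word,
    and otherwise wraps the answer in a full sentence.
    """
    answers = {"capital of France": "Paris", "capital of Japan": "Tokyo"}
    for key, value in answers.items():
        if key in prompt:
            return value if "one word" in prompt else f"The answer is {value}."
    return "unknown"


dataset = [
    ("What is the capital of France?", "Paris"),
    ("What is the capital of Japan?", "Tokyo"),
]
templates = {
    "plain": "{question}",
    "constrained": "Answer in one word. {question}",
}
scores = {
    name: exact_match_accuracy(fake_model, template, dataset)
    for name, template in templates.items()
}
print(scores)
```

With this stub, the constrained template scores higher simply because its output format matches the metric. The same pattern applies with a real model: fix the dataset and the metric, vary only the prompt, and compare the numbers.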

What are some examples of techniques included?

Based on the description, the repo includes implementations and explanations for advanced methods like Chain-of-Thought reasoning, which breaks down problems into steps; Retrieval-Augmented Generation (RAG) for incorporating external data; and frameworks for building autonomous AI agents. These are core techniques for building sophisticated, reliable applications with LLMs.
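
For readers new to RAG, the sketch below shows the basic retrieve-then-prompt flow. It is a simplified illustration, not the repository's implementation: production systems typically rank documents with an embedding model and a vector index, whereas this example swaps in plain bag-of-words cosine similarity so it runs with the standard library alone. All function names and the sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its word tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_rag_prompt(query: str, documents: list[str], k: int = 1) -> str:
    """Place the retrieved passages ahead of the question in the prompt."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return (
        "Use only the context below to answer.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}\nAnswer:"
    )


docs = [
    "The warranty covers battery replacement for two years.",
    "Shipping takes five business days within the country.",
    "Returns are accepted within thirty days of purchase.",
]
query = "How long does the warranty cover the battery?"
rag_prompt = build_rag_prompt(query, docs)
print(rag_prompt)
```

Only the best-matching passage reaches the prompt here; raising `k` trades a longer prompt for a better chance that the relevant passage is included.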