What Happened

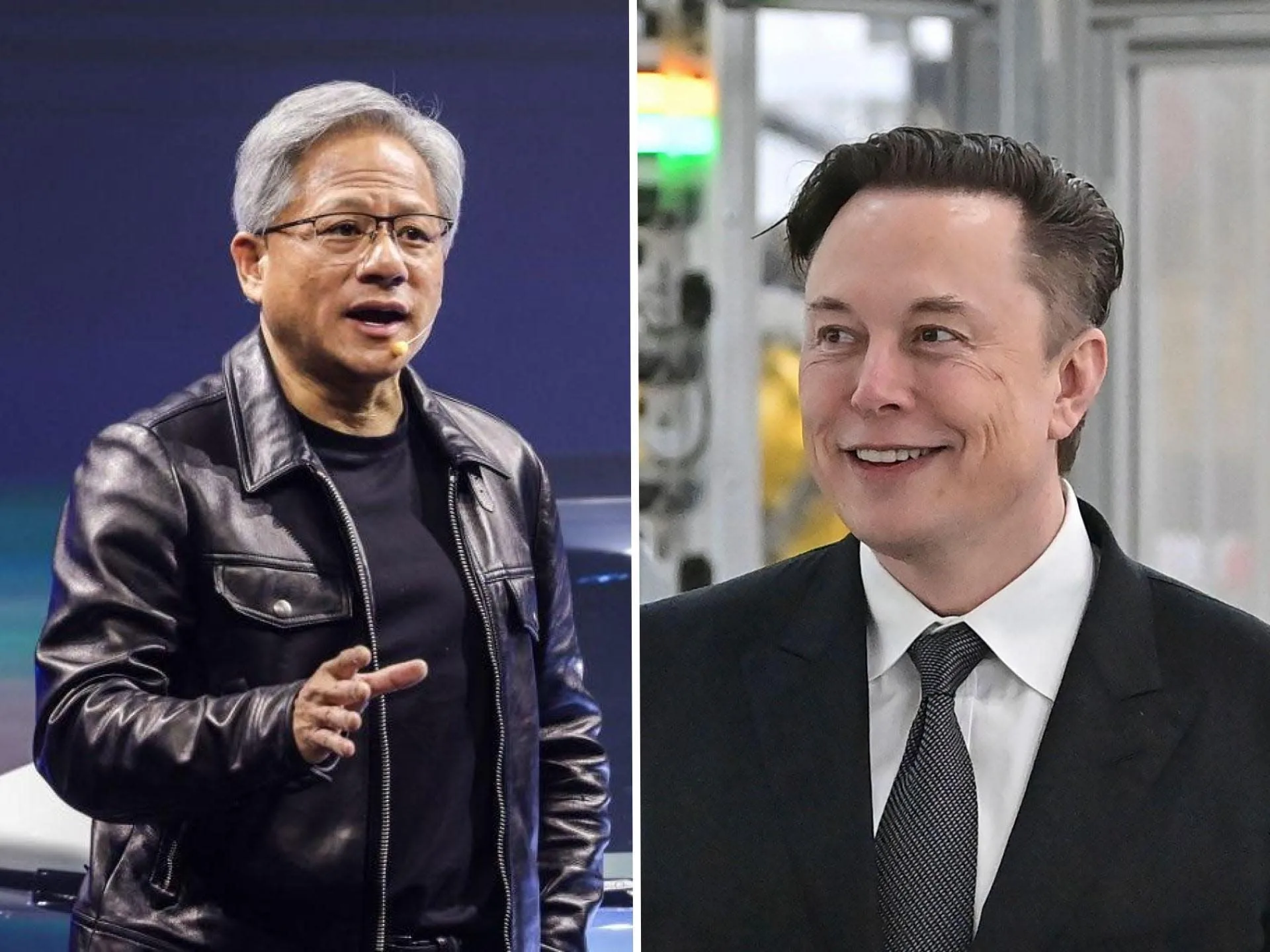

In a statement shared by AI researcher Rohan Paul, Elon Musk explained the fundamental driver behind Tesla's plan to build its own massive semiconductor fabrication facility, dubbed the "Terafab." According to Musk, the current global capacity for chip fabrication can supply only about 2% of the compute hardware Tesla would need for its AI ambitions, particularly for autonomous driving and robotics.

Musk's logic is straightforward: even if existing suppliers like TSMC, Samsung, or Intel expanded their production capacity, and even if Tesla bought every chip they could make, supply would still fall drastically short of the company's projected demand. This supply gap makes a dedicated, in-house fabrication facility (a terafab) a strategic necessity, not merely an option.
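To make the size of that gap concrete, here is a minimal back-of-the-envelope sketch. It takes the roughly 2% figure attributed to Musk at face value and assumes, purely for illustration, that Tesla's demand stays fixed and that supply scales linearly with fab capacity. The function names and the expansion factors are hypothetical and do not come from Tesla or Musk.

```python
def required_multiple(current_share: float) -> float:
    """How many times today's capacity would be needed to meet demand in full."""
    return 1.0 / current_share


def coverage_after_expansion(current_share: float, expansion_factor: float) -> float:
    """Share of demand met if existing capacity grows by expansion_factor (capped at 100%)."""
    return min(1.0, current_share * expansion_factor)


# Figure attributed to Musk in the article: current capacity covers about 2% of need.
CURRENT_SHARE = 0.02

print(f"Capacity multiple needed: {required_multiple(CURRENT_SHARE):.0f}x")

# Hypothetical expansion scenarios, chosen only for illustration.
for factor in (2, 5, 10):
    share = coverage_after_expansion(CURRENT_SHARE, factor)
    print(f"{factor}x expansion covers {share:.0%} of demand")
```

Under these assumptions, the 2% figure implies a need for about 50 times today's capacity, and even a tenfold industry expansion would cover only about a fifth of the stated demand.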

Context

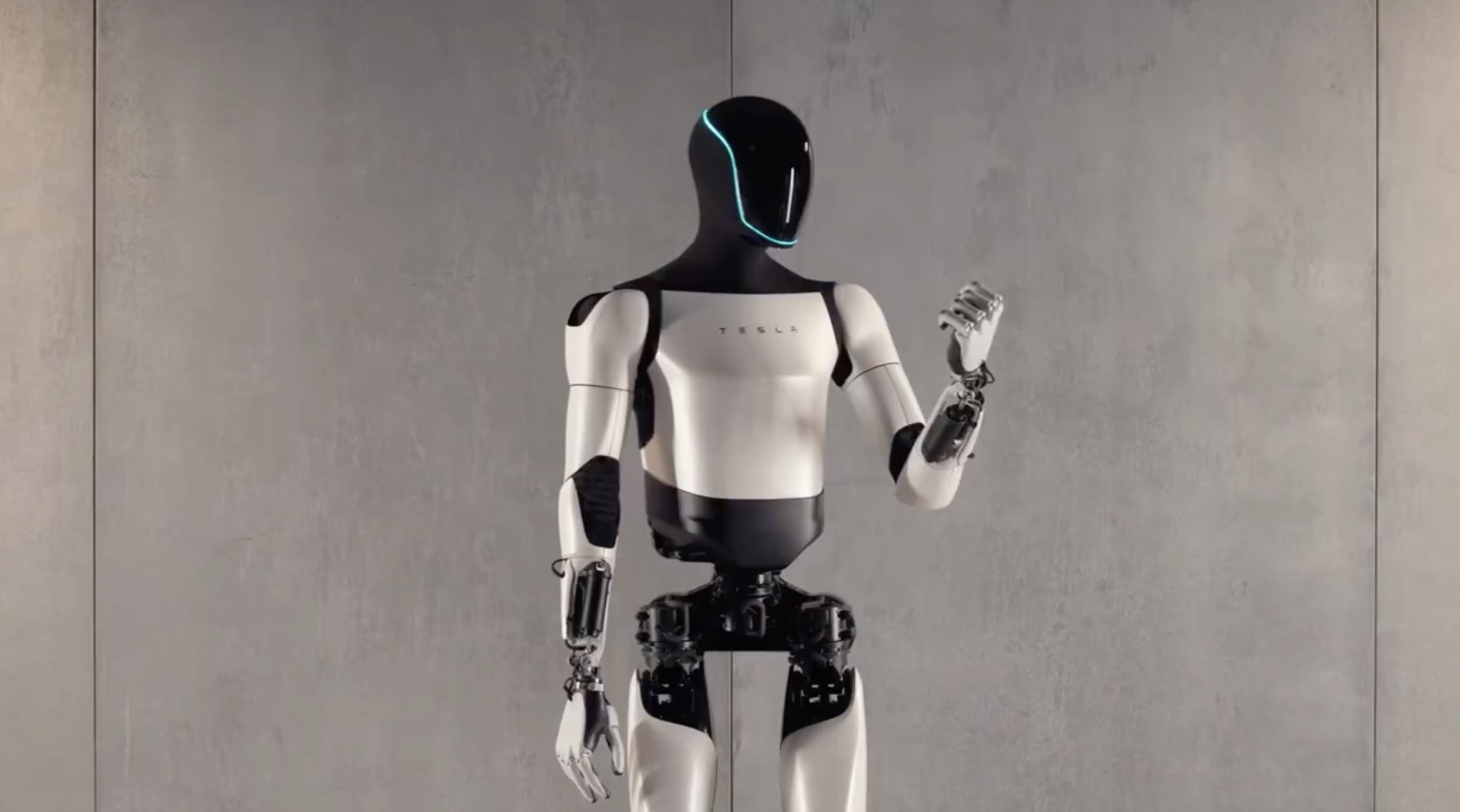

This statement aligns with Tesla's long-standing vertical integration strategy and its escalating compute needs. The company's Full Self-Driving (FSD) development relies heavily on massive AI training clusters. Tesla has already developed its own AI inference chip (the FSD Computer) and its Dojo supercomputer platform, which uses custom D1 training chips. Building a fab represents the next, most capital-intensive step in bringing the entire silicon supply chain in-house.

Musk's "terafab" concept suggests a facility designed for unprecedented scale, likely targeting hundreds of thousands of wafer starts per month, beyond the capacity of a typical "gigafab" (TSMC's term for a fab with capacity above 100,000 12-inch wafers per month). The move pits Tesla not only against other automakers but also against tech giants like Google, Amazon, and Microsoft, which also design custom AI chips but largely rely on third-party fabs for manufacturing.

Key Implication: The primary bottleneck for scaling advanced AI is shifting from algorithmic innovation and data availability to physical hardware manufacturing capacity. Tesla's project highlights that the industry's growth is now constrained by the slow, expensive, and geopolitically sensitive semiconductor supply chain.