Key Takeaways

- Google DeepMind rolled out two research agents built on Gemini 3.1 Pro, Deep Research and Deep Research Max, that autonomously research both the open web and proprietary data through the Gemini API.

- The Max variant spends extended test-time compute to produce more thorough reports, delivered asynchronously.

What's New

Google DeepMind has launched two autonomous research agents built on the Gemini 3.1 Pro model: Deep Research and Deep Research Max. Both are now in public preview through the paid tiers of the Gemini API, targeting developers who need to automate heavy-duty research workflows.

The standard Deep Research agent replaces the preview version Google released in December 2025, offering higher quality, lower latency, and reduced cost. It's designed for real-time interactions where speed matters, such as chat interfaces.

Deep Research Max takes the opposite approach, prioritizing depth over speed. It uses extended test-time compute to reason, search, and iterate on its final report. Google positions it for asynchronous background tasks, such as an overnight cron job that produces a full due diligence report by morning.

Technical Details

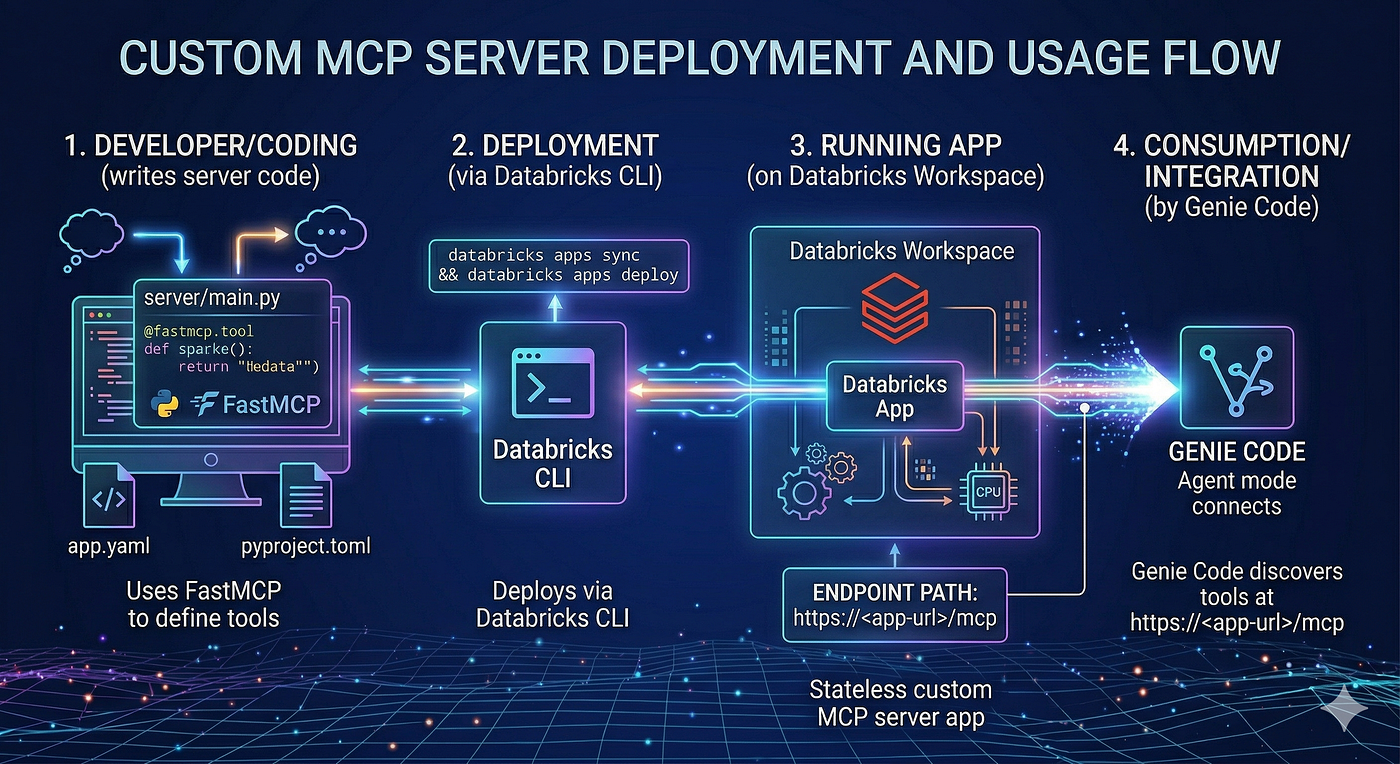

Both agents support the Model Context Protocol (MCP), enabling connections to proprietary data sources. For the first time in Deep Research, developers can plug in financial feeds and other specialized data streams through MCP, extending research beyond the open web.
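To make the MCP connection more concrete: MCP messages use JSON-RPC 2.0 framing, and a client invokes a tool exposed by an MCP server with the `tools/call` method. The sketch below builds such a request for a hypothetical financial-data server. The tool name `get_quarterly_filings` and its arguments are invented for illustration, and the announcement does not describe how Deep Research issues these calls internally.

```python
import json

def mcp_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Build an MCP `tools/call` request serialized as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",         # MCP uses JSON-RPC 2.0 message framing
        "id": request_id,         # lets the client match the server's response to this request
        "method": "tools/call",   # MCP method for invoking a tool the server exposes
        "params": {
            "name": tool_name,    # hypothetical tool on a hypothetical financial-data server
            "arguments": arguments,
        },
    }
    return json.dumps(message)

# Hypothetical request: pull the last four quarters of filings for a placeholder ticker.
request = mcp_tool_call(1, "get_quarterly_filings", {"ticker": "ACME", "quarters": 4})
print(request)
```

The server would list its available tools (and their argument schemas) in response to a `tools/list` request, so an agent can discover a feed like this one rather than having it hard-coded.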

The key differentiator is compute allocation:

- Deep Research: Optimized for low latency, lower cost, and real-time responses

- Deep Research Max: Uses extended test-time compute for deeper reasoning and more thorough sourcing
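In practice, this split suggests a simple routing rule: send interactive or short-deadline work to the standard agent, and background work with a generous deadline to Max. The sketch below assumes that rule; the agent labels `deep-research` and `deep-research-max` are placeholders rather than real Gemini API identifiers, and the five-minute cutoff is an arbitrary example threshold.

```python
from dataclasses import dataclass

# Placeholder labels only; not actual Gemini API agent identifiers.
STANDARD_AGENT = "deep-research"
MAX_AGENT = "deep-research-max"

@dataclass
class ResearchTask:
    query: str
    user_waiting: bool        # True for chat-style requests where someone is waiting on screen
    deadline_minutes: float   # how long the caller can wait for the report

def pick_agent(task: ResearchTask, interactive_cutoff_minutes: float = 5.0) -> str:
    """Route latency-sensitive work to the standard agent, everything else to Max."""
    if task.user_waiting or task.deadline_minutes <= interactive_cutoff_minutes:
        return STANDARD_AGENT
    return MAX_AGENT

print(pick_agent(ResearchTask("Quick market summary", user_waiting=True, deadline_minutes=2)))
# → deep-research
print(pick_agent(ResearchTask("Full due diligence on ACME", user_waiting=False, deadline_minutes=480)))
# → deep-research-max
```

Keying the decision on the caller's deadline rather than on the query text keeps the rule predictable; a real system might also weigh per-query cost once Max pricing is published.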

A single API call kicks off the full research workflow and returns a fully sourced analysis; the agent autonomously searches, reasons, and compiles the report.
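For long-running Max jobs, a caller typically starts the work once and then checks back until the report is ready. The sketch below shows that start-then-poll pattern against a hypothetical `ResearchClient` interface; the `start` and `status` methods, the `"done"`/`"failed"` states, and the result fields are all assumptions, not the Gemini SDK's actual API. A small in-memory `FakeClient` stands in for the real service so the example runs offline.

```python
import time
from typing import Protocol

class ResearchClient(Protocol):
    """Hypothetical interface; not the Gemini SDK's real API."""
    def start(self, agent: str, query: str) -> str: ...
    def status(self, job_id: str) -> dict: ...

def run_research(client: ResearchClient, agent: str, query: str,
                 poll_seconds: float = 30.0, timeout_seconds: float = 8 * 3600) -> dict:
    """Start one research job, then poll until it finishes, fails, or times out."""
    job_id = client.start(agent, query)            # the single call that kicks off the workflow
    deadline = time.monotonic() + timeout_seconds  # assumed overnight budget of eight hours
    while time.monotonic() < deadline:
        result = client.status(job_id)
        if result["state"] == "done":
            return result                          # e.g. report text plus its sources
        if result["state"] == "failed":
            raise RuntimeError(f"research job {job_id} failed: {result.get('error')}")
        time.sleep(poll_seconds)                   # wait before the next status check
    raise TimeoutError(f"research job {job_id} did not finish within {timeout_seconds}s")

class FakeClient:
    """In-memory stand-in that reports 'done' after a fixed number of status checks."""
    def __init__(self, polls_until_done: int = 3):
        self.remaining = polls_until_done
        self.query = ""

    def start(self, agent: str, query: str) -> str:
        self.query = query
        return "job-1"

    def status(self, job_id: str) -> dict:
        self.remaining -= 1
        if self.remaining > 0:
            return {"state": "running"}
        return {"state": "done", "report": f"Report on {self.query}",
                "sources": ["https://example.com"]}

result = run_research(FakeClient(), "deep-research-max", "ACME due diligence", poll_seconds=0.01)
print(result["report"])  # → Report on ACME due diligence
```

Polling keeps the client stateless between checks, which suits a cron-triggered job; if the real service offers a callback or webhook, that would avoid repeated status requests.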

How It Compares

Google's benchmarks show Deep Research Max with a significant jump on retrieval and reasoning tasks compared to its predecessor. However, the comparison with competitors is nuanced.

Google's comparison reportedly omitted OpenAI's GPT-5.4 Pro, which scores highest on BrowseComp. Anthropic also reports higher scores for Opus 4.6 than Google shows, noting that the model performed better when not run at high reasoning intensity.

These gaps likely stem from differences in testing methodology, such as whether models were evaluated through the raw API or wrapped in each lab's own tooling. Google's numbers aren't necessarily wrong, but they merit cautious interpretation.

What to Watch

Key limitations and caveats:

- Benchmark transparency: Google did not disclose BrowseComp scores for Deep Research Max, making direct comparison difficult

- Pricing: Not yet detailed for the Max tier beyond its availability through the paid Gemini API tiers

- Real-world performance: How early benchmark results translate to production use cases remains unverified

- MCP adoption: Developer uptake of the Model Context Protocol will determine how useful proprietary data integration becomes

gentic.news Analysis

This launch follows a pattern we've tracked closely at gentic.news. Google's previous Deep Research preview in December 2025 was a narrower tool; this update represents a meaningful leap in capability by adding MCP support and the Max variant. The timing aligns with the broader industry trend toward agentic AI systems that can execute multi-step workflows autonomously.

Notably, Google is positioning these agents as developer tools via API, not just consumer features. This mirrors Anthropic's strategy with Opus 4.6 and OpenAI's strategy with GPT-5.4 Pro, both of which offer API-level access to research capabilities. The MCP integration is particularly interesting: it's a bet that proprietary data sources, not just web search, will be the killer app for research agents.

However, the benchmark opacity is disappointing. Without disclosed BrowseComp scores for Deep Research Max, practitioners can't rigorously compare it against GPT-5.4 Pro (89.3%) or Opus 4.6 (84%). Google's omission of OpenAI's strongest search model from its comparisons raises questions about how confident Google is in its own numbers. For developers evaluating which API to build on, this lack of transparency is a real friction point.

Frequently Asked Questions

What is Deep Research Max?

Deep Research Max is Google's new AI agent built on Gemini 3.1 Pro that autonomously conducts in-depth research across the web and proprietary data sources. It uses extended test-time compute to produce thorough, sourced reports asynchronously.

How does Deep Research Max compare to OpenAI's GPT-5.4 Pro?

Google has not disclosed BrowseComp scores for Deep Research Max. OpenAI's GPT-5.4 Pro scores 89.3% on BrowseComp, while GPT-5.4 scores 82.7%. Direct comparison is difficult due to different testing methodologies.

What is the Model Context Protocol (MCP)?

MCP is an open protocol that lets AI agents connect to external tools and data sources, including proprietary ones such as financial feeds. Both Deep Research and Deep Research Max support MCP, which for the first time lets Deep Research work beyond the open web.

How much does Deep Research Max cost?

Google has not announced specific pricing for Deep Research Max yet. It is available through the paid tiers of the Gemini API, but per-query costs have not been detailed.