Google researchers have introduced TurboQuant, a novel compression technique that reduces the memory footprint of Large Language Model inference by 6x without sacrificing accuracy. The method specifically targets the Key-Value (KV) cache—the "notebook" where LLMs store conversation history—which can consume up to 16GB of GPU memory in lengthy conversations.

What TurboQuant Solves: The Memory Bottleneck

When ChatGPT or similar models generate responses, they maintain a growing KV cache that holds the attention key and value vectors for every token in the conversation history. This memory requirement scales linearly with context length: a 100,000-word conversation can demand approximately 16GB of GPU memory, a sizable share of the 80GB available on a high-end GPU like the H100.
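The linear scaling can be made concrete with a short calculation. The sketch below is illustrative only: the layer count, head count, head dimension, and 16-bit storage are assumptions describing a hypothetical 7B-class model, not figures from the paper.

```python
def kv_cache_bytes(num_tokens, num_layers, num_kv_heads, head_dim, bits_per_value):
    """Bytes needed to cache keys and values for `num_tokens` tokens."""
    # Factor of 2: one key vector and one value vector per token, layer, and head.
    values_per_token = 2 * num_layers * num_kv_heads * head_dim
    return num_tokens * values_per_token * bits_per_value // 8


# Hypothetical 7B-class configuration (assumed, not taken from the article).
config = dict(num_layers=32, num_kv_heads=32, head_dim=128)

GIB = 2**30
for tokens in (8_192, 32_768, 131_072):
    size = kv_cache_bytes(tokens, bits_per_value=16, **config)
    print(f"{tokens:>7} tokens: {size / GIB:.0f} GiB at 16 bits per value")
```

Under these assumptions the cache costs 0.5 MiB per token, so 32,768 tokens already occupy 16 GiB, and every doubling of the context doubles the bill.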

"This is the #1 cost of running AI," notes AI commentator Lior OnAI. "Not the thinking. The remembering." As context windows expand toward millions of tokens, this memory bottleneck becomes increasingly prohibitive for real-world deployment.

How TurboQuant Works: PolarQuant + QJL

TurboQuant combines two techniques to reach extreme compression ratios with no reported loss in accuracy:

- PolarQuant: Randomly rotates numerical representations so they land on a predictable distribution curve, making them more compressible

- QJL (Quantized Johnson-Lindenstrauss): A 1-bit random-projection sketch that uses one extra bit to correct residual quantization errors that remain after compression
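A minimal sketch of the two-stage idea follows. It is not the paper's implementation: the uniform scalar quantizer stands in for whatever codebook the authors use, the 2-bit plus 1-bit split and the head dimension of 128 are assumptions, and all function names are hypothetical. The residual correction uses the standard sign-projection identity for Gaussian vectors.

```python
import numpy as np

def random_rotation(dim, rng):
    """Random orthogonal matrix from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.standard_normal((dim, dim)))
    return q * np.sign(np.diag(r))  # sign fix makes the matrix uniformly distributed

def quantize_uniform(x, bits):
    """Uniform scalar quantizer on [-max|x|, max|x|] (a stand-in codebook)."""
    levels = 2**bits
    scale = np.abs(x).max()
    codes = np.clip(np.floor((x / scale + 1) / 2 * levels), 0, levels - 1)
    return codes.astype(np.uint8), scale

def dequantize_uniform(codes, scale, bits):
    """Map each code back to the center of its bin."""
    return ((codes + 0.5) / 2**bits * 2 - 1) * scale

def qjl_correction(sign_bits, residual_norm, S, query):
    """Estimate <query, residual> from 1-bit signs of a Gaussian projection."""
    m = S.shape[0]
    return np.sqrt(np.pi / 2) * residual_norm / m * np.dot(sign_bits, S @ query)

rng = np.random.default_rng(0)
d = 128                                   # head dimension (assumed)
key, query = rng.standard_normal(d), rng.standard_normal(d)

# Stage 1: rotate, then quantize each coordinate to 2 bits.
R = random_rotation(d, rng)
codes, scale = quantize_uniform(R @ key, bits=2)
key_hat = R.T @ dequantize_uniform(codes, scale, bits=2)

# Stage 2: keep only the signs of a random projection of the residual (1 bit each).
residual = key - key_hat
residual_norm = np.linalg.norm(residual)
S = rng.standard_normal((d, d))
sign_bits = np.sign(S @ residual)

estimate = query @ key_hat + qjl_correction(sign_bits, residual_norm, S, query)
print("exact  :", query @ key)
print("stage 1:", query @ key_hat)
print("stage 2:", estimate)
```

The stage 2 correction is unbiased when averaged over random projections, which is what removes the systematic error of stage 1. A single draw is still noisy, so on any one query the corrected estimate is not guaranteed to land closer to the exact value.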

The technique reduces each 32-bit floating point value in the KV cache to just 3 bits—comparable to replacing a full paragraph with three words while preserving meaning. Crucially, TurboQuant requires no model retraining and works instantly on any existing LLM.
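To see what 3 bits per value means in storage terms, the sketch below packs 3-bit codes into bytes with NumPy and reports the raw ratio against 16-bit and 32-bit baselines. The helper names are hypothetical, and the ratios ignore any per-vector metadata such as scales or norms, so they are upper bounds on what a real cache would achieve.

```python
import numpy as np

def pack_3bit(codes):
    """Pack integer codes in [0, 7] into a byte array, 3 bits per code."""
    codes = np.asarray(codes, dtype=np.uint8)
    bits = np.unpackbits(codes[:, None], axis=1)[:, -3:]  # keep the 3 low-order bits
    return np.packbits(bits.ravel())                      # zero-pads the final byte

def unpack_3bit(packed, count):
    """Recover `count` codes from the output of pack_3bit."""
    bits = np.unpackbits(packed)[: count * 3].reshape(count, 3)
    return bits @ np.array([4, 2, 1], dtype=np.uint8)

codes = np.array([0, 7, 3, 5, 1, 6, 2, 4], dtype=np.uint8)
packed = pack_3bit(codes)
assert np.array_equal(unpack_3bit(packed, len(codes)), codes)

print(len(packed), "bytes for", len(codes), "values")
for baseline_bits in (16, 32):
    print(f"{baseline_bits}-bit baseline: {baseline_bits / 3:.1f}x smaller")
```

Eight values fit in three bytes. The raw ratio is about 5.3x against 16-bit storage and about 10.7x against 32-bit storage, so the headline figure depends on which baseline precision is being compared.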

Performance Results: Memory, Speed, and Accuracy

The research paper, presented at ICLR 2024, reports:

| Metric | Baseline | TurboQuant | Improvement |
| --- | --- | --- | --- |
| KV Cache Memory | 16 GB | < 3 GB | 6x reduction |
| Inference Speed (H100) | Baseline | 8x faster | 8x speedup |
| Search Indexing Time | 500 seconds | 0.001 seconds | 500,000x faster |
| Model Accuracy | Baseline | Identical | Zero loss |

TurboQuant achieves compression within 2.7x of the theoretical mathematical limit for lossless compression, indicating the approach is nearing optimal efficiency.

Practical Implications: Cost and Accessibility

The memory reduction translates directly to cost savings: over 80% reduction in GPU memory costs for LLM inference. This dramatically lowers the barrier to deployment:

- Models requiring $200,000 server clusters can now run on single $2,000 GPUs

- AI agents can operate 24/7 without prohibitive infrastructure costs

- Longer context windows become economically feasible for production systems
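The "over 80%" figure follows from the 6x ratio by simple arithmetic, as the sketch below shows. The 64 GB memory budget is a hypothetical number chosen for illustration, and the saving applies only to the KV cache, not to the model weights.

```python
def memory_saved(compression_ratio):
    """Fraction of memory freed when data shrinks by `compression_ratio`."""
    return 1 - 1 / compression_ratio

def sessions_per_gpu(budget_gb, cache_gb, compression_ratio=1):
    """Whole number of conversations whose KV caches fit in `budget_gb`."""
    return int(budget_gb * compression_ratio // cache_gb)

print(f"{memory_saved(6):.1%} of KV cache memory saved")
print(sessions_per_gpu(64, 16), "->", sessions_per_gpu(64, 16, 6), "sessions")
```

With 64 GB set aside for caches, a 16 GB conversation fits 4 times uncompressed and 24 times at 6x compression.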

"The race is no longer about bigger models," observes Lior OnAI. "It's about cheaper inference. The companies that win won't just have the best models. They'll have the best compression."

Technical Implementation Details

TurboQuant operates at the inference stage, intercepting and compressing the KV cache before it's stored in GPU memory. The 3-bit representations are decompressed on-the-fly during attention computation, adding minimal computational overhead. The method is model-agnostic, working with Transformer architectures regardless of size or training methodology.
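The compress-on-write, decompress-on-read flow can be sketched as a small cache class. This is a simplified illustration under stated assumptions: the class and method names are hypothetical, it handles a single attention head, it uses a plain uniform quantizer rather than TurboQuant's scheme, and it stores each 3-bit code in a full byte for readability instead of packing bits.

```python
import numpy as np

class QuantizedKVCache:
    """Stores keys and values as low-bit codes plus one float scale per vector."""

    def __init__(self, bits=3):
        self.levels = 2**bits
        self.k_codes, self.k_scales = [], []
        self.v_codes, self.v_scales = [], []

    def _quantize(self, x):
        scale = float(np.abs(x).max()) or 1.0  # avoid dividing by zero
        codes = np.floor((x / scale + 1) / 2 * self.levels)
        return np.clip(codes, 0, self.levels - 1).astype(np.uint8), scale

    def _dequantize(self, codes, scales):
        # codes: (tokens, dim), scales: (tokens,)
        return ((codes + 0.5) / self.levels * 2 - 1) * scales[:, None]

    def append(self, key, value):
        """Compress one token's key and value before storing them."""
        for vec, codes, scales in ((key, self.k_codes, self.k_scales),
                                   (value, self.v_codes, self.v_scales)):
            c, s = self._quantize(vec)
            codes.append(c)
            scales.append(s)

    def attend(self, query):
        """Single-head attention over the cache, decompressing on the fly."""
        keys = self._dequantize(np.stack(self.k_codes), np.array(self.k_scales))
        values = self._dequantize(np.stack(self.v_codes), np.array(self.v_scales))
        scores = keys @ query / np.sqrt(query.shape[0])
        weights = np.exp(scores - scores.max())  # numerically stable softmax
        weights /= weights.sum()
        return weights @ values

rng = np.random.default_rng(0)
cache = QuantizedKVCache(bits=3)
for _ in range(16):
    cache.append(rng.standard_normal(64), rng.standard_normal(64))
out = cache.attend(rng.standard_normal(64))
print(out.shape)  # (64,)
```

Only the codes and scales persist between decoding steps. The full-precision keys and values exist briefly inside `attend`, which is where the decompression overhead mentioned above would be paid.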

The open-access paper includes complete implementation details and has been peer-reviewed for ICLR 2024. Peer review supports the soundness of the methodology, though independent reproduction would be needed to confirm the reported results.

gentic.news Analysis

TurboQuant represents a significant shift in the LLM optimization landscape. While most research has focused on model architecture improvements or training efficiency, Google is attacking the increasingly critical inference cost problem. This aligns with our previous coverage of Speculative Decoding techniques and Mixture of Experts architectures—all approaches aimed at making LLM inference more practical at scale.

The timing is particularly noteworthy given the industry's push toward million-token context windows. As we reported in our analysis of Claude 3's 200K context, memory requirements grow linearly with context length, creating an unsustainable cost curve. TurboQuant directly addresses this by cutting the memory needed per token by roughly 6x: growth remains linear in context length, but the slope is far shallower.

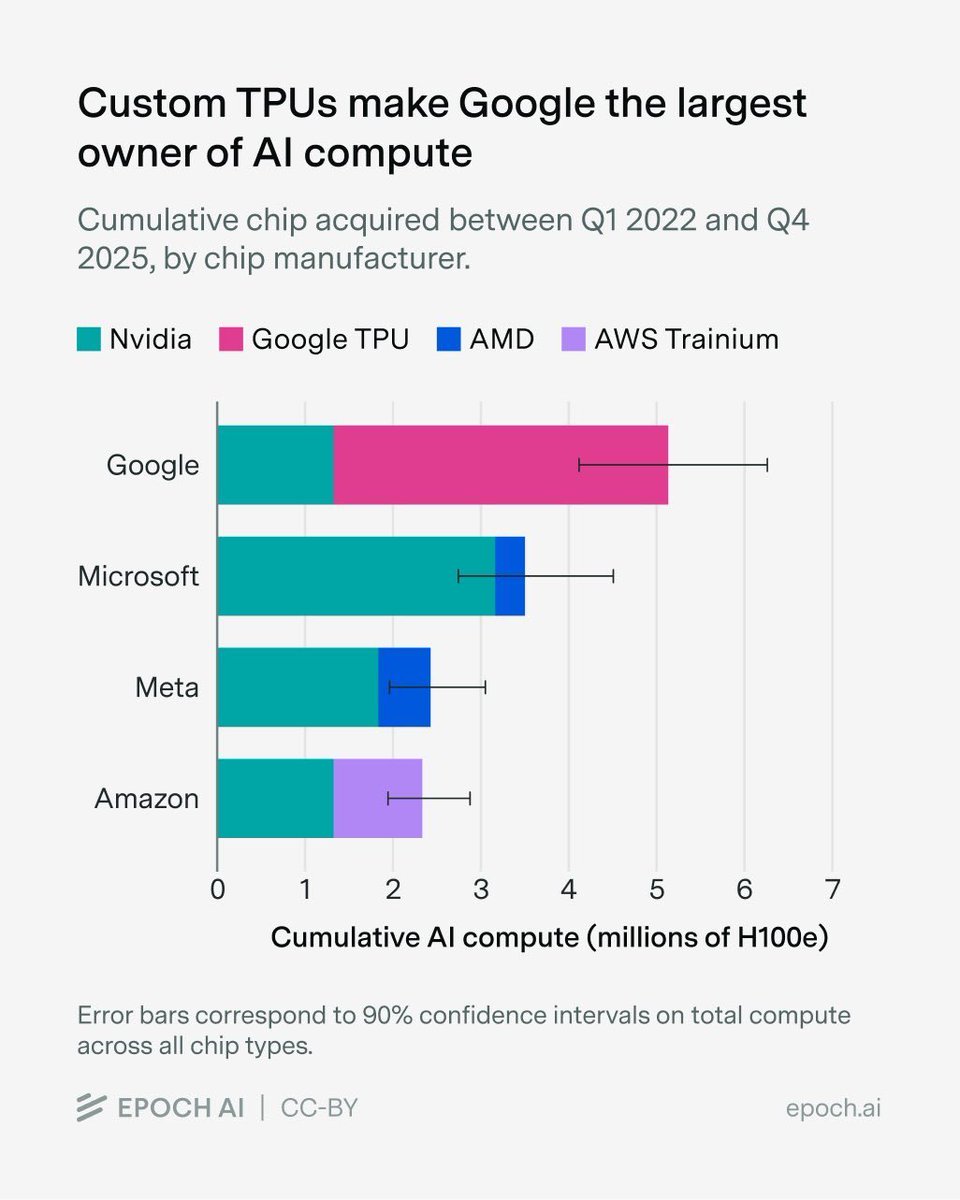

From a competitive standpoint, this positions Google favorably against OpenAI and Anthropic, both of whom have invested heavily in longer-context models. If Google can deploy TurboQuant across its Gemini models while maintaining accuracy, it could achieve significant cost advantages in the inference-as-a-service market. This follows Google's pattern of infrastructure-first innovations, similar to their earlier work on Pathways architecture and TPU optimizations.

Practitioners should note that while TurboQuant shows impressive results, real-world deployment will require careful integration with existing inference systems. The 8x speedup on H100 GPUs suggests substantial hardware optimization, and performance on consumer-grade hardware may differ. Nevertheless, the 6x memory reduction alone could enable new applications previously limited by GPU memory constraints.

Frequently Asked Questions

How does TurboQuant compare to traditional quantization methods?

Traditional quantization typically reduces precision uniformly (e.g., from 32-bit to 8-bit) and often requires retraining or fine-tuning to recover accuracy losses. TurboQuant uses a two-stage approach (PolarQuant + QJL) that preserves accuracy without retraining and achieves more aggressive compression (3-bit vs typical 8-bit).

Can TurboQuant be combined with other optimization techniques?

Yes, the researchers note that TurboQuant is complementary to other inference optimizations like speculative decoding, pruning, and distillation. Since it operates specifically on the KV cache, it can be layered with model compression techniques that reduce parameter counts.

What models does TurboQuant work with?

The paper demonstrates results on Transformer-based architectures, including GPT-style models. The method is model-agnostic and should work with any LLM that uses attention mechanisms with KV caching. The researchers tested on multiple model sizes from 7B to 70B parameters.

Is TurboQuant available for implementation now?

The research paper is open-access on arXiv and includes implementation details. However, production-ready implementations would need to be developed by inference engine providers (like vLLM, TensorRT-LLM, or Hugging Face) or cloud providers. Given Google's track record, we might see integration into Google Cloud's AI offerings first.