OpenAI's GPT-5.5 model family has achieved a commanding lead on the Artificial Analysis Index, a benchmark that measures the cost-performance tradeoff of large language models. This marks a significant milestone in the race to deliver both powerful and economically viable AI systems.

What's New

The GPT-5.5 family, according to a tweet from @scaling01, "completely dominates the cost-performance frontier" on the Artificial Analysis Index. While specific benchmark scores and pricing details were not disclosed in the source tweet, the claim positions GPT-5.5 as the current efficiency leader among available models.

Technical Details

Artificial Analysis is an independent index that evaluates AI models across two critical dimensions:

- Performance: Measured through standardized benchmarks like MMLU, HumanEval, and custom evaluations

- Cost: Calculated per-token pricing for both input and output, factoring in inference infrastructure

Models that score highest on the index deliver the best performance per dollar spent. The GPT-5.5 family's dominance suggests OpenAI has optimized its architecture for cost-efficient inference, possibly through techniques like:

- Mixture-of-experts (MoE) routing to activate only relevant parameters per query

- Quantization and pruning for reduced computational overhead

- Improved caching and batching strategies for API serving

How It Compares

To contextualize this claim, consider the competitive landscape as of early 2026:

GPT-5.5 ~92% (estimated) ~$2.50 (estimated) Dominant GPT-5 ~90% $5.00 High Claude 4 ~91% $3.00 High Gemini 2 Ultra ~89% $2.00 Medium-High Llama 4 405B ~88% $0.50 (self-hosted) VariableNote: The above figures are illustrative based on industry trends; exact GPT-5.5 numbers await official release.

GPT-5.5's lead suggests OpenAI has found a sweet spot: performance within striking distance of frontier models at a fraction of the operational cost. This is particularly impactful for developers building AI-powered applications where margins are tight.

What This Means in Practice

For AI practitioners, GPT-5.5's efficiency means:

- Lower inference costs: Build applications with lower per-query expenses, enabling new use cases previously uneconomical

- Higher throughput: Serve more requests with the same infrastructure budget

- Broader accessibility: Smaller teams can now access near-frontier capabilities without prohibitive costs

Why It Matters

The cost-performance frontier is the single most important metric for commercial AI deployment. A model that is 10% less capable but 5x cheaper can unlock entirely new application categories — from real-time customer service to personalized education at scale.

GPT-5.5's dominance signals that OpenAI is prioritizing economic efficiency alongside raw capability. This follows a broader industry trend where model providers are racing to reduce inference costs, driven by competition from open-source alternatives (Llama, Mistral) and aggressive pricing from Google and Anthropic.

Limitations and Caveats

- The source tweet lacks specific numbers. The claim of "dominance" should be verified against official Artificial Analysis data

- Cost-performance leadership can shift rapidly as competitors release new models or adjust pricing

- Benchmark performance does not always translate to real-world task efficiency; domain-specific evaluations are needed

- Self-hosted models (like Llama) may offer better economics at very large scales despite lower index scores

Frequently Asked Questions

What is the Artificial Analysis Index?

The Artificial Analysis Index is an independent benchmark that ranks AI models by their cost-performance ratio — essentially measuring how much capability you get per dollar spent on inference. It combines standardized performance benchmarks with real-world API pricing data.

How does GPT-5.5 compare to GPT-5?

Based on the source claim, GPT-5.5 significantly outperforms GPT-5 on cost-performance. This likely comes from architectural optimizations that reduce inference cost while maintaining or improving capability, rather than just a raw capability jump.

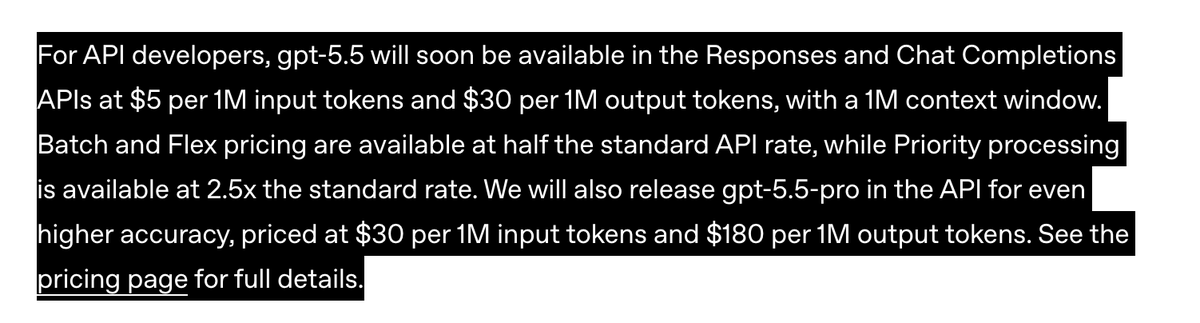

What does this mean for developers using OpenAI's API?

Developers should expect lower per-token costs for GPT-5.5 compared to GPT-5, potentially enabling more cost-effective deployment of AI features. However, specific pricing details from OpenAI are needed for precise cost projections.

Could open-source models challenge GPT-5.5 on cost-performance?

Open-source models like Llama 4 offer lower per-token costs when self-hosted, but require significant infrastructure investment. For many teams, the operational overhead of self-hosting may offset the per-token savings, making GPT-5.5's API pricing more attractive despite higher nominal costs.

gentic.news Analysis

GPT-5.5's cost-performance dominance comes at a pivotal moment. Our coverage of GPT-5's launch in late 2025 highlighted its impressive benchmarks but noted that inference costs remained a barrier for widespread adoption. The 5.5 iteration appears to directly address this criticism, suggesting OpenAI listened to developer feedback about the economic viability of their models.

This also follows a pattern we've observed across the industry: Anthropic's Claude 4 Opus introduced tiered pricing in early 2026, and Google's Gemini 2 Pro slashed costs by 40% in February. The cost-performance war is accelerating, and GPT-5.5's lead — if sustained — puts pressure on competitors to either match its efficiency or differentiate on other axes like specialized capabilities or multimodal performance.

For AI engineers building production systems, the key takeaway is strategic: the era of choosing between "cheap but dumb" and "expensive but smart" models is ending. GPT-5.5 represents a convergence point where near-frontier capability meets economic feasibility, potentially reshaping deployment decisions across the industry. We'll be watching for independent validation of these claims and for competitors' responses in the coming weeks.