What Happened

A new research paper proposes "Stateless Decision Memory for Enterprise AI Agents," addressing a critical bottleneck in production-grade agent deployments: the inability to scale stateful agents horizontally. The work, highlighted by AI researcher @omarsar0, focuses on the plumbing of agent systems rather than raw capability, emphasizing auditability, fault tolerance, and container-native deployment.

The Problem: Stateful Agents Don't Scale

In enterprise environments, agents are often stateful, meaning each instance maintains its own persistent memory (e.g., conversation history, context, decision state). This works fine for a handful of agents, but when you need thousands of concurrent instances running across containers, per-agent state becomes the bottleneck. Stateful agents cannot scale horizontally because each instance carries its own baggage, making load balancing, fault recovery, and resource allocation complex.

The Solution: Immutable Decision Logs

The paper proposes replacing active memory with immutable decision logs using event-sourcing principles from distributed systems. Under this model:

- Any agent instance can reconstruct context by replaying the log.

- Decision logic stays separate from storage.

- Agents become stateless compute units that read from a shared, append-only log.

This approach is inspired by event sourcing, a pattern used in distributed databases and microservices architectures. Instead of storing the current state, the system records every decision as an immutable event. When an agent needs context, it replays the relevant events to reconstruct the state. This makes agents stateless from a compute perspective, enabling horizontal scaling: any instance can pick up any task by reading the log.

Why It Matters for Enterprise

For enterprises deploying AI agents in regulated environments, this approach addresses three key requirements:

Auditability: Every decision is recorded as an immutable log entry, providing a complete audit trail. This is essential for compliance with regulations like GDPR, HIPAA, or financial reporting standards.

Fault Tolerance: If an agent crashes, another instance can reconstruct its state from the log. No state is lost because the log is persistent.

Container-Native Deployment: Stateless agents fit naturally into container orchestration platforms like Kubernetes. They can be scaled up or down based on demand, without worrying about state affinity.

Context: The State of Agent Memory Research

The paper stands out because most agent memory research focuses on capability (e.g., how to store and retrieve information better). This work is about the operational side: how to make agents work reliably at scale. It's a reminder that production deployments often require different tradeoffs than research prototypes.

What This Means in Practice

For teams building enterprise AI agents, this approach means:

- You can scale agents horizontally without custom state management solutions.

- You get a built-in audit trail for every decision.

- You can use standard container orchestration tools without special handling for stateful agents.

gentic.news Analysis

This paper addresses a pain point that many in the AI agent community have been feeling but few have articulated: the operational complexity of stateful agents. While the community is rightly excited about agent capabilities—tool use, planning, memory retrieval—the reality of deploying these systems in production is often glossed over. This work brings much-needed attention to the infrastructure layer.

The event-sourcing approach is not new in distributed systems (it's been used in databases like Apache Kafka and event-driven architectures for years), but applying it to agent memory is novel. It suggests that the future of enterprise agents may look more like microservices than monolithic chatbots. This aligns with broader trends we've seen in the industry: companies are moving away from single-agent architectures toward multi-agent systems with specialized roles, where statelessness becomes even more critical.

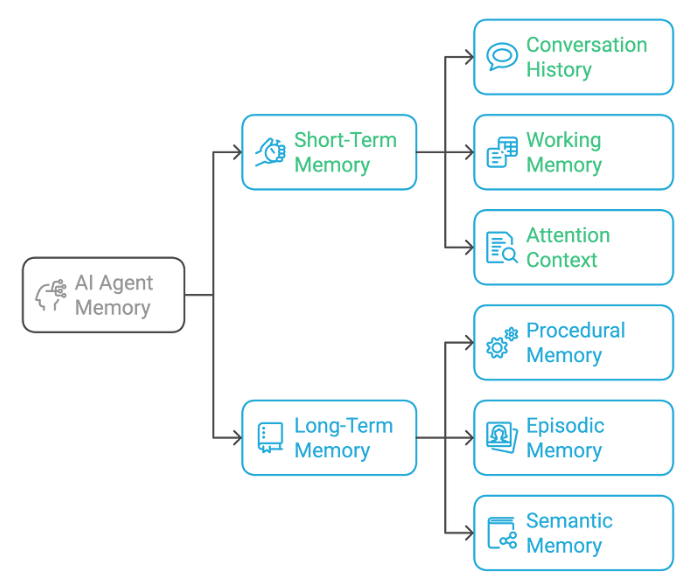

One limitation: the paper focuses on decision memory, not all memory. Agents still need some form of working memory for the current task, but the heavy lifting of context reconstruction can be offloaded to log replay. This is a pragmatic tradeoff that prioritizes scalability over perfect recall.

Frequently Asked Questions

What is stateless decision memory for AI agents?

Stateless decision memory replaces the persistent, per-agent state with immutable decision logs. Instead of storing the current state of an agent, every decision is recorded as an event. When an agent needs context, it replays the relevant events to reconstruct the state, making the agent itself stateless.

How does this help with scaling AI agents?

By making agents stateless, they can be scaled horizontally across containers without worrying about state affinity. Any instance can pick up any task by reading the shared event log, enabling load balancing, fault tolerance, and efficient resource utilization.

Is this approach suitable for regulated industries?

Yes. The immutable decision logs provide a complete audit trail of every decision the agent made, which is essential for compliance with regulations like GDPR, HIPAA, and financial reporting standards. The approach also supports fault tolerance and container-native deployment, common requirements in enterprise environments.

How does this compare to other agent memory approaches?

Most agent memory research focuses on improving retrieval or storage mechanisms (e.g., vector databases, attention mechanisms). This work is different: it addresses the operational challenge of scaling agents in production. It's less about making agents smarter and more about making them reliable at scale.