What Happened

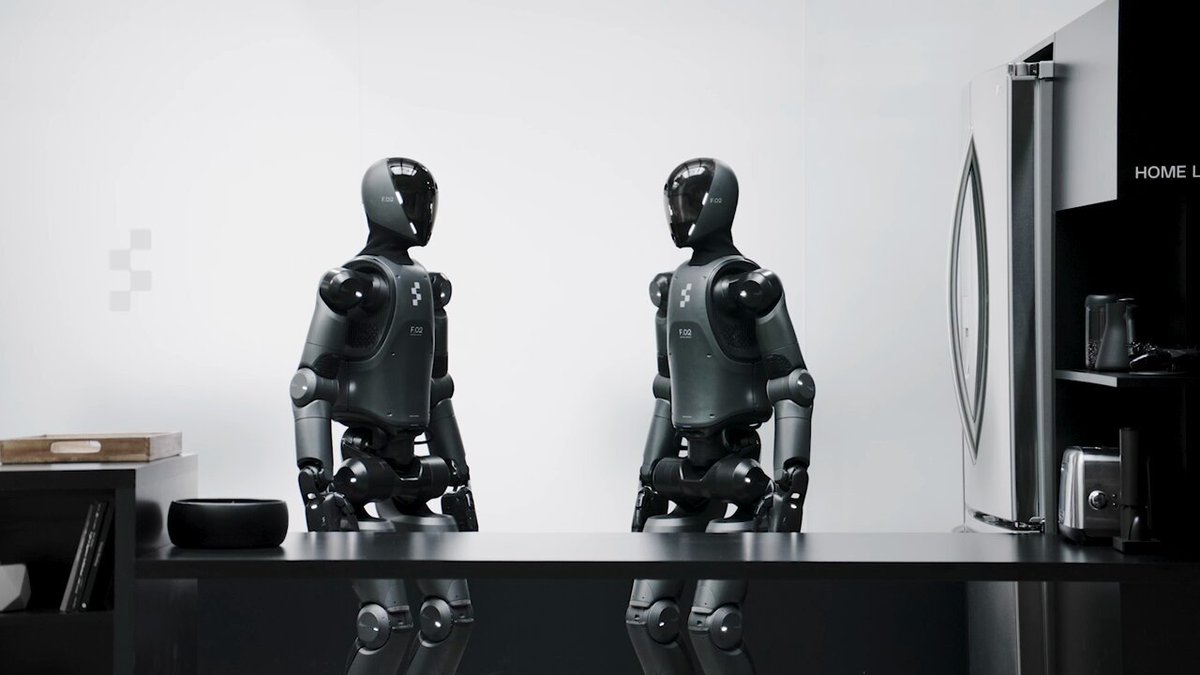

Kinetix AI has teased its new humanoid robot, KAI, via a post on X (formerly Twitter) by the account @kimmonismus. The announcement highlights several key specifications: 36 degrees of freedom (DOF), a hybrid dexterous hand design, and 18,000 sensors embedded across a soft, flexible body. The company claims this makes KAI the most human-like robotic system to date.

Context

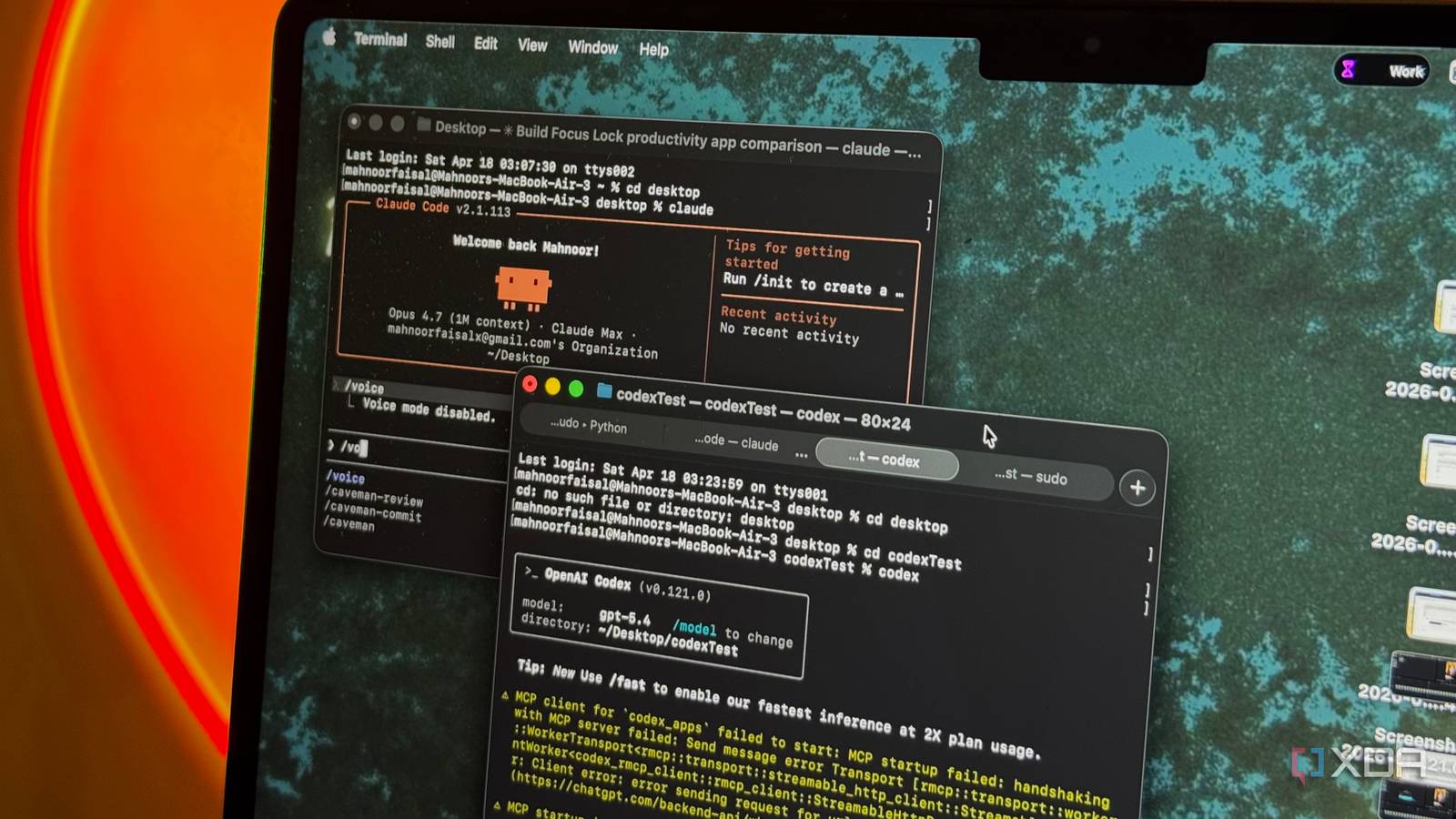

Humanoid robotics is a rapidly evolving field. While many robots focus on either industrial precision (like Boston Dynamics' Atlas) or social interaction (like Hanson Robotics' Sophia), KAI appears to target a middle ground, combining high-DOF articulation with soft robotics and dense sensor arrays. The 36 DOF figure is competitive rather than exceptional; for comparison, Tesla Optimus has around 40 DOF across the whole body, and Figure 02 has roughly 30-35. The hybrid dexterous hand suggests Kinetix is prioritizing manipulation tasks, a key bottleneck in real-world robot deployment.

The 18,000 sensors are notable for their density. Most humanoids embed fewer than 1,000 sensors and rely mainly on cameras and LIDAR for perception. Embedding sensors across a soft body could enable better force sensing, tactile feedback, and collision detection, all of which are critical for safe human-robot interaction.

What to Watch

The teaser is just that: a teaser. No video, benchmarks, or deployment timeline were provided. Key questions remain:

- Is KAI a research prototype or a product aimed at commercial deployment?

- What is the compute architecture? Onboard inference or cloud-dependent?

- How does the soft, flexible body handle durability and maintenance?

- What is the power consumption and thermal management strategy?

Without more details, it's hard to assess whether KAI is a genuine leap forward or a carefully curated spec sheet. The humanoid robotics space is full of impressive demos that fail to translate into reliable, affordable systems.

gentic.news Analysis

Kinetix AI's teaser comes at a time when humanoid robotics funding and interest are at an all-time high. We've covered Figure AI's $675 million raise, Tesla's Optimus updates, and the ongoing competition between Boston Dynamics and Agility Robotics. KAI's emphasis on sensor density and soft robotics could differentiate it from the rigid-bodied designs common in the space.

The 36 DOF figure is competitive, but DOF alone doesn't determine capability; control software, power density, and reliability matter more. The hybrid dexterous hand suggests Kinetix is targeting manipulation, which is one of the hardest unsolved problems in robotics. Most humanoids can walk; few can reliably pick up and manipulate arbitrary objects.

The 18,000 sensors figure is attention-grabbing but raises questions: What types of sensors? How are they read and processed? What latency is acceptable? A dense sensor array is useless without a real-time processing pipeline.
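To make the processing question concrete, here is a rough back-of-the-envelope sketch. Only the sensor count comes from the teaser. The sampling rates, the 2-byte sample size, and the single shared bus are hypothetical assumptions chosen for illustration, not anything Kinetix AI has disclosed.

```python
# Back-of-the-envelope estimate. Only SENSOR_COUNT comes from the teaser;
# sampling rates, sample size, and the single-bus model are assumptions.
SENSOR_COUNT = 18_000


def raw_data_rate_bytes_per_s(sensor_count, sample_rate_hz, bytes_per_sample):
    """Raw payload bandwidth if every sensor is sampled at the same rate."""
    return sensor_count * sample_rate_hz * bytes_per_sample


def per_reading_budget_us(sensor_count, sample_rate_hz):
    """Average microseconds available per reading if one bus served all sensors serially."""
    return 1e6 / (sensor_count * sample_rate_hz)


if __name__ == "__main__":
    for rate_hz in (100, 1_000):  # hypothetical sampling rates
        mb_per_s = raw_data_rate_bytes_per_s(SENSOR_COUNT, rate_hz, 2) / 1e6
        budget_us = per_reading_budget_us(SENSOR_COUNT, rate_hz)
        print(f"{rate_hz} Hz: {mb_per_s:.1f} MB/s raw, {budget_us:.3f} us per reading")
```

Under these assumptions the raw bandwidth is modest (a few tens of megabytes per second at 1 kHz), but the time available per reading on a single serial bus is well under a microsecond. If the real numbers are anywhere near this, the hard part is likely how readings are aggregated and routed rather than the sheer data volume.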

Kinetix AI appears to be a relatively new player in the humanoid space. The company's previous work is not widely known. This teaser may be an attempt to attract talent, funding, or strategic partnerships. The humanoid robotics market is expected to grow to $38 billion by 2035, according to some analysts, so timing is favorable.

We'll be watching for a live demo, benchmark results, or a technical paper. Until then, KAI remains an intriguing concept with impressive specs on paper.

Frequently Asked Questions

What is Kinetix AI's KAI robot?

KAI is a humanoid robot teased by Kinetix AI, featuring 36 degrees of freedom, a hybrid dexterous hand, and 18,000 sensors embedded across a soft, flexible body.

How does KAI compare to other humanoid robots?

KAI's 36 DOF is competitive with Tesla Optimus (~40 DOF) and Figure 02 (~30-35 DOF). Its 18,000 sensors are significantly more than most humanoids, which typically embed fewer than 1,000 sensors.

When will KAI be available?

No availability or timeline has been announced. The teaser is an early announcement without a delivery date.

What is a hybrid dexterous hand?

Kinetix AI has not published details of its design. In general, a hybrid dexterous hand combines elements of underactuated and fully actuated designs, aiming to balance dexterity, strength, and simplicity. The goal is typically precise manipulation of objects without sacrificing robustness.