What Happened

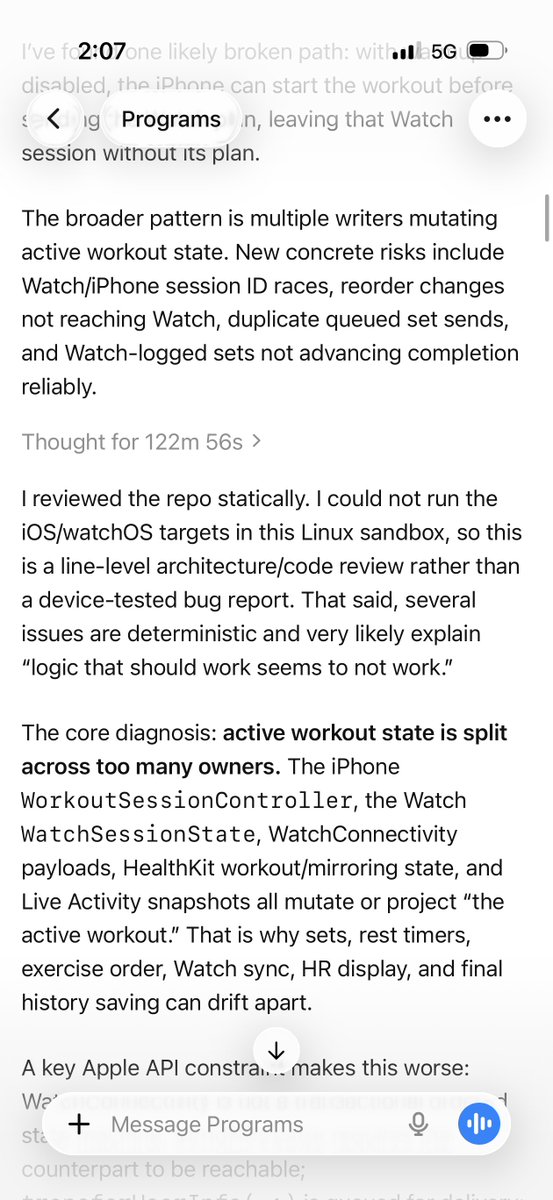

A developer on X (formerly Twitter) reported that GPT-5.5 Pro now "works consistently for 2 hours finding all of the issues for me" when fixing code. If accurate, that would be a significant improvement in sustained task performance for an AI coding assistant.

The user, @mweinbach, described this as their "favorite way of making the clankers fix things." "Clankers" is sci-fi-derived slang for robots that is now also applied to AI systems, so the phrase most likely refers to AI models rather than to a robotics or hardware context. The report suggests the model is being used for automated issue detection as part of a bug-fixing workflow.

Context

GPT-5.5 Pro was released by OpenAI in early 2026 as an incremental upgrade to GPT-5, focusing on reliability and consistency rather than raw benchmark gains. The model introduced improved context adherence for long conversations and better handling of multi-step tasks.

Previous versions of GPT-5 and GPT-5 Pro were known to degrade in performance after 30-45 minutes of continuous use, particularly on complex coding tasks where the model would lose track of earlier context or begin making inconsistent suggestions.

The 2-hour consistent performance claim, if reproducible at scale, represents a meaningful improvement for developers who rely on AI assistants for extended debugging or refactoring sessions.

What This Means in Practice

For developers using GPT-5.5 Pro as a coding assistant, this translates to longer uninterrupted work sessions without needing to reset context or restart conversations. The practical impact: less time managing the AI and more time actually fixing code.

gentic.news Analysis

This user report aligns with what we've been tracking in our coverage of the GPT-5 series. In our February 2026 analysis of GPT-5.5 Pro's release, we noted that OpenAI had specifically optimized for "conversation coherence over extended interactions" — this appears to be the first real-world evidence that those optimizations are paying off.

However, we should be cautious about extrapolating from a single user's experience. The claim lacks specifics about the codebase complexity, programming language, or type of issues being fixed. A 2-hour session on a simple Python script is very different from 2 hours on a distributed systems bug.

What's notable is the specific use case: finding issues in existing code, not generating new code. This suggests GPT-5.5 Pro may be more reliable for debugging tasks than for writing new code from scratch, a pattern we've seen in other models where verification tasks outperform generation tasks.

Frequently Asked Questions

How does GPT-5.5 Pro compare to GPT-5 for coding?

GPT-5.5 Pro focuses on consistency and reliability improvements rather than raw capability gains. Early reports suggest better context retention over long sessions and more consistent output quality, though benchmark scores are similar to GPT-5.

Is GPT-5.5 Pro available to all users?

GPT-5.5 Pro is available to ChatGPT Pro subscribers and through the OpenAI API. Pricing remains at $200/month for the Pro tier as of April 2026.

Can GPT-5.5 Pro replace human developers for bug fixing?

No. While the model shows improved sustained performance, it still requires human oversight. The user's phrasing "making the clankers fix things" suggests a tool-assisted workflow rather than fully autonomous debugging.

What types of bugs can GPT-5.5 Pro find?

Based on user reports, the model appears to handle syntax errors, logic bugs, and common pattern-matching issues well, though the report discussed here does not specify which kinds of issues were found. Edge cases requiring deep domain knowledge or understanding of proprietary systems remain challenging.