A key finding is emerging for practitioners using OpenAI's multimodal image generation: the underlying Large Language Model (LLM) is not just a text interface but a core determinant of final image quality. Unlike standalone image generators such as DALL-E 3 or Midjourney, GPT-ImageGen-2 produces output that varies dramatically depending on whether it is powered by GPT-5.4, GPT-5.4 Thinking, or GPT-5.4 Pro.

Key Takeaways

- The quality of images generated by GPT-ImageGen-2 is heavily dependent on the underlying LLM used for reasoning.

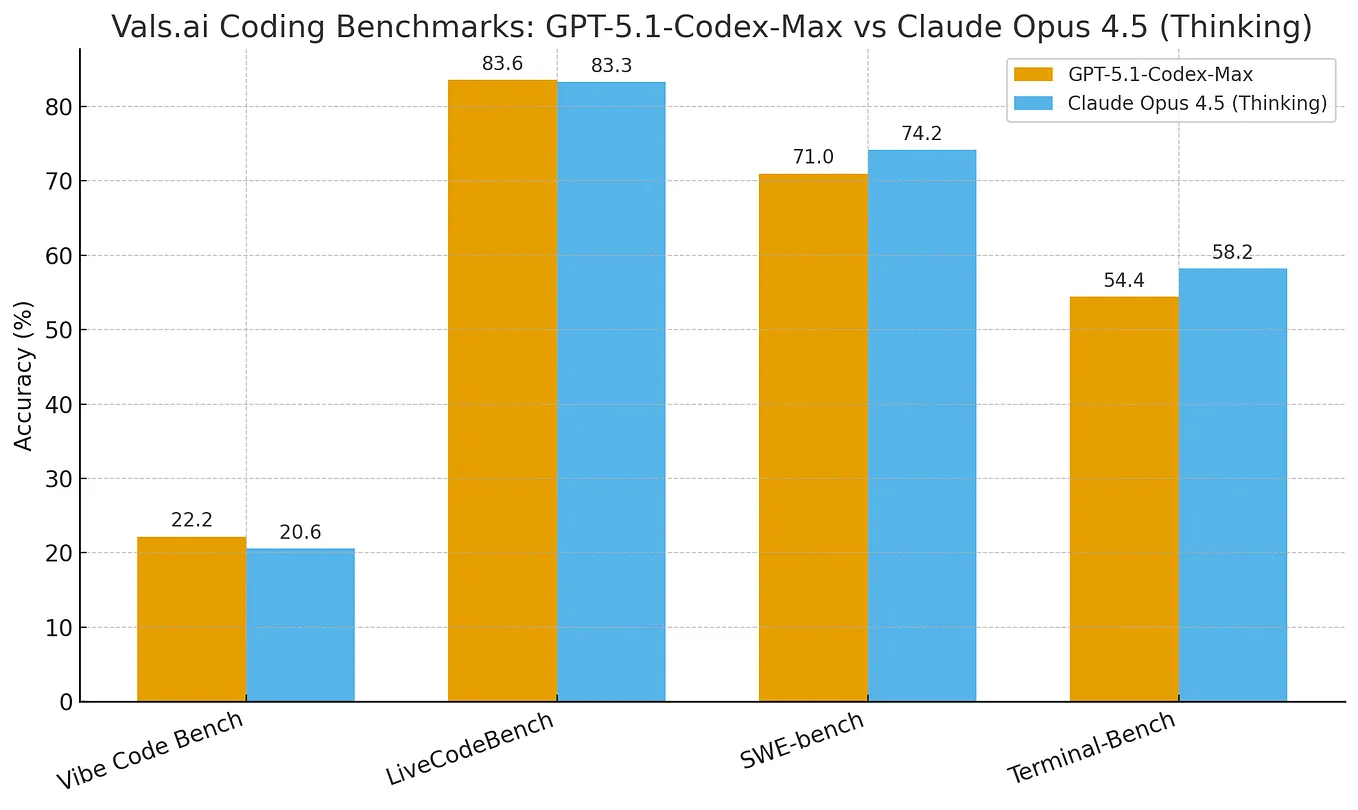

- GPT-5.4 'Thinking' and 'Pro' models produce superior outputs, especially for complex concepts, a non-intuitive finding not documented by OpenAI.

What Happened

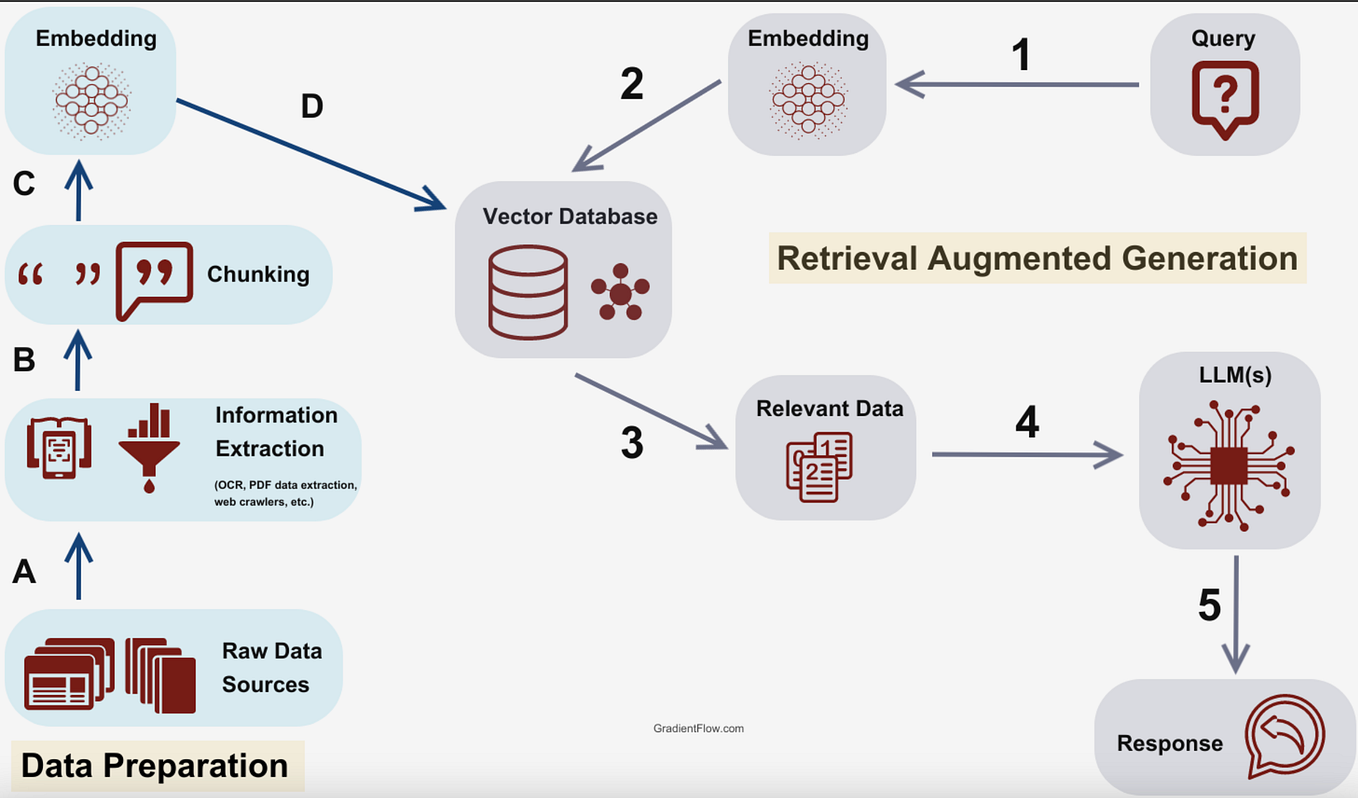

Observations shared by researcher Ethan Mollick highlight a significant, undocumented characteristic of OpenAI's integrated image generation system. When a user submits a prompt to create an image via ChatGPT or the API using GPT-ImageGen-2, the system uses an LLM to interpret, reason about, and refine the prompt before the image model generates the pixels. This intermediate reasoning step is where the model choice matters.

Mollick's testing indicates that GPT-5.4 Pro and GPT-5.4 Thinking produce "much better images, especially for complex things" compared to the standard GPT-5.4 model. This effect was not present in previous generations of image models, which operated more independently of the chat interface's reasoning capabilities.

The Technical Implication: Reasoning as a Bottleneck

This finding hints at how GPT-ImageGen-2 is architected. It appears to be not a simple text-to-image pipeline but a system in which the LLM acts as a prompt optimizer and scene decomposer. For a complex prompt like "a futuristic library where books are made of light and librarians are AI holograms," the LLM must:

- Parse the abstract concepts.

- Break them down into composable visual elements.

- Structure a detailed, technically sound description for the image model.

Advanced LLMs like GPT-5.4 Pro, with their enhanced reasoning and instruction-following capabilities, perform this decomposition more effectively. They likely generate more coherent, detailed, and logically consistent scene descriptions, which the image model then translates into higher-fidelity visuals.
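The decomposition step described above can be pictured with a small sketch. This is purely illustrative and is not OpenAI's implementation, which has not been published: the `SceneElement` and `SceneDescription` types, the `decompose_library_prompt` function, and the extra descriptive details are all hypothetical stand-ins for whatever structured description a reasoning LLM might hand to the image model.

```python
from dataclasses import dataclass, field


@dataclass
class SceneElement:
    """One composable visual element extracted from the user's prompt."""
    subject: str
    attributes: list[str] = field(default_factory=list)


@dataclass
class SceneDescription:
    """Hypothetical intermediate representation passed to the image model."""
    setting: str
    elements: list[SceneElement]
    style_notes: list[str] = field(default_factory=list)

    def to_image_prompt(self) -> str:
        # Flatten the structured scene into one detailed text prompt.
        parts = [f"Setting: {self.setting}."]
        for el in self.elements:
            attrs = ", ".join(el.attributes)
            parts.append(f"{el.subject} ({attrs})." if attrs else f"{el.subject}.")
        if self.style_notes:
            parts.append("Style: " + "; ".join(self.style_notes) + ".")
        return " ".join(parts)


def decompose_library_prompt() -> SceneDescription:
    # Hand-written stand-in for what a reasoning LLM might return for the
    # article's example prompt; the added details are invented for illustration.
    return SceneDescription(
        setting="a futuristic library",
        elements=[
            SceneElement("books", ["made of light", "glowing on shelves"]),
            SceneElement("librarians", ["AI holograms", "semi-transparent"]),
        ],
        style_notes=["soft volumetric lighting"],
    )
```

Under this framing, a stronger reasoning model would fill in more elements, more consistent attributes, and fewer contradictions before `to_image_prompt()` is ever called, which is one plausible reading of why the model tier affects the final image.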

Why This Isn't Intuitive

For users, the LLM selector in ChatGPT is typically associated with textual reasoning speed, cost, and conversation quality. The interface provides no indication that this choice also governs the quality of a separate modality's output (images). This creates a hidden performance tier:

- GPT-5.4 (Standard): Faster/cheaper chat, potentially lower-quality image generation for complex prompts.

- GPT-5.4 Thinking/Pro: More expensive chat, but unlocks significantly higher-quality image generation.

This bundling is a departure from the industry norm, where image generation quality is a function of the image model version (e.g., Stable Diffusion 3 vs. SDXL) and its dedicated parameters, not the attached chat model.

gentic.news Analysis

This observation fits a clear trend in OpenAI's product strategy: the deep integration and bundling of capabilities into a unified "GPT" stack. As we covered in our analysis of the GPT-5.4 launch, OpenAI has been moving away from selling discrete, best-in-class models (like a standalone DALL-E API) and toward selling access to a reasoning-centric platform where all outputs—text, code, image, audio—are mediated and enhanced by the core LLM's intelligence.

This creates both a powerful synergy and a form of vendor lock-in. The image model's performance is now intrinsically linked to the LLM's reasoning power, making it difficult to benchmark or use GPT-ImageGen-2 independently. It also suggests that future improvements to OpenAI's image generation may come as much from advances in the GPT-series LLMs (like the anticipated GPT-5.5) as from breakthroughs in the dedicated image model architecture.

The finding also highlights the ongoing black-box problem in commercial AI. Critical performance parameters that directly affect output quality and cost are not exposed in the UI or fully detailed in the documentation. Practitioners must rely on community benchmarking and shared findings, like this one, to optimize their workflows—a significant hurdle for professional, reproducible use.

Frequently Asked Questions

Does this mean GPT-5.4 Pro is always better for images?

Not necessarily. For simple, concrete prompts ("a red apple on a table"), the difference may be negligible. The performance gap becomes most apparent with prompts that require abstract reasoning, multi-object composition, or adherence to complex constraints. For professional or creative work of that kind, using GPT-5.4 Pro or Thinking is likely worth the cost.
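One way to act on this is to route prompts by rough complexity. The sketch below is a crude heuristic under stated assumptions, not a tested policy: the cue-word list and word threshold are invented for illustration, and `gpt-5.4` is an assumed identifier for the standard model (only `gpt-5.4-pro` is named in this article).

```python
import re

# Assumed model identifiers; verify against the actual model list before use.
STANDARD_MODEL = "gpt-5.4"
PRO_MODEL = "gpt-5.4-pro"

# Hypothetical cue words that often signal composition or explicit constraints.
CONSTRAINT_CUES = {
    "where", "while", "made", "without", "exactly", "each", "behind", "between",
}


def pick_model(prompt: str, word_threshold: int = 12) -> str:
    """Pick the higher tier for long prompts or prompts containing cue words."""
    words = re.findall(r"[a-z']+", prompt.lower())
    has_cue = any(word in CONSTRAINT_CUES for word in words)
    is_long = len(words) > word_threshold
    return PRO_MODEL if (has_cue or is_long) else STANDARD_MODEL
```

With these settings, "a red apple on a table" stays on the standard model, while the futuristic-library prompt is routed to the Pro tier because it contains "where" and "made". Any real threshold should come from benchmarking your own prompts.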

Is this the same for the GPT-5.4 API?

Yes, the same principle should apply to the API. The model parameter you select (e.g., gpt-5.4-pro) when calling the endpoint that generates images will determine the reasoning engine used, thereby affecting image quality. Developers should benchmark their specific use cases.
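A benchmark for this can stay independent of any particular SDK. The harness below is a minimal sketch: `generate` and `score` are caller-supplied functions (hypothetical here), so no real endpoint or client library is assumed, and the identifiers other than `gpt-5.4-pro` are guesses at the naming scheme.

```python
from collections import defaultdict
from statistics import mean
from typing import Callable, Sequence

# "gpt-5.4-pro" appears in the article; the other two names are assumptions.
MODELS = ("gpt-5.4", "gpt-5.4-thinking", "gpt-5.4-pro")


def benchmark(
    prompts: Sequence[str],
    generate: Callable[[str, str], bytes],
    score: Callable[[str, bytes], float],
    models: Sequence[str] = MODELS,
) -> dict[str, float]:
    """Return the mean score per model across all prompts.

    generate(model, prompt) should return image bytes;
    score(prompt, image) should return a number where higher is better.
    """
    if not prompts:
        raise ValueError("at least one prompt is required")
    scores: dict[str, list[float]] = defaultdict(list)
    for model in models:
        for prompt in prompts:
            image = generate(model, prompt)
            scores[model].append(score(prompt, image))
    return {model: mean(values) for model, values in scores.items()}
```

In practice `generate` would wrap whatever image call your account exposes, and `score` could be a human rating lookup or an automated prompt-adherence metric. Keeping the prompt set fixed across models is what makes the per-model means comparable.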

How does this compare to using Midjourney or Stable Diffusion?

The LLM integration is a key differentiator. Services like Midjourney have highly specialized image models but less sophisticated prompt reasoning. OpenAI's integrated approach uses a strong LLM to interpret the prompt more thoroughly, which can lead to better results for linguistically complex requests, regardless of how the underlying image models compare. The trade-off is less transparency and less control over image-generation-specific parameters.

Has OpenAI commented on this?

As of now, OpenAI has not officially documented this behavior or explained the technical integration between the LLM and GPT-ImageGen-2 in public-facing materials. The discovery comes from user experimentation and observation.