A recent observation by developer Simon Willison suggests that OpenAI's rumored next-generation image model, internally referred to as gpt-imagegen-2 or GPT-ImageGen-2, likely functions differently than its predecessors. Instead of users writing detailed text prompts, the system appears to work as a tool where the AI models themselves generate the prompts based on simpler user input.

This inference is drawn from the model's naming convention and its integration pattern within OpenAI's ecosystem. The designation as a "tool" aligns with how OpenAI's models, like GPT-4, can call external functions or APIs. In this hypothesized architecture, a user might provide a high-level instruction (e.g., "an illustration of a robot gardening"), and a language model would then generate a detailed, optimized prompt that is fed to the image generation engine.
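The hypothesized flow can be sketched as a two-stage pipeline. This is a minimal illustration built on stated assumptions. The functions `expand_prompt`, `generate_image`, and `handle_user_request` are hypothetical stand-ins, not OpenAI APIs. The language-model step is replaced by a fixed string expansion so that only the data flow is visible: the user supplies a short instruction, and the image engine only ever receives the model-written prompt.

```python
from dataclasses import dataclass


@dataclass
class ImageRequest:
    """What the hypothetical image tool receives: a model-written prompt."""
    prompt: str
    size: str = "1024x1024"  # illustrative default, not a documented value


def expand_prompt(instruction: str) -> str:
    """Hypothetical stand-in for the language-model step.

    A real system would call an LLM here. This stub just appends fixed
    style and composition details to show where the expansion happens.
    """
    details = [
        "detailed digital illustration",
        "soft natural lighting",
        "clear focal subject, balanced composition",
    ]
    return f"{instruction.strip().rstrip('.')}, " + ", ".join(details)


def generate_image(request: ImageRequest) -> dict:
    """Hypothetical stand-in for the image engine; returns metadata only."""
    return {"prompt_used": request.prompt, "size": request.size}


def handle_user_request(instruction: str) -> dict:
    # Step 1: the language model turns a short instruction into a detailed prompt.
    prompt = expand_prompt(instruction)
    # Step 2: the image engine sees only the model-written prompt, never the raw instruction.
    return generate_image(ImageRequest(prompt=prompt))


result = handle_user_request("an illustration of a robot gardening")
print(result["prompt_used"])
```

In this design the user never edits the string passed to the image engine. That is exactly the loss of visibility and control the article describes.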

Key Takeaways

- Evidence suggests OpenAI's upcoming image model, GPT-ImageGen-2, operates as a tool where AI models generate the prompts, not users.

- This marks a shift away from the transparent prompt display that DALL-E 3 initially offered in ChatGPT.

What Happened

Simon Willison noted on X that GPT-ImageGen-2 (also referenced as ChatGPT Images 2.0 or gpt-image-2) "works as a tool which the models generate prompts for." This technical speculation is based on the model's listing in system contexts or API documentation, where it is defined as a callable tool.

The key lament from Willison, echoed by many in the AI community, is the lack of transparency: "I wish we could see those prompts, like back in the DALL-E 3 days." When OpenAI launched DALL-E 3 integrated into ChatGPT, it initially showed users the verbose, model-generated prompt it used to create an image. This feature was later removed, moving to a more opaque "black box" interaction.
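Developers had a partial window into this step. As far as OpenAI's Images API documentation describes it, a call to dall-e-3 returns the rewritten prompt in a revised_prompt field on each generated image. The sketch below reads that field from a response body. The response is an illustrative sample with invented values, shaped after that documented format. `extract_revised_prompts` is a hypothetical helper, not part of any SDK.

```python
import json

# Illustrative response body (values invented), shaped like the Images API
# output for dall-e-3, where each returned image can carry the rewritten prompt.
SAMPLE_RESPONSE = json.loads("""
{
  "created": 1700000000,
  "data": [
    {
      "url": "https://example.com/image.png",
      "revised_prompt": "A detailed illustration of a friendly robot tending a vegetable garden at sunrise"
    }
  ]
}
""")


def extract_revised_prompts(response: dict) -> list[str]:
    """Collect the model-rewritten prompt for each returned image, if present."""
    return [
        item["revised_prompt"]
        for item in response.get("data", [])
        if "revised_prompt" in item
    ]


for prompt in extract_revised_prompts(SAMPLE_RESPONSE):
    print(prompt)
```

If the new model's prompts are generated inside an opaque tool call, there may be no equivalent field to read.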

Context: The Shift from Prompt Transparency

This development continues a clear trend away from user-facing prompt engineering for image generation.

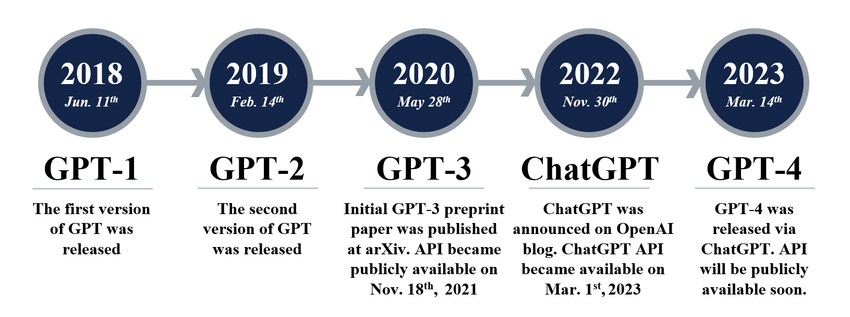

- DALL-E 2 (2022): Users wrote detailed prompts. Success depended heavily on prompt-crafting skill.

- DALL-E 3 (2023): Initially in ChatGPT, it accepted simple instructions, generated a long, detailed prompt internally, and showed that prompt to the user. This was an educational window into the model's "thinking."

- DALL-E 3 (Later 2023/2024): OpenAI removed the prompt display. Users gave an instruction and received only an image.

- GPT-ImageGen-2 (Rumored for 2025/2026): Evidence suggests the system formalizes this opacity. The prompt generation is not just hidden but is an explicit, separate tool-calling step performed by an AI model, potentially a language model like o1 or a successor.

This architectural shift fundamentally changes the user's role from prompt engineer to creative director. The AI handles the technical linguistic optimization, theoretically producing higher-quality images from vaguer instructions but reducing user control and learnability.

Technical Implications of the "Tool" Architecture

If the speculation is correct, GPT-ImageGen-2's design has significant technical implications:

- Multi-Model Orchestration: The image generation becomes a two-step process: 1) A language model interprets the request and crafts a robust prompt, 2) The image model executes it. This is a move towards agentic AI workflows where models use tools.

- Prompt Optimization at Scale: The AI-generated prompts are likely fine-tuned to bypass the image model's weaknesses and adhere to its safety filters more reliably than human-written prompts.

- API and Development Impact: For developers, accessing GPT-ImageGen-2 would likely involve a different API call pattern, possibly through the Assistants API or a similar tool-calling framework, rather than a direct images.generate endpoint.
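In a tool-calling setup, the image model is exposed to the language model as a declared function. The model does not render anything itself. It emits a tool call containing the function name and its arguments as a JSON string, and the host application executes it. The sketch below assumes the JSON-schema "function" format used by OpenAI's Chat Completions tool calling. The tool name generate_image, its parameters, and the handler `run_image_tool` are hypothetical; no published gpt-image-2 schema is implied. In ChatGPT the platform would run such a tool server-side. This client-side dispatcher only illustrates the pattern.

```python
import json

# Hypothetical tool definition in the function-calling JSON-schema format.
# Name, description, and parameters are illustrative assumptions.
IMAGE_TOOL = {
    "type": "function",
    "function": {
        "name": "generate_image",
        "description": "Render an image from a detailed, model-written prompt.",
        "parameters": {
            "type": "object",
            "properties": {
                "prompt": {"type": "string", "description": "Full image prompt."},
                "size": {"type": "string", "description": "Output dimensions."},
            },
            "required": ["prompt"],
        },
    },
}


def run_image_tool(prompt: str, size: str = "1024x1024") -> dict:
    """Hypothetical local handler standing in for the image model."""
    return {"status": "ok", "prompt": prompt, "size": size}


# Map declared tool names to the code that executes them.
HANDLERS = {"generate_image": run_image_tool}


def dispatch_tool_call(name: str, arguments_json: str) -> dict:
    """Route a model-issued tool call to its handler.

    The model supplies the arguments as a JSON string; the application
    parses them and calls the matching function.
    """
    if name not in HANDLERS:
        raise ValueError(f"unknown tool: {name}")
    args = json.loads(arguments_json)
    return HANDLERS[name](**args)


# Simulated tool call, shaped like what a language model might emit.
call = {
    "name": "generate_image",
    "arguments": json.dumps({"prompt": "A small robot watering tomato plants, watercolor style"}),
}
print(dispatch_tool_call(call["name"], call["arguments"]))
```

Note that the prompt string lives only in the tool call's arguments. Whether users ever see it depends entirely on whether the platform chooses to surface that intermediate.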

gentic.news Analysis

This architectural rumor, if confirmed, represents a strategic consolidation of OpenAI's multi-model ecosystem rather than a mere image model upgrade. It signals the full absorption of image generation into the LLM-as-orchestrator paradigm that the company has been advancing since the launch of GPT-4's function calling and the o1 reasoning models. The image model becomes a specialized tool in the LLM's kit, similar to code interpreters or web search.

This move aligns with a broader industry trend we've covered, such as in our analysis of Google's Gemini Advanced, which also employs complex, hidden prompt chaining to improve output quality. However, it contrasts sharply with competitor platforms like Midjourney, as well as open-source image tools, which still rely on and foster deep user expertise in prompt crafting. OpenAI is betting that consistency and ease of use for the average user outweigh the benefits of transparency and granular control demanded by power users.

The removal of prompt visibility, now potentially hard-coded into the system's architecture, carries business implications. It creates a stronger product lock-in; users cannot easily replicate the style or precise output by migrating to another platform because the critical "secret sauce"—the optimized prompt—is hidden. This follows OpenAI's pattern of moving from open research to closed, productized AI systems.

Frequently Asked Questions

What is GPT-ImageGen-2?

GPT-ImageGen-2 is the rumored internal name for OpenAI's next-generation image synthesis model, potentially the successor to DALL-E 3. Evidence suggests it is designed not as a standalone product but as a "tool" that is called by other AI models (like ChatGPT's language models) to generate images.

How is GPT-ImageGen-2 different from DALL-E 3?

The core difference appears to be architectural. DALL-E 3, especially in its initial release, showed users the detailed prompt it generated from their simple instruction. GPT-ImageGen-2 seems to formalize this prompt-generation step as a separate, opaque tool call made by an AI model, further distancing the user from the actual text prompt used to create the image.

Why would OpenAI hide the prompts?

There are likely three reasons: 1) Quality Control: AI-generated prompts may be more consistently effective and better at adhering to safety guidelines than user-written ones. 2) Simplicity: It makes the technology more accessible to users who don't want to learn prompt engineering. 3) Product Differentiation: It makes the output harder to replicate on competing platforms, as the optimized prompt is a hidden intermediate.

Can developers access GPT-ImageGen-2?

It is not publicly released. If and when it launches, based on its "tool" description, access would likely be through OpenAI's platform APIs that support tool calls (e.g., the Assistants API or Chat Completions API with function calling), rather than a simple, direct endpoint.