What Happened

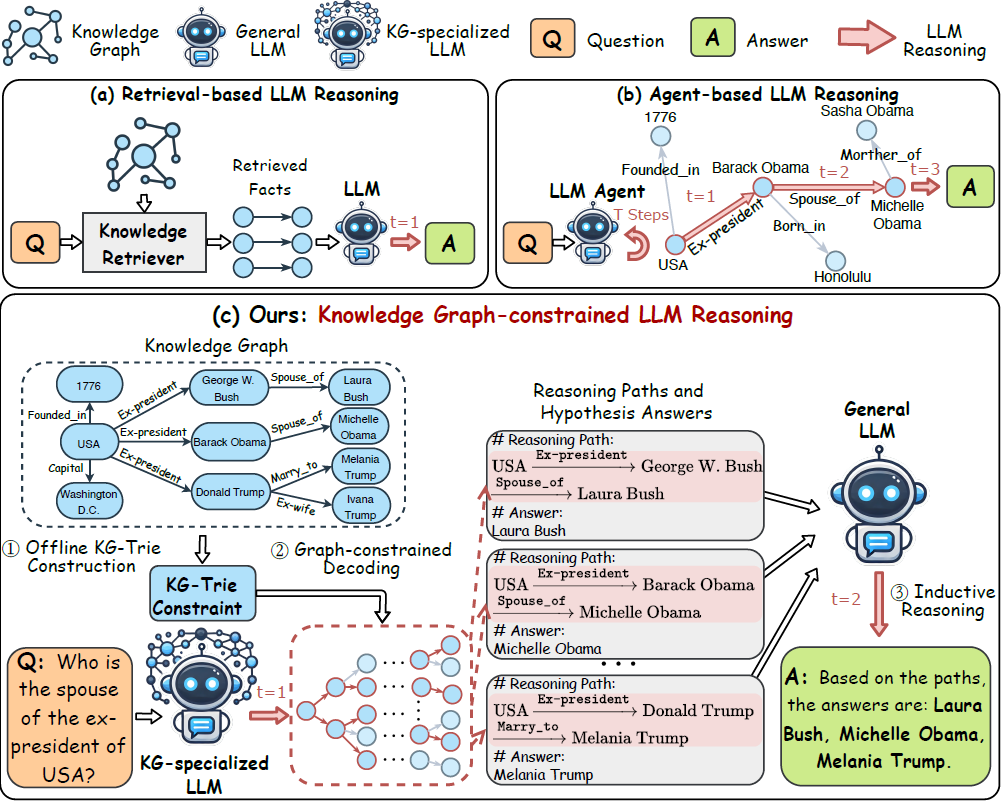

A new technical paper, "Working Notes on Late Interaction Dynamics: Analyzing Targeted Behaviors of Late Interaction Models," was posted to the arXiv preprint server on March 27, 2026. The research investigates two specific, understudied behaviors within a class of information retrieval models known as Late Interaction models. These models, which include architectures like ColBERT, are widely used in modern semantic search and Retrieval-Augmented Generation (RAG) systems. They represent each query and each document as a set of contextualized embeddings (one per token) and compute relevance only at scoring time, through a token-level interaction between the two sets.

The study focuses on two core questions:

- Length Bias in Multi-Vector Scoring: In theory, longer documents can accumulate higher similarity scores simply because they contain more tokens. Does this bias manifest in practice?

- Efficiency of the MaxSim Operator: The standard MaxSim operator pools token-level scores by taking the maximum similarity for each query token against any document token. Is significant similarity information being discarded by only looking at the top-1 match per query token?

The researchers analyzed these behaviors using state-of-the-art models on the NanoBEIR benchmark.

Technical Details

The Length Bias Problem

Late Interaction models score a query-document pair by summing the maximum cosine similarities between each query token's embedding and all document token embeddings. A long-standing theoretical concern is that longer documents have more "chances" to produce a high maximum similarity for each query token, potentially inflating their scores independent of true relevance.
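
The scoring rule described above can be sketched in a few lines. This is a minimal illustration rather than any particular library's implementation: the function names and the toy 2-dimensional embeddings are made up for the example, and real systems compute the same quantity with batched matrix operations.

```python
import math

def normalize(vec):
    """Scale a vector to unit length so dot products equal cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def maxsim_score(query_embs, doc_embs):
    """Sum, over query tokens, of the best cosine similarity to any document token."""
    q = [normalize(v) for v in query_embs]
    d = [normalize(v) for v in doc_embs]
    total = 0.0
    for q_vec in q:
        # Similarity of this query token to every document token.
        sims = [sum(a * b for a, b in zip(q_vec, d_vec)) for d_vec in d]
        # MaxSim keeps only the single best match.
        total += max(sims)
    return total

# Toy embeddings, invented for illustration only.
query = [[1.0, 0.0], [0.0, 1.0]]
doc = [[1.0, 0.0], [1.0, 1.0]]
score = maxsim_score(query, doc)
print(f"MaxSim score: {score:.4f}")
```

The first query token matches a document token exactly (similarity 1), while the second finds its best match at a 45-degree angle (similarity 1/√2), so the score is the sum of those two maxima.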

The study's key finding is that this theoretical bias holds in practice for causal Late Interaction models, in which each token's embedding is computed from the preceding tokens only. More surprisingly, the research found that bidirectional models, in which each token attends to the full context, can also exhibit this bias in extreme cases, challenging the assumption that they are immune.
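
The theoretical side of this concern follows directly from the scoring rule: a maximum taken over a larger set can never be smaller, so appending tokens to a document cannot lower its MaxSim score. The sketch below illustrates only that mathematical property, using random unit vectors in place of real token embeddings. The dimension, token counts, and seed are arbitrary assumptions, and the example says nothing about how strongly trained models exhibit the effect.

```python
import math
import random

def unit(vec):
    """Return the vector scaled to unit length."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def maxsim_score(query_embs, doc_embs):
    """Sum of per-query-token maximum similarities (inputs are unit vectors)."""
    return sum(
        max(sum(a * b for a, b in zip(q, d)) for d in doc_embs)
        for q in query_embs
    )

def random_tokens(rng, count, dim):
    """Random unit vectors standing in for token embeddings (not a real model)."""
    return [unit([rng.gauss(0.0, 1.0) for _ in range(dim)]) for _ in range(count)]

rng = random.Random(0)
dim = 16
query = random_tokens(rng, 4, dim)
long_doc = random_tokens(rng, 128, dim)
short_doc = long_doc[:8]  # the short document is a prefix of the long one

short_score = maxsim_score(query, short_doc)
long_score = maxsim_score(query, long_doc)
print(f"8 tokens: {short_score:.3f}   128 tokens: {long_score:.3f}")
```

Because the short document is a prefix of the long one, every match available to the short document is also available to the long one, and the extra 120 tokens can only add better matches.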

The MaxSim Operator's Efficiency

The MaxSim operator is computationally efficient but could be a bottleneck if it discards valuable signal. The paper investigated whether the similarity scores of the second-best, third-best, etc., document tokens for a given query token show any meaningful, exploitable trend.

The analysis concluded that no significant similarity trend exists beyond the top-1 matched document token. This supports MaxSim as an efficient choice: it captures the necessary signal without aggregating information from lower-ranked matches, which appear to be noise.
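
One way to picture this kind of analysis is to rank, for each query token, its similarities to all document tokens and then look at what sits below rank 1. The sketch below is an assumed reconstruction of the general idea, not the paper's procedure: the helper names and toy embeddings are hypothetical.

```python
import math

def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def ranked_similarities(query_embs, doc_embs, k):
    """For each query token, return its k highest cosine similarities, best first."""
    return [
        sorted((cosine(q, d) for d in doc_embs), reverse=True)[:k]
        for q in query_embs
    ]

def mean_by_rank(profiles):
    """Average the similarity found at each rank across all query tokens."""
    depth = min(len(p) for p in profiles)
    return [sum(p[r] for p in profiles) / len(profiles) for r in range(depth)]

# Toy embeddings, invented for illustration only.
query = [[1.0, 0.0], [0.0, 1.0]]
doc = [[1.0, 0.0], [1.0, 1.0], [0.0, -1.0]]

profiles = ranked_similarities(query, doc, k=3)
maxsim = sum(p[0] for p in profiles)  # MaxSim uses only the rank-1 column
rank_means = mean_by_rank(profiles)   # what the lower ranks look like on average
print("per-rank mean similarity:", [round(m, 4) for m in rank_means])
```

MaxSim reads only the first entry of each profile. Comparing the rank-2 and later entries between relevant and non-relevant documents over a benchmark would be one way to check whether they carry any usable signal.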

Retail & Luxury Implications

While this is a fundamental IR research paper, its findings have clear, practical implications for retail and luxury companies building next-generation search and discovery engines.

1. Search Relevance and Product Discovery: Luxury e-commerce platforms rely on semantic search that understands nuanced queries like "evening bag with gold chain" or "summer linen blazer." If the underlying retrieval model (often a Late Interaction model in high-performance systems) has a length bias, it could systematically favor longer, more verbose product descriptions over concise, accurate ones. This could distort search rankings, placing a verbose but less relevant item above a perfectly matching, succinctly described product. For luxury, where detail accuracy (materials, craftsmanship, provenance) is paramount, this bias could degrade the customer experience.

2. RAG Systems for Internal Knowledge and Customer Service: Many brands are implementing RAG systems for internal knowledge bases (e.g., product manuals, sourcing guidelines) and customer-facing chatbots. The retrieval component of these systems is critical. Understanding that the MaxSim operator is efficient confirms a standard design choice, allowing teams to focus optimization efforts elsewhere. However, the confirmed length bias is a risk factor. In a customer service RAG, a long, meandering internal policy document might be retrieved over a short, precise FAQ entry that directly answers the question, leading to poorer chatbot responses.

3. Benchmarking and Model Selection: The study uses the NanoBEIR benchmark, part of a trend toward more rigorous, focused evaluation in AI. For technical leaders, this underscores the importance of domain-specific benchmarking. A model's performance on a general academic benchmark may not reveal biases that become critical in a luxury context, where data (product descriptions, clienteling notes) has unique length and stylistic characteristics. Evaluation must test for these specific failure modes.
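
A simple domain-specific check for the length-bias failure mode is to correlate document length with retrieval score among documents judged not relevant, where length should ideally have no influence. The sketch below assumes such an audit log exists. The `(token count, score)` pairs are fabricated placeholders, and the choice of Pearson correlation on log-length is an illustrative assumption rather than a method taken from the paper.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical audit log: (document token count, retrieval score) for
# documents judged NOT relevant to their query. Values are made up.
non_relevant = [(40, 11.2), (85, 11.9), (150, 12.8), (320, 13.5), (610, 14.1)]

log_lengths = [math.log(tokens) for tokens, _ in non_relevant]
scores = [score for _, score in non_relevant]
length_score_corr = pearson(log_lengths, scores)
print(f"correlation between log-length and score: {length_score_corr:.3f}")
```

A correlation near zero on real audit data would suggest length has little influence on scores for irrelevant documents. A strongly positive value, as in this fabricated sample, would be a reason to investigate further.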

Implementation Consideration: Mitigating length bias typically involves score normalization techniques (e.g., dividing by document length or applying a learned penalty). Teams deploying these models must audit their retrieval outputs for this bias and implement appropriate normalization within their search pipelines to ensure fair ranking.
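
The two normalization ideas mentioned above can be sketched as follows. Everything here is hypothetical: the function names, the candidate documents, their scores, and the fixed `alpha`, which stands in for a penalty weight that would in practice be learned or tuned on held-out relevance judgments.

```python
import math

def divide_by_length(raw_score, doc_len):
    """Plain length normalization: score per document token."""
    return raw_score / doc_len

def log_length_penalty(raw_score, doc_len, alpha=0.5):
    """Subtract a penalty that grows with log document length.

    alpha is a placeholder for a learned or tuned weight (assumption).
    """
    return raw_score - alpha * math.log(doc_len)

# Hypothetical candidates: a long policy document and a short FAQ entry.
candidates = [
    {"id": "long_policy", "raw": 14.0, "tokens": 600},
    {"id": "short_faq", "raw": 13.6, "tokens": 40},
]

by_raw = sorted(candidates, key=lambda c: c["raw"], reverse=True)
by_penalized = sorted(
    candidates,
    key=lambda c: log_length_penalty(c["raw"], c["tokens"]),
    reverse=True,
)
print("raw order:      ", [c["id"] for c in by_raw])
print("penalized order:", [c["id"] for c in by_penalized])
```

Dividing by raw length is the more aggressive option and can over-penalize long documents that are truly relevant. A logarithmic penalty is gentler, which is why it is used for the re-ranking here. Either choice should be validated against relevance judgments before deployment.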