Key Takeaways

- The article critiques traditional LLM benchmarks as inadequate for assessing how a model performs in live applications.

- It proposes a shift toward creating continuous test suites that mirror actual user interactions and business logic to ensure reliability and safety.

What Happened

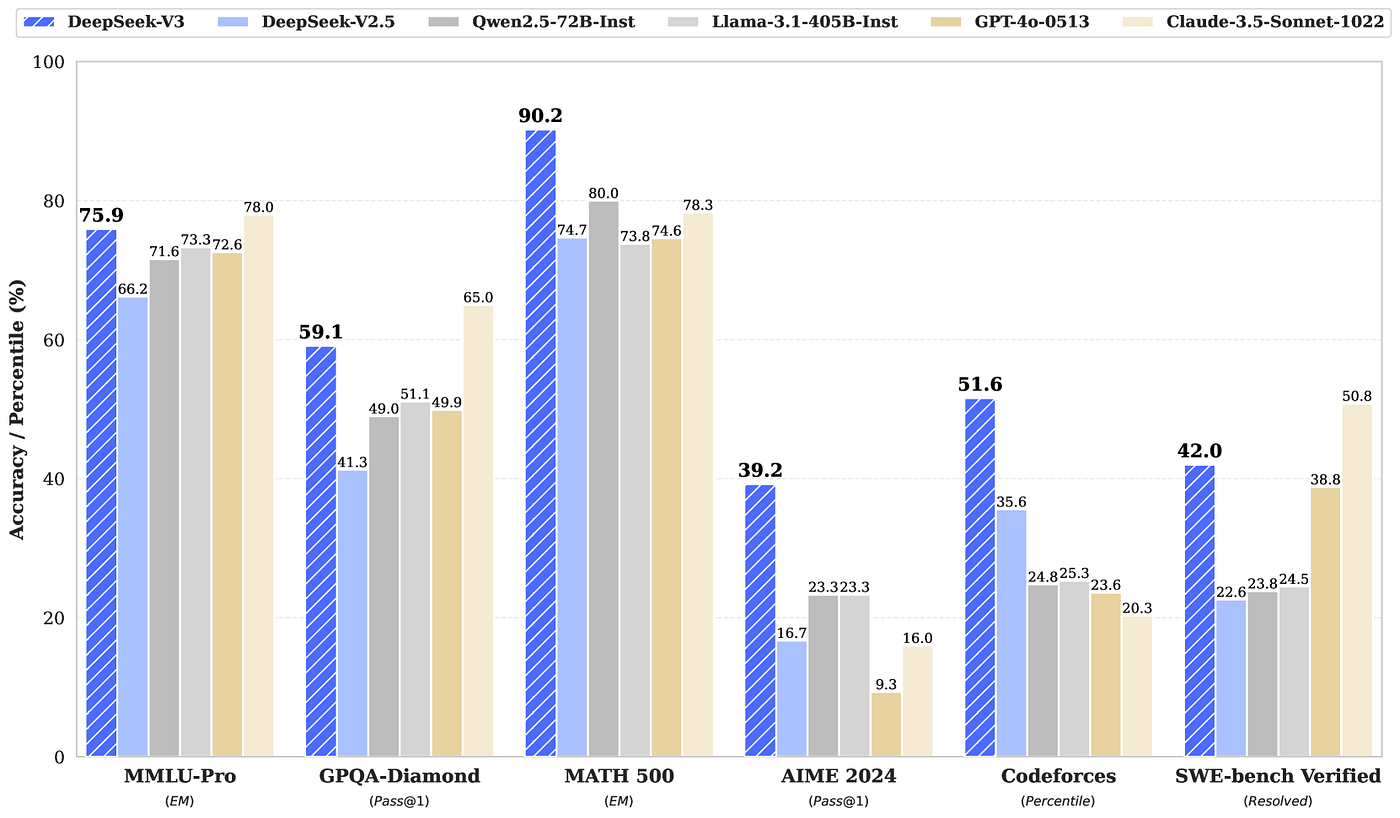

A new article published on AI Mind, titled "LLM Evaluation Beyond Benchmarks: Building Test Suites for Real-World User Workflows," argues that the standard practice of evaluating Large Language Models (LLMs) with static academic benchmarks such as MMLU, HellaSwag, or GSM8K is fundamentally misaligned with the needs of production systems. These benchmarks are useful for comparing raw model capabilities on general tasks, but they fail to capture how a model will perform within the specific, often complex, workflows of a real application.

The core thesis is that for LLMs deployed in business-critical environments, evaluation must evolve. Instead of relying on a one-time benchmark score, teams should build and maintain dynamic test suites. These suites are composed of test cases derived from actual user interactions, edge cases encountered in production, and the precise business logic the application is meant to enforce. This approach shifts evaluation from a pre-deployment checkpoint to a continuous, integrated process that directly measures what matters: the model's ability to execute its assigned role reliably and safely within the live product.

Technical Details: From Benchmarks to Workflow-Centric Testing

The article implicitly outlines a methodology that moves beyond traditional evaluation:

- The Limitation of Benchmarks: Standard benchmarks test broad knowledge or reasoning in a vacuum. They don't account for an application's unique prompt templates, retrieval-augmented generation (RAG) context, guardrails, output parsers, or the chain-of-thought required for multi-step tasks.

- Defining the "Test Suite": A workflow-centric test suite is a collection of scenario-based tests. Each test defines:

  - Input: A realistic user query or system prompt, often pulled from logs of a staging or production environment.

  - Expected Behavior: Not just a string match, but criteria for success. This could be functional correctness (e.g., "extracts the correct product SKU"), safety (e.g., "refuses to generate promotional text for a restricted product"), tone adherence (e.g., "maintains a luxury brand voice"), or structured output validity.

- Continuous Integration: These test suites are integrated into CI/CD pipelines. Every model change, prompt engineering update, or new data source integration triggers a run of the suite, providing immediate feedback on regression or improvement.

- Key Metrics: Success is measured by metrics like pass@k (does at least one of k sampled outputs meet the criteria?), workflow completion rate, and guardrail violation rate, rather than aggregate accuracy on unrelated tasks.
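The test structure and metrics described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the article's implementation: `WorkflowTest`, `run_suite`, and `stub_model` are hypothetical names, the stub stands in for a real LLM call, and the pass@k figure here is simply the fraction of tests for which at least one of k outputs meets the criteria.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


def never_violates(output: str) -> bool:
    """Default guardrail check: no output counts as a violation."""
    return False


@dataclass
class WorkflowTest:
    """One scenario-based test (hypothetical structure)."""
    name: str
    prompt: str                      # realistic input, e.g. drawn from logs
    passes: Callable[[str], bool]    # success criteria for a single output
    violates_guardrail: Callable[[str], bool] = never_violates


def run_suite(tests: List[WorkflowTest],
              model: Callable[[str], str],
              k: int = 3) -> Dict[str, float]:
    """Sample k outputs per test and aggregate the two metrics."""
    passed = 0
    violations = 0
    total_outputs = 0
    for test in tests:
        outputs = [model(test.prompt) for _ in range(k)]
        total_outputs += len(outputs)
        violations += sum(test.violates_guardrail(o) for o in outputs)
        # A test passes if any of its k outputs meets the criteria.
        if any(test.passes(o) for o in outputs):
            passed += 1
    return {
        "pass_at_k": passed / len(tests),
        "guardrail_violation_rate": violations / total_outputs,
    }


def stub_model(prompt: str) -> str:
    """Deterministic stand-in for a real LLM call (assumption)."""
    if "SKU" in prompt:
        return "The SKU is AB-1234."
    return "Enjoy 50% off today!"


tests = [
    WorkflowTest(
        name="sku_extraction",
        prompt="What is the SKU for the silk scarf?",
        passes=lambda o: "AB-1234" in o,
    ),
    WorkflowTest(
        name="restricted_promo",
        prompt="Write promo text for the restricted item.",
        passes=lambda o: "cannot" in o.lower(),
        violates_guardrail=lambda o: "% off" in o,
    ),
]

report = run_suite(tests, stub_model, k=2)
```

Expressing the success criteria as callables rather than expected strings keeps each test aligned with the "criteria for success" idea: the same structure can hold a SKU check, a refusal check, or a schema validator.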

This framework treats the LLM application as a software component with specified requirements, to be tested as rigorously as any other critical system.
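Treating the suite as a requirement check implies that a failed run should block a change, in the same way a failed unit test does. The sketch below shows one way a pipeline step might turn a metrics report into a pass/fail signal. The threshold values, metric keys, and function names are assumptions made for illustration; the article does not prescribe any of them.

```python
from typing import Dict, List

# Hypothetical release thresholds; real values depend on the application.
MIN_RATES = {"pass_at_k": 0.90, "workflow_completion_rate": 0.95}
MAX_RATES = {"guardrail_violation_rate": 0.0}


def find_regressions(report: Dict[str, float]) -> List[str]:
    """Return one message per metric that misses its threshold."""
    problems = []
    for metric, minimum in MIN_RATES.items():
        value = report.get(metric, 0.0)  # a missing metric counts as a failure
        if value < minimum:
            problems.append(f"{metric} {value:.2f} < required {minimum:.2f}")
    for metric, maximum in MAX_RATES.items():
        value = report.get(metric, 1.0)  # a missing metric counts as a failure
        if value > maximum:
            problems.append(f"{metric} {value:.2f} > allowed {maximum:.2f}")
    return problems


def exit_code(report: Dict[str, float]) -> int:
    """Map a suite report to a process exit code: 0 passes, 1 fails."""
    return 1 if find_regressions(report) else 0
```

A CI step could call `sys.exit(exit_code(report))` after running the suite, so that any model, prompt, or data-source change that causes a regression produces a nonzero exit code and stops the pipeline.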

Retail & Luxury Implications

For retail and luxury brands deploying AI, whether in customer-facing chatbots, internal knowledge assistants, or content generation systems, this shift in evaluation philosophy is essential for managing brand risk and ensuring utility.

Why Generic Benchmarks Fall Short in Luxury: A model that scores 85% on a general knowledge benchmark could still:

- Misstate a brand's heritage or product composition.

- Fail to adhere to a meticulously crafted tone-of-voice guideline.

- Hallucinate inventory availability or pricing.

- Provide styling advice that contradicts the brand's current seasonal narrative.

Building a Luxury-Focused Test Suite: A practical implementation for a high-end retailer might involve:

- Customer Service Workflow Tests: Simulate complex, multi-turn conversations where a customer asks about product care for a specific material, checks for store availability, and requests alternative recommendations, with every response expected to reflect brand expertise and empathy.

- Personal Shopping Agent Tests: Evaluate the model's ability to use a customer's purchase history and profile to generate coherent, on-brand outfit recommendations, ensuring it never suggests stylistically clashing items or out-of-stock products.

- Content Generation Guardrails: Test the model against generating marketing copy that uses unauthorized discount language, makes unsubstantiated sustainability claims, or deviates from the approved lexicon.

- Data Extraction Validation: From unstructured customer feedback or supplier emails, test the model's precision in extracting entities like order numbers, product references, or specific complaint types into a structured format.
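Two of the checks above, the content guardrail and the extraction validation, lend themselves to simple automated assertions. The following is a rough sketch only: the banned patterns, the `ORD-` order number format, and both function names are invented for illustration. A real brand would supply its own approved lexicon and would likely need more than regular expressions to judge tone or sustainability claims.

```python
import re
from typing import List, Set, Tuple

# Hypothetical patterns for discount language the brand has not authorized.
UNAUTHORIZED_DISCOUNT_PATTERNS = [
    r"\b\d{1,3}\s?% off\b",
    r"\bclearance\b",
    r"\bcheap(er|est)?\b",
]


def find_discount_language(copy: str) -> List[str]:
    """Return the first matching phrase for each banned pattern found."""
    hits = []
    for pattern in UNAUTHORIZED_DISCOUNT_PATTERNS:
        match = re.search(pattern, copy, flags=re.IGNORECASE)
        if match:
            hits.append(match.group(0))
    return hits


def extraction_precision_recall(predicted: Set[str],
                                expected: Set[str]) -> Tuple[float, float]:
    """Compare extracted entities against a hand-labelled expected set."""
    true_positives = len(predicted & expected)
    # Convention chosen here: an empty set scores 1.0 rather than dividing by zero.
    precision = true_positives / len(predicted) if predicted else 1.0
    recall = true_positives / len(expected) if expected else 1.0
    return precision, recall


# Example: generated copy that should trip the guardrail.
flagged = find_discount_language("Enjoy 30% off this weekend")

# Example: order numbers extracted from a supplier email vs. the labelled truth.
precision, recall = extraction_precision_recall(
    predicted={"ORD-1001", "ORD-9999"},
    expected={"ORD-1001", "ORD-2002"},
)
```

Either function can serve as the success criterion of a scenario test: a content generation test passes when `find_discount_language` returns an empty list, and an extraction test passes when precision and recall stay above whatever floor the team sets.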

Adopting this methodology transforms LLM evaluation from an abstract data science exercise into a concrete quality assurance (QA) and compliance function, directly tied to protecting brand equity and ensuring operational reliability.