A developer's attempt to purchase a 128GB Mac Studio to run a 70B parameter model locally has revealed a significant, global hardware shortage, signaling a potential end to the brief era of affordable, high-performance local AI development on consumer-grade Apple hardware.

Key Takeaways

- Developers report a global shortage of high-memory Apple Silicon Macs, with 128GB Mac Studios unavailable worldwide.

- This pushes practitioners toward renting cloud H100 GPUs at ~$3/hr, marking a shift from the recent local AI trend.

What Happened

Developer George Pu reported that while Apple's online store listed the 128GB Mac Studio as "Available," every configuration with more than 24GB of unified memory was, in fact, unavailable for purchase. The shortage appears to be global, affecting multiple countries. According to the report, Apple's memory suppliers are prioritizing datacenter orders, fueled by an estimated $650 billion in commitments this year, which leaves consumer Mac products last in line.

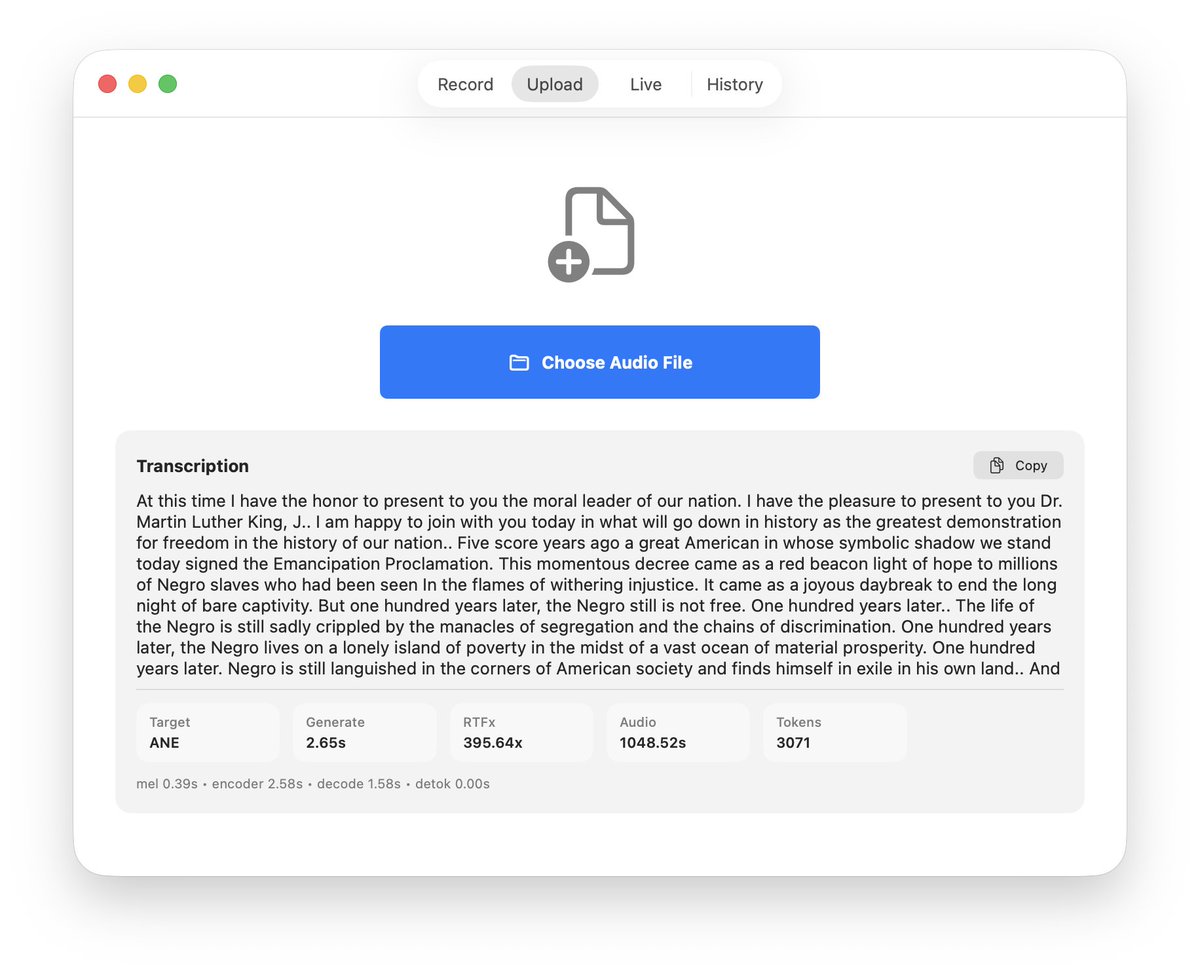

Faced with this supply constraint, Pu's solution was to rent an NVIDIA H100 GPU in Toronto for $2.99 per hour. This pivot underscores a practical reality: when ownership of the necessary hardware is impossible, control over the model, its jurisdiction, and the software stack shifts back to the cloud.

The End of the "Mac Mini AI Era"

The incident highlights what Pu calls the conclusion of the "'just buy a Mac Mini' AI era," which lasted roughly a year. This period was defined by the accessibility of Apple's M-series silicon, particularly the M2 Ultra and M3 Max chips, which offered enough memory bandwidth and unified memory to run multi-billion-parameter models locally on relatively affordable hardware. The promise was one of sovereignty: developers could own and control their AI inference and fine-tuning workflows entirely on their desks, avoiding cloud costs, egress fees, and vendor lock-in.

That promise is now colliding with supply chain economics. The explosive demand for high-bandwidth memory (HBM) and advanced packaging from hyperscalers and AI cloud providers is creating a scarcity that trickles down, making high-specification consumer workstations a low-priority item for component manufacturers.

The Cloud Rental Alternative

With local ownership blocked, the immediate alternative is the spot market for cloud GPUs. Services like vast.ai, RunPod, and Lambda GPU Cloud offer hourly rentals of high-end accelerators like the H100, A100, and even the newer H200. At approximately $3 per hour for an H100, the cost calculus changes from a one-time capital expenditure (a ~$4,000+ Mac Studio) to a variable operational expense.

For developers, this means:

- Loss of Local Control: Models and data must leave the local environment.

- Recurring Costs: Intermittent development work becomes a persistent hourly cost.

- Jurisdictional Complexity: Data must reside in specific cloud regions, potentially complicating compliance.
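
The shift from capital expense to hourly expense can be made concrete with a rough break-even estimate. The sketch below is a minimal illustration using the article's approximate figures (a ~$4,000 Mac Studio and a $2.99/hr H100). The function names and the hours-per-week input are assumptions for illustration, and the estimate ignores electricity, resale value, storage and network fees, and the performance gap between the two machines.

```python
def break_even_hours(purchase_price_usd: float, hourly_rate_usd: float) -> float:
    """Hours of rental at which cumulative cloud spend equals the purchase price."""
    if hourly_rate_usd <= 0:
        raise ValueError("hourly_rate_usd must be positive")
    return purchase_price_usd / hourly_rate_usd


def months_to_break_even(
    purchase_price_usd: float, hourly_rate_usd: float, hours_per_week: float
) -> float:
    """Calendar months until break-even, given an assumed weekly usage."""
    if hours_per_week <= 0:
        raise ValueError("hours_per_week must be positive")
    weeks = break_even_hours(purchase_price_usd, hourly_rate_usd) / hours_per_week
    return weeks * 12 / 52  # 52 weeks per year, 12 months per year


if __name__ == "__main__":
    # Figures from the article; the 10 hours/week usage is a hypothetical input.
    hours = break_even_hours(4000, 2.99)
    months = months_to_break_even(4000, 2.99, hours_per_week=10)
    print(f"break-even after {hours:.0f} rental hours (~{months:.0f} months at 10 h/week)")
```

With these inputs the break-even lands at roughly 1,338 rental hours, or about 31 months at 10 hours per week. Light, intermittent use favors renting, while sustained daily use favors ownership, provided the hardware can actually be bought.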

What to Watch: WWDC and the M5 Ultra

The next potential inflection point is Apple's Worldwide Developers Conference (WWDC) in June 2026, where the company is expected to announce the M5 family of chips. Developers are specifically watching for the M5 Ultra, which would likely succeed the M3 Ultra in the Mac Studio. The key questions will be:

- Availability: Will Apple secure enough memory supply to meet demand for high-memory configurations at launch?

- Performance: Will the memory bandwidth and Neural Engine improvements be sufficient to justify waiting and to compete with the raw throughput of cloud-based H100s?

If the shortage persists or the M5 Ultra is delayed, the migration of AI development workloads back to the cloud will accelerate.

gentic.news Analysis

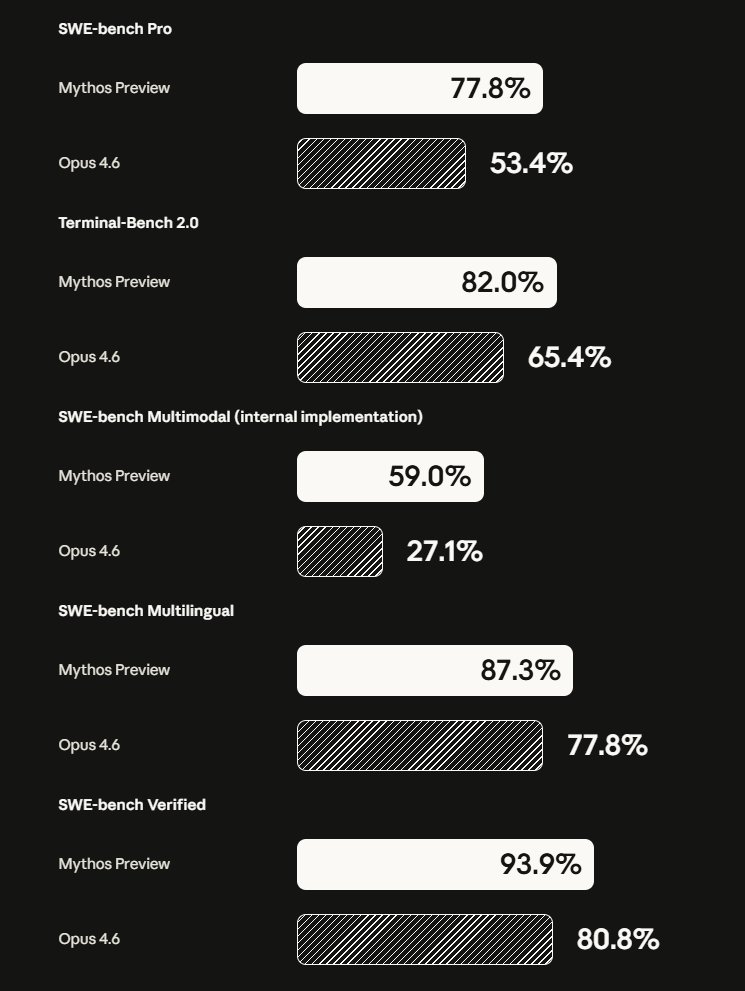

This shortage is not an isolated incident but a direct symptom of the macro-trend we identified in our 2025 analysis, "The $650B Chip Crunch: How AI Capex Is Starving Other Tech Sectors." The capital expenditure war among hyperscalers (AWS, Google Cloud, Microsoft Azure) and GPU cloud providers (CoreWeave, Lambda) has created a voracious demand for the most advanced semiconductor components, particularly HBM3e and TSMC's CoWoS packaging capacity. Consumer electronics giants like Apple, which operate on thinner margins and different procurement cycles, are losing bidding wars for these same components. This aligns with recent reports from analysts like Dylan Patel of SemiAnalysis, who have detailed how datacenter orders are consuming over 90% of TSMC's advanced packaging output.

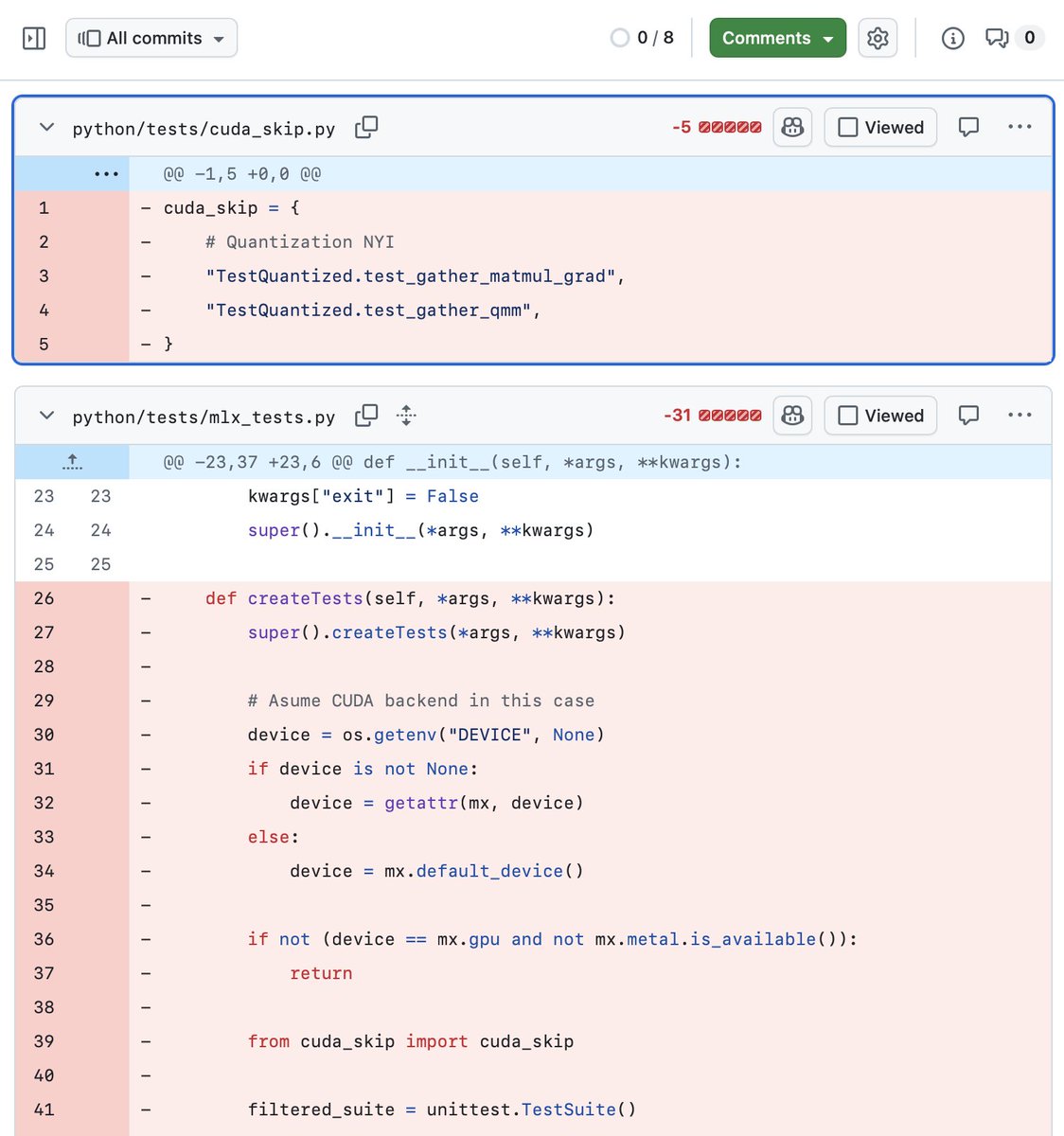

The practical implication for AI engineers is a re-evaluation of the "local-first" strategy that emerged in 2024-2025. Frameworks like llama.cpp, MLX, and Ollama made local execution viable, but they depend on accessible hardware. This shortage effectively re-erects a hardware barrier to entry, potentially centralizing advanced model experimentation back with well-funded labs and corporations that have secured GPU clusters. The democratizing wave of local AI may be hitting a supply-chain sandbar. For practitioners, the lesson is to build workflows that are hybrid by design, capable of running locally when hardware is available but failing over seamlessly to cloud endpoints when it is not.
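
One way to read "hybrid by design" is an ordered list of inference backends, tried in sequence until one succeeds. The sketch below is a minimal illustration of that pattern, not a reference to any particular framework's API: `generate_with_failover`, `local_unavailable`, and `cloud_echo` are hypothetical names, and the two backends are stand-in functions where a real setup would wrap a local runtime and a rented cloud endpoint.

```python
from typing import Callable, Sequence

# A backend is any callable that takes a prompt and returns generated text.
Backend = Callable[[str], str]


class AllBackendsFailed(RuntimeError):
    """Raised when every configured backend raised an exception."""


def generate_with_failover(
    prompt: str, backends: Sequence[tuple[str, Backend]]
) -> tuple[str, str]:
    """Try each (name, backend) pair in order; return (name, text) from the first success."""
    errors = []
    for name, backend in backends:
        try:
            return name, backend(prompt)
        except Exception as exc:  # a real system would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise AllBackendsFailed("; ".join(errors))


# Hypothetical stand-ins: the local backend simulates missing hardware,
# the cloud backend simulates a reachable remote endpoint.
def local_unavailable(prompt: str) -> str:
    raise ConnectionError("no local model server running")


def cloud_echo(prompt: str) -> str:
    return f"[cloud] {prompt}"


if __name__ == "__main__":
    used, text = generate_with_failover(
        "hello", [("local", local_unavailable), ("cloud", cloud_echo)]
    )
    print(used, text)
```

Keeping the backend list as plain data means the order can be changed per environment, for example local-first on a workstation and cloud-only on a laptop, without touching the calling code.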

Frequently Asked Questions

Why are high-memory Mac Studios unavailable?

Apple's memory suppliers are reportedly prioritizing orders for datacenter-grade components, driven by massive capital expenditure from AI cloud providers. Consumer products like the Mac Studio are receiving lower allocation, creating a global shortage of configurations with 64GB or 128GB of unified memory.

What are the best alternatives for running large models locally?

Without a 128GB Mac Studio, alternatives include building a PC with an NVIDIA RTX 4090 (24GB) and running heavily quantized 70B models with part of the weights offloaded to system RAM, or using multiple older GPUs in a home server. However, neither matches the simplicity or memory capacity of the high-end Mac Studio. The other path is to use cloud GPU rentals.
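
A back-of-the-envelope estimate shows why memory capacity dominates this choice. The sketch below computes the space needed for model weights alone as parameters times bits per weight. The function names and the 20% headroom for KV cache and runtime overhead are assumptions for illustration; real usage depends on context length, runtime, and quantization format.

```python
def weight_memory_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes), weights only."""
    # (n_params_billion * 1e9 params) * (bits / 8 bytes) / 1e9 bytes per GB
    return n_params_billion * bits_per_weight / 8


def fits_in_memory(
    n_params_billion: float,
    bits_per_weight: float,
    capacity_gb: float,
    headroom_fraction: float = 0.2,  # assumed allowance for KV cache and overhead
) -> bool:
    """True if weights plus the assumed headroom fit in the given capacity."""
    needed = weight_memory_gb(n_params_billion, bits_per_weight) * (1 + headroom_fraction)
    return needed <= capacity_gb


if __name__ == "__main__":
    for bits in (16, 8, 4):
        size = weight_memory_gb(70, bits)
        print(
            f"70B at {bits}-bit: {size:.0f} GB weights, "
            f"fits 24 GB: {fits_in_memory(70, bits, 24)}, "
            f"fits 128 GB: {fits_in_memory(70, bits, 128)}"
        )
```

Under these assumptions a 70B model needs about 140 GB at 16-bit, 70 GB at 8-bit, and 35 GB at 4-bit for weights alone, so even a 4-bit variant exceeds a single 24GB card, while 8-bit and 4-bit variants fit within 128GB.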

How much does it cost to rent an H100 GPU?

As of this report, spot market prices for an NVIDIA H100 GPU (80GB SXM) range from about $2.99 to $4.50 per hour on platforms like vast.ai, depending on location and availability. This covers raw compute only; storage and network transfer costs are additional.

Will the new M5 Ultra Mac Studio fix this shortage?

It is unclear. While the M5 Ultra, expected at WWDC in June 2026, will likely offer performance improvements, the core constraint is the supply of memory, not the Apple Silicon chip itself. Unless the supply chain dynamics change, the new model could face similar availability issues at launch, especially for high-memory configurations.